Clear Sky Science

Manipulable object processing reveals distinct neural and behavioral signatures for visual, functional, and manipulation properties

How Our Brains Make Sense of Everyday Tools

Picking up a pair of scissors or turning a key in a lock feels effortless, but behind these simple actions lies an intricate choreography inside the brain. This study asks a deceptively simple question: when we look at and use everyday objects like tools, does the brain treat how they look, how we use them, and what they are for as separate kinds of information? By teasing these pieces apart, the researchers show that our brains organize knowledge about objects in a surprisingly structured and efficient way.

Seeing, Using, and Purpose as Separate Clues

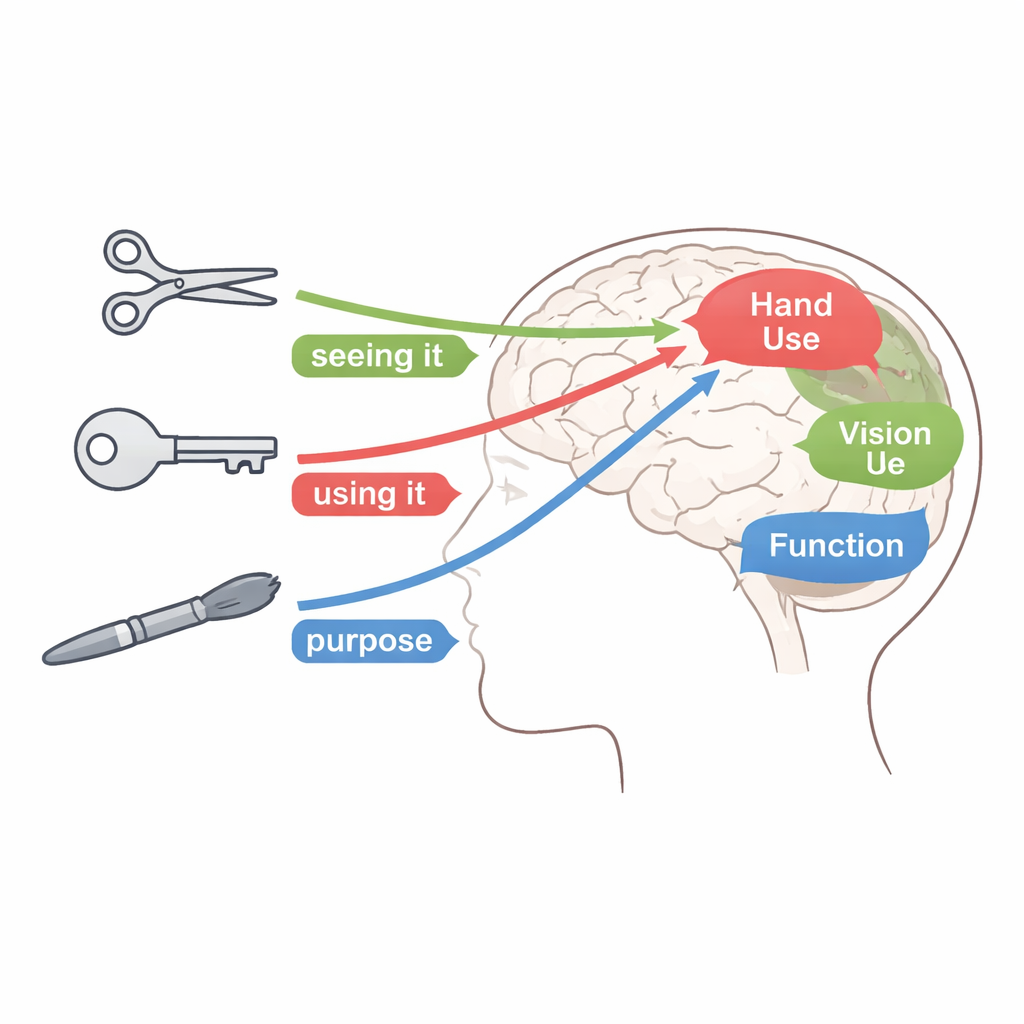

The authors focus on “manipulable objects” such as scissors, keys, and paintbrushes—things we can grasp and use to achieve a goal. They divide what we know about these objects into three types of information. First is vision: what the object looks like, including shape, color, and material. Second is manipulation: how we move our hands and fingers to use it, such as a precise pinch grip or a full-handed grasp. Third is function: what the object is for—cutting, cleaning, opening, and so on. Earlier work with people who had brain damage hinted that these kinds of knowledge can break down separately, but it was unclear how they are organized in the healthy brain when all three are studied side by side.

Testing How Similar Objects Feel to the Brain

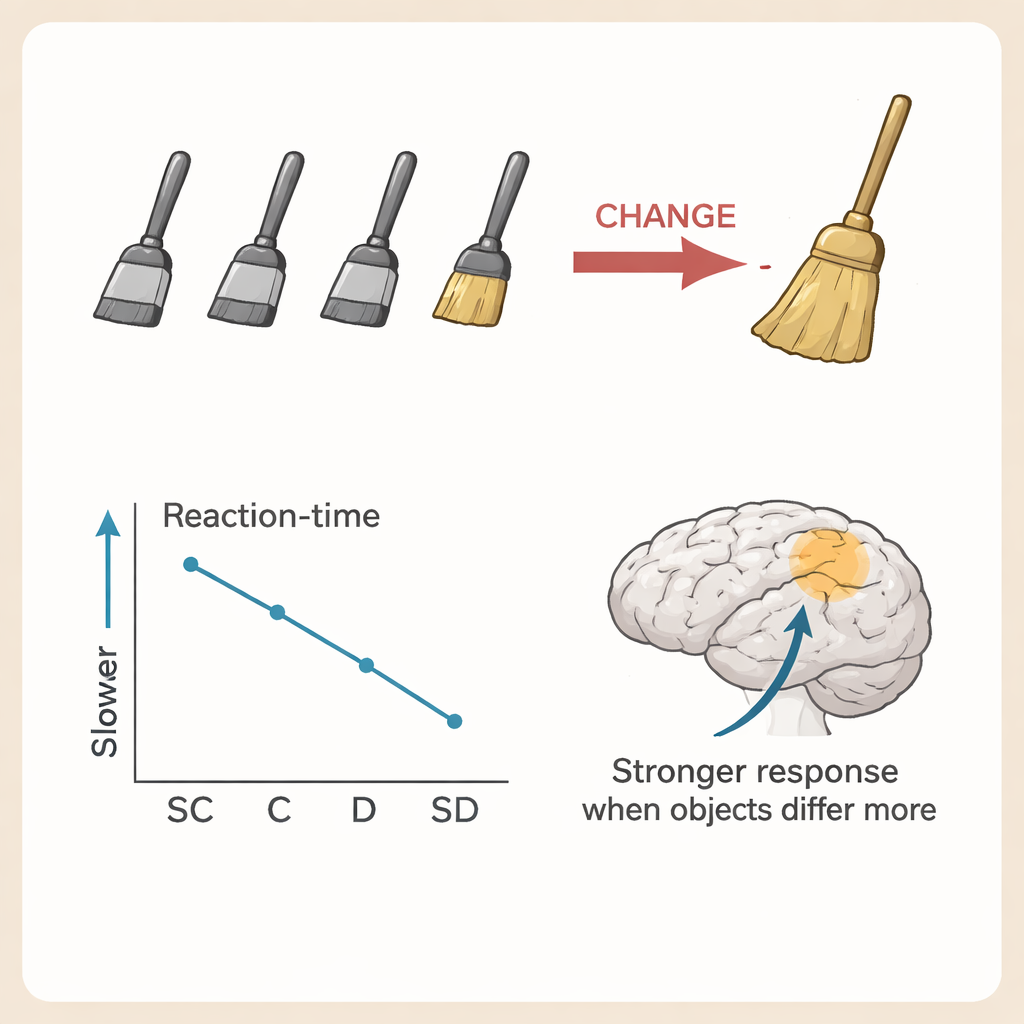

To probe this organization, the team built detailed feature lists for 80 tools, based on thousands of descriptions from volunteers. For each pair of objects, they computed how similar the two were in appearance, in how they are manipulated, and in their function. Then, in a series of behavioral experiments, participants saw sequences of images showing several versions of one object, followed by a new object that was very similar, moderately similar, or very different in one of the three knowledge types, while the other two types were held roughly constant. The task was simple: press a button as soon as you notice that the object has changed. Across all three kinds of knowledge, people were slower to spot a change when the new object was more similar to the previous ones along the tested dimension. This suggests that visual, manipulation, and functional similarity each independently make objects harder to tell apart.
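The pairwise comparison described above can be illustrated with a small sketch. It is not the authors' pipeline: the summary does not say which similarity measure they used, so cosine similarity is an assumption here, and the three objects, their feature names, and the counts are all invented for illustration. The idea is only to show how one feature list per knowledge type yields a separate similarity score for vision, manipulation, and function.

```python
from math import sqrt

# Hypothetical feature counts (how many volunteers mentioned each feature).
# Objects, feature names, and numbers are invented, not taken from the study.
FEATURES = {
    "scissors": {
        "vision": {"metal": 3, "two_blades": 2, "loop_handles": 2},
        "manipulation": {"open_close_hand": 3},
        "function": {"cutting": 3},
    },
    "pliers": {
        "vision": {"metal": 3, "two_arms": 2},
        "manipulation": {"open_close_hand": 3},
        "function": {"gripping": 3},
    },
    "knife": {
        "vision": {"metal": 3, "one_blade": 2},
        "manipulation": {"back_forth_slide": 3},
        "function": {"cutting": 3},
    },
}


def cosine_similarity(a, b):
    """Cosine similarity between two sparse feature-count dictionaries."""
    shared = set(a) & set(b)
    dot = sum(a[k] * b[k] for k in shared)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def similarity(obj1, obj2, knowledge_type):
    """Similarity of two objects within one knowledge type only."""
    return cosine_similarity(
        FEATURES[obj1][knowledge_type], FEATURES[obj2][knowledge_type]
    )


def rank_by_similarity(reference, knowledge_type):
    """Other objects ordered from most to least similar to the reference."""
    others = [name for name in FEATURES if name != reference]
    return sorted(
        others,
        key=lambda name: similarity(reference, name, knowledge_type),
        reverse=True,
    )


if __name__ == "__main__":
    for kind in ("vision", "manipulation", "function"):
        print(kind, rank_by_similarity("scissors", kind))
```

In this toy data, scissors and pliers share the same hand movement but not the same purpose, while scissors and a knife share a purpose but not a hand movement. Ranking by one knowledge type at a time is what makes it possible, in principle, to pick a "very similar" or "very different" comparison object along one dimension while checking that the other two stay roughly matched.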

Watching Brain Activity Adapt and Then “Release”

In matching brain-scanning experiments, other groups of volunteers lay in an MRI scanner and simply watched similar sequences of object images without pressing any buttons. The researchers used a technique called adaptation: when the same type of object is shown repeatedly, activity in related brain regions tends to decrease, and it then rebounds (a "release") when something meaningfully different appears. By gradually changing how similar the final object was to the earlier ones in only one knowledge type at a time, the team could see where in the brain responses grew stronger as the objects became less similar. Visual similarity drove graded changes mainly in regions of the ventral visual stream, including the fusiform gyrus and neighboring areas that are known to process shape, material, and surface features. Similarity in hand use influenced areas in the dorsal visual stream, especially in and around the intraparietal sulcus, which are involved in guiding grasping and hand movements. Functional similarity, in turn, shaped responses in lateral occipitotemporal regions that are thought to encode higher-level action goals, such as “cutting” or “opening.”

Where Different Types of Knowledge Come Together

Although each type of information had its own preferred network, the networks were not entirely isolated. Some regions on the underside of the temporal lobe, toward the brain's midline, particularly the medial fusiform gyrus and the collateral sulcus, showed sensitivity to more than one knowledge type. These areas sit at a crossroads, with connections to regions involved in vision, hand control, and action understanding. The authors suggest that these zones may act as integration hubs, combining how an object looks, how it is used, and what it is for into a richer, unified representation. Once this integrated picture is formed, it can be shared with parietal and frontal areas to support smooth, goal-directed interaction with the object.

What This Means for Everyday Life

For a non-specialist, the key message is that the brain does not store “scissors” in a single mental box. Instead, it breaks the object down into at least three intertwined streams: its appearance, the hand movements needed to use it, and its purpose. Each of these is handled in partly separate brain regions and is organized by how similar different objects are along that dimension. This division of labor helps explain why some patients can recognize an object but not know how to use it, or can describe what it is for but fail to perform the action. More broadly, the study shows that our knack for instantly recognizing and using tools is built on a finely tuned system that sorts and integrates different kinds of knowledge, allowing us to move through a world full of objects with remarkable ease.

Citation: Valério, D., Peres, A. & Almeida, J. Manipulable object processing reveals distinct neural and behavioral signatures for visual, functional, and manipulation properties. Commun Psychol 4, 28 (2026). https://doi.org/10.1038/s44271-026-00393-z

Keywords: object recognition, tool use, brain networks, visual cognition, neuroscience