Clear Sky Science

All eyes, no IMU: learning flight attitude from vision alone

Seeing Like an Insect

Small flying robots usually rely on tiny motion sensors to keep themselves upright, a bit like an inner ear for machines. But insects manage agile flight with far simpler hardware, leaning heavily on what they see. This study shows that a drone can do something similar: fly stably using only a special kind of camera and a compact artificial brain, without the usual motion sensors. That shift could make future palm‑sized and insect‑scale drones lighter, cheaper, and more robust.

Why Get Rid of the Usual Sensors?

Attitude control—keeping a drone correctly tilted with respect to gravity—is normally handled by an onboard unit that measures acceleration and spin. These inertial sensors work well, but they add weight, use power, and can be a single point of failure. In contrast, many flying insects have no dedicated gravity sensor and instead extract clues about their tilt from the way the world moves across their eyes. If robots could copy this trick, very small flyers might need only vision for both seeing and balancing, simplifying their design and making it easier to shrink them toward insect size.

A Camera That Sees Only Change

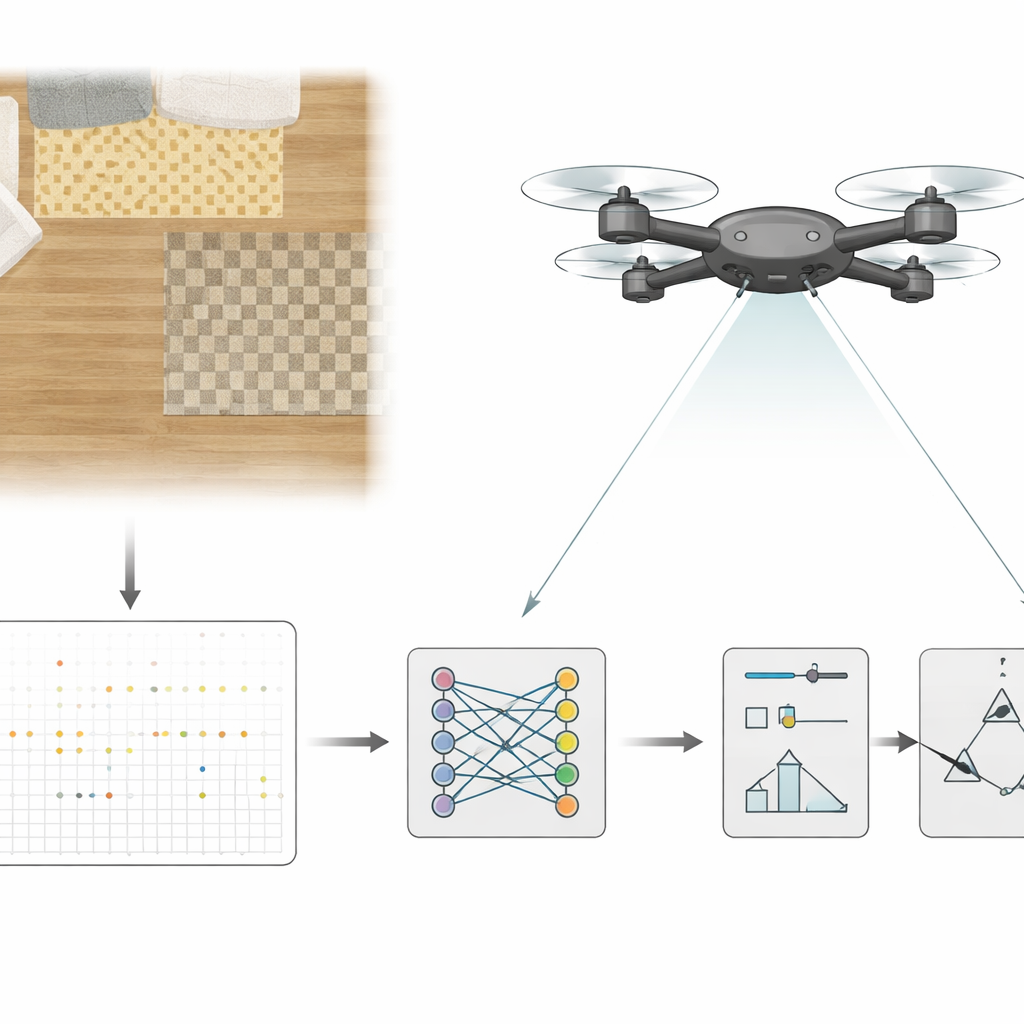

Instead of a standard video camera, the researchers use an event-based camera aimed downward from the drone. Rather than sending full images at fixed intervals, this sensor reports only tiny brightness changes at each pixel, and it does so extremely quickly. The stream of events is bundled into brief slices, each covering just five thousandths of a second, and these slices are fed to a small recurrent convolutional neural network running on an onboard graphics chip. Over time, the network learns to turn patterns of visual change into estimates of the drone’s tilt and how fast it is rotating, effectively replacing the traditional motion unit in the control loop.
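The slicing step described above can be sketched roughly as follows. This is a minimal illustration, not the authors' pipeline: the event layout `(t_us, x, y, polarity)`, the two-channel count frames, and the name `slice_events` are all assumptions made for the example. Only the 5 ms window length comes from the text.

```python
SLICE_US = 5_000  # 5 ms window from the text, expressed in microseconds


def slice_events(events, width, height, slice_us=SLICE_US):
    """Group (t_us, x, y, polarity) events into fixed-duration count frames.

    Hypothetical representation: returns a dict mapping slice index to a
    frame, where frame[p][y][x] counts the events of polarity p
    (0 = darker, 1 = brighter) that fell inside that window.
    """
    frames = {}
    for t_us, x, y, polarity in events:
        index = t_us // slice_us  # which 5 ms window this event belongs to
        if index not in frames:
            frames[index] = [
                [[0] * width for _ in range(height)] for _ in range(2)
            ]
        frames[index][polarity][y][x] += 1
    return frames


# Made-up events on a tiny 4x3 sensor, timestamps in microseconds.
events = [
    (100, 0, 0, 1),
    (4_900, 0, 0, 1),
    (5_000, 1, 2, 0),
    (12_345, 3, 1, 1),
]
frames = slice_events(events, width=4, height=3)
```

Each resulting frame would then be one input step for the recurrent network. Only windows that contain events appear in the dict, so a real pipeline would also need to decide how to handle empty windows.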

Teaching a Drone to Balance With Vision Alone

To train this artificial brain, the team first flew their quadrotor in an indoor arena while still using a conventional sensor suite. During these flights they recorded the event stream from the camera along with the tilt and rotation values estimated by the standard controller. They then trained the network, under supervision, to reproduce those values from the visual data alone. In later tests, the roles were reversed: the drone flew with the network’s estimates closing the loop, while independent motion‑capture or onboard measurements served only as a reference for judging its accuracy. The system kept the drone hovering and following pilot‑commanded paths for minutes at a time, with most tilt errors within a few degrees and rotation errors small enough to sustain stable flight.
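Supervised training of this kind amounts to penalizing the gap between the network's outputs and the logged tilt and rotation values. The sketch below uses a mean squared error, which is an assumption: this summary does not say which loss the authors used. The function name and the sample numbers are also invented for illustration.

```python
def mse_loss(predicted, target):
    """Mean squared error over a batch of equal-length vectors.

    Each vector stands in for one time step's outputs, for example
    tilt angles and rotation rates (this layout is hypothetical).
    """
    total = 0.0
    count = 0
    for p_vec, t_vec in zip(predicted, target):
        for p, t in zip(p_vec, t_vec):
            total += (p - t) ** 2
            count += 1
    return total / count


# Made-up values: logged reference vs. network output for two time steps.
logged = [[0.0, 1.0], [2.0, -1.0]]
predicted = [[0.5, 1.0], [2.0, 0.0]]
loss = mse_loss(predicted, logged)
```

During training, an optimizer would adjust the network weights to drive this number down across the recorded flights.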

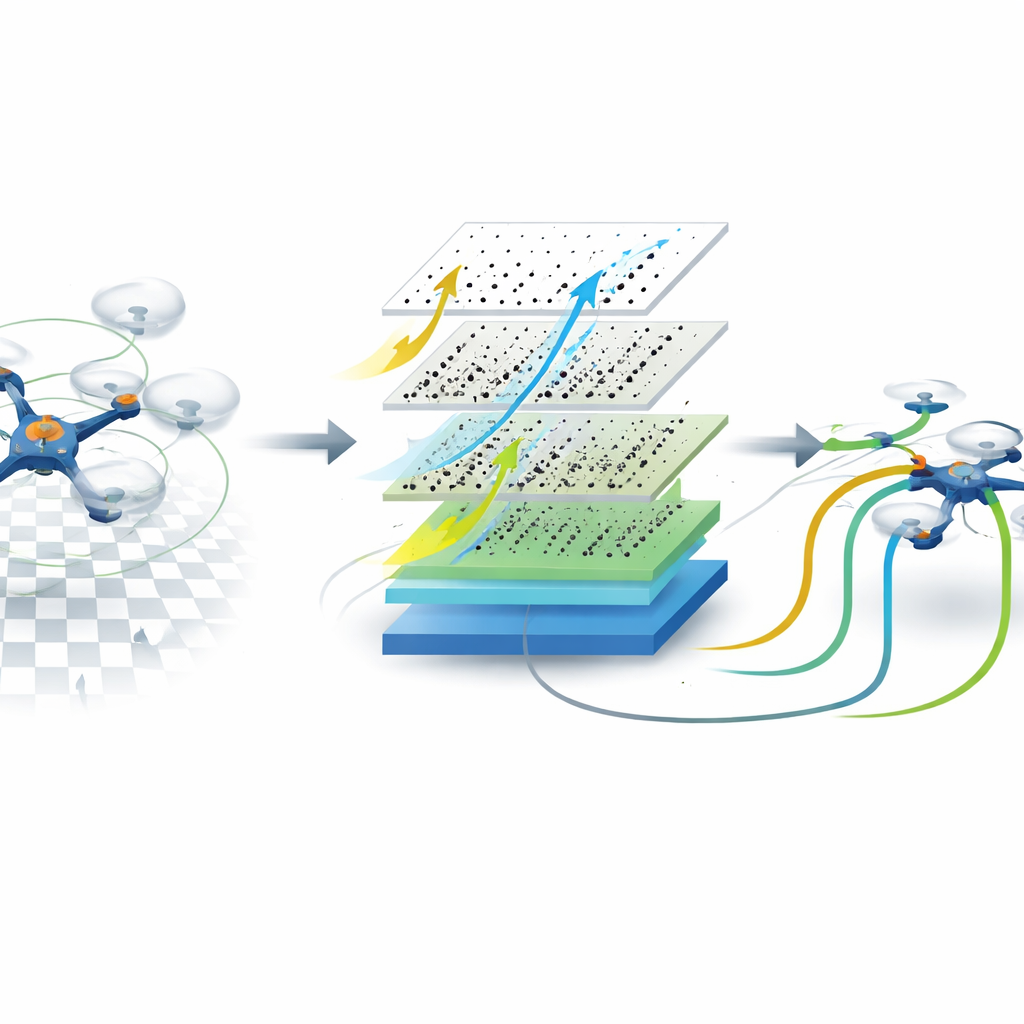

Peering Inside the Learned Visual Skill

The researchers probed what makes this vision-only control work best. They compared different network designs, added or removed extra inputs such as motor speeds or gyro signals, and varied how much of the camera’s field of view was used. Networks with memory—able to integrate visual information over time—were crucial for accurately following rapid rotations, while memory‑less versions struggled. A wide field of view, which exposes distant horizon‑like cues at the edges of the image, gave the lowest raw errors in familiar scenes. Surprisingly, however, forcing the network to look only at the central part of the image, where such static cues are missing, made it rely more on motion patterns instead of scene appearance. Although this reduced absolute accuracy, it improved how gracefully the system adapted when moved to very different environments, suggesting that an internal sense of motion was being learned.
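Restricting the network to the central part of the image can be pictured as a simple center crop applied to each input frame. The helper below is a hypothetical sketch. The name `center_crop` and the `fraction` parameter are assumptions, and the crop sizes actually tested in the study are not given in this summary.

```python
def center_crop(frame, fraction):
    """Keep the central `fraction` of a 2D frame (a list of rows) per axis."""
    height, width = len(frame), len(frame[0])
    crop_h = max(1, round(height * fraction))
    crop_w = max(1, round(width * fraction))
    top = (height - crop_h) // 2
    left = (width - crop_w) // 2
    return [row[left:left + crop_w] for row in frame[top:top + crop_h]]


# A 4x6 toy frame whose values encode (row, column) as 10 * row + column.
frame = [[10 * r + c for c in range(6)] for r in range(4)]
cropped = center_crop(frame, 0.5)
```

Dropping the outer rows and columns removes the image edges, which is where the horizon-like cues mentioned above would appear.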

Toward Tiny, Vision‑First Flying Robots

Overall, the work shows that a drone can keep itself upright and controllable using only what it sees, with no inertial sensor in the loop. By pairing an event camera with a compact neural network, the system reaches the speed and responsiveness needed for real‑time control while cutting hardware weight and complexity. For everyday readers, the main message is that future swarms of tiny, insect‑like flying robots may balance and navigate using a single smart eye, much as insects do, opening the door to lighter, more energy‑efficient machines that can safely explore cluttered, unpredictable spaces.

Citation: Hagenaars, J.J., Stroobants, S., Bohté, S.M. et al. All eyes, no IMU: learning flight attitude from vision alone. npj Robot 4, 21 (2026). https://doi.org/10.1038/s44182-026-00081-4

Keywords: vision-based flight control, event camera drones, bio-inspired robotics, neural network controllers, insect-scale UAVs