Clear Sky Science

Embodied tactile perception of soft objects properties

Why Teaching Robots to Feel Matters

Imagine a robot gently examining a patient’s abdomen, sorting ripe fruit without bruising it, or assembling fragile parts by touch alone. To do any of this safely, robots must learn to “feel” soft objects in a rich, human‑like way. This article describes how researchers built an electronic skin and a new kind of learning model so robots can better sense the softness, shape, and surface texture of squishy objects—bringing machines a step closer to truly dexterous touch.

Building a High-Tech Sense of Touch

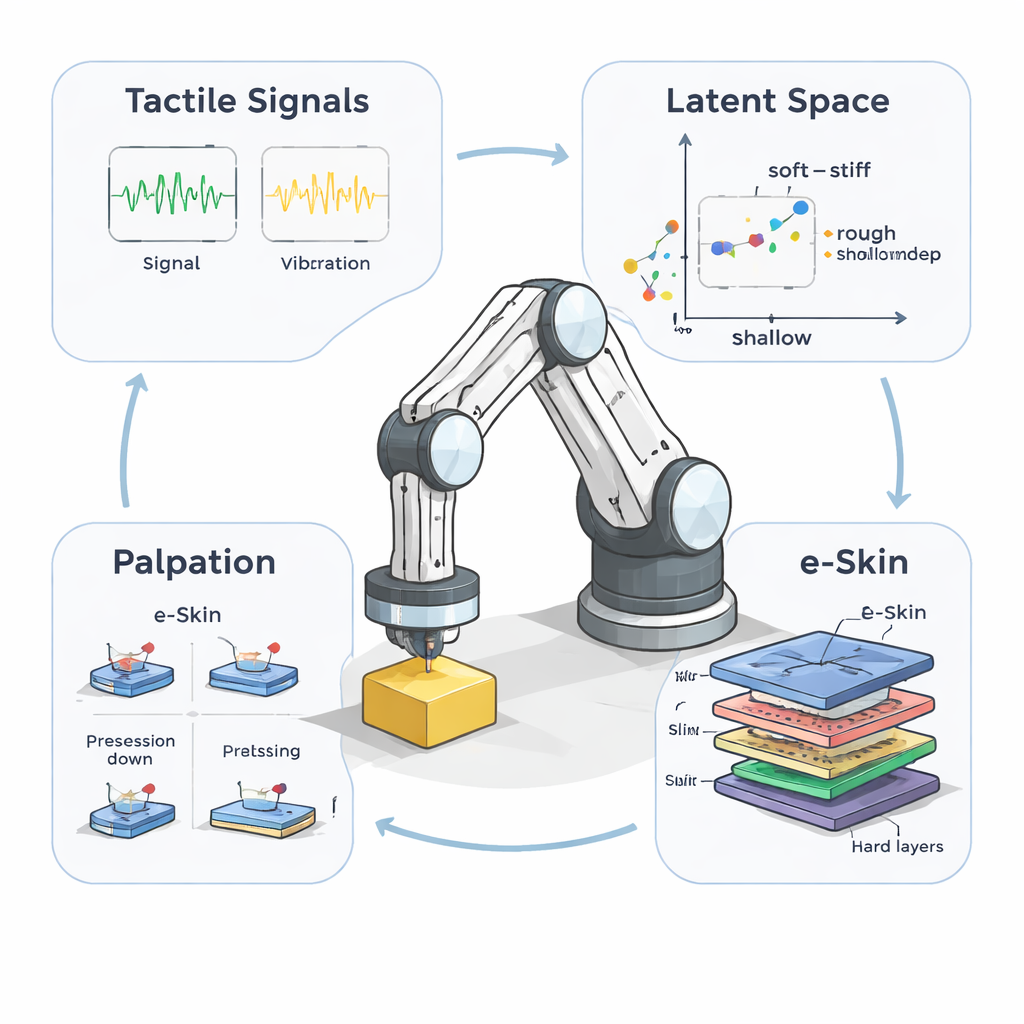

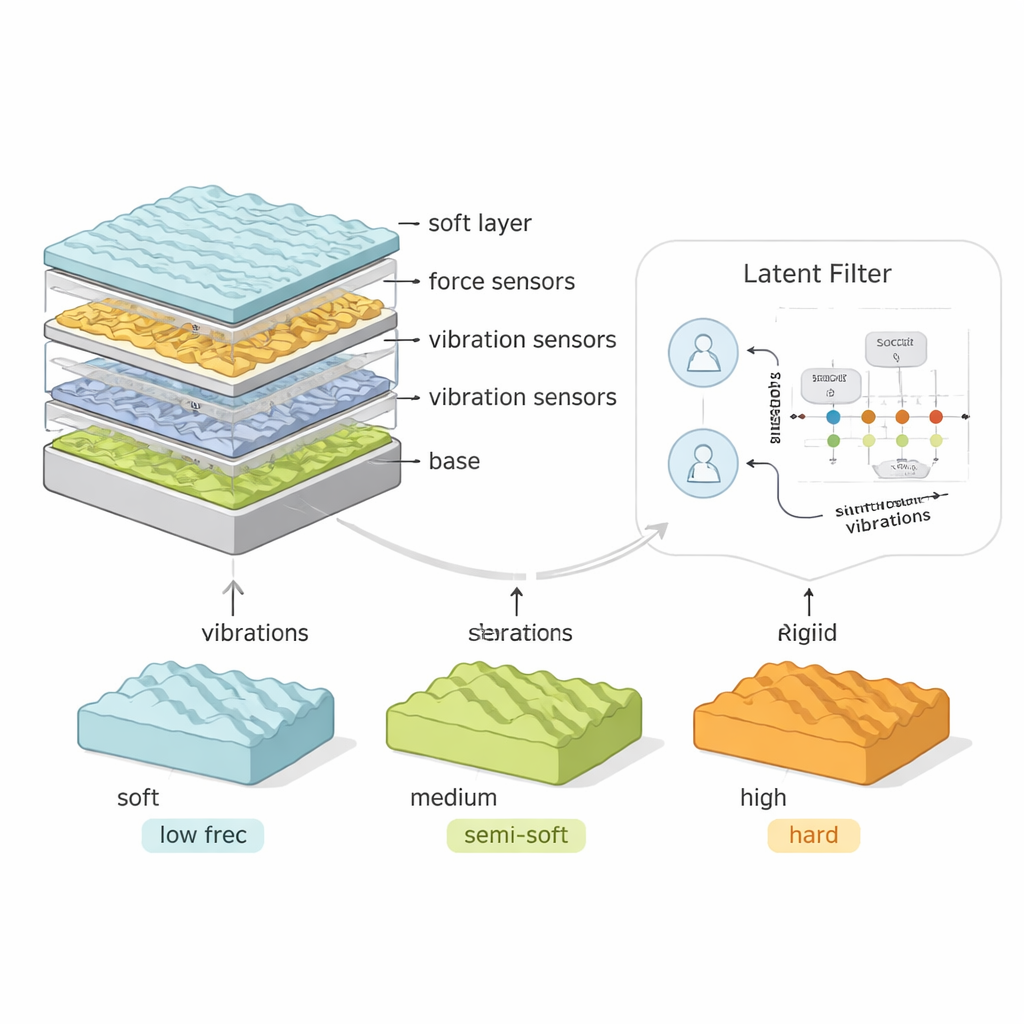

Human skin is soft, layered, and packed with different touch receptors that react to pressure, stretch, and vibration. The team set out to mimic these abilities in a robot. They created a modular electronic skin, or e‑Skin, made from stacked silicone layers with embedded sensors. Two layers contain dense grids of force sensors that measure how much the skin is pushed in different spots, while a third layer holds tiny accelerometers that pick up fast vibrations, like the buzz you feel when sliding a finger over rough fabric. By swapping silicone types, they could make the e‑Skin itself softer or stiffer, and by turning sensor layers on or off, they could test different combinations of “sense organs.”
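The modular design described above can be pictured as a small configuration space: one choice of silicone, plus any non-empty subset of the three sensor layers. The sketch below enumerates that space. The layer names, the two silicone labels, and the `SkinConfig` class are illustrative assumptions, not identifiers from the study.

```python
from dataclasses import dataclass
from itertools import combinations, product

# Hypothetical labels: two force-sensing layers and one accelerometer layer.
LAYERS = ("force_a", "force_b", "vibration")
# Hypothetical labels for a softer and a stiffer silicone.
SILICONES = ("soft", "stiff")


@dataclass(frozen=True)
class SkinConfig:
    silicone: str
    active_layers: tuple


def all_configs():
    """List every silicone choice paired with every non-empty layer subset."""
    subsets = [
        subset
        for size in range(1, len(LAYERS) + 1)
        for subset in combinations(LAYERS, size)
    ]
    return [SkinConfig(s, layers) for s, layers in product(SILICONES, subsets)]


configs = all_configs()
for cfg in configs[:3]:
    print(cfg)
```

With three layers and two silicones this gives 14 configurations, which is the kind of grid an ablation study would step through.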

Designing a World of Soft Things

To study touch in a controlled way, the researchers needed more than simple rubber blocks. They created a library of “wave objects” with carefully tuned properties. Each object had a rippled top surface whose bumps could be shallow or tall (amplitude) and close together or far apart (spatial frequency), and each was cast from materials ranging from very soft silicone to rigid plastic. Some samples also hid a thin stiff layer under a soft surface, mimicking tissues or materials that change as you press deeper. Because every object was built to specification, the team knew its exact softness and texture in advance and could compare what the robot “felt” with the ground truth.
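To make the two texture parameters concrete, here is a minimal sketch of a rippled surface profile. It assumes the ripples are sinusoidal, z(x) = A sin(2πfx), which the article does not state. The function name and the numeric values are illustrative only.

```python
import numpy as np


def wave_surface(length_mm, amplitude_mm, cycles_per_mm, n_points=200):
    """Height profile of a rippled surface, assuming a sinusoidal shape."""
    x = np.linspace(0.0, length_mm, n_points)
    z = amplitude_mm * np.sin(2.0 * np.pi * cycles_per_mm * x)
    return x, z


# Illustrative values, not taken from the study.
x, z = wave_surface(length_mm=40.0, amplitude_mm=1.5, cycles_per_mm=0.25)
print(f"peak height: {np.max(np.abs(z)):.2f} mm over {x[-1]:.0f} mm")
```

Raising `amplitude_mm` makes the bumps taller, and raising `cycles_per_mm` packs them closer together.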

Teaching Robots to Explore by Touch

Just as people poke, press, and slide their fingers in different ways to judge an object, the robot used three basic palpation moves. In pressing, it moved straight up and down to probe bulk softness. In precession, it tilted and rolled the e‑Skin, contacting several nearby regions and probing more complex shapes. In sliding, it moved sideways across the surface to highlight fine textures and friction. For each object, the robot performed these motions with varying depths and speeds, generating thousands of time‑varying touch signals—forces changing across the skin and vibrations rippling through it. These rich, dynamic data streams are far more informative than a single static poke.
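The three motions can be sketched as simple pose trajectories. This is an illustration under stated assumptions, not the robot controller used in the study: the pose layout `[x, y, z, roll, pitch]`, the function name, and all default depths, tilt angles, and durations are hypothetical.

```python
import numpy as np


def palpation_trajectory(kind, depth_mm=2.0, duration_s=2.0, rate_hz=100,
                         slide_mm=20.0, tilt_deg=10.0):
    """Return time stamps and poses [x, y, z, roll, pitch] for one motion."""
    n = int(duration_s * rate_hz)
    t = np.arange(n) / rate_hz
    phase = t / duration_s  # runs from 0 to just under 1
    pose = np.zeros((n, 5))
    if kind == "press":
        # Smooth descent to the target depth and back up.
        pose[:, 2] = -depth_mm * 0.5 * (1.0 - np.cos(2.0 * np.pi * phase))
    elif kind == "precess":
        # Hold depth while the tilt direction sweeps one full circle.
        pose[:, 2] = -depth_mm
        pose[:, 3] = tilt_deg * np.cos(2.0 * np.pi * phase)
        pose[:, 4] = tilt_deg * np.sin(2.0 * np.pi * phase)
    elif kind == "slide":
        # Hold depth while moving sideways at constant speed.
        pose[:, 2] = -depth_mm
        pose[:, 0] = slide_mm * phase
    else:
        raise ValueError(f"unknown motion: {kind}")
    return t, pose


for kind in ("press", "precess", "slide"):
    t, pose = palpation_trajectory(kind)
    print(kind, pose.shape)
```

Varying `depth_mm` and `duration_s` across calls is one way to produce the range of depths and speeds mentioned above.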

Discovering Hidden Patterns in Touch

To make sense of this flood of information, the authors introduced a machine‑learning model they call the Latent Filter. Instead of trying to label objects directly, the model learns an internal “map” where each point summarizes the robot’s ongoing interaction with an object. This latent space is structured so that some components respond quickly to immediate touch signals, while others integrate information slowly over time. By training the Latent Filter on many interactions, the team showed that this internal map naturally lines up with meaningful physical traits like surface roughness, bump height, and stiffness—even though the model was never explicitly told these labels. A separate regression step confirmed that these hidden features could predict an object’s true mechanical properties with good accuracy.
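The idea of fast and slow latent components, followed by a separate regression step, can be illustrated with a toy stand-in. The sketch below is not the authors' Latent Filter, which is a learned model. It swaps in a fixed linear projection and two leaky integrators with different rates, plus an ordinary least-squares readout. All names, rates, and the synthetic step input are assumptions made for illustration.

```python
import numpy as np


def two_timescale_latents(signals, proj, alpha_fast=0.5, alpha_slow=0.02):
    """Map signals (T, C) to latents (T, 2*D): fast half, then slow half."""
    encoded = signals @ proj  # proj has shape (C, D)
    dim = proj.shape[1]
    fast = np.zeros(dim)
    slow = np.zeros(dim)
    out = np.empty((signals.shape[0], 2 * dim))
    for i, e in enumerate(encoded):
        fast += alpha_fast * (e - fast)  # tracks the input within a few steps
        slow += alpha_slow * (e - slow)  # integrates over many steps
        out[i] = np.concatenate([fast, slow])
    return out


def fit_linear_readout(features, targets):
    """Least-squares weights (with bias) from features to a known property."""
    design = np.column_stack([features, np.ones(len(features))])
    weights, *_ = np.linalg.lstsq(design, targets, rcond=None)
    return weights


def predict(features, weights):
    return np.column_stack([features, np.ones(len(features))]) @ weights


# Synthetic step input: contact begins at t = 0 and stays constant.
step = np.ones((50, 1))
latents = two_timescale_latents(step, np.array([[1.0]]))
print("after 10 steps  fast:", latents[10, 0], " slow:", latents[10, 1])
```

In this toy version the fast component settles almost immediately after contact, while the slow one is still rising many steps later. The readout functions stand in for the separate regression step, which would be fit against the known properties of each wave object.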

How Skin, Senses, and Motion Work Together

The experiments revealed that no single sensor layer or motion strategy is best for all situations. Combining vibration and force information through a “late fusion” approach—where each type of signal is processed separately before being merged—yielded the most reliable understanding of soft objects. Two force layers helped the system sense shear and stretch, which are vital for detecting stiffness and hidden internal structure, while vibrations were especially useful for feeling fine textures during sliding. The mechanical softness of the e‑Skin itself also mattered: stiffer skins were better for measuring overall stiffness and shape, while softer skins excelled at sensing subtle variations in compliant or layered materials. The results suggest that robot touch should be co‑designed: the properties of the skin, the sensing electronics, and the way the robot moves all need to be tuned together.
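As a rough picture of late fusion, the sketch below processes force and vibration signals with separate, hand-written feature extractors and only then concatenates the results. The study's actual encoders are learned and are not described in this article. The feature choices, array shapes, and function names here are assumptions.

```python
import numpy as np


def force_features(force):
    """Summarize a force recording of shape (T, rows, cols)."""
    return np.array([force.mean(), force.max(), force.std()])


def vibration_features(accel, n_bands=4):
    """Spectral energy of a 1-D accelerometer trace in equal-width bands."""
    spectrum = np.abs(np.fft.rfft(accel - accel.mean())) ** 2
    return np.array([band.sum() for band in np.array_split(spectrum, n_bands)])


def late_fusion(force, accel):
    """Encode each modality on its own, then merge the feature vectors."""
    return np.concatenate([force_features(force), vibration_features(accel)])


# Synthetic example: constant force grid and a 40 Hz vibration at 100 Hz sampling.
t = np.arange(100) / 100.0
force = np.full((100, 4, 4), 0.5)
accel = np.sin(2.0 * np.pi * 40.0 * t)
fused = late_fusion(force, accel)
print(fused.shape)
```

Keeping the two branches separate until the final step means either one can be dropped or replaced without touching the other, which fits the layer on/off comparisons described above.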

What This Means for Everyday Robots

By uniting a layered, human‑inspired e‑Skin with a powerful learning model that respects the role of action, this work shows how robots can build a deeper sense of touch. Instead of relying only on cameras or simple force thresholds, future machines could feel how an object yields, vibrates, and changes as they explore it, then adjust their grip or motion on the fly. Such capabilities are key for applications like medical palpation, soft‑food handling, and manipulating deformable items in homes and factories. In simple terms, the study demonstrates that to touch the world as effectively as we do, robots must not only have good sensors, but also the right “body” and the right habits of movement—and a smart way to weave all that information into a coherent understanding of what they are feeling.

Citation: Dutta, A., WM Devillard, A., Zhang, Z. et al. Embodied tactile perception of soft objects properties. npj Robot 4, 15 (2026). https://doi.org/10.1038/s44182-026-00077-0

Keywords: robotic touch, electronic skin, soft object sensing, tactile perception, embodied robotics