Clear Sky Science

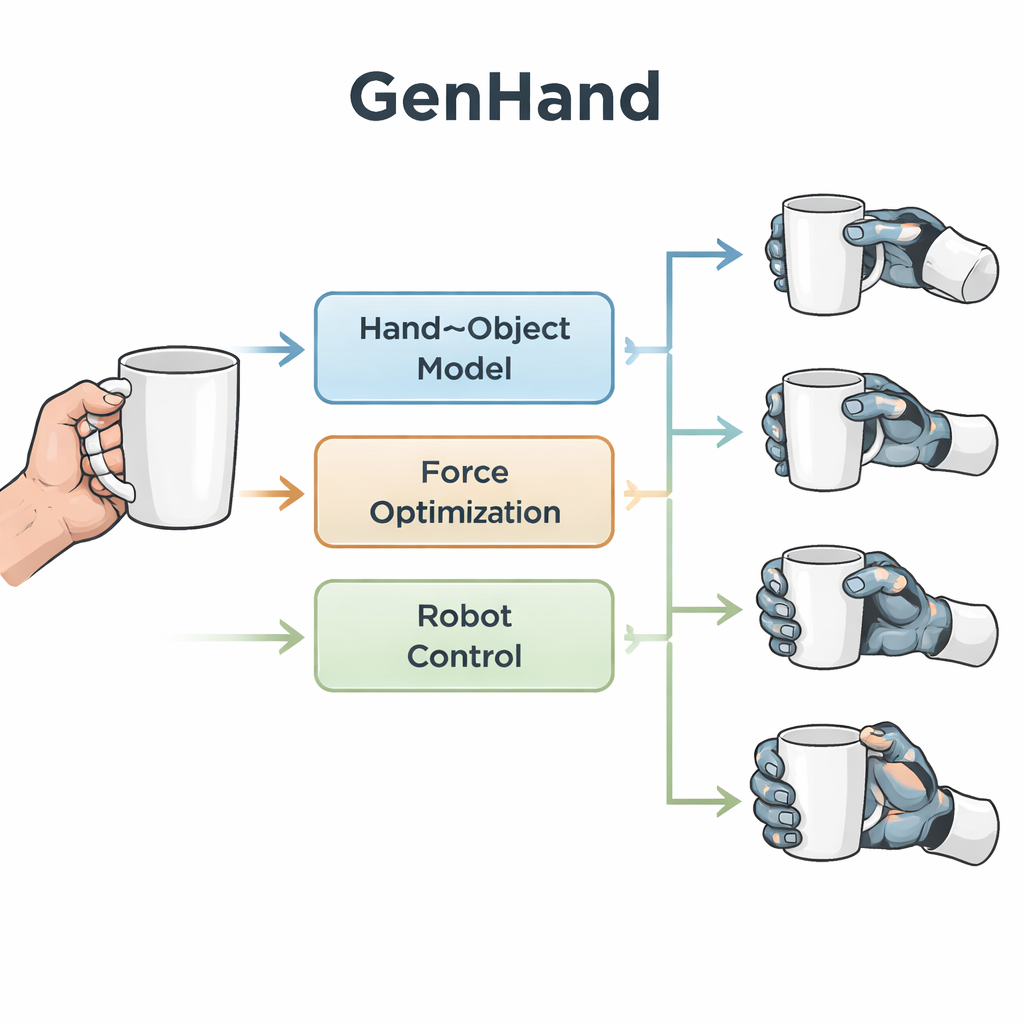

GenHand: generalised human grasp kinematic retargeting

Teaching Robots to Hold Things Like We Do

From picking up a coffee mug to twisting a screwdriver, our hands make object handling look effortless. Robots, however, often struggle to grasp everyday items reliably, especially when their grippers look nothing like a human hand. This article introduces GenHand, a system that learns from human hand movements in ordinary images and converts them into stable, human-like grasps for many different types of robot hands.

Why Robot Hands Need More Than Copycat Moves

Many current teleoperation and imitation-learning systems try to copy the pose of a human hand directly onto a robot hand. They match fingertip locations and joint angles as closely as possible. This works only when the robot hand closely resembles a human hand and has a similar number of fingers and joints. As soon as the robot gripper is simpler—for example, just two flat fingers—the copied pose may no longer create a secure grasp. These approaches also largely ignore the shape of the object and where solid contact should occur, so the resulting grasps can slip, lose balance, or never quite touch the surface correctly.

Looking at Hands and Objects Together

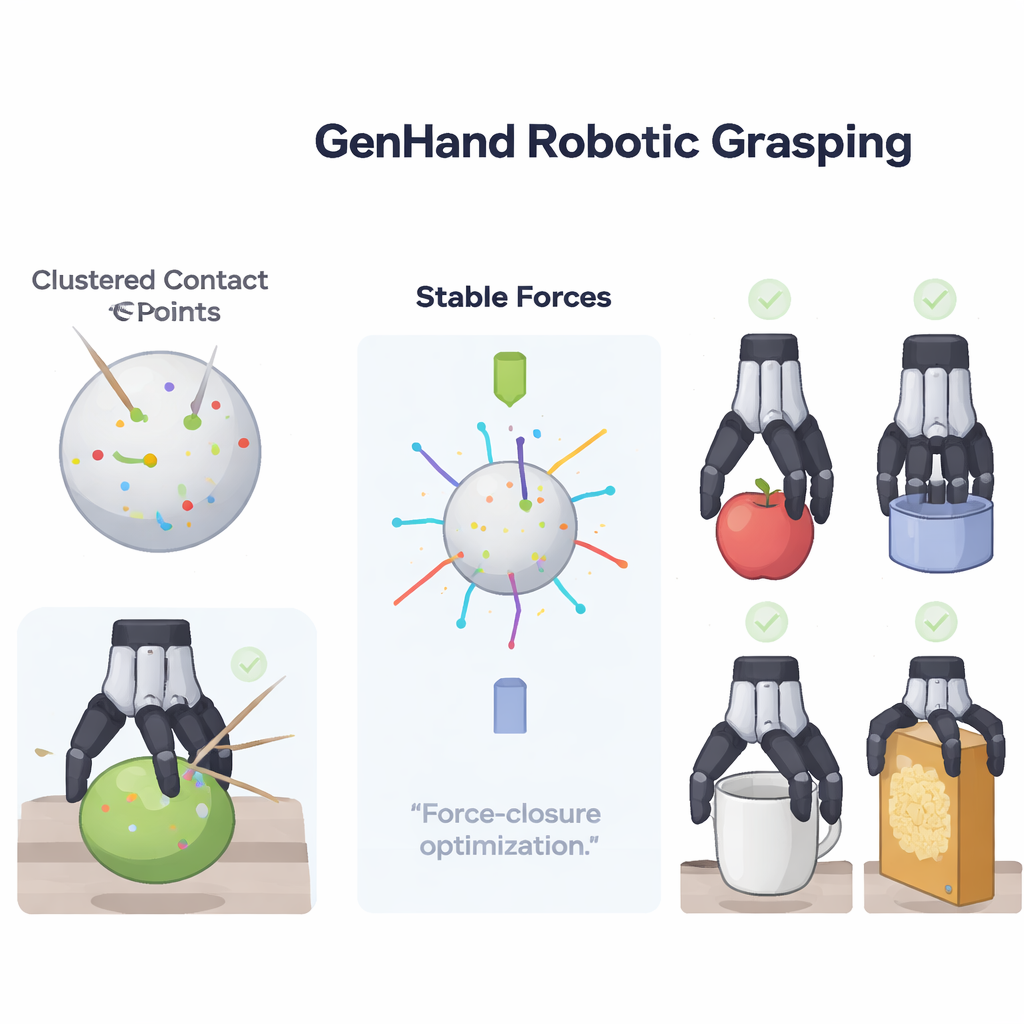

GenHand tackles this problem by focusing on the interaction between the hand and the object, not just on the hand's shape. Starting from an ordinary RGB image, the system reconstructs a detailed 3D model of the object and a parametric 3D model of the human hand. A neural network infers the hand's pose. The object's surface is recovered as a signed distance function (SDF), a representation that gives, for any point in space, its distance to the surface, with the sign indicating whether the point lies inside or outside the object. From this pair of models, GenHand determines where the human fingertips actually touch the object and in which directions they push on it. It then clusters these contact points into a small set of meaningful regions and force directions that summarize the essential structure of the human grasp while filtering out unnecessary detail.
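To make the contact step concrete, here is a minimal Python sketch under loudly stated assumptions; it is not GenHand's implementation. The object is an analytic sphere instead of a reconstructed SDF, the "hand" is a few made-up points instead of a fitted hand model, the 5 mm contact threshold is arbitrary, the push direction is assumed to be the inward surface normal (minus the SDF gradient), and plain k-means stands in for whatever clustering GenHand actually uses. All function names are hypothetical.

```python
import numpy as np

def sphere_sdf(points, radius=0.04):
    """Signed distance to a sphere at the origin: negative inside, zero on the surface."""
    return np.linalg.norm(points, axis=-1) - radius

def sdf_normals(sdf, points, eps=1e-6):
    """Unit outward normals from a central-difference gradient of the SDF."""
    grads = np.zeros_like(points)
    for k in range(points.shape[1]):
        step = np.zeros(points.shape[1])
        step[k] = eps
        grads[:, k] = (sdf(points + step) - sdf(points - step)) / (2 * eps)
    return grads / np.linalg.norm(grads, axis=1, keepdims=True)

def extract_contacts(hand_points, sdf, threshold=0.005):
    """Hand points within `threshold` of the surface count as contacts.
    Assumption: each contact pushes along the inward normal."""
    contacts = hand_points[np.abs(sdf(hand_points)) < threshold]
    return contacts, -sdf_normals(sdf, contacts)

def kmeans(points, k, iters=20, seed=0):
    """Plain Lloyd's k-means; returns a label per point and k centroids."""
    rng = np.random.default_rng(seed)
    centers = points[rng.choice(len(points), size=k, replace=False)].copy()
    for _ in range(iters):
        dists = np.linalg.norm(points[:, None, :] - centers[None, :, :], axis=2)
        labels = np.argmin(dists, axis=1)
        for j in range(k):
            if np.any(labels == j):  # an empty cluster keeps its old centroid
                centers[j] = points[labels == j].mean(axis=0)
    return labels, centers

def summarize_regions(pushes, labels, k):
    """Mean unit push direction for each contact region."""
    dirs = np.array([pushes[labels == j].mean(axis=0) for j in range(k)])
    return dirs / np.linalg.norm(dirs, axis=1, keepdims=True)

# Toy grasp: "thumb" and "index" points touch opposite sides of the sphere,
# while "palm" points hover 4 cm above its surface and should be ignored.
rng = np.random.default_rng(1)
thumb = np.array([0.041, 0.0, 0.0]) + rng.normal(scale=0.001, size=(10, 3))
index = np.array([-0.041, 0.0, 0.0]) + rng.normal(scale=0.001, size=(10, 3))
palm = np.array([0.0, 0.0, 0.08]) + rng.normal(scale=0.001, size=(10, 3))
contacts, pushes = extract_contacts(np.vstack([thumb, index, palm]), sphere_sdf)
labels, centers = kmeans(contacts, k=2)
region_dirs = summarize_regions(pushes, labels, k=2)
```

The result is two contact regions whose averaged push directions point roughly toward each other through the sphere, which is the kind of compact summary the paragraph above describes.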

Reinventing the Grasp for Each Robot

Once GenHand understands the key contact regions and how they support the object, it builds a new set of "contact anchors" that suit the particular robot gripper. For a simple two-finger gripper, it may keep only two opposing contact regions, like a pair of thumbs squeezing a box. For more dexterous hands with three, four, or five fingers, it can assign additional anchors to better match the rich contact pattern of the human grasp. A mathematical optimization step then searches for contact locations on the object surface from which the fingers, pushing within the limits set by friction, can resist forces and torques in any direction, a property known as force closure. Crucially, GenHand stays close to the original human contacts while requiring that the resulting grip be physically stable in the real world.
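For the two-finger case, force closure has a classical geometric test: with Coulomb friction coefficient mu, two planar point contacts are in force closure when the line joining them lies strictly inside both friction cones (half-angle arctan(mu)), the so-called antipodal condition. The sketch below pairs that test with a brute-force search that keeps the force-closure pair nearest to two "human" contacts. This is only an illustration of the trade-off the paragraph describes, not GenHand's optimizer: the 2D box cross-section, mu = 0.5, the sampling density, and all function names are assumptions, and a real multi-finger hand needs a general wrench-space test rather than this two-contact shortcut.

```python
import itertools
import numpy as np

def is_antipodal(p1, n1, p2, n2, mu):
    """Two-contact planar force-closure test under Coulomb friction.
    n1, n2 are inward unit normals, i.e. the directions the fingers push."""
    half_angle = np.arctan(mu)  # friction-cone half-angle
    axis = (p2 - p1) / np.linalg.norm(p2 - p1)
    ang1 = np.arccos(np.clip(np.dot(n1, axis), -1.0, 1.0))
    ang2 = np.arccos(np.clip(np.dot(n2, -axis), -1.0, 1.0))
    return bool(ang1 < half_angle and ang2 < half_angle)

def box_surface(width, height, n_per_edge):
    """Points and inward normals sampled on a box cross-section (corners excluded)."""
    pts, nrm = [], []
    for t in np.linspace(-0.5, 0.5, n_per_edge + 2)[1:-1]:
        pts += [(width / 2, t * height), (-width / 2, t * height),
                (t * width, height / 2), (t * width, -height / 2)]
        nrm += [(-1, 0), (1, 0), (0, -1), (0, 1)]
    return np.array(pts, dtype=float), np.array(nrm, dtype=float)

def retarget_two_finger(human, pts, nrm, mu):
    """Among all force-closure pairs, keep the one closest to the human contacts."""
    best, best_cost = None, np.inf
    for i, j in itertools.permutations(range(len(pts)), 2):
        if not is_antipodal(pts[i], nrm[i], pts[j], nrm[j], mu):
            continue
        cost = np.sum((pts[i] - human[0]) ** 2) + np.sum((pts[j] - human[1]) ** 2)
        if cost < best_cost:
            best, best_cost = (i, j), cost
    return best, best_cost

mu = 0.5  # assumed friction coefficient
# Human thumb on the right face, index finger on the bottom face: not force closure.
human = np.array([[0.03, 0.012], [-0.01, -0.02]])
pts, nrm = box_surface(0.06, 0.04, n_per_edge=21)
best, cost = retarget_two_finger(human, pts, nrm, mu)
```

A hard constraint plus a distance cost is the simplest way to express "stay close, but be stable"; a continuous optimizer would typically replace the brute-force loop with a penalty or constraint on a grasp-quality measure.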

From Stable Contacts to Real Robot Motions

With stable contact anchors in place, a second optimization stage finds actual joint angles and wrist motions for the robot that can realize those anchors without violating joint limits or causing collisions with the object. To do this, GenHand repeatedly matches the robot’s potential contact sites to the desired anchors, adjusts the pose, and checks whether links penetrate the object. This process is applied to a range of robot hands—from a simple Robotiq two-finger gripper up to a highly articulated five-finger Shadow hand—and tested in physics simulation. Compared with a leading baseline that only mimics fingertip geometry, GenHand produces much lower imbalance in forces, more accurate surface contact, and significantly higher success rates when lifting and holding 20 everyday objects across different friction conditions.
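The match, adjust, and check loop can be caricatured with planar two-link fingers, as in the Python sketch below. Assumptions, all hypothetical: a disc-shaped object, made-up link lengths and joint limits, and closed-form two-link inverse kinematics standing in for GenHand's optimization stage (which handles full 3D hands). Finger-to-anchor assignments are tried from the cheapest (fingertips closest to anchors in a rest pose) upward, and each candidate pose is rejected if it breaks joint limits or if any sampled point on a link has a negative signed distance, i.e. lies inside the object.

```python
import itertools
import numpy as np

LINK1, LINK2 = 0.04, 0.04  # hypothetical link lengths (m)
RADIUS = 0.03              # object: a disc of this radius at the origin

def fk(base, q):
    """Elbow and fingertip positions of a planar two-link finger."""
    elbow = base + LINK1 * np.array([np.cos(q[0]), np.sin(q[0])])
    tip = elbow + LINK2 * np.array([np.cos(q[0] + q[1]), np.sin(q[0] + q[1])])
    return elbow, tip

def ik_candidates(base, target):
    """Both closed-form elbow solutions; empty if the target is out of reach."""
    x, y = target - base
    c2 = (x * x + y * y - LINK1**2 - LINK2**2) / (2 * LINK1 * LINK2)
    if abs(c2) > 1.0:
        return []
    sols = []
    for q2 in (np.arccos(c2), -np.arccos(c2)):
        q1 = np.arctan2(y, x) - np.arctan2(LINK2 * np.sin(q2), LINK1 + LINK2 * np.cos(q2))
        q1 = (q1 + np.pi) % (2 * np.pi) - np.pi  # wrap to [-pi, pi)
        sols.append(np.array([q1, q2]))
    return sols

def penetrates(base, q, tol=1e-6, n=25):
    """Sample both links and flag any sample inside the disc (negative SDF)."""
    elbow, tip = fk(base, q)
    s = np.linspace(0.0, 1.0, n)[:, None]
    samples = np.vstack([base + s * (elbow - base), elbow + s * (tip - elbow)])
    sdf = np.linalg.norm(samples, axis=1) - RADIUS
    return bool(sdf.min() < -tol)

def retarget(fingers, anchors):
    """fingers: list of (base, rest_q, q_lo, q_hi), one per anchor. Returns the first
    assignment that is reachable, within joint limits, and collision-free."""
    rest_tips = [fk(base, q)[1] for base, q, _, _ in fingers]

    def cost(perm):
        return sum(np.sum((rest_tips[f] - anchors[a]) ** 2) for f, a in enumerate(perm))

    for perm in sorted(itertools.permutations(range(len(anchors))), key=cost):
        qs = []
        for (base, _, lo, hi), a in zip(fingers, perm):
            ok = [q for q in ik_candidates(base, anchors[a])
                  if np.all(q >= lo) and np.all(q <= hi) and not penetrates(base, q)]
            if not ok:
                break  # this assignment fails; try the next one
            qs.append(ok[0])
        else:
            return perm, qs
    return None, None

fingers = [
    # base, rest pose, lower and upper joint limits; finger A curls counter-clockwise only
    (np.array([0.06, -0.05]), np.array([np.pi / 2, 0.0]), np.array([0.0, 0.0]), np.array([np.pi, 2.0])),
    # finger B mirrors finger A and curls clockwise only
    (np.array([-0.06, -0.05]), np.array([np.pi / 2, 0.0]), np.array([0.0, -2.0]), np.array([np.pi, 0.0])),
]
anchors = [np.array([0.03, 0.0]), np.array([-0.03, 0.0])]  # opposing anchors on the disc
perm, qs = retarget(fingers, anchors)
```

In this toy setup, each finger has a second inverse-kinematics solution that reaches its anchor but cuts through the disc. The penetration check is what rules that solution out.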

Where This Could Take Everyday Robots

To a lay reader, the bottom line is that GenHand gives robots a better sense of "how" to hold things, not just "where" to put their fingers. By learning from real human grasps and enforcing basic rules of physical stability, it can retarget the same human demonstration to very different robot hands while still achieving solid, reliable grasps. This makes teleoperated robots easier to control, helps learning systems train on richer examples, and brings us closer to home and workplace robots that can safely manipulate the same wide variety of objects that people do.

Citation: Qi, L., Popoola, O., Imran, M.A. et al. GenHand: generalised human grasp kinematic retargeting. npj Robot 4, 19 (2026). https://doi.org/10.1038/s44182-026-00076-1

Keywords: robotic grasping, teleoperation, human demonstration, robot hands, manipulation