Clear Sky Science · en

Affordable 3D-printed miniature robotic gripper with integrated camera for vision-based force and torque sensing

Why Tiny Soft Grippers Matter

Robots are taking on smaller and more delicate jobs, from assembling tiny gears to picking ripe berries without bursting them. Yet most robot hands still squeeze blindly, with little sense of how hard they press. This study presents a low-cost, 3D‑printed miniature gripper, nicknamed the “Seezer,” that can both grasp fragile objects and “feel” forces by using a tiny built-in camera that watches its fingertips, instead of relying on expensive force sensors.

A Gentle Hand That Sees

The Seezer is a soft, compliant robotic gripper whose fingers flex instead of hinging like metal pliers. Its key idea is to build almost everything in one piece on a consumer‑grade 3D printer: a monolithic finger part that includes flexible joints, fingertip shapes tailored to the task, and small built‑in markers. This disposable finger module slides onto a compact motor unit that houses a miniature camera and lights. As the motor rotates a worm gear, the flexible joints bend and the fingers close around an object, while the camera watches the fingertips and the space in front of the gripper.

Reading Force from Finger Bends

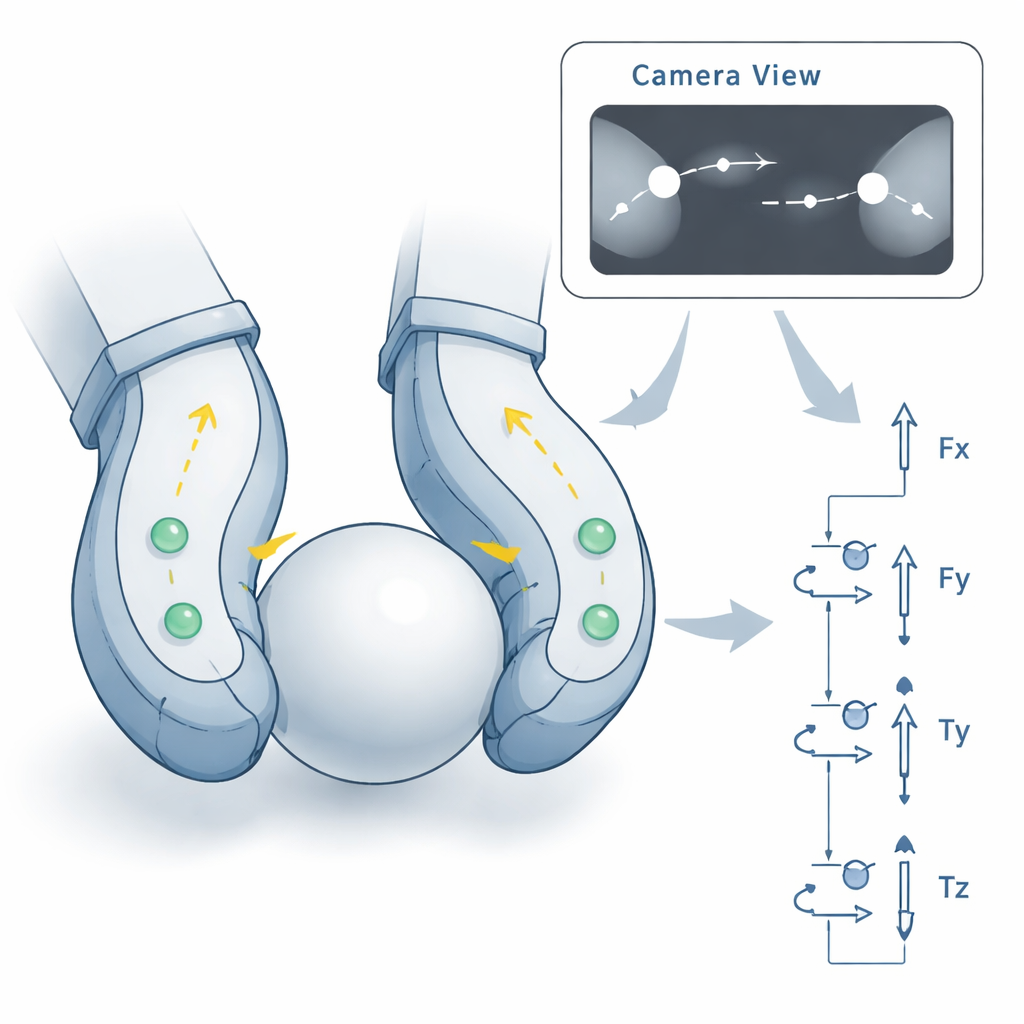

Instead of embedding wires, strain gauges, or pressure pads into the fingers, the Seezer relies on visual cues built into them. Each fingertip carries small round “fiducial” markers whose positions in the camera image shift whenever the finger deforms. Software first detects and tracks these markers in each frame. Then, using data from a short calibration sequence, simple mathematical models learn how the marker shifts relate to the actual push and pull forces on each fingertip. By combining the three fingertip forces with basic mechanics, the system estimates the overall load on the gripper along all six axes (three force components and three torque components), as well as the squeezing force between the fingers.
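The “basic mechanics” step can be illustrated with a short sketch. It assumes that each fingertip force is already known as a 3D vector acting at a known contact point in the gripper’s coordinate frame. The net force is then the sum of the fingertip forces, and the net torque is the sum of each contact point crossed with its force. The function names and the choice of the gripper-frame origin as the reference point are illustrative assumptions, not the authors’ code.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Cross product a x b of two 3D vectors."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def net_wrench(points: List[Vec3], forces: List[Vec3]) -> Tuple[Vec3, Vec3]:
    """Combine fingertip forces into one net force and one net torque.

    points: contact points in metres, in the gripper frame (assumed known).
    forces: force vectors in newtons estimated at those points.
    Torques are taken about the gripper-frame origin (an assumed convention).
    """
    if len(points) != len(forces):
        raise ValueError("need exactly one force per contact point")
    # Net force: component-wise sum of all fingertip forces.
    force = tuple(sum(f[k] for f in forces) for k in range(3))
    # Net torque: sum of moments r x F of each fingertip force.
    moments = [cross(p, f) for p, f in zip(points, forces)]
    torque = tuple(sum(m[k] for m in moments) for k in range(3))
    return force, torque


# Hypothetical example: two opposing fingertips 5 mm from the axis squeeze
# inward, and one of them is also pushed sideways by an external contact.
tips = [(0.005, 0.0, 0.0), (-0.005, 0.0, 0.0)]
loads = [(-0.5, 0.2, 0.0), (0.5, 0.0, 0.0)]
net_force, net_torque = net_wrench(tips, loads)
```

In this example the equal and opposite squeezing forces cancel in the net force, so only the sideways push shows up, together with the small twist it creates about the gripper axis. This is why a separate grip-force estimate is needed alongside the six-axis load.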

How Well It Feels Forces

To check the accuracy of this camera-based sensing, the authors compared the Seezer’s estimates with readings from a high‑precision commercial force/torque sensor in a controlled laboratory setup. With one finger design (stiffer tips), the gripper measured gripping forces up to about 1.1 newtons with typical errors between 8% and 17%, and full six‑axis forces and torques with errors mostly between 8% and 24%. A softer fingertip version traded maximum force for sensitivity: it covered a smaller force range but showed comparable percentage errors. Importantly, the models needed only 31 to 141 calibration data points, far fewer than the thousands of images often required by deep‑learning methods that work on full camera frames.
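One plausible reason so few calibration points suffice is that a simple model has very few unknowns. The sketch below assumes a linear model from one fingertip’s marker shifts (dx, dy) to one force component, fitted by ordinary least squares, and reports error as root-mean-square error divided by the range of the reference forces. The model form, the error metric, and all function names are assumptions for illustration; the summary only says “simple mathematical models” and gives percentage errors.

```python
import math
from typing import List, Sequence


def solve_linear_system(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("calibration data do not determine the model")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        rest = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - rest) / m[i][i]
    return x


def fit_calibration(shifts: Sequence[Sequence[float]], forces: Sequence[float]) -> List[float]:
    """Least-squares fit of force = w0 + w1*dx + w2*dy via the normal equations."""
    rows = [[1.0, *s] for s in shifts]  # leading 1.0 provides the intercept w0
    k = len(rows[0])
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    aty = [sum(r[i] * y for r, y in zip(rows, forces)) for i in range(k)]
    return solve_linear_system(ata, aty)


def predict(weights: Sequence[float], shift: Sequence[float]) -> float:
    """Evaluate the fitted linear model for one set of marker shifts."""
    return weights[0] + sum(w * s for w, s in zip(weights[1:], shift))


def range_normalized_rmse(predicted: Sequence[float], reference: Sequence[float]) -> float:
    """RMSE divided by the span of the reference forces (an assumed metric)."""
    n = len(reference)
    rmse = math.sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)) / n)
    return rmse / (max(reference) - min(reference))


# Synthetic, noise-free calibration set of 31 samples (the smallest set size
# reported), generated from a made-up linear law.
shifts = [(0.1 * i, 0.2 * (i % 5)) for i in range(31)]
forces = [0.05 + 0.4 * dx - 0.3 * dy for dx, dy in shifts]
weights = fit_calibration(shifts, forces)
error = range_normalized_rmse([predict(weights, s) for s in shifts], forces)
```

With noise-free synthetic data the fit recovers the made-up coefficients and the normalized error is essentially zero; real marker tracks would give a nonzero error. The point is that three parameters per force component can be pinned down by a few dozen samples, whereas a deep network trained on whole images has far more parameters to constrain.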

From Tiny Gears to Soft Berries

Two demonstration tasks highlight what this gripper could do in real‑world settings. In one, the Seezer repeatedly picked small 3D‑printed gears off axles, moved them, and placed them back, using the internal camera both to align the gear keyway with the axle and to monitor finger motion. This mimics fine industrial assembly work in tight spaces. In another, the gripper harvested redcurrants from their stems. Here, the system watched its own estimated gripping force in real time and stopped closing once a preset force threshold was reached, so that the berry was plucked without being crushed. Both examples ran on inexpensive electronics and showed that one design could handle rigid and soft objects of a few millimeters in size.
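The berry-picking stop rule amounts to a simple loop: close a little, read the estimated grip force, and stop once it reaches the preset threshold. In this sketch, `read_force` and `step_closed` are hypothetical stand-ins for the camera-based force estimate and the motor command, and a toy “berry” model with made-up numbers replaces real hardware.

```python
from typing import Callable


def close_until_threshold(
    read_force: Callable[[], float],
    step_closed: Callable[[], None],
    threshold_n: float,
    max_steps: int = 1000,
) -> float:
    """Close the gripper step by step until the estimated grip force reaches threshold_n.

    read_force and step_closed are hypothetical hooks for the vision-based
    force estimate and the motor command. Returns the force at which it stopped.
    """
    for _ in range(max_steps):
        force = read_force()
        if force >= threshold_n:
            return force  # stop closing: the object is held, not crushed
        step_closed()
    raise RuntimeError("force threshold not reached; object may be missing")


class ToyBerry:
    """Toy stand-in: grip force rises linearly once the fingers touch the berry."""

    def __init__(self, contact_step: int, newtons_per_step: float) -> None:
        self.position = 0
        self.contact_step = contact_step
        self.newtons_per_step = newtons_per_step

    def step_closed(self) -> None:
        self.position += 1

    def read_force(self) -> float:
        return max(0, self.position - self.contact_step) * self.newtons_per_step


berry = ToyBerry(contact_step=10, newtons_per_step=0.25)
stop_force = close_until_threshold(berry.read_force, berry.step_closed, threshold_n=1.0)
```

In a real system each force reading arrives only once per camera frame, so the gripper may keep closing briefly after the threshold is crossed. Smaller closing steps near the threshold would be one way to limit that overshoot.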

Challenges and Future Uses

The Seezer is still a proof of concept and has limitations. The marker tracking works best in steady, well-lit scenes with uncluttered backgrounds; changing lighting, shiny surfaces, and complex motions can cause tracking errors. The camera’s modest frame rate also constrains how quickly the system can react for tight force control or rich haptic feedback. The finger materials may fatigue or change behavior over long use, and the team has not yet systematically tested performance over extended periods. The authors argue that more robust tracking algorithms or combining their hardware with modern deep‑learning force estimators could boost accuracy and reliability, while advances in 3D printing should allow further miniaturization and sterilizable disposable fingers for surgical or laboratory use.

What This Means for Everyday Robotics

In simple terms, this work shows that a small, cheap robot hand can both see and feel by watching how its own soft fingers bend. With only modest calibration data and off‑the‑shelf parts, the Seezer estimates how hard it is squeezing and in which directions contact forces and torques act, with enough accuracy for gentle handling tasks. If its robustness is improved, the same approach could help future robots handle small, fragile items such as medical devices, electronics, fruit, or even tissue in minimally invasive surgery. It would do so without bulky sensors or complex hardware, bringing sensitive touch to places where space and cost are at a premium.

Citation: Duverney, C., Gerig, N., Hüls, D. et al. Affordable 3D-printed miniature robotic gripper with integrated camera for vision-based force and torque sensing. npj Robot 4, 10 (2026). https://doi.org/10.1038/s44182-026-00075-2

Keywords: soft robotic gripper, vision-based force sensing, 3D-printed robotics, miniature manipulation, haptic feedback