Clear Sky Science

Model predictive game control for personalized and targeted interactive assistance

Robots That Feel Like Good Training Partners

Imagine a workout partner or physical therapist who always knows when to help you lift, when to let you struggle a bit, and how hard you plan to move next. This paper shows how to give contact robots—like exoskeletons used in rehab or factories—a similar kind of intuition. By mathematically "guessing" how a person intends to move over the next second or two, the robot can share effort smoothly, reduce fatigue, and subtly guide how people move and learn.

Why Sharing Effort With Robots Is Hard

When a robot is physically linked to a person—helping them move a limb or carry a heavy object—both are constantly pushing and reacting. Traditional robot controllers mostly ignore what the human is planning to do; they just chase performance targets like accuracy or energy savings. That can make the robot either too stiff and bossy, or too passive and unhelpful. Real human partners do better: they sense how the other is moving, adapt to their capabilities, and encourage different behaviors, from relaxation to intense effort. The authors argue that to get robots closer to this kind of interaction, the robot must explicitly model how the human plans movements and how much effort they are willing to invest.

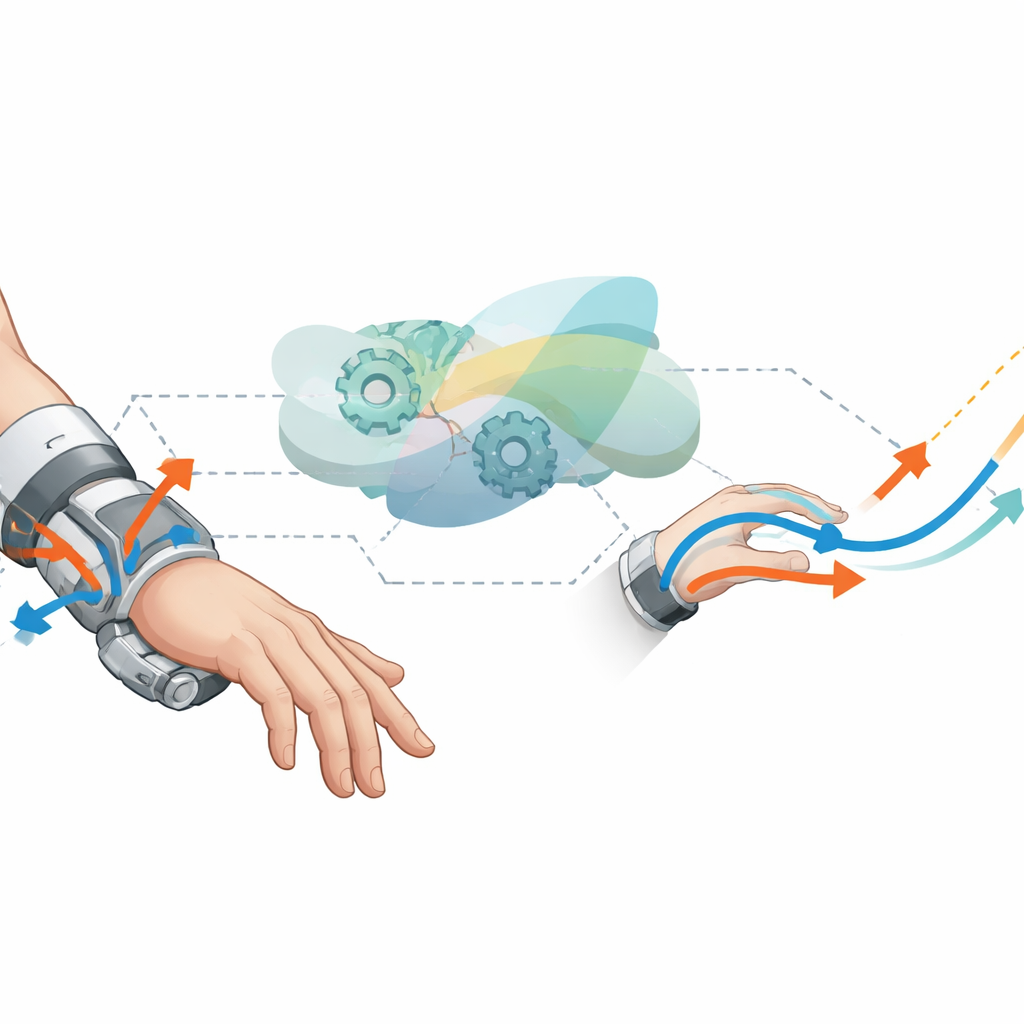

A Game-Theory View of Human–Robot Interaction

The researchers build on game theory, the mathematics of strategic interaction, to treat the human and the robot as two "players" sharing the same task. Each has its own goals: the human wants to track the desired motion while spending as little effort as possible, and the robot wants to help track the motion while also reducing the person’s effort. Crucially, both are assumed to look only a short time ahead, over a finite planning window of about one to two seconds, reflecting how people naturally plan movements. Within this window, the team derives a compact formula for a Nash equilibrium: a balanced pattern of forces in which neither the human nor the robot can improve their own outcome by changing only their own behavior. This equilibrium defines how much each should push at each moment.
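
To make the idea of a Nash equilibrium concrete, here is a minimal sketch of a toy version of such a game. It is not the authors' formula. It assumes a one-dimensional joint whose position changes in proportion to the summed human and robot forces, quadratic costs on tracking error and on each player's own force, and made-up accuracy weights `q_h` and `q_r`. The function names are hypothetical.

```python
import numpy as np

def nash_forces(x0, ref, q_h, q_r, dt=0.02):
    """Open-loop Nash equilibrium of a toy two-player tracking game.

    Assumed toy model: x[k+1] = x[k] + dt * (u_h[k] + u_r[k]).
    Each player minimizes q * sum(error^2) + sum(own_force^2).
    """
    n = len(ref)
    G = dt * np.tril(np.ones((n, n)))   # maps summed forces to future positions
    M = G.T @ G
    g = G.T @ (ref - x0)
    I = np.eye(n)
    # Setting each player's cost gradient to zero gives two coupled
    # linear equations; solving them together yields the equilibrium.
    A = np.block([[q_h * M + I, q_h * M],
                  [q_r * M,     q_r * M + I]])
    b = np.concatenate([q_h * g, q_r * g])
    u = np.linalg.solve(A, b)
    return u[:n], u[n:], G

def cost(q, x0, ref, G, u_own, u_other):
    """Cost of one player: weighted tracking error plus own effort."""
    x = x0 + G @ (u_own + u_other)
    return q * np.sum((x - ref) ** 2) + np.sum(u_own ** 2)

# A one-second planning window sampled at 50 Hz (illustrative values).
ref = np.sin(np.linspace(0.0, np.pi, 50))
u_h, u_r, G = nash_forces(0.0, ref, q_h=50.0, q_r=200.0)

# Equilibrium check: a unilateral change does not lower either player's cost.
rng = np.random.default_rng(0)
nudge = 0.1 * rng.standard_normal(len(ref))
print(cost(50.0, 0.0, ref, G, u_h, u_r) <= cost(50.0, 0.0, ref, G, u_h + nudge, u_r))
print(cost(200.0, 0.0, ref, G, u_r, u_h) <= cost(200.0, 0.0, ref, G, u_r + nudge, u_h))
```

In this simplified setting the two force profiles have the same shape and differ only in scale, with each player's share proportional to its accuracy weight. The real controller works with a richer model of the wrist and exoskeleton.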

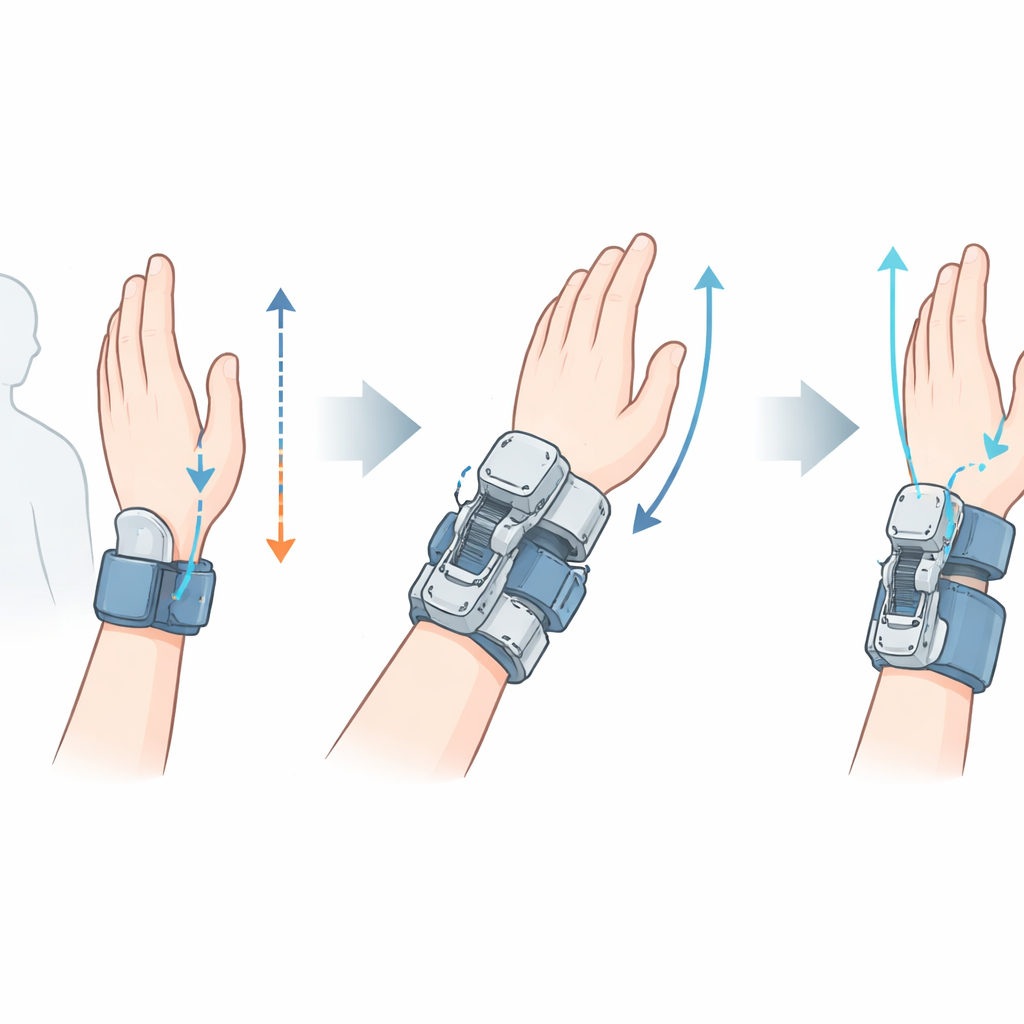

Teaching the Robot to Read Human Intent

For the robot to play this "movement game," it must first infer how strongly the human cares about accuracy versus effort—a hidden preference that varies from person to person and even over time. The authors solve this with an inverse game approach. As the person moves, sensors measure their joint angle and the torque they produce. The controller then repeatedly searches for the set of human preferences that would best explain the recent history of motion and forces. With those preferences in hand, it predicts how the human is likely to act over the next short horizon and computes the robot’s optimal assisting force. All of this runs in real time on a wrist exoskeleton that helps subjects track a moving target with their hand.
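
The search for preferences that "best explain" recent behavior can be sketched in a few lines. This is an illustration under strong assumptions, not the paper's algorithm: it uses a toy one-dimensional game in which the predicted human force has the closed form `q_h * (I + (q_h + q_r) * M)^-1 * g`, it compares predicted and observed torque only (the real controller also uses joint angle), and it tries a small hand-picked list of candidate weights. All names and numbers are hypothetical.

```python
import numpy as np

def predict_human_force(q_h, q_r, x0, ref, dt=0.02):
    """Human force predicted by a toy tracking game (assumed model).

    Assumes x[k+1] = x[k] + dt * (u_h[k] + u_r[k]) and that each player
    minimizes q * sum(error^2) + sum(own_force^2).
    """
    n = len(ref)
    G = dt * np.tril(np.ones((n, n)))   # summed forces -> future positions
    M = G.T @ G
    g = G.T @ (ref - x0)
    w = np.linalg.solve(np.eye(n) + (q_h + q_r) * M, g)
    return q_h * w                      # human share scales with q_h

def estimate_human_weight(u_h_obs, q_r, x0, ref, candidates):
    """Pick the candidate accuracy weight whose prediction best fits the data."""
    errors = [np.sum((predict_human_force(q, q_r, x0, ref) - u_h_obs) ** 2)
              for q in candidates]
    return candidates[int(np.argmin(errors))]

ref = np.sin(np.linspace(0.0, np.pi, 50))             # one-second target window
candidates = np.array([0.0, 1.0, 10.0, 50.0, 100.0, 500.0])

# Simulated "active" torque from a person with weight 50, and a "passive" one.
active = predict_human_force(50.0, 200.0, 0.0, ref)
passive = np.zeros(50)

print("active estimate:", estimate_human_weight(active, 200.0, 0.0, ref, candidates))
print("passive estimate:", estimate_human_weight(passive, 200.0, 0.0, ref, candidates))
```

Running the estimate again on each new window of data is what lets the inferred preference follow a person as they switch between engaging and relaxing.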

Humans and Robots Learn to Coordinate

The team tested their controller with thirty healthy adults in three experiments. In the first, people were told to switch between actively following the target and staying passive. The controller rapidly adjusted its internal estimate of how much the person cared about accuracy: the estimate rose during active phases and fell close to zero when the person relaxed. In the second experiment, participants alternated between trials with and without robotic assistance. With the new controller, the robot cut human joint effort and muscle activity while improving tracking accuracy. Over repeated trials, each person’s interaction pattern settled into a stable, individual "equilibrium," and the correlation between human and robot forces increased, which the authors take as evidence of growing mutual understanding. In the third experiment, the researchers introduced a single assistance knob, a meta-parameter that shifts how strongly the robot tries to minimize the person’s effort. Turning this knob smoothly changed how much effort humans chose to contribute, without degrading task performance.

Steering Behavior With a Single Dial

The assistance meta-parameter lets designers span a spectrum of interaction styles with one control: from almost no help, through equal sharing of effort, all the way to near-complete support where the robot leads and the human can relax. At intermediate settings, humans tended to coordinate best with the robot, each carrying roughly half the load. The pattern of inferred human preferences remained consistent for each person across different assistance levels—except when the robot did almost everything, at which point behaviors became more uniform because people largely stopped engaging. This shows that the robot can both uncover individual control styles and gently nudge them, for example by asking users to do more in one phase of training and less in another.
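
A rough feel for how one dial can span these styles comes from a toy calculation. The sketch below is an assumption-laden illustration, not the paper's meta-parameter: it uses a one-dimensional tracking game in which each player's equilibrium force is proportional to its accuracy weight, and it invents a mapping from a dial `alpha` in [0, 1) to the robot's weight, `q_r = q_h * alpha / (1 - alpha)`. The function name and all values are hypothetical.

```python
import numpy as np

def effort_split(alpha, q_h=50.0, n=50, dt=0.02):
    """Human and robot effort in a toy game for a hypothetical dial alpha."""
    q_r = q_h * alpha / (1.0 - alpha)        # assumed dial-to-weight mapping
    G = dt * np.tril(np.ones((n, n)))        # summed forces -> future positions
    M = G.T @ G
    ref = np.sin(np.linspace(0.0, np.pi, n)) # target over a one-second window
    g = G.T @ ref                            # joint starts at position 0
    # Shared part of the toy equilibrium; each force is its weight times w.
    w = np.linalg.solve(np.eye(n) + (q_h + q_r) * M, g)
    u_h, u_r = q_h * w, q_r * w
    return np.linalg.norm(u_h), np.linalg.norm(u_r)

for alpha in (0.0, 0.25, 0.5, 0.75, 0.95):
    e_h, e_r = effort_split(alpha)
    print(f"alpha={alpha:.2f}  human share of effort={e_h / (e_h + e_r):.2f}")
```

With this made-up mapping, the human's share of the effort falls steadily as the dial rises, with an even split at the midpoint. Real people adapt their own behavior in response to the robot, which the toy ignores.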

What This Means for Rehab and Work

To a layperson, the key message is that this controller makes robots act more like smart partners than rigid machines. By predicting how we intend to move and adjusting how much they help, robots can reduce our effort when needed, encourage us to work harder when useful, and keep movements accurate and stable. The same mathematical framework can be tuned for rehabilitation—gradually shifting effort from robot to patient—or for collaborative manufacturing, where people and robots share loads safely and efficiently. In essence, the study shows that people naturally adapt to a robot that "plays the same game" they do, opening the door to more personalized, targeted forms of interactive assistance.

Citation: Hafs, A., Farr, A., Verdel, D. et al. Model predictive game control for personalized and targeted interactive assistance. Commun Eng 5, 57 (2026). https://doi.org/10.1038/s44172-026-00605-8

Keywords: human-robot interaction, exoskeleton assistance, game-theoretic control, motor rehabilitation, shared control