Clear Sky Science · en

Bridging modalities with AI: a review of AI advances in multimodal biomedical imaging

Seeing More Than Meets the Eye

Modern medicine relies heavily on images—from X‑rays and MRI scans to microscope slides of tissue—to understand what is happening inside the body. This review explains how artificial intelligence (AI) can weave together many different types of medical images into a single, richer picture of disease. For a lay reader, the appeal is clear: these advances could mean earlier cancer detection, more precise diagnoses, and treatments tailored to the individual rather than the average patient.

Why One Picture Is No Longer Enough

Each imaging technique shows only part of the story. Radiology tools like CT, MRI and ultrasound reveal the shape and structure of organs, while nuclear scans such as PET highlight how active a tumour is. Under the microscope, pathologists see how cells are arranged, and spectroscopic methods read out chemical fingerprints of tissues. Optical methods like optical coherence tomography can zoom in on fine layers in the eye or skin. On their own, these “single‑view” snapshots can miss important clues. When combined, however, they can link how a tumour looks, how it behaves, and what molecules drive it, giving doctors a more complete understanding of disease.

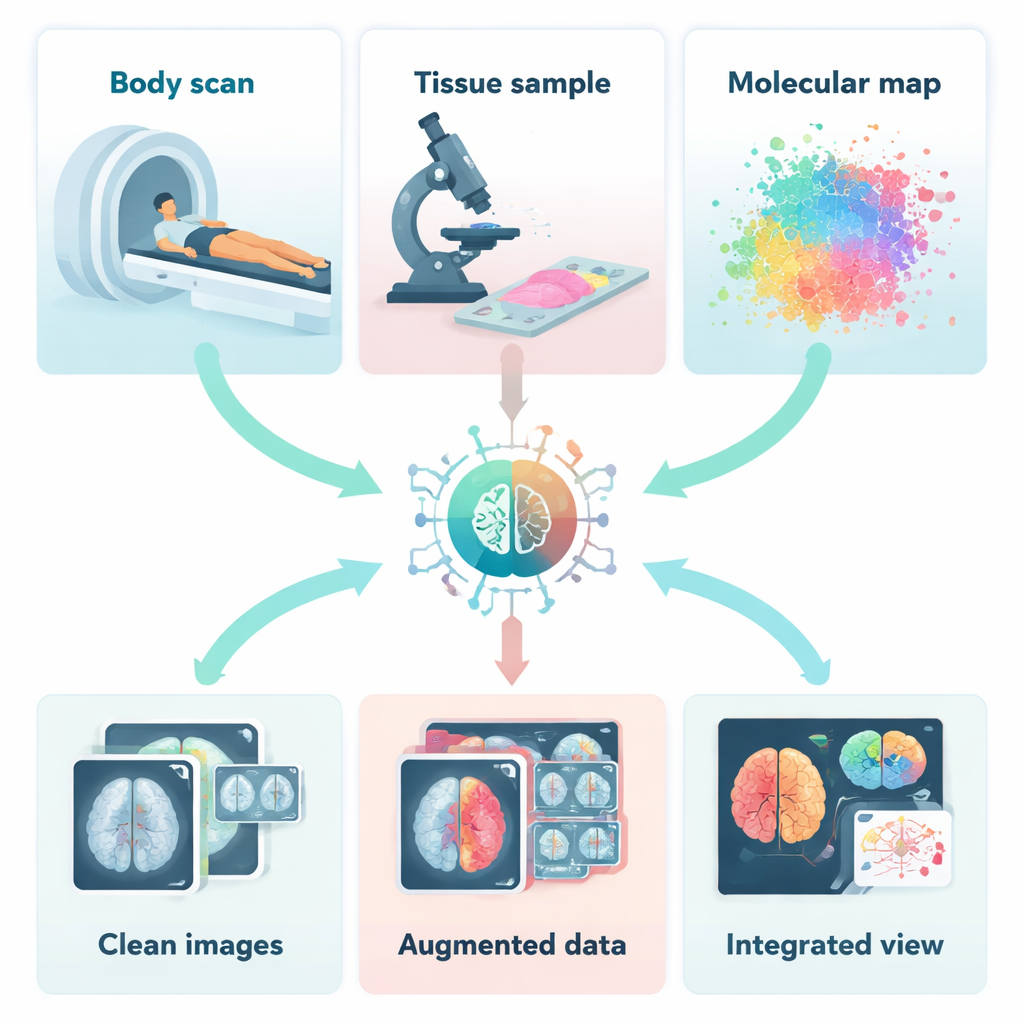

How AI Cleans and Complements Medical Images

Before different images can be combined, they must be cleaned, aligned and sometimes even created from scratch. The authors describe how AI helps remove noise and motion blur from scans, rescue detail from low‑dose CT or PET images, and correct artefacts that would otherwise confuse doctors and computers alike. Deep learning systems can learn, from examples, how a clean image should look and then restore new scans accordingly. Other AI models generate realistic synthetic images to “bulk up” small datasets or fill in missing types of scans. This is especially valuable for rare diseases, where there may be very few real examples on which to train diagnostic tools.
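To make "learning, from examples, how a clean image should look" concrete, here is a deliberately tiny sketch in Python with NumPy. It is not a deep network. As a stand-in, it fits a single 3 × 3 linear filter by least squares so that filtered noisy pixels match their clean counterparts, then applies that learned filter to a new, unseen scan. The "scans" are synthetic Gaussian blobs, and the noise level, image size and helper names (`patches`, `learn_filter`, `denoise`, `blob_image`) are illustrative assumptions, not anything taken from the review.

```python
import numpy as np

def patches(img: np.ndarray, k: int = 3) -> np.ndarray:
    """One row per pixel holding its k x k neighbourhood (edges reflect-padded)."""
    r = k // 2
    padded = np.pad(img, r, mode="reflect")
    windows = np.lib.stride_tricks.sliding_window_view(padded, (k, k))
    return windows.reshape(-1, k * k)

def learn_filter(noisy_imgs, clean_imgs, k: int = 3) -> np.ndarray:
    """Least-squares filter weights mapping noisy neighbourhoods to clean centre pixels."""
    X = np.concatenate([patches(n, k) for n in noisy_imgs])
    y = np.concatenate([c.ravel() for c in clean_imgs])
    weights, *_ = np.linalg.lstsq(X, y, rcond=None)
    return weights

def denoise(noisy: np.ndarray, weights: np.ndarray, k: int = 3) -> np.ndarray:
    """Apply the learned filter to a new noisy image."""
    return (patches(noisy, k) @ weights).reshape(noisy.shape)

def blob_image(rng, size: int = 32) -> np.ndarray:
    """Synthetic stand-in for a clean scan: a few smooth Gaussian blobs."""
    yy, xx = np.mgrid[0:size, 0:size]
    img = np.zeros((size, size))
    for _ in range(5):
        cy, cx = rng.uniform(0, size, 2)
        s = rng.uniform(2, 6)
        img += np.exp(-((yy - cy) ** 2 + (xx - cx) ** 2) / (2 * s**2))
    return img

rng = np.random.default_rng(0)
sigma = 0.3  # assumed noise level

# Training pairs: the same clean image with and without added noise.
clean_train = [blob_image(rng) for _ in range(20)]
noisy_train = [c + rng.normal(0.0, sigma, c.shape) for c in clean_train]
w = learn_filter(noisy_train, clean_train)

# Restore an image the filter has never seen.
clean_test = blob_image(rng)
noisy_test = clean_test + rng.normal(0.0, sigma, clean_test.shape)
restored = denoise(noisy_test, w)

mse_noisy = np.mean((noisy_test - clean_test) ** 2)
mse_restored = np.mean((restored - clean_test) ** 2)
print(f"MSE noisy: {mse_noisy:.4f}  MSE restored: {mse_restored:.4f}")
```

Deep denoisers follow the same supervised recipe (pairs of degraded and clean images) but replace the single linear filter with many layers of non-linear, learned parameters.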

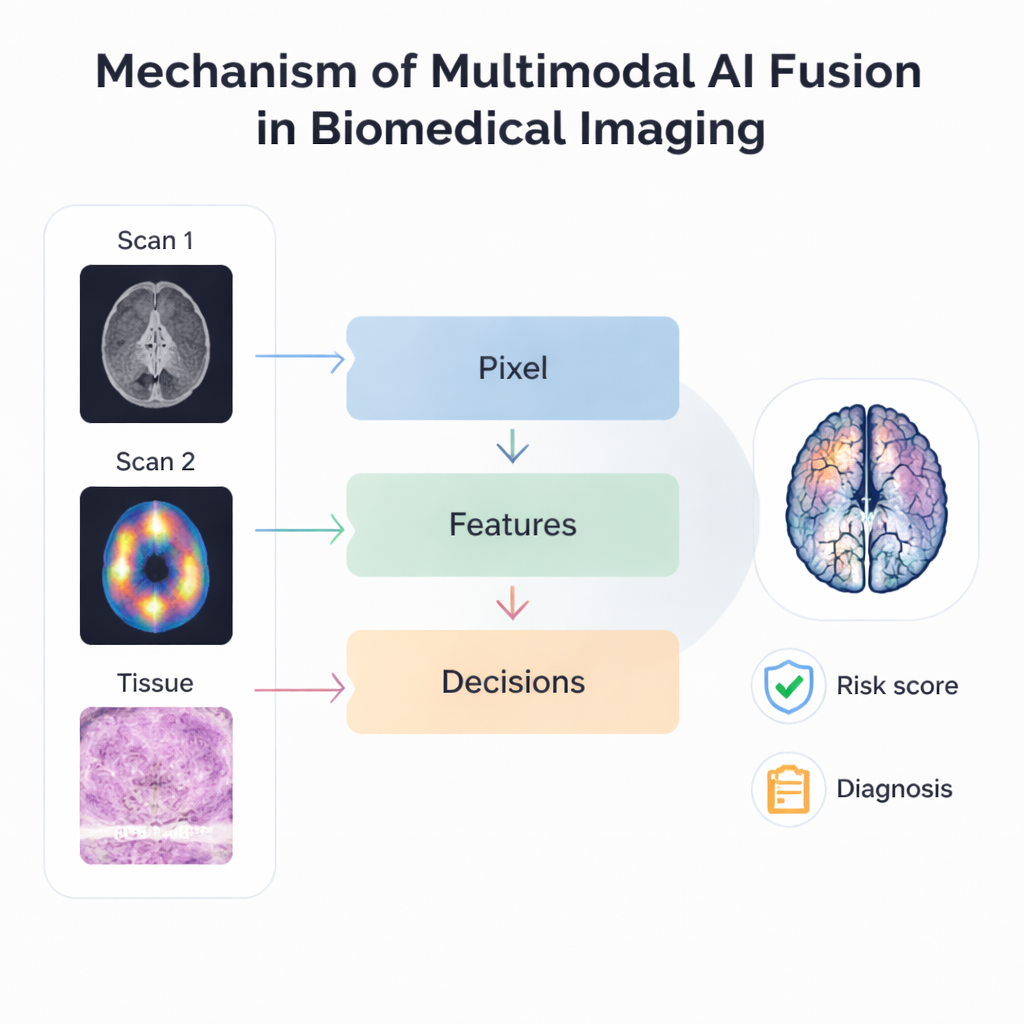

Blending Different Views Into One Story

The heart of the review is how AI actually fuses multiple imaging sources. At the most basic level, pixel‑based methods overlay scans such as MRI and PET so that structure and activity appear in a single, sharper image. More advanced approaches extract key patterns or “features” from each modality and combine those patterns rather than the raw pictures, making the process more robust to differences in resolution and alignment. Late‑stage or “decision‑level” fusion goes further, allowing separate AI models to analyse different images and then vote or average their predictions. Hierarchical systems mix several of these ideas, stacking different fusion stages so that they can handle everything from tiny cellular details to organ‑wide changes within one framework.
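The three fusion levels described above can be contrasted in a few lines of Python. This is a minimal sketch under stated assumptions: the MRI and PET arrays are random placeholders assumed to be already co-registered on the same pixel grid, the three "features" per image are hypothetical hand-crafted summaries standing in for learned ones, and the per-modality probabilities stand in for the outputs of separately trained models. Hierarchical schemes would chain such stages and are not shown.

```python
import numpy as np

def _normalise(x: np.ndarray) -> np.ndarray:
    """Min-max scale to [0, 1] so modalities with different units can be blended."""
    lo, hi = x.min(), x.max()
    return (x - lo) / (hi - lo) if hi > lo else np.zeros_like(x, dtype=float)

# Pixel level: blend two co-registered images pixel by pixel.
def pixel_fusion(mri: np.ndarray, pet: np.ndarray, alpha: float = 0.5) -> np.ndarray:
    return alpha * _normalise(mri) + (1 - alpha) * _normalise(pet)

# Feature level: summarise each modality separately, then join the summaries.
def simple_features(img: np.ndarray) -> np.ndarray:
    """Hypothetical features: mean, standard deviation and 90th percentile."""
    return np.array([img.mean(), img.std(), np.percentile(img, 90)])

def feature_fusion(images) -> np.ndarray:
    return np.concatenate([simple_features(im) for im in images])

# Decision level: combine per-model class probabilities, shape (n_models, n_classes).
def decision_fusion(probs, weights=None) -> np.ndarray:
    return np.average(np.asarray(probs, dtype=float), axis=0, weights=weights)

def majority_vote(probs) -> int:
    votes = np.argmax(np.asarray(probs), axis=1)  # each model's predicted class
    return int(np.bincount(votes).argmax())

rng = np.random.default_rng(1)
mri = rng.random((64, 64)) * 1000.0  # arbitrary intensity units
pet = rng.random((64, 64)) * 5.0

fused = pixel_fusion(mri, pet)        # one image, values in [0, 1]
fv = feature_fusion([mri, pet])       # 3 features per modality -> 6 values

p = [[0.7, 0.3], [0.4, 0.6], [0.8, 0.2]]  # three models, two classes
print(decision_fusion(p))  # averaged probabilities, approx. [0.633, 0.367]
print(majority_vote(p))    # 0 (two of three models pick class 0)
```

Feature-level fusion only needs each modality summarised, not pixel-aligned, which is why it tolerates differences in resolution better than pixel blending.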

From Better Images to Better Care

These fusion techniques are already being tried in many clinical scenarios. Combining multiple MRI sequences improves brain tumour segmentation, while joining mammograms, ultrasound and MRI boosts breast cancer detection and risk prediction. Linking digital pathology slides with radiology images helps forecast tumour genetics and patient survival without needing extra tests. AI also supports “data‑driven imaging,” where subtle patterns in scans are correlated with gene activity or patient outcomes, promising more accurate prognosis and better selection of therapies. New foundation models and multimodal large language models aim to generalise across tasks and imaging types, and even connect images with written clinical notes, moving toward universal tools that can adapt to many diseases and hospitals.
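One way to picture "data-driven imaging" is a radiogenomics-style screen: measure simple features inside each patient's tumour and check how strongly each one tracks a gene-expression value. The sketch below uses an entirely synthetic cohort in which tumour brightness is tied to the gene by construction while tumour size is not. The feature set, the use of Pearson correlation and all names are illustrative assumptions; real studies use far more features, independent validation cohorts and correction for multiple testing.

```python
import numpy as np

def tumour_features(img: np.ndarray, mask: np.ndarray) -> dict:
    """Hypothetical minimal features measured inside a tumour mask."""
    vals = img[mask]
    return {"mean": float(vals.mean()), "std": float(vals.std()), "volume": float(mask.sum())}

def correlate_features(images, masks, gene_expr) -> dict:
    """Pearson correlation of each image feature with a per-patient gene value."""
    feats = [tumour_features(im, m) for im, m in zip(images, masks)]
    gene_expr = np.asarray(gene_expr, dtype=float)
    result = {}
    for name in feats[0]:
        x = np.array([f[name] for f in feats])
        result[name] = float(np.corrcoef(x, gene_expr)[0, 1])
    return result

# Synthetic cohort: brightness depends on the gene BY CONSTRUCTION; size does not.
rng = np.random.default_rng(2)
n_patients, size = 30, 48
gene = rng.normal(size=n_patients)
yy, xx = np.mgrid[0:size, 0:size]
images, masks = [], []
for g in gene:
    radius = rng.uniform(6, 14)  # tumour size drawn independently of the gene
    mask = (yy - size // 2) ** 2 + (xx - size // 2) ** 2 <= radius**2
    img = rng.normal(0.0, 0.2, (size, size))          # background noise
    img[mask] += 1.0 + 0.5 * g + rng.normal(0.0, 0.1)  # gene-linked brightness
    images.append(img)
    masks.append(mask)

corr = correlate_features(images, masks, gene)
print(corr)  # "mean" is expected to be strong; "volume" only reflects sampling noise
```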

Trust, Fairness and the Road Ahead

Despite the excitement, the authors stress that important challenges remain. Medical images vary widely between hospitals, machines and patient groups, which can make AI brittle or biased if that variation is not carefully addressed. Many powerful models behave like black boxes, making it hard for clinicians to see why a particular decision was made. The review discusses efforts to highlight which regions of each image most strongly influence predictions and to design fairer, more transparent systems. It also notes ethical and practical issues around privacy, data sharing and the heavy computing demands of large models. Looking forward, the authors envision specialised AI “agents” that continuously monitor imaging, wearable sensors and health records, assist clinicians in real time, and help coordinate long‑term care. For patients, the bottom line is that combining many kinds of medical images with AI could mean faster answers, more personalised treatments and, ultimately, better outcomes, provided these technologies are developed and deployed responsibly.
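Occlusion sensitivity is one simple, model-agnostic way to highlight which regions of each image most strongly influence a prediction: blank out one patch at a time, in one modality at a time, and record how much the model's score drops. This sketch does not claim to be the method used in the review. `toy_model` is a hypothetical scorer that, by design, reads one MRI region and one PET region, so the resulting maps should recover exactly those regions; the patch size, stride and fill value are assumptions.

```python
import numpy as np

def occlusion_maps(model, images, patch=8, stride=8, fill=0.0):
    """For each modality, blank one patch at a time and record the drop in model score."""
    base = model(images)
    maps = []
    for k, img in enumerate(images):
        h, w = img.shape
        heat = np.zeros(((h - patch) // stride + 1, (w - patch) // stride + 1))
        for i in range(heat.shape[0]):
            for j in range(heat.shape[1]):
                occluded = [im.copy() for im in images]  # other modalities untouched
                y, x = i * stride, j * stride
                occluded[k][y:y + patch, x:x + patch] = fill
                heat[i, j] = base - model(occluded)  # larger drop = more influential
        maps.append(heat)
    return maps

def toy_model(images):
    """Hypothetical 'tumour score' that reads one MRI region and one PET region."""
    mri, pet = images
    return 0.7 * mri[16:24, 40:48].mean() + 0.3 * pet[0:8, 0:8].mean()

mri = np.ones((64, 64))
pet = np.ones((64, 64))
mri_map, pet_map = occlusion_maps(toy_model, [mri, pet])
print(np.unravel_index(mri_map.argmax(), mri_map.shape))  # patch covering rows 16-23, cols 40-47
print(np.unravel_index(pet_map.argmax(), pet_map.shape))  # patch covering rows 0-7, cols 0-7
```

Gradient-based methods such as Grad-CAM are common alternatives; occlusion is slower but needs nothing beyond the ability to call the model.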

Citation: Doan, L.M.T., Shahhosseini, K., Verma, S. et al. Bridging modalities with AI: a review of AI advances in multimodal biomedical imaging. Commun Eng 5, 30 (2026). https://doi.org/10.1038/s44172-026-00602-x

Keywords: multimodal biomedical imaging, medical AI, image fusion, radiology and pathology, precision medicine