Clear Sky Science

Machine learning-facilitated real-time acoustic trapping in time-varying multi-medium environments toward magnetic resonance imaging-guided microbubble manipulation

Guiding Tiny Drug Carriers with Sound and Scans

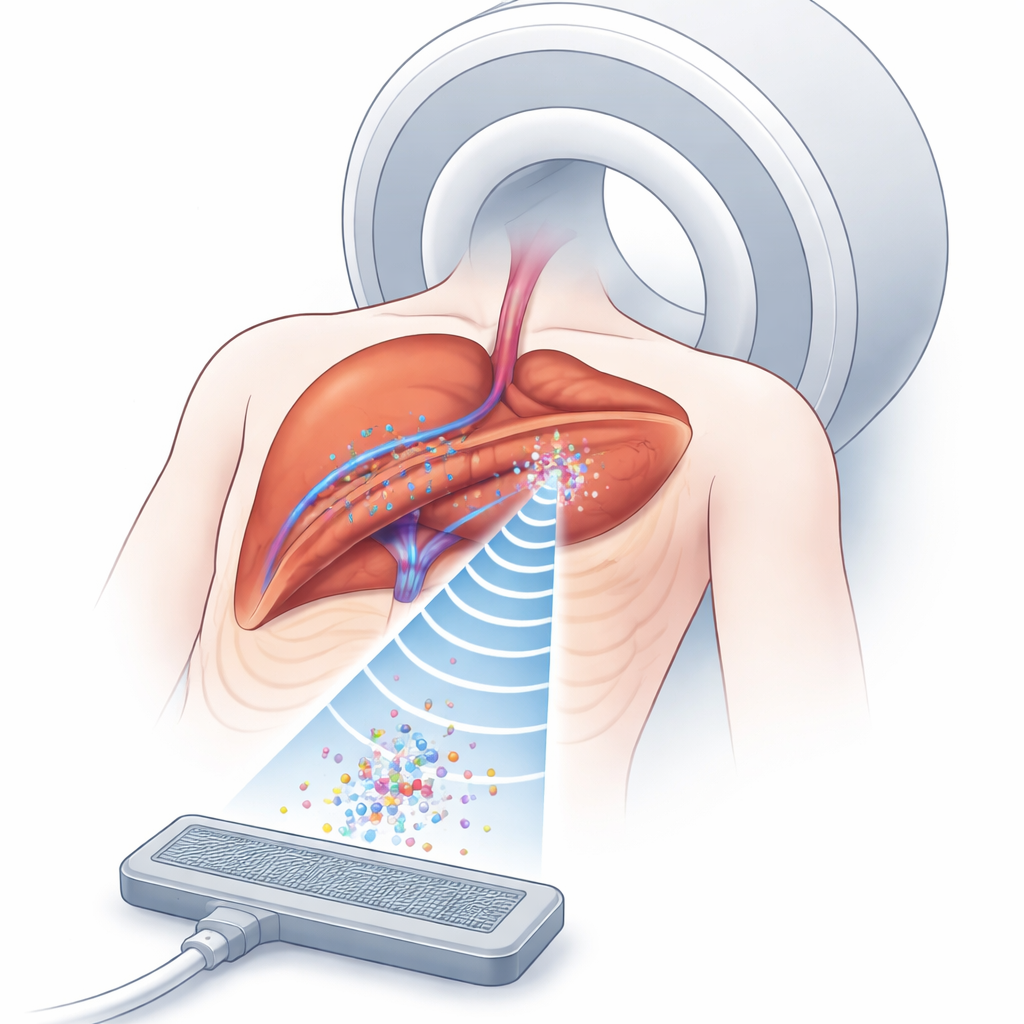

Modern cancer treatments increasingly rely on smart drug carriers that can deliver medicine directly to tumors while sparing healthy tissue. This study explores a way to steer such carriers inside the body using ultrasound "traps" guided by magnetic resonance imaging (MRI). By combining sound waves, medical imaging, and machine learning, the researchers aim to keep drug-carrying microbubbles parked near moving tumors, even as the body shifts with every breath.

Why Trapping Microscopic Bubbles Matters

Drug-carrying microbubbles travel through blood vessels and can release their payload when triggered by ultrasound. The challenge is keeping enough of these bubbles in the right spot, long enough, deep inside the body. Ultrasound can create invisible pockets of force—acoustic traps—that hold small objects in mid-fluid without touching them. MRI, meanwhile, can see both the tissue and the pattern of ultrasound effects, even inside organs. Putting these two tools together offers a way to concentrate drug carriers around tumors more precisely than with drugs alone. But in real people, tissues of different types—fat, muscle, organs, and moving lungs—bend and distort sound waves, making it very hard to form and maintain a stable trap at the exact tumor location.

The Problem of a Moving, Layered Body

In simple settings like air or water, engineers already know how to use phased arrays of ultrasound emitters to push, pull, and spin tiny objects. Inside the body, however, sound must cross multiple layers with different densities and speeds, and the boundaries between them cause refraction and distortion. Traditional computational methods can, in principle, correct for this by calculating how long sound takes to travel from each emitter to the target point. But such approaches slice the body into millions of tiny blocks and simulate wave propagation through each—an extremely time-consuming process that only works if the tissues remain almost perfectly still. Breathing alone can shift abdominal tissues by several millimeters, quickly making any precomputed solution outdated.
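
To make the timing problem concrete, here is a minimal Python sketch of a travel-time calculation across a single flat boundary between two media. It is not the authors' simulator. It relies on Fermat's principle (sound follows the path of least travel time) and a simple search for the point where the path crosses the boundary. The emitter spacing, boundary height, and the sound speed above the boundary are all assumed values chosen for illustration; only the 14-by-14 array size comes from the study.

```python
import math

C_AIR = 343.0        # m/s, speed of sound in air at about 20 C
C_UPPER = 270.0      # m/s, hypothetical speed in the gas above the boundary
INTERFACE_Z = 0.05   # m, hypothetical height of the flat boundary
PITCH = 0.010        # m between emitter centers, assumed
N = 14               # 14-by-14 array, as described in the article


def travel_time(emitter, target, interface_z, c_below, c_above, iters=100):
    """Shortest travel time (s) from emitter to target across a flat boundary.

    The travel time is a convex function of where the path crosses the
    boundary, so a ternary search converges on the minimum.
    """
    ex, ey, ez = emitter
    tx, ty, tz = target
    d = math.hypot(tx - ex, ty - ey)   # horizontal separation
    h1 = interface_z - ez              # height covered below the boundary
    h2 = tz - interface_z              # height covered above the boundary

    def time_via(s):
        # s is the horizontal distance from the emitter to the crossing point
        return math.hypot(s, h1) / c_below + math.hypot(d - s, h2) / c_above

    lo, hi = 0.0, d
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if time_via(m1) < time_via(m2):
            hi = m2
        else:
            lo = m1
    return time_via((lo + hi) / 2)


def emitter_grid(n=N, pitch=PITCH):
    """Centers of an n-by-n grid in the z = 0 plane, centered on the origin."""
    offset = (n - 1) / 2.0
    return [((i - offset) * pitch, (j - offset) * pitch, 0.0)
            for i in range(n) for j in range(n)]


def firing_delays(target, emitters):
    """Delays (s) that make every emitter's wave arrive at the target together."""
    times = [travel_time(e, target, INTERFACE_Z, C_AIR, C_UPPER) for e in emitters]
    longest = max(times)
    # The emitter with the longest travel time fires first (delay 0).
    return [longest - t for t in times]


emitters = emitter_grid()
delays = firing_delays((0.0, 0.0, 0.10), emitters)
```

Even this toy version must be recomputed for all 196 emitters whenever the target or the boundary moves. A full wave simulation through irregular, layered tissue is far more expensive, which is the bottleneck the study addresses.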

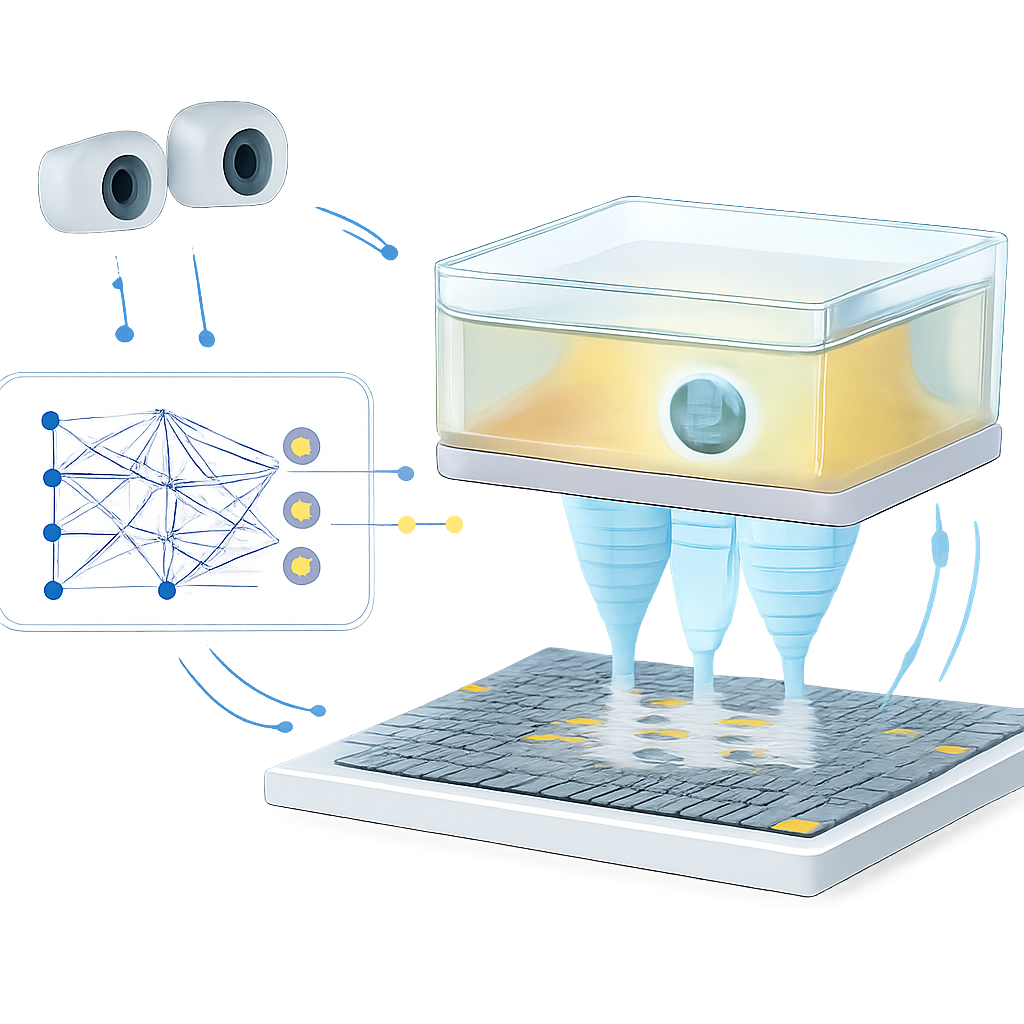

Teaching a Model to Predict Sound Pathways

The authors tackle this bottleneck with a learning-based model that acts as a fast shortcut: instead of simulating sound waves every time, they train a neural network to predict how long each ultrasound pulse will need to reach a target point. They first build a detailed virtual environment using a gas-filled chamber separated from air by a thin plastic film, mimicking how sound passes through different tissue layers. Using a physics-based simulator, they generate a training set of sound travel times between many targets and a 14-by-14 ultrasound array. They also let the chamber shift in two directions to imitate motion, and describe its position by tracking three visual markers, similar to how future MRI-visible markers could track a patient’s breathing. The trained network learns to map the desired trap position plus the chamber position directly to the required timing pattern for all 196 emitters, reaching microsecond-level accuracy with each prediction taking only about 26 milliseconds.
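
The sketch below illustrates only the shape of such a predictor: a handful of numbers in, 196 timing values out. It is a small fully connected network written in plain Python with random placeholder weights, so its outputs are meaningless until trained. The input encoding (three target coordinates plus a two-component chamber shift), the layer widths, and all function names are assumptions made for illustration, not details taken from the paper.

```python
import math
import random

N_EMITTERS = 196   # 14-by-14 array, from the article
N_INPUTS = 5       # target (x, y, z) + chamber shift (dx, dy); assumed encoding
HIDDEN = 64        # hypothetical hidden-layer width


def init_layer(n_in, n_out, rng):
    """Random placeholder weights; a real model would load trained values."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(x, layer, activation=None):
    """One fully connected layer: weighted sums plus bias, then an optional activation."""
    weights, biases = layer
    out = [sum(w * xi for w, xi in zip(row, x)) + b
           for row, b in zip(weights, biases)]
    return [activation(v) for v in out] if activation else out


def predict_timing(target, chamber_shift, layers):
    """Map (target position, chamber shift) to one timing value per emitter."""
    x = list(target) + list(chamber_shift)
    h = dense(x, layers[0], math.tanh)
    h = dense(h, layers[1], math.tanh)
    return dense(h, layers[2])   # linear output layer for regression


rng = random.Random(0)
layers = [init_layer(N_INPUTS, HIDDEN, rng),
          init_layer(HIDDEN, HIDDEN, rng),
          init_layer(HIDDEN, N_EMITTERS, rng)]
timing = predict_timing((0.0, 0.0, 0.10), (0.002, -0.001), layers)
```

The appeal of this design is that one prediction costs a fixed, small number of multiplications regardless of how complicated the medium is. All of the expensive physics is paid for once, when the training data are generated.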

Closing the Loop with Vision and Rapid Updates

Speed alone is not enough; the trap must also adapt when the environment changes unexpectedly. To demonstrate this, the team builds a closed-loop control system. Stereo cameras watch a small polystyrene bead held aloft by the acoustic trap inside the moving chamber. When the bead drifts from its target beyond a set threshold, the system nudges the target position, feeds the updated coordinates and chamber pose into the learning model, and quickly refreshes the phase pattern driving the array. In experiments, the system can update the phase pattern up to 15 times per second, steering the bead along H-, K-, and U-shaped paths with about 1 millimeter average error—comparable to the positioning precision of some clinical focused ultrasound systems. The same feedback principle also reduces how long the bead strays from its intended spot when the chamber moves, showing that the control loop can compensate for motion and unmodeled effects from the plastic film and support structure.
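
The feedback idea can be sketched in a few lines of Python. This is a toy model, not the authors' controller: the bead is assumed to settle at the commanded trap position plus a fixed, unknown offset standing in for unmodeled effects, and the threshold, correction gain, and offset values are all hypothetical.

```python
import math

THRESHOLD = 0.0005   # m, hypothetical drift tolerance
GAIN = 0.5           # hypothetical fraction of the error corrected per update


def control_step(desired, measured, commanded,
                 threshold=THRESHOLD, gain=GAIN):
    """Return the next commanded trap position.

    If the bead is within `threshold` of the desired point, keep the command;
    otherwise shift the command to counter the observed error.
    """
    if math.dist(desired, measured) <= threshold:
        return list(commanded)
    return [c + gain * (d - m)
            for c, d, m in zip(commanded, desired, measured)]


def simulated_bead(commanded, bias=(0.002, -0.001, 0.0015)):
    """Toy plant: the bead settles at the command plus a fixed unmodeled offset."""
    return [c + b for c, b in zip(commanded, bias)]


desired = [0.0, 0.0, 0.10]
commanded = list(desired)
errors = []
for _ in range(15):   # roughly one second at 15 updates per second
    measured = simulated_bead(commanded)   # stands in for the stereo-camera estimate
    errors.append(math.dist(desired, measured))
    commanded = control_step(desired, measured, commanded)
    # In the real loop, `commanded` and the chamber pose would be passed to the
    # learned model at this point to refresh the phase pattern.
```

In this toy model the position error halves with each correction until it falls inside the tolerance, after which the command stops changing. The real system faces noise, bead dynamics, and a moving chamber, so its behavior is less tidy, but the structure of measure, compare, nudge, and re-predict is the same.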

What This Means for Future Treatments

To a non-specialist, the core message is that the researchers have built a kind of remote-controlled, non-contact “tractor beam” that could one day park drug-laden bubbles near a tumor and keep them there, even as the patient breathes. Their machine learning model replaces heavy simulations with a fast predictor, while cameras (and eventually MRI markers) tell the system how the body is moving so the trap can be retuned on the fly. Although the present work uses air, gases, and plastic rather than real tissues, and levitates a plastic bead instead of true microbubbles, it demonstrates real-time control in a moving, layered medium. With stronger hardware, higher ultrasound frequencies, and MRI-based motion tracking, this approach could evolve into a clinical tool for MRI-guided, robot-assisted ultrasound therapies that deliver drugs more accurately and safely deep inside the body.

Citation: Wu, M., Li, X. & Tang, T. Machine learning-facilitated real-time acoustic trapping in time-varying multi-medium environments toward magnetic resonance imaging-guided microbubble manipulation. Commun Eng 5, 52 (2026). https://doi.org/10.1038/s44172-026-00600-z

Keywords: acoustic trapping, MRI-guided therapy, microbubble drug delivery, machine learning in ultrasound, noninvasive robotic manipulation