Clear Sky Science · en

Adaptive hierarchical learning for uncertainty-aware distributed energy resource planning

Smarter local power for a changing world

As homes and businesses connect more rooftop solar panels, batteries, electric vehicles, and other local energy devices, the neighborhood grid is becoming far more complex. Utilities and private owners must decide where to place these resources and how large they should be, even though no one can perfectly predict future sunshine, electricity demand, or the inner workings of the power network. This study introduces a new artificial-intelligence-based planning approach that learns from real-world data instead of relying on rigid mathematical models, promising cheaper and more reliable clean power for everyday consumers.

The challenge of guessing the future grid

Modern distribution grids host many types of distributed energy resources, including solar farms, battery storage, small gas turbines, and devices that fine-tune voltage. These assets are spread across many locations and influenced by weather, human behavior, and market forces, creating multiple layers of uncertainty. Traditional planning tools try to handle this by building detailed models of the network and then simulating a limited set of “what if” scenarios, such as a few typical days of high or low demand. But third-party operators, like solar or battery owners and virtual power plants, often do not know the full grid layout or its safety limits because of privacy and regulatory barriers. As a result, they must make long-term investment and daily operating decisions without a complete picture, and the old scenario-based methods struggle to stay reliable and affordable in this information-poor setting.

A two-level learning brain for the grid

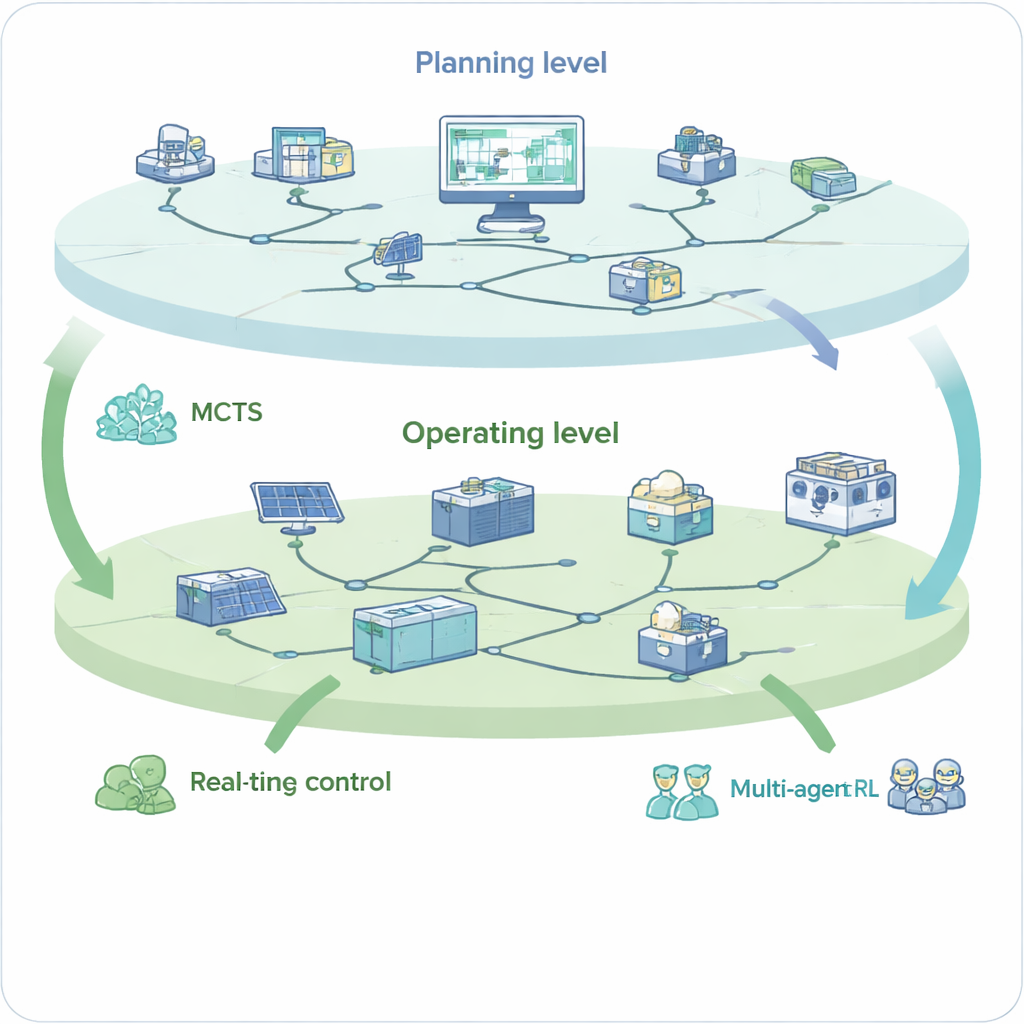

The authors propose an adaptive hierarchical learning framework that treats grid planning as a two-level game between long-term investment and short-term operation. At the upper level, a distribution system operator chooses where to place different resources and how large they should be. At the lower level, the owners of these resources decide how to run them in real time to meet electricity demand while respecting hidden network limits such as safe voltage ranges. Instead of solving one huge mathematical optimization problem, the upper level uses Monte Carlo Tree Search, a method that explores many possible investment combinations and gradually homes in on the most promising ones. The lower level uses multi-agent deep reinforcement learning, where virtual “agents” controlling batteries, gas turbines, and voltage devices learn good operating rules directly from data and grid responses. Together, these two layers form a closed loop: planning decisions shape operating conditions, and operating outcomes feed back into better future plans.
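For readers who want to see the upper-level idea in miniature, here is a rough Python sketch of Monte Carlo Tree Search choosing an investment plan. It is an illustration under stated assumptions, not the authors' implementation: the three decision stages, the candidate values, the cost formula in `evaluate_plan`, and the exploration constant are all hypothetical. In the framework described above, the score of a plan would come from the learned operating agents rather than from a fixed formula.

```python
import math
import random

# Hypothetical decision stages; every value here is illustrative only.
STAGES = [
    [5, 12, 27],        # candidate grid node for one battery
    [1.0, 2.0, 4.0],    # candidate battery size in MWh
    [0.5, 1.0, 2.0],    # candidate solar capacity in MW
]
NODE_PENALTY = {5: 50.0, 12: 0.0, 27: 20.0}  # made-up siting penalty


def evaluate_plan(plan):
    """Toy stand-in for the operating layer: returns the negative total cost."""
    node, mwh, mw = plan
    investment = 100.0 * mwh + 80.0 * mw
    operating = 600.0 / (mwh + mw) + NODE_PENALTY[node]
    return -(investment + operating)


class Node:
    def __init__(self, plan, parent=None):
        self.plan = plan          # tuple of choices made so far
        self.parent = parent
        self.children = []
        self.visits = 0
        self.total = 0.0          # sum of rescaled rewards seen below this node

    def untried(self):
        """Options of the next stage that have no child node yet."""
        if len(self.plan) == len(STAGES):
            return []
        tried = {child.plan[-1] for child in self.children}
        return [o for o in STAGES[len(self.plan)] if o not in tried]


def ucb(child, parent_visits, c=1.4):
    """Average reward plus an exploration bonus for rarely visited children."""
    bonus = c * math.sqrt(math.log(parent_visits) / child.visits)
    return child.total / child.visits + bonus


def search(iterations=300, seed=0):
    rng = random.Random(seed)
    root = Node(())
    for _ in range(iterations):
        node = root
        # 1. Selection: descend while every option of the node has been tried.
        while len(node.plan) < len(STAGES) and not node.untried():
            node = max(node.children, key=lambda ch: ucb(ch, node.visits))
        # 2. Expansion: add one untried option as a new child.
        options = node.untried()
        if options:
            child = Node(node.plan + (rng.choice(options),), parent=node)
            node.children.append(child)
            node = child
        # 3. Rollout: complete the partial plan with random choices.
        plan = node.plan
        for stage in STAGES[len(plan):]:
            plan += (rng.choice(stage),)
        # Rescale the cost to roughly [0, 1] so the bonus term is comparable.
        reward = 1.0 + evaluate_plan(plan) / 1000.0
        # 4. Backpropagation: update statistics up to the root.
        while node is not None:
            node.visits += 1
            node.total += reward
            node = node.parent
    # Read off the plan by following the most-visited child at each stage.
    node = root
    while node.children:
        node = max(node.children, key=lambda ch: ch.visits)
    return node.plan
```

Calling `search()` returns a tuple such as (node, battery size, solar capacity). The search spends most of its evaluations on branches that have scored well so far while still sampling the others occasionally, which is the "gradually narrowing" behavior described above.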

Learning from uncertainty instead of fearing it

By design, the new framework does not require full knowledge of the grid model or preset scenarios. The operating agents only see local measurements and limited information, just as real-world operators would. Over many simulated days, they interact with the network, try different actions, and receive rewards based on costs and service quality. This trial-and-error process teaches them how much solar power can be accepted, when to charge or discharge batteries, and how to adjust support devices to keep voltages within safe bounds. Meanwhile, the planning layer tests many investment options using the learned operating behaviors as a guide, gradually favoring combinations of device types, locations, and capacities that lead to low overall costs and stable operation. In effect, the system “discovers” the network’s hidden safety margins and the best ways to use local resources, without ever being handed a full engineering blueprint.
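The reward signal behind this trial-and-error learning can be pictured with a small Python sketch. It is only an assumption-laden illustration: the function name `step_reward`, the penalty weights, and the 0.95 to 1.05 per-unit voltage band are hypothetical choices, not values taken from the study. The point is that the simulated grid computes the score, so an agent only ever sees the resulting number and never the limits themselves.

```python
def step_reward(energy_cost, curtailed_mwh, voltages_pu,
                v_min=0.95, v_max=1.05,
                curtail_weight=50.0, voltage_weight=1000.0):
    """Hypothetical per-step reward computed by the simulated grid.

    energy_cost   : operating cost for this time step
    curtailed_mwh : solar or load energy that had to be cut
    voltages_pu   : node voltages in per unit
    """
    # Add up how far each voltage lies outside the allowed band (0 if inside).
    violation = sum(max(0.0, v_min - v, v - v_max) for v in voltages_pu)
    # Higher cost, more curtailment, or larger violations all lower the reward.
    return -(energy_cost
             + curtail_weight * curtailed_mwh
             + voltage_weight * violation)
```

With these made-up weights, a step that costs 10 and keeps all voltages in range scores -10, while the same step with one node at 1.10 per unit scores about -60. Repeated over many simulated days, penalties like this steer the agents away from actions that push voltages out of bounds.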

Better performance on today’s and tomorrow’s grids

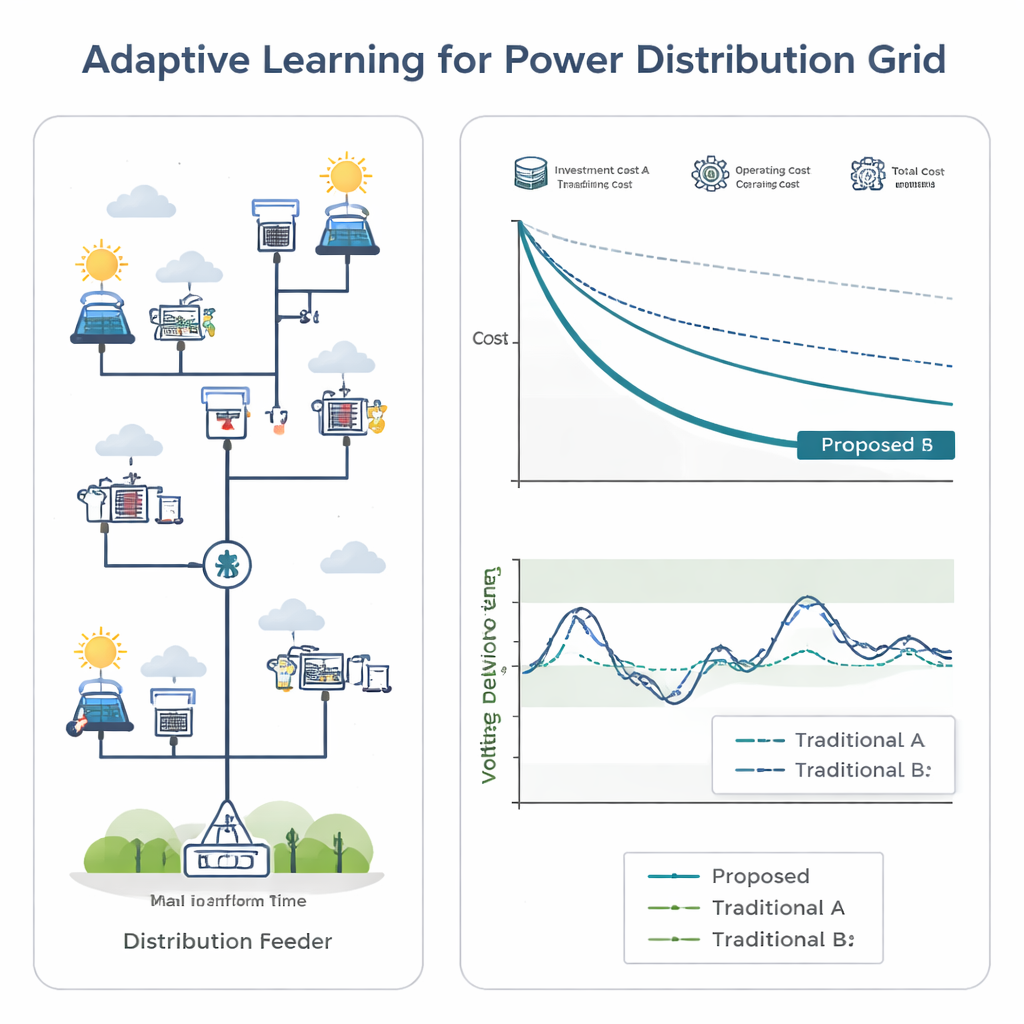

The researchers tested their approach on two distribution networks: a standard 33-node benchmark and a larger, realistic 152-node system. In both cases, the learning-based method reduced investment spending substantially compared with traditional optimization techniques, while also cutting how often customers or solar plants had to be curtailed. It kept voltages much closer to the desired range, with far fewer violations of safety limits, even when the test conditions differed from the data used for training. Importantly, once training was complete, the system could generate new planning and operating decisions in roughly an hour, making it practical for real-world replanning after events such as storms or rapid growth in electric vehicle charging.

What this means for everyday power users

From a layperson’s perspective, this work shows that the local grid can be planned more like a learning, adaptive organism than a static machine. Instead of betting on a small set of forecasted futures, utilities and energy service companies can let algorithms continuously learn from actual demand and renewable output, even when some details of the grid are hidden. The result is smarter placement and operation of solar panels, batteries, and other devices, which keeps the lights on, lowers unnecessary spending, and makes better use of clean energy. Over time, such learning-based planning could help neighborhoods integrate more renewables and electric vehicles without costly overbuilding or risks to reliability.

Citation: Xiang, Y., Li, L., Lu, Y. et al. Adaptive hierarchical learning for uncertainty-aware distributed energy resource planning. Commun Eng 5, 40 (2026). https://doi.org/10.1038/s44172-026-00591-x

Keywords: distributed energy resources, power distribution grid, reinforcement learning, energy planning, renewable integration