Clear Sky Science

Multiview deep learning improves detection of major cardiac conditions from echocardiography

Why this matters for heart health

Every day, heart ultrasound scans, known as echocardiograms, help doctors decide who needs urgent treatment and who can safely go home. But these scans capture the heart from many different angles, and neither a human nor a computer can examine every frame in perfect detail. This study shows how a new kind of artificial intelligence can watch several of these moving views at once, much as an expert cardiologist does, and in doing so becomes better at spotting important heart problems.

Seeing a 3D organ with 2D movies

The heart is a three-dimensional, constantly moving organ, yet a standard echocardiogram records it as dozens or even hundreds of flat, two-dimensional movies. Each view reveals different walls, chambers, and valves. A cardiologist mentally stitches these views together into a 3D picture before deciding whether the heart is pumping well, relaxing properly between beats, or leaking through its valves. Most existing AI tools, however, analyze only a single view, or even a single still frame, at a time, so they can miss problems that show up only from another angle.

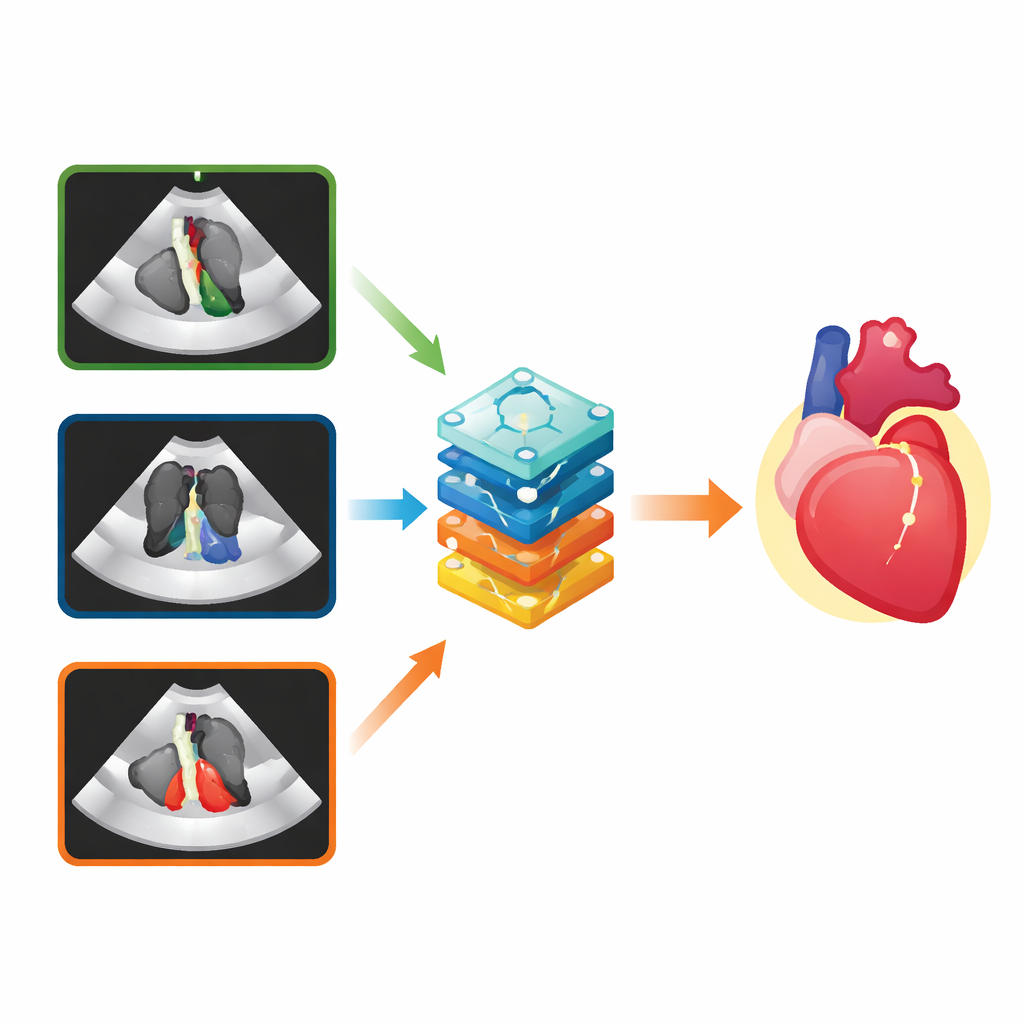

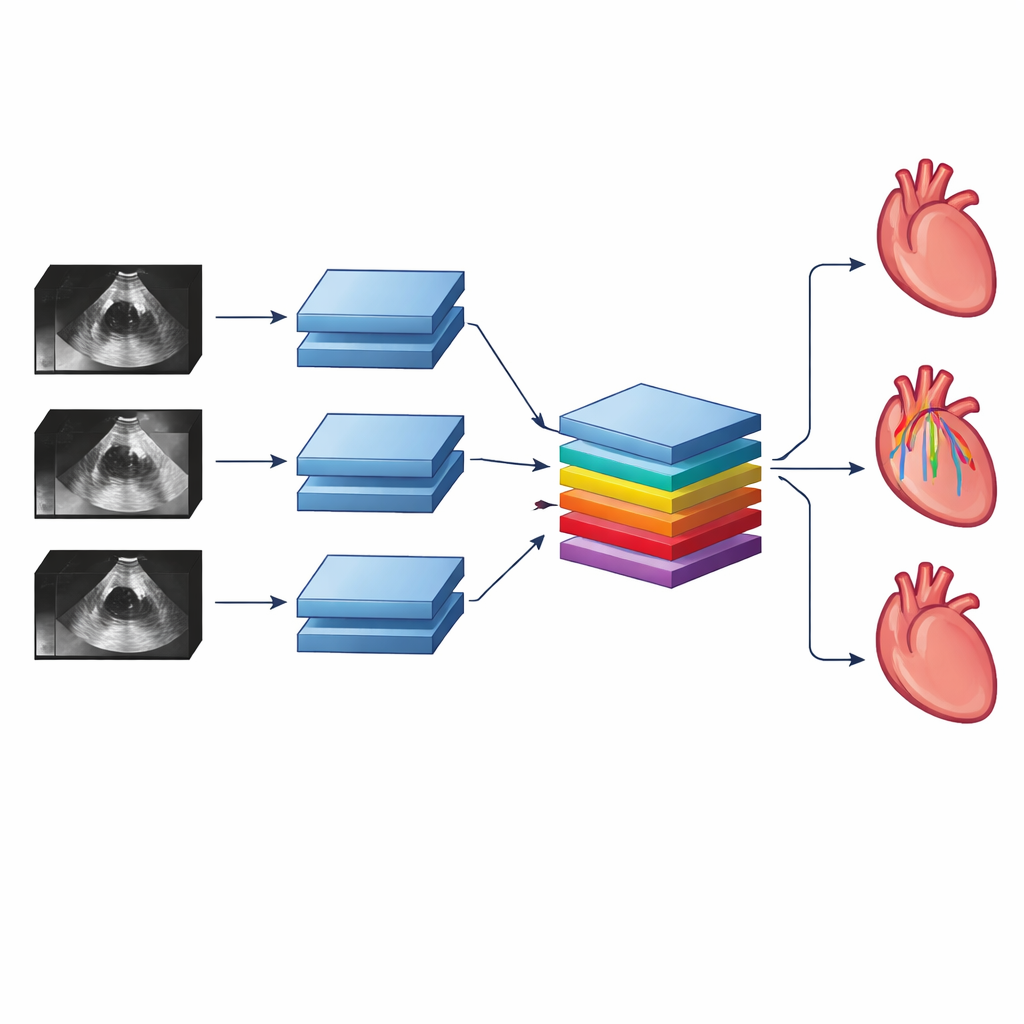

Teaching AI to watch from many angles

The researchers designed a “multiview” deep neural network that can take in three ultrasound videos from different angles at once. Inside the network, early layers watch each video over time, learning patterns of motion within that view. A special set of layers then combines information across views, allowing the system to notice, for example, how a heart chamber that looks normal in one view may look enlarged or weak in another. This mirrors how a human reader cross-checks clues across views, but the AI can do it for every frame of every video with consistent attention.
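To make the two stages concrete, here is a minimal toy sketch in Python, assuming a drastically simplified network: each view gets its own within-view step that scores every frame and summarizes how those scores change over time, and a cross-view step then combines all three views' summaries through a small hidden layer. All sizes, weights, and function names (`encode_view`, `multiview_predict`) are hypothetical; the random weights stand in for parameters a real network would learn, and the study's actual layer types are not described here.

```python
import math
import random

N_VIEWS = 3      # three videos per study, as in the article
N_FRAMES = 8     # hypothetical clip length
N_PIXELS = 16    # hypothetical, tiny flattened frame size
N_HIDDEN = 4     # hypothetical size of the cross-view layer

random.seed(0)
# Random numbers standing in for learned parameters (hypothetical).
view_weights = [[random.gauss(0, 0.1) for _ in range(N_PIXELS)] for _ in range(N_VIEWS)]
hidden_weights = [[random.gauss(0, 0.5) for _ in range(2 * N_VIEWS)] for _ in range(N_HIDDEN)]
output_weights = [random.gauss(0, 0.5) for _ in range(N_HIDDEN)]


def encode_view(video, weights):
    """Within-view stage: score each frame, then summarize over time."""
    scores = [sum(w * p for w, p in zip(weights, frame)) for frame in video]
    appearance = sum(scores) / len(scores)
    # Average frame-to-frame change: a crude stand-in for learned motion features.
    motion = sum(abs(b - a) for a, b in zip(scores, scores[1:])) / (len(scores) - 1)
    return [appearance, motion]


def multiview_predict(videos):
    """Cross-view stage: mix the features of all views jointly."""
    features = []
    for video, weights in zip(videos, view_weights):
        features.extend(encode_view(video, weights))
    hidden = [math.tanh(sum(w * f for w, f in zip(row, features))) for row in hidden_weights]
    logit = sum(w * h for w, h in zip(output_weights, hidden))
    return 1.0 / (1.0 + math.exp(-logit))  # probability that the study is abnormal


def random_video():
    return [[random.random() for _ in range(N_PIXELS)] for _ in range(N_FRAMES)]


study = [random_video() for _ in range(N_VIEWS)]
print(f"P(abnormal) = {multiview_predict(study):.3f}")
```

Because the cross-view stage sees every view's features together, its hidden units can respond to combinations (say, normal motion in one view alongside abnormal motion in another), which simply averaging three separate final answers cannot do.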

Putting the system to the test

To test whether this multiview approach truly helps, the team trained the network on tens of thousands of echocardiograms from adults treated at the University of California, San Francisco. They focused on three kinds of diagnosis. The first was any abnormality in the size or pumping function of the main heart chambers. The second was a subtler problem called diastolic dysfunction, in which the heart relaxes poorly between beats; doctors usually cannot judge this condition from standard grayscale (brightness-mode, or B-mode) videos alone. The third was significant leakage of the main heart valves, assessed with color Doppler ultrasound, which shows blood flow.

For each of these tasks, the scientists built comparison systems that followed the current norm: single-view AI models trained on just one video angle, and a simple “average” that combined the outputs of three separate single-view models. Across all three tasks, the multiview network was more accurate. A common metric, the area under the receiver operating characteristic curve (AUC), summarizes how well a test separates diseased from healthy cases on a scale from 0.5 (no better than chance) to 1.0 (perfect separation). The multiview network improved this score by about 0.06 to 0.09 over the best single-view model. Even the averaged models, which already outperformed any single view alone, still lagged behind the purpose-built multiview network.
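Both the metric and the “average” baseline are simple to compute. The Python sketch below uses the standard rank interpretation of the AUC: the probability that a randomly chosen diseased study receives a higher score than a randomly chosen healthy one, with ties counted as one half. The labels and scores are invented toy numbers, not data from the study, and the function names are hypothetical.

```python
def roc_auc(labels, scores):
    """AUC via pairwise comparison: P(diseased score > healthy score), ties count 1/2."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def average_ensemble(*model_scores):
    """The 'average' baseline: mean of the single-view models' outputs for each study."""
    return [sum(study) / len(study) for study in zip(*model_scores)]


# Toy data (invented): 1 = diseased, 0 = healthy; one score list per single-view model.
labels = [1, 1, 1, 0, 0, 0]
view_a = [0.9, 0.4, 0.7, 0.3, 0.5, 0.2]
view_b = [0.6, 0.8, 0.3, 0.4, 0.2, 0.5]
view_c = [0.5, 0.7, 0.8, 0.6, 0.1, 0.3]

for name, scores in [("view A", view_a), ("view B", view_b), ("view C", view_c),
                     ("average", average_ensemble(view_a, view_b, view_c))]:
    print(f"{name}: AUC = {roc_auc(labels, scores):.3f}")
```

On these toy numbers each single-view model misranks at least one diseased/healthy pair, while the averaged scores rank every diseased case above every healthy one. This echoes, but does not reproduce, the article's point that combining views helps.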

Checking performance in the real world

To make sure the system was not simply tuned to one hospital’s habits, the authors tested their trained models on echocardiograms from the Montreal Heart Institute in Canada, collected years later and interpreted with slightly different measurement rules. Despite these differences, the multiview network again performed strongly for chamber problems and valve leakage, with only a modest drop for diastolic dysfunction. The team also broke the results down by age, sex, and type of ultrasound machine and found that accuracy remained consistently high across these groups.

Peeking inside the black box

Using visualization techniques that highlight which image regions most influenced the AI’s decisions, the researchers confirmed that the network tended to focus on medically sensible structures: the pumping walls of the heart for chamber problems, the upper left chamber (the left atrium) for diastolic dysfunction, and valve tissue plus flow signals for valve leakage. Although such tools offer only a rough window into the system’s “thinking,” they help reassure clinicians that the AI is not basing its answers on stray artifacts or on text labels burned into the images.
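Which visualization method the authors used is not specified here. One widely used family, occlusion sensitivity, can be sketched in a few lines of Python: blank out one patch of the image at a time and record how much the model’s score falls, so that patches whose removal causes large drops mark the regions the model relies on. The toy model, image, and patch size below are hypothetical, and the sketch works on a single still frame rather than a video.

```python
def occlusion_map(image, model, patch=2, fill=0.0):
    """Occlusion sensitivity: score drop when each patch is blanked out."""
    h, w = len(image), len(image[0])
    base = model(image)
    heat = [[0.0] * w for _ in range(h)]
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            rows = range(top, min(top + patch, h))
            cols = range(left, min(left + patch, w))
            occluded = [row[:] for row in image]  # copy so the original is untouched
            for r in rows:
                for c in cols:
                    occluded[r][c] = fill
            drop = base - model(occluded)
            for r in rows:
                for c in cols:
                    heat[r][c] = drop
    return heat


def toy_model(image):
    """Hypothetical model that only 'looks at' the top-left 2x2 corner."""
    return sum(image[r][c] for r in range(2) for c in range(2))


image = [[1.0] * 4 for _ in range(4)]
for row in occlusion_map(image, toy_model):
    print(row)
```

Here only the top-left patch lights up, because it is the only region whose removal changes the toy model’s score; a real heat map over an echo frame is read the same way.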

What this means for future care

To a non-specialist, the key message is that teaching AI to watch the heart from several angles at once makes it better at telling normal from abnormal, and even enables new diagnoses that human readers usually cannot make from the same raw videos. The work suggests that future ultrasound systems could automatically flag scans with likely serious problems so that doctors can review them sooner, while giving more routine studies a lower priority. More broadly, the study offers a blueprint for using multiview AI with many kinds of medical imaging, potentially improving the speed and reliability of diagnoses across the body.

Citation: Barrios, J.P., Ansari, M.U., Olgin, J.E. et al. Multiview deep learning improves detection of major cardiac conditions from echocardiography. Nat Cardiovasc Res 5, 234–245 (2026). https://doi.org/10.1038/s44161-026-00786-7

Keywords: echocardiography, deep learning, cardiac imaging, valve disease, diastolic dysfunction