
Synthetic X‑ray‑driven tracking and control of miniature medical devices

Smaller Tools, Safer Surgeries

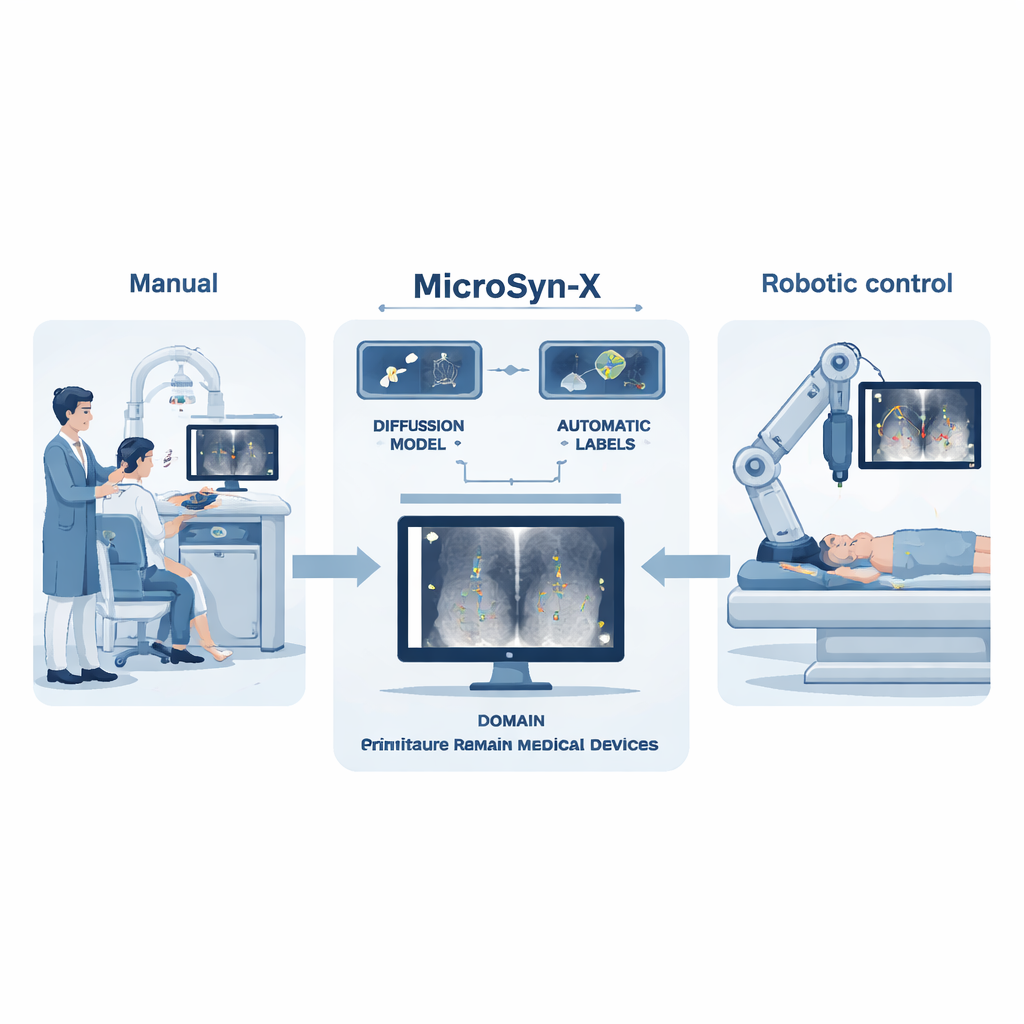

Surgeons are beginning to use tiny, wire-free medical tools that can crawl through blood vessels and other narrow passages to deliver drugs, open clogged arteries or measure vital signals from deep inside the body. These miniature medical devices promise gentler procedures and faster recovery—but only if doctors can see and steer them safely in real time. This paper introduces MicroSyn-X, a new way to train computers to track these tiny devices on X-ray images, paving the way for more precise and less invasive surgeries.

The Problem with Invisible Helpers

Today’s surgical imaging workhorse is X-ray fluoroscopy, which shows moving shadows of bones, vessels and instruments on a screen. Miniature devices, however, are so small and faint that they often blend into the noisy background. They can be hidden by bone, metal tools or contrast dyes, and soft or liquid robots constantly change shape as they move. Human operators must watch the screen carefully and adjust magnets or catheters by hand, a slow and tiring process that risks mistakes. Computer vision, software that can “see” on its own, could help, but training it usually requires huge collections of carefully labeled images. For these new devices, such datasets barely exist because collecting them is costly, time-consuming and constrained by patient privacy.

Teaching Computers with Fake, but Faithful, X-rays

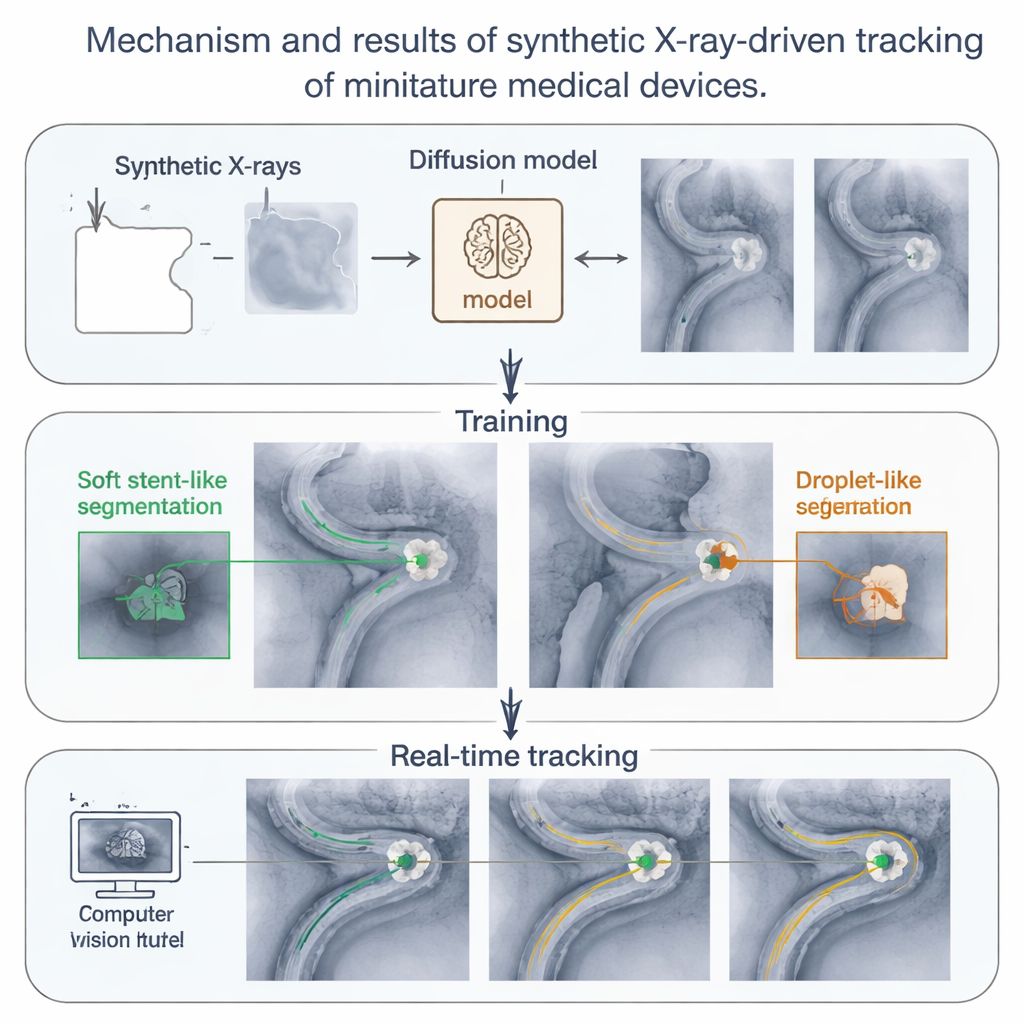

MicroSyn-X tackles this data bottleneck by creating its own highly realistic X-ray images, complete with built-in labels that tell a learning algorithm exactly where each device is. First, the system uses a modern image generator called a diffusion model to produce lifelike X-ray backgrounds of organs, bones and surgical tools, guided by simple prompts and rough masks that outline tissue, metal and fluid-filled channels. Then, images of the miniature devices—either photographed once on a clean background or drawn mathematically for liquid droplets—are digitally blended into these scenes so they look like they were truly inside the body. Because the computer knows exactly where each device was placed, it automatically generates precise outlines and bounding boxes, eliminating tedious human labeling.
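The blending-and-labeling step described above can be illustrated with a small sketch. This is not the authors' pipeline: the function name `composite_device`, the tiny list-based images and the multiplicative blending rule (each device pixel dims the background by a transmission factor, loosely mimicking X-ray attenuation) are all assumptions made for illustration. The point is that the mask and bounding box come for free, because the code itself decides where the device goes.

```python
def composite_device(background, sprite, top, left):
    """Blend a device sprite into a background; return image, mask, bbox.

    background: 2D list of floats in [0, 1] (1.0 = brightest pixel).
    sprite: 2D list of transmission values in [0, 1]; 1.0 means the pixel
            is transparent, lower values mean the device absorbs more.
    top, left: hypothetical placement of the sprite's upper-left corner.
    """
    height, width = len(background), len(background[0])
    image = [row[:] for row in background]          # copy, do not mutate input
    mask = [[0] * width for _ in range(height)]     # per-pixel label
    rows, cols = [], []
    for i, sprite_row in enumerate(sprite):
        for j, transmission in enumerate(sprite_row):
            y, x = top + i, left + j
            if not (0 <= y < height and 0 <= x < width):
                continue  # this part of the sprite falls outside the frame
            if transmission < 1.0:
                # Assumed blending rule: multiplicative attenuation.
                image[y][x] = background[y][x] * transmission
                mask[y][x] = 1
                rows.append(y)
                cols.append(x)
    # Bounding box as (x_min, y_min, x_max, y_max), or None if nothing drawn.
    bbox = (min(cols), min(rows), max(cols), max(rows)) if rows else None
    return image, mask, bbox


# Toy example: a uniform 6x8 background and a 2x3 device sprite.
background = [[0.8] * 8 for _ in range(6)]
sprite = [[1.0, 0.5, 1.0],
          [0.5, 0.5, 0.5]]
image, mask, bbox = composite_device(background, sprite, top=2, left=3)
print(bbox)  # (3, 2, 5, 3)
```

In a real setting the background would come from the image generator and the sprite from a photographed or mathematically drawn device; only the idea of deriving labels from the placement carries over.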

Preparing for the Real World with Controlled Chaos

A key innovation of MicroSyn-X is “domain randomization,” a deliberate injection of variety into the synthetic images. The system automatically changes organ shapes, device positions, brightness, noise level and even how much the devices are hidden by bones or tools. It also creates many different shapes for liquid robots, which can stretch, split into swarms and rejoin. By confronting the learning algorithm with thousands of slightly different situations—many of which are rare or impractical to capture in real patients—the authors train models that focus on the essential visual cues of the devices rather than on superficial patterns. Tests show that models trained purely on these synthetic images can match or outperform models trained on real X-rays, especially in hard cases with low contrast, high noise or heavy occlusion.
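As a rough illustration of domain randomization, the sketch below draws a fresh set of scene parameters for every synthetic image and applies the appearance-related ones to a toy image. The parameter names, their ranges and the helper functions `sample_scene` and `apply_appearance` are hypothetical; the article does not specify the actual values or implementation used in MicroSyn-X.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneParams:
    """One randomized scene description (all fields are illustrative)."""
    device_x: float            # normalized horizontal position, 0..1
    device_y: float            # normalized vertical position, 0..1
    brightness: float          # global intensity scale
    noise_sigma: float         # standard deviation of added Gaussian noise
    occlusion_fraction: float  # share of the device hidden by bone or tools
    n_droplets: int            # number of liquid-robot fragments


def sample_scene(rng):
    """Draw one random scene; the ranges are assumptions, not paper values."""
    return SceneParams(
        device_x=rng.uniform(0.0, 1.0),
        device_y=rng.uniform(0.0, 1.0),
        brightness=rng.uniform(0.6, 1.4),
        noise_sigma=rng.uniform(0.0, 0.15),
        occlusion_fraction=rng.uniform(0.0, 0.8),
        n_droplets=rng.randint(1, 8),
    )


def apply_appearance(image, params, rng):
    """Scale brightness, add Gaussian noise and clip pixels to [0, 1]."""
    return [
        [
            min(1.0, max(0.0, pixel * params.brightness
                         + rng.gauss(0.0, params.noise_sigma)))
            for pixel in row
        ]
        for row in image
    ]


# Generate a few differently randomized versions of the same toy image.
rng = random.Random(0)  # fixed seed so the run is repeatable
clean = [[0.5] * 4 for _ in range(3)]
variants = [apply_appearance(clean, sample_scene(rng), rng) for _ in range(5)]
```

Sampling every factor independently is the simplest design choice; it exposes the model to combinations, such as heavy occlusion together with high noise, that would be hard to collect on purpose from real procedures.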

From Computer Screen to Robot in the Operating Room

The researchers go beyond software demonstrations and link MicroSyn-X directly to a robotic system. A robotic arm holds a strong magnet near tissue while a C-arm fluoroscope captures X-ray images. The MicroSyn-X–trained vision model finds soft stent-shaped robots and liquid droplets in each frame, and a tracking algorithm stitches these detections into smooth paths, even when the devices briefly disappear behind bone. Using this feedback, the robot guides devices through twisting artificial vessels, real animal organs outside the body and live arteries in rabbits and rats. The system successfully steers multiple devices at once, follows them through branching vessels and monitors swarms of liquid droplets that split and merge under magnetic control—all in real time under challenging imaging conditions.
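To make the idea of stitching detections into a path more concrete, here is a minimal single-device sketch. It uses a constant-velocity prediction with a distance gate, which is a simple stand-in and not necessarily the tracking algorithm used in the paper. The function name `stitch_track` and the `gate` and `max_missed` parameters are assumptions. When the detector reports nothing, for instance while the device is behind bone, the track coasts along its last known velocity.

```python
import math


def stitch_track(detections, gate=5.0, max_missed=10):
    """Link per-frame detections into one path, coasting through gaps.

    detections: one entry per frame, either an (x, y) tuple or None when
                the detector found nothing in that frame.
    gate: largest accepted distance between prediction and detection.
    max_missed: frames the track may coast before it is dropped.
    Returns one (x, y) estimate, or None, per frame.
    """
    path = []
    position = None
    velocity = (0.0, 0.0)
    missed = 0
    for det in detections:
        if position is None:
            # No active track: start one at the first available detection.
            position, velocity, missed = det, (0.0, 0.0), 0
            path.append(position)
            continue
        predicted = (position[0] + velocity[0], position[1] + velocity[1])
        accepted = (
            det is not None
            and math.hypot(det[0] - predicted[0], det[1] - predicted[1]) <= gate
        )
        if accepted:
            # Update velocity from the last estimate to the new detection.
            velocity = (det[0] - position[0], det[1] - position[1])
            position, missed = det, 0
        else:
            # Missing or implausible detection: trust the prediction instead.
            position, missed = predicted, missed + 1
            if missed > max_missed:
                position = None  # give up until a new detection appears
        path.append(position)
    return path


# A device moving right at one pixel per frame, hidden for two frames.
frames = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), None, None, (5.0, 0.0)]
print(stitch_track(frames))
```

Handling several devices at once, or droplets that split and merge, would need an additional assignment step between tracks and detections, which this sketch leaves out.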

Toward Smarter, Less Invasive Care

In simple terms, this work shows that computers can learn to track tiny surgical tools safely inside the body by practicing on vast libraries of carefully crafted “fake” X-rays instead of scarce real ones. MicroSyn-X turns synthetic imaging into a practical engine for robotics: it creates realistic training data, teaches vision models and feeds their output into a magnetic navigation system that has already worked in living animals. As these methods mature and are tested in more complex cases, they could help surgeons perform delicate procedures with greater accuracy and less strain, bringing us closer to a future where fleets of miniature robots quietly improve treatment from the inside out.

Citation: Wang, C., Kang, W., Sun, M. et al. Synthetic X‑ray‑driven tracking and control of miniature medical devices. Nat Mach Intell 8, 276–291 (2026). https://doi.org/10.1038/s42256-026-01190-3

Keywords: miniature medical devices, X-ray imaging, synthetic data, medical robotics, computer vision