Clear Sky Science

Meta-designing quantum experiments with language models

Teaching Machines to Design Quantum Experiments

Quantum technologies promise ultra-secure communication, powerful new computers and exquisitely precise sensors, but turning the math of quantum physics into real laboratory setups is fiendishly hard. This paper shows how an AI language model can learn to write short pieces of computer code that, in turn, generate whole families of quantum experiments. Instead of giving scientists just one clever design, the AI uncovers general rules that humans can read, reuse and build on.

From One-Off Tricks to General Rules

Today, artificial intelligence is already used to search for quantum experiments that create a specific strange state of light or matter. These tools can outperform human intuition, but they usually spit out a single solution: one detailed setup for one particular goal. Understanding why that solution works, or how to scale it up, is left to the researcher and is often close to impossible. The authors argue that what scientists really need are not isolated recipes but reusable design principles—something closer to a cookbook than a one-line tip.

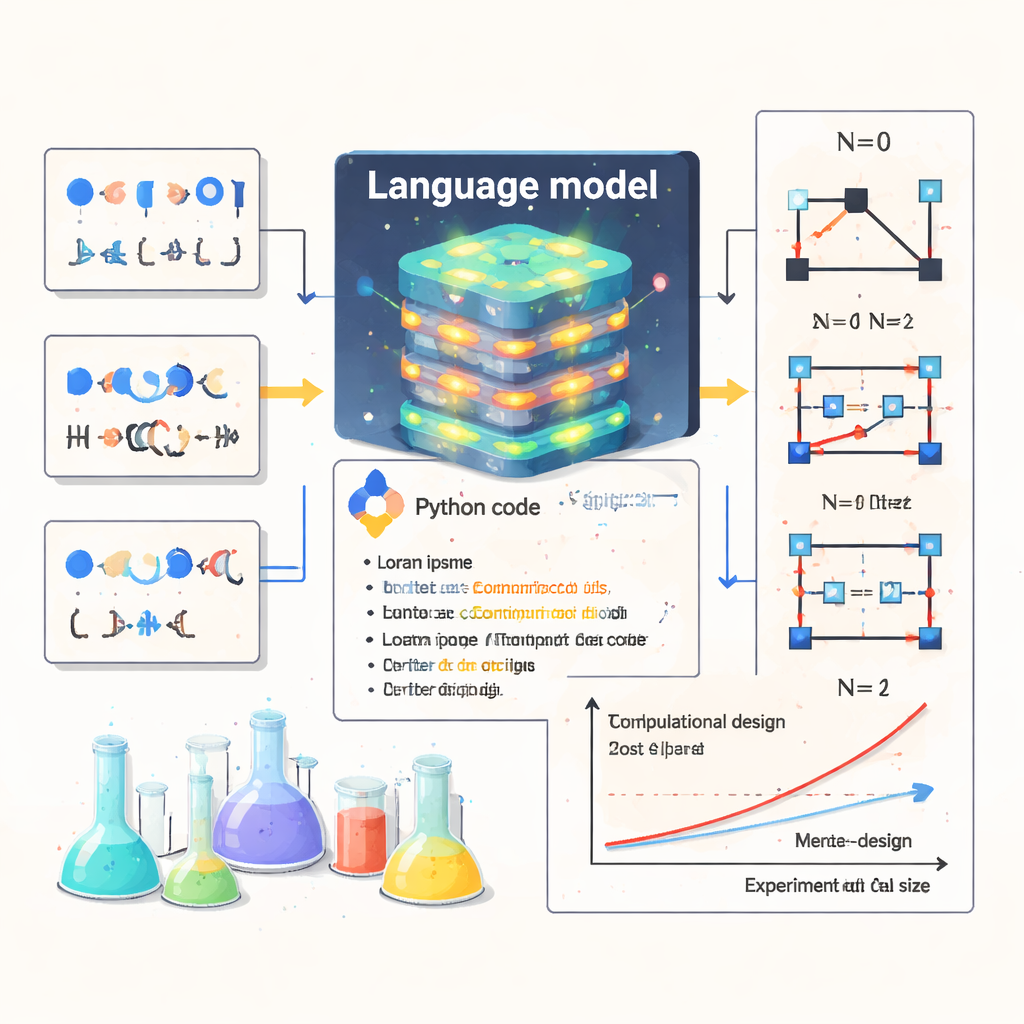

A New Idea: Meta-Design

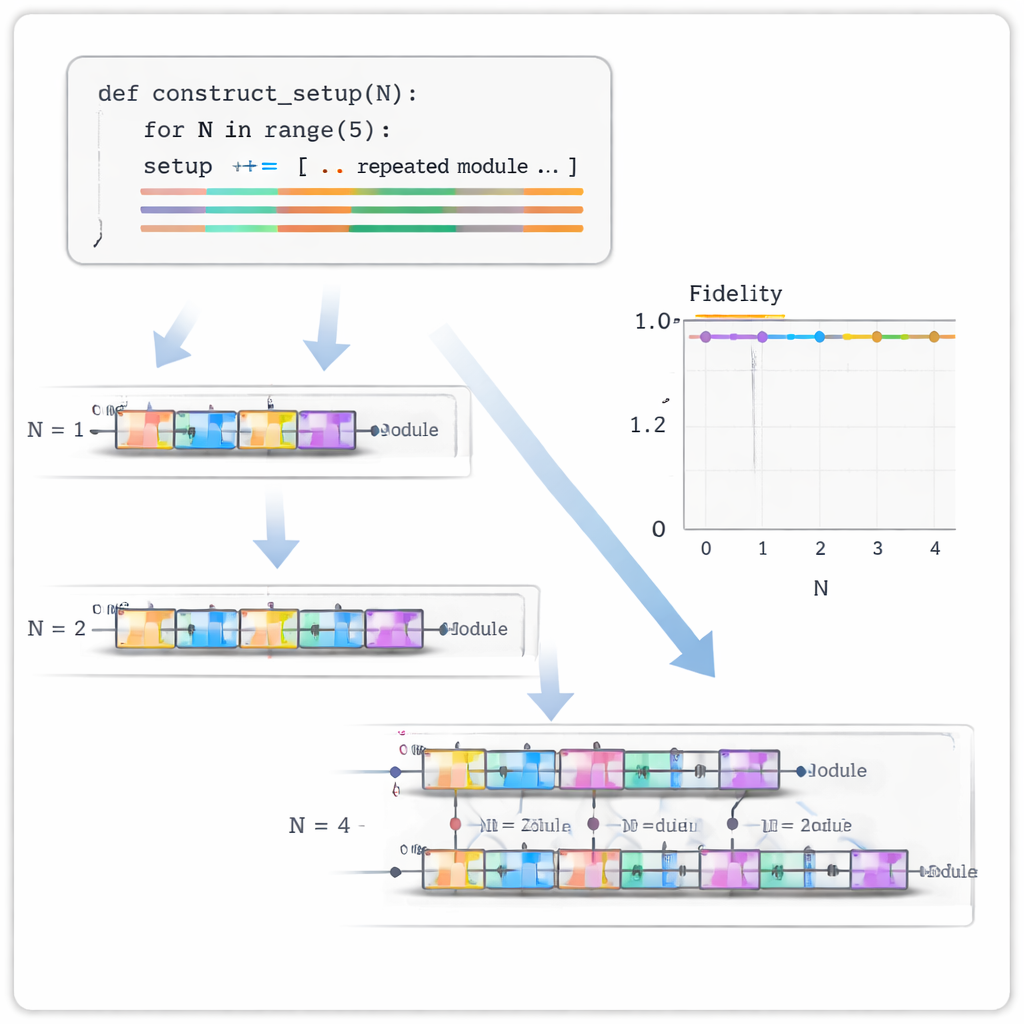

The team introduces what they call “meta-design.” Instead of asking the computer to design a single experiment, they ask a transformer-based language model to write Python code that itself generates many experiments. A typical example is a function called construct_setup(N). For any chosen size N, this function outputs the full blueprint of an experiment that should create the right quantum state for that size. In quantum optics, where researchers manipulate single particles of light, this means the code decides how to connect photon-pair sources, beam splitters and detectors to produce highly entangled states as the number of particles grows.
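To make the idea concrete, here is a minimal sketch of what such a function could look like. It is an illustration, not code from the paper: the tuple format `(photon_a, photon_b, mode_a, mode_b)`, the choice of `2 * N + 4` photons, and the ring layout are all assumptions made for this example. The ring is used because a ring of even length can be split into disjoint pairs in exactly two ways, which is the kind of structure associated with GHZ-type states.

```python
def construct_setup(N):
    """Hypothetical meta-design: a ring of photon-pair sources on 2N + 4 photons.

    Each entry (a, b, mode_a, mode_b) stands for one source that emits a pair
    into paths a and b with the given internal modes. This encoding is an
    assumption for illustration only.
    """
    num_photons = 2 * N + 4
    setup = []
    for i in range(num_photons):
        j = (i + 1) % num_photons  # neighbor on the ring
        mode = i % 2               # alternate mode 0 and mode 1 around the ring
        setup.append((i, j, mode, mode))
    return setup


if __name__ == "__main__":
    for N in range(3):
        print(N, construct_setup(N))
```

The point is that one short loop describes every size at once: `construct_setup(0)` lists four sources, `construct_setup(1)` lists six, and the same rule keeps going for any N.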

Training on Synthetic Quantum Worlds

To teach the model this skill, the authors exploited a useful asymmetry. Given a description of an experimental setup, it is relatively easy for a computer to calculate which quantum state will come out. The reverse problem, finding a setup that produces a desired state, is much harder. The researchers therefore randomly generated millions of short Python programs, ran each one for a few small sizes (N = 0, 1, 2), and computed the three resulting quantum states. Each training example paired "three example states" with "the code that produced all of them." The language model learned to read those three states as a pattern and to predict the underlying code, which should keep working as N increases.
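The data-generation loop described above can be sketched in a few lines. This is a toy version under stated assumptions, not the authors' pipeline: the program template in `random_program`, the source format `(a, b, mode_a, mode_b)`, and the simplified forward model in `simulate` (every way of pairing up all photons contributes one term with amplitude 1) are hypothetical stand-ins chosen to keep the example small.

```python
import random
from collections import Counter


def perfect_matchings(edges, vertices):
    """Yield every set of edges that covers each vertex exactly once."""
    if not vertices:
        yield []
        return
    v = min(vertices)
    for edge in edges:
        a, b = edge[0], edge[1]
        if v in (a, b) and a != b and a in vertices and b in vertices:
            for rest in perfect_matchings(edges, vertices - {a, b}):
                yield [edge] + rest


def simulate(edges):
    """Easy direction: setup -> unnormalized state as {mode pattern: amplitude}."""
    vertices = frozenset(v for e in edges for v in e[:2])
    state = Counter()
    for matching in perfect_matchings(edges, vertices):
        modes = {}
        for a, b, mode_a, mode_b in matching:
            modes[a], modes[b] = mode_a, mode_b
        state[tuple(modes[v] for v in sorted(vertices))] += 1
    return dict(state)


def random_program(rng):
    """Sample the source of a toy construct_setup(N) from a tiny template."""
    step = rng.choice([1, 3])
    modes = rng.choice([1, 2])
    return (
        "def construct_setup(N):\n"
        "    n = 2 * N + 4\n"
        f"    return [(i, (i + {step}) % n, i % {modes}, i % {modes}) for i in range(n)]\n"
    )


def run_program(source, N):
    """Execute the generated source and call its construct_setup(N)."""
    namespace = {}
    exec(source, namespace)
    return namespace["construct_setup"](N)


def make_training_example(rng):
    """Pair the three states for N = 0, 1, 2 with the code that produced them."""
    source = random_program(rng)
    states = [simulate(run_program(source, N)) for N in (0, 1, 2)]
    return {"states": states, "code": source}


if __name__ == "__main__":
    example = make_training_example(random.Random(0))
    print(example["code"])
    print(example["states"][0])
```

Only the cheap forward calculation is ever run here. The hard inverse step, going from states back to code, is what the language model is trained to do.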

Discovering and Rediscovering Quantum Patterns

Once trained, the model was tested on 20 families of quantum states that physicists care about, many drawn from earlier work on automated quantum experiment design. For each family, the model saw only the first three states and was asked to generate candidate programs. The resulting programs were executed and scored on how closely their output matched the target states, not only for the three sizes the model had seen but also for larger ones. In six of the 20 cases, the AI produced programs that were exactly right and continued to work as systems grew, including two classes for which no general construction was previously known. One relates to spin systems in which neighboring "spin-up" particles never sit side by side, inspired by Rydberg-atom experiments; the other reproduces the ground states of the celebrated Majumdar–Ghosh model from condensed-matter physics. The model also rediscovered known constructions for famous states such as GHZ and Bell states.
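The check described above, running a candidate and comparing its output with the target at sizes beyond the three it was shown, can be sketched as follows. The state representation (a dictionary from basis labels to real amplitudes), the function names, and the deliberately flawed `overfit_candidate` are assumptions for illustration; the paper's own scoring code may differ.

```python
def fidelity(state_a, state_b):
    """|<a|b>|^2 / (<a|a><b|b>) for states stored as {basis label: real amplitude}."""
    overlap = sum(amp * state_b.get(term, 0.0) for term, amp in state_a.items())
    norm_a = sum(amp ** 2 for amp in state_a.values())
    norm_b = sum(amp ** 2 for amp in state_b.values())
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return overlap ** 2 / (norm_a * norm_b)


def generalizes(candidate, target, sizes, tol=1e-9):
    """True if the candidate matches the target at every size in `sizes`."""
    return all(abs(fidelity(candidate(N), target(N)) - 1.0) < tol for N in sizes)


def ghz_target(N):
    """GHZ-type target on 2N + 4 particles (the size convention is an assumption)."""
    n = 2 * N + 4
    return {(0,) * n: 1.0, (1,) * n: 1.0}


def overfit_candidate(N):
    """Hypothetical flawed program: correct for N = 0, 1, 2, then stops growing."""
    n = min(2 * N + 4, 8)
    return {(0,) * n: 1.0, (1,) * n: 1.0}


if __name__ == "__main__":
    print(generalizes(overfit_candidate, ghz_target, range(3)))  # seen sizes only
    print(generalizes(overfit_candidate, ghz_target, range(5)))  # includes unseen sizes
```

A candidate that merely memorizes the three examples passes the first check and fails the second, which is why testing at unseen sizes matters.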

Beyond Photons: Circuits and Graphs

The authors further showed that the same meta-design strategy applies outside optical experiments. They trained similar models to write quantum circuit code (sequences of standard gates acting on qubits) that generates target states on quantum computers. They also used the approach to generate simple rules for building graph states, in which qubits arranged in lines, rings or star shapes serve as resources for a style of quantum computing based on measurements alone. In both cases, the AI produced short, readable programs that correctly scaled from small to larger systems.
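As an illustration of how compact such rules can be, the sketch below writes out line, ring and star graphs as one-line functions and turns any of them into a gate list using the standard graph-state recipe: a Hadamard gate on every qubit, then a controlled-Z gate for every edge. The function names and the gate tuple format are assumptions for this example, not the output of the trained model.

```python
def line_edges(n):
    """Qubits 0..n-1 connected in a chain."""
    return [(i, i + 1) for i in range(n - 1)]


def ring_edges(n):
    """A chain closed into a loop (meaningful for n >= 3)."""
    return line_edges(n) + [(n - 1, 0)]


def star_edges(n):
    """Qubit 0 connected to every other qubit."""
    return [(0, i) for i in range(1, n)]


def graph_state_circuit(n, edges):
    """Standard recipe: Hadamard on every qubit, then CZ on every edge."""
    gates = [("H", q) for q in range(n)]
    gates += [("CZ", a, b) for a, b in edges]
    return gates


if __name__ == "__main__":
    for n in (3, 4, 5):
        print(n, graph_state_circuit(n, star_edges(n)))
```

Each rule is a single expression in `n`, so the same few lines describe the three-qubit case and the hundred-qubit case alike.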

Why This Matters for Science

For non-specialists, the key message is that this approach turns AI from a black box that merely suggests answers into a tool that exposes underlying scientific structure. By writing human-readable code that generalizes, the language model reveals patterns in families of quantum states and experiments that researchers can inspect, test and adapt. This not only cuts the staggering computing cost of designing ever-larger experiments one by one, but also opens a path toward using language models as partners in scientific discovery across many fields, from new microscopy setups to advanced materials, where what we really seek are simple rules hidden inside complex phenomena.

Citation: Arlt, S., Duan, H., Li, F. et al. Meta-designing quantum experiments with language models. Nat Mach Intell 8, 148–157 (2026). https://doi.org/10.1038/s42256-025-01153-0

Keywords: quantum experiment design, language models, photonic quantum states, program synthesis, scientific discovery