Clear Sky Science

Simulation-based inference for precision neutrino physics through neural Monte Carlo tuning

Fine-Tuning the Eyes of Neutrino Telescopes

Future neutrino experiments aim to answer big questions about the universe, such as how the neutrino masses are ordered and how stars explode. To do that, their giant detectors must measure energy with exquisite precision, far beyond what simple textbook formulas can deliver. This paper shows how modern machine-learning tools can help tune and validate the complex simulations that link what happens inside a detector to the flashes of light we actually record.

Why Understanding Detector Response Is So Hard

In experiments like the Jiangmen Underground Neutrino Observatory (JUNO) in China, neutrinos interact in a vast tank of liquid scintillator, a liquid that emits light when charged particles pass through it. That light is collected by thousands of photomultiplier tubes, which convert it into tiny electrical pulses counted as “photo-electrons.” The challenge is to turn those counts back into the original particle energy. This relationship is not a neat straight line: it depends on the geometry of the detector, the behavior of the liquid, and several intertwined physical effects. Traditional approaches relied on hand-tuning simulation parameters until the simulated spectra looked roughly like the calibration data, a method that becomes unmanageable at the precision modern experiments require.

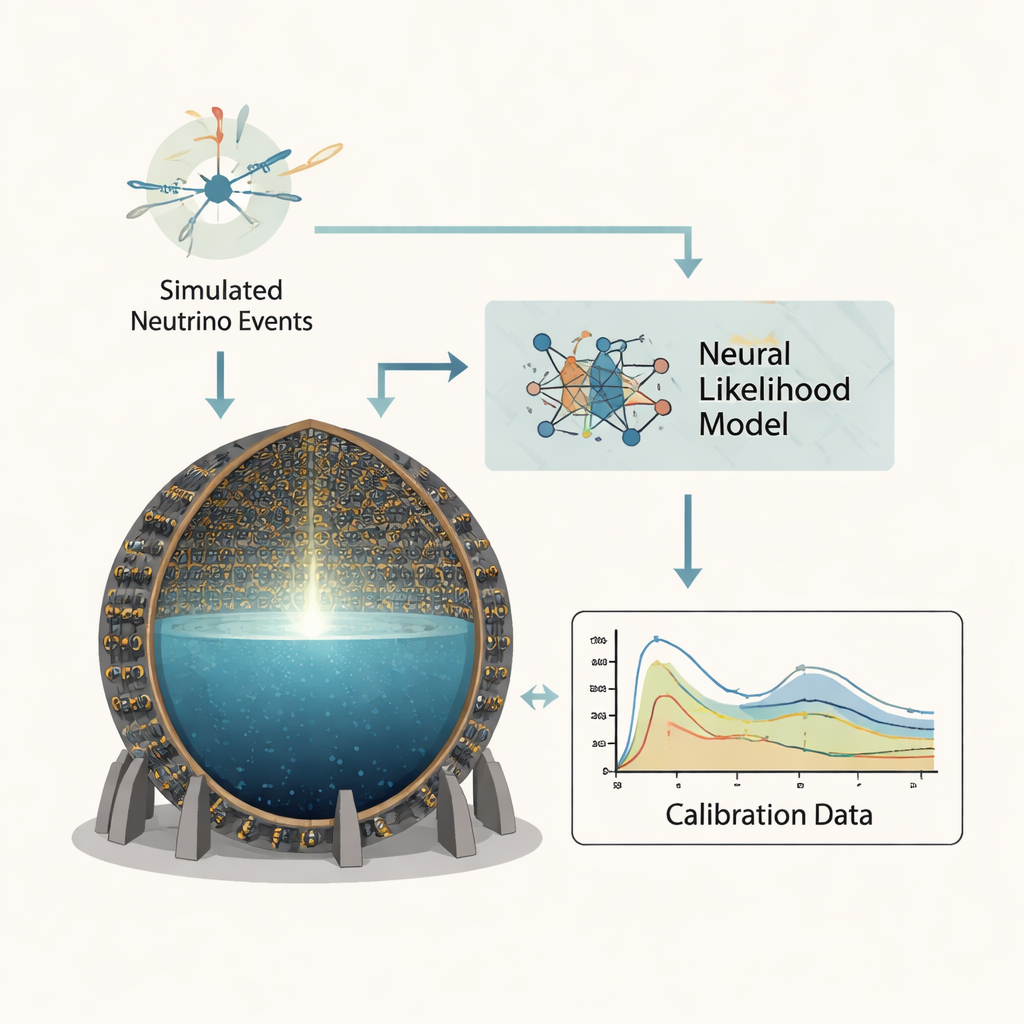

Teaching Neural Networks to Emulate the Simulator

The authors adopt a strategy known as simulation-based inference, where instead of trying to write down an exact mathematical formula for the detector response, they let simulations and neural networks do the heavy lifting. They focus on three key parameters that govern how JUNO converts true energy into detected light: a coefficient describing how light production is “quenched” at high ionization density, an overall light yield setting the average brightness, and a factor controlling the amount of Cherenkov light. Using JUNO’s official Monte Carlo software, they generate about a billion simulated calibration events from five radioactive sources placed at the detector center, each event summarized by a single number: the total collected light. This forms the training ground for neural networks that learn how likely any given light signal is, for any choice of the three parameters.
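To make the roles of the three parameters concrete, here is a minimal toy sketch in Python. It assumes the quenching follows Birks' law, the form commonly used for scintillators; the article does not spell out the exact model inside JUNO's simulation. All function names, units, and numbers below are illustrative assumptions, not taken from the paper or from JUNO software.

```python
def birks_light_per_length(dedx, light_yield, kb):
    """Scintillation photons per cm under Birks' law (assumed model).

    dedx        : energy loss per unit path length, in MeV/cm
    light_yield : photons per MeV without quenching (hypothetical value)
    kb          : Birks' coefficient in cm/MeV; kb = 0 means no quenching
    """
    return light_yield * dedx / (1.0 + kb * dedx)


def expected_light(dedx, path_cm, light_yield, kb,
                   cherenkov_factor, cherenkov_photons):
    """Toy total light: quenched scintillation plus scaled Cherenkov light.

    cherenkov_photons stands in for whatever the full simulation would
    produce; cherenkov_factor rescales it, as the third tuned parameter does.
    """
    scintillation = birks_light_per_length(dedx, light_yield, kb) * path_cm
    return scintillation + cherenkov_factor * cherenkov_photons


# Denser ionization (larger dE/dx) yields less light per MeV deposited.
sparse = birks_light_per_length(2.0, 1000.0, 0.01) / 2.0
dense = birks_light_per_length(20.0, 1000.0, 0.01) / 20.0
print(sparse > dense)
```

The three parameters are hard to separate by eye because each one changes the total light: the light yield scales the scintillation term, the Birks coefficient bends it, and the Cherenkov factor adds a separate contribution.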

Two Complementary Machine-Learning Lenses

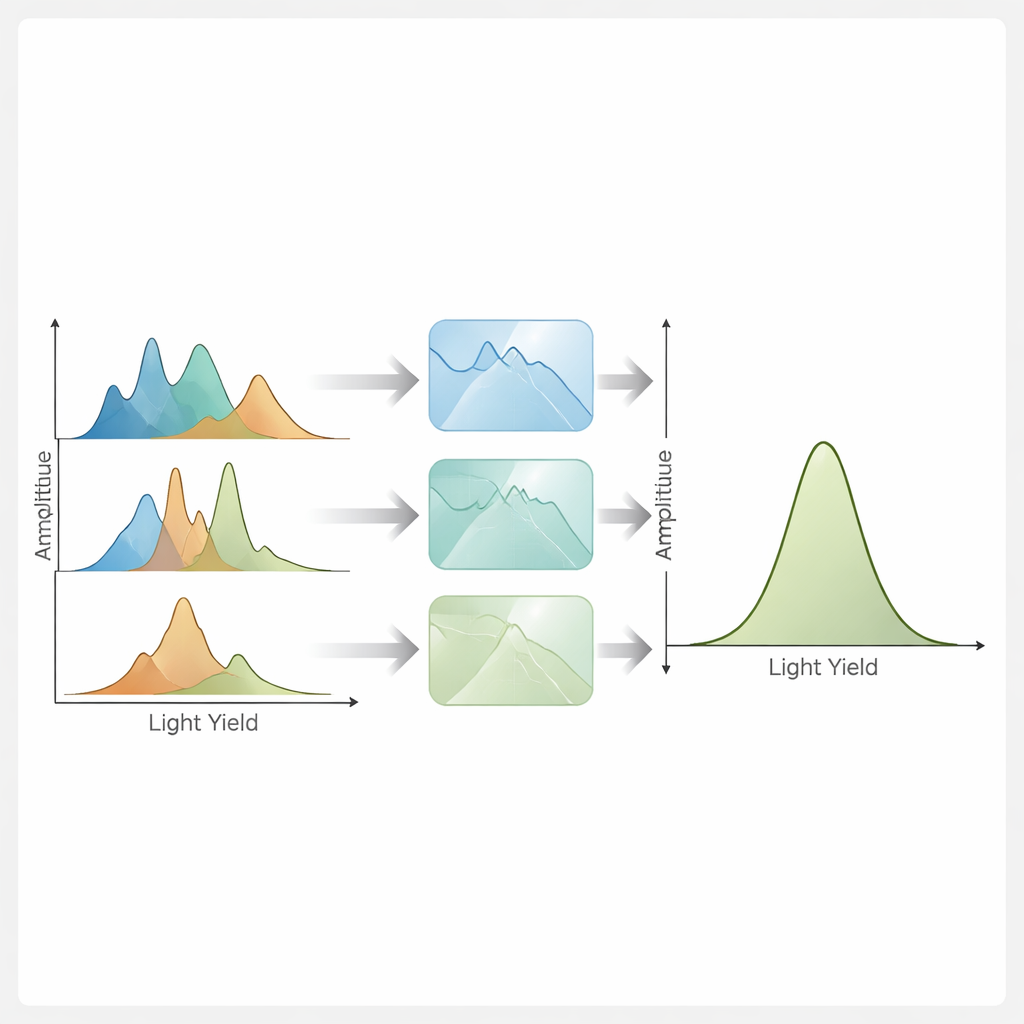

The team develops two complementary neural “likelihood estimators” that approximate the probability of observing a given light signal for specific detector settings. The first, called a Transformer Encoder Density Estimator, uses a transformer architecture (the same family of models behind many language tools) to directly predict a finely binned histogram of the light spectrum for each combination of parameters and source. This naturally supports traditional binned statistical analyses. The second, called a Normalizing Flows Density Estimator, uses a chain of invertible transformations to map the complicated, multi-peaked spectra into a simple bell-shaped distribution. Because each transformation is invertible and its effect on probability density can be computed exactly, the method can evaluate the model's exact probability density for each unbinned event, enabling analyses that use the full information in the data.
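The idea behind the normalizing-flow estimator can be sketched with the change-of-variables rule: if an invertible map sends a measured value x to a standard normal variable z, then log p(x) = log N(z) + log |dz/dx|. The toy below chains two fixed transformations (an affine shift and scale, then `asinh`) chosen purely for illustration. In the real estimator the transformations are learned neural networks that also depend on the detector parameters, which this sketch does not attempt.

```python
import math


def standard_normal_logpdf(z):
    """Log density of the standard normal (the 'bell-shaped' base)."""
    return -0.5 * (z * z + math.log(2.0 * math.pi))


def flow_logpdf(x, shift, scale):
    """Exact log density of x under a toy two-step flow (scale > 0)."""
    y = (x - shift) / scale      # step 1: affine map, dy/dx = 1/scale
    z = math.asinh(y)            # step 2: dz/dy = 1/sqrt(1 + y^2)
    # Sum of the log-derivatives of both steps (log |dz/dx|).
    log_det = -math.log(scale) - 0.5 * math.log1p(y * y)
    return standard_normal_logpdf(z) + log_det


def unbinned_log_likelihood(events, shift, scale):
    """Sum of per-event log densities: no histogram is needed."""
    return sum(flow_logpdf(x, shift, scale) for x in events)


# Hypothetical events, just to show the call.
print(unbinned_log_likelihood([0.5, 1.0, 3.2], shift=1.0, scale=2.0))
```

Because every step has a known derivative, the density integrates to one by construction, and each event contributes its own term to the likelihood.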

Checking Accuracy, Precision, and Robustness

To show that these neural tools are trustworthy, the authors subject them to rigorous tests. First, they check whether the models can reproduce the simulated spectra across thousands of combinations of the three parameters, using several statistical distances that compare predicted and “true” distributions. Both methods track sharp peaks and subtle spectral features closely, with discrepancies at the level of a few parts in a thousand. Next, they plug the learned likelihoods into established statistical engines (Bayesian nested sampling, Markov chain Monte Carlo, and classical minimization) to recover the original simulation parameters from mock datasets. Across a wide range of parameter values and event statistics, the recovered parameters are unbiased and their quoted uncertainties match the actual spread of results. Uncertainties shrink with more data as expected from basic counting statistics, roughly in proportion to one over the square root of the number of events, and the methods faithfully capture strong correlations among the parameters.
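The parameter-recovery test can be illustrated with a deliberately simple stand-in. In the sketch below, `toy_emulator` is a hypothetical replacement for a trained binned estimator: it returns bin probabilities for a single Gaussian peak whose position scales with one parameter. The "mock data" are the expected counts at a chosen true value, and a grid scan of a binned Poisson likelihood plays the role of the minimizer. None of the numbers come from the paper.

```python
import math

# 100 bins of width 10 photo-electrons (PE); the range is an arbitrary choice.
BIN_EDGES = [float(edge) for edge in range(1000, 2001, 10)]


def normal_cdf(x, mean, sigma):
    return 0.5 * (1.0 + math.erf((x - mean) / (sigma * math.sqrt(2.0))))


def toy_emulator(pe_per_mev, energy_mev=1.0):
    """Hypothetical emulator: bin probabilities for one calibration peak."""
    mean = pe_per_mev * energy_mev
    sigma = math.sqrt(mean)  # width from counting statistics only
    return [normal_cdf(hi, mean, sigma) - normal_cdf(lo, mean, sigma)
            for lo, hi in zip(BIN_EDGES[:-1], BIN_EDGES[1:])]


def poisson_nll(counts, probs, n_events):
    """Binned Poisson negative log-likelihood (constant terms dropped)."""
    nll = 0.0
    for n, p in zip(counts, probs):
        mu = max(n_events * p, 1e-12)  # floor avoids log(0) in empty bins
        nll += mu - n * math.log(mu)
    return nll


def fit_light_yield(counts, n_events, candidates):
    """Grid scan: return the candidate with the smallest NLL."""
    return min(candidates,
               key=lambda y: poisson_nll(counts, toy_emulator(y), n_events))


TRUE_YIELD = 1500.0   # hypothetical "true" setting, in PE per MeV
N_EVENTS = 10_000
# Mock data: expected counts at the true setting (no statistical noise).
asimov_counts = [N_EVENTS * p for p in toy_emulator(TRUE_YIELD)]
candidates = [1400.0 + 5.0 * i for i in range(41)]
best = fit_light_yield(asimov_counts, N_EVENTS, candidates)
print(best)
```

In this toy, the likelihood penalty for moving away from the best value grows in proportion to the number of events, which is why the uncertainty on the fitted parameter shrinks as one over the square root of the event count.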

From Months of Computing to Seconds

One striking outcome is the computational speed-up. Running full detector simulations with enough events to characterize each parameter point can take many hours per setting on a conventional processor. Once trained, however, the transformer model can generate a predicted spectrum in a few milliseconds, and the normalizing-flow model can evaluate probabilities for tens of thousands of events in well under a tenth of a second. This makes it realistic to scan large parameter spaces and to quantify systematic uncertainties that would otherwise be prohibitively expensive, opening the door to more detailed and reliable detector calibrations.

What This Means for Future Neutrino Experiments

For non-specialists, the core message is that this work turns complicated, slow detector simulations into fast, accurate surrogates without sacrificing physical meaning. The three tuned parameters still correspond directly to real properties of the detector and its liquid, so the results remain interpretable to physicists. The study shows that both neural approaches can pin down these parameters with extremely small biases and errors limited mainly by how much data is available. As upcoming experiments such as JUNO, DUNE, and Hyper-Kamiokande push toward sub-percent precision in neutrino measurements, methods like these will be essential to ensure that what we infer about the universe is not limited by how well we understand our detectors.

Citation: Gavrikov, A., Serafini, A., Dolzhikov, D. et al. Simulation-based inference for precision neutrino physics through neural Monte Carlo tuning. Commun Phys 9, 63 (2026). https://doi.org/10.1038/s42005-026-02499-6

Keywords: neutrino detectors, machine learning, Monte Carlo tuning, normalizing flows, simulation-based inference