Clear Sky Science

Linguistic pitch is hierarchically encoded in the right ventral stream

How Your Brain Hears Questions and Statements

When you hear “You’re going?” and then “You’re going.”, you instantly recognize one as a question and the other as a statement, even though the words are identical. What changes is the melody of the voice, especially the rise and fall of pitch. This paper uncovers how the brain turns those pitch patterns into clear, meaningful categories like “question” or “statement,” and shows that this process unfolds in a precise sequence across the right side of the brain.

The Voice Melody That Carries Meaning

Spoken language is more than just consonants and vowels. A key ingredient is the fundamental frequency of the voice, often called f0, which we experience as pitch. The height of f0 helps us tell speakers apart (for example, male versus female voices), while the way f0 rises or falls across a sentence signals sentence type. A final rise in pitch tends to mark a yes–no question; a final fall marks a statement. In this study, volunteers listened to short French words whose pitch patterns had been carefully morphed along a continuum from clearly “statement-like” to clearly “question-like,” while their brain activity was measured using magnetoencephalography (MEG), a technique that tracks fast-changing brain signals.
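To make the idea of a pitch continuum concrete, here is a minimal sketch that blends a falling contour into a rising one in equal steps. The contour values, the number of steps, and the simple linear blending are all assumptions chosen for illustration; the summary does not describe the morphing procedure the authors actually used.

```python
import numpy as np

def morph_contours(statement_f0, question_f0, n_steps):
    """Linearly interpolate between two f0 contours (in Hz), one row per morph step."""
    weights = np.linspace(0.0, 1.0, n_steps)
    return np.array([(1.0 - w) * statement_f0 + w * question_f0 for w in weights])

# Hypothetical contours sampled at 50 time points; the Hz values are illustrative only.
t = np.linspace(0.0, 1.0, 50)
statement = 200.0 - 40.0 * t   # pitch falls toward the end
question = 200.0 + 60.0 * t    # pitch rises toward the end

continuum = morph_contours(statement, question, n_steps=7)

# Net pitch change (end minus start) for each step of the continuum.
net_change = continuum[:, -1] - continuum[:, 0]
```

Each row of `continuum` is one stimulus, and `net_change` moves steadily from a fall to a rise across the rows.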

From Raw Sound to Stable Categories

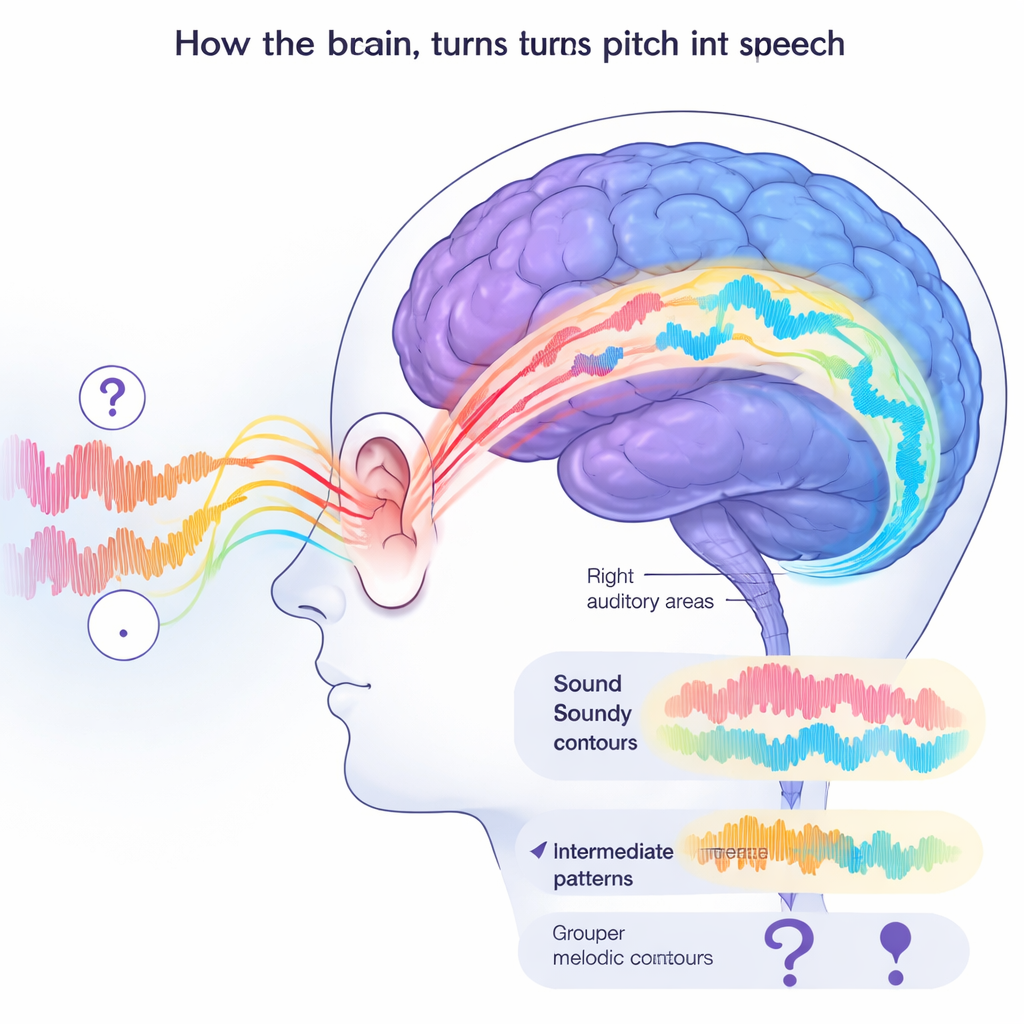

The researchers asked whether the brain treats these pitch cues as raw acoustic details or as stable, higher-level categories. In their responses, listeners acted as if they were hearing two clear-cut sentence types: they reliably labeled sounds as questions or statements, largely ignoring differences in exact pitch shape and in speaker voice. In the brain, early activity patterns still contained rich information about both who was speaking and how the pitch was changing. But as time went on, later activity patterns became less sensitive to these surface details and instead reflected just the sentence type, mirroring the “all-or-nothing” way people ultimately judged the sounds.
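One common way to picture “all-or-nothing” labeling is a logistic curve that gives the probability of answering “question” at each step of a sound continuum. The sketch below compares a shallow curve with a steep one. The function name `p_question`, the seven steps, and the boundary and slope values are hypothetical and are not taken from the study.

```python
import numpy as np

def p_question(step, boundary, slope):
    """Logistic probability of a 'question' response at a given continuum step."""
    return 1.0 / (1.0 + np.exp(-slope * (step - boundary)))

steps = np.arange(1, 8)  # seven hypothetical morph steps

# A shallow slope means responses drift gradually with the acoustics.
gradual = p_question(steps, boundary=4.0, slope=0.5)

# A steep slope means responses flip abruptly at the category boundary.
categorical = p_question(steps, boundary=4.0, slope=3.0)
```

With the steep slope, responses sit near 0 or 1 on either side of the boundary, which is the pattern the text describes as categorical.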

A Right-Sided Pathway for Linguistic Pitch

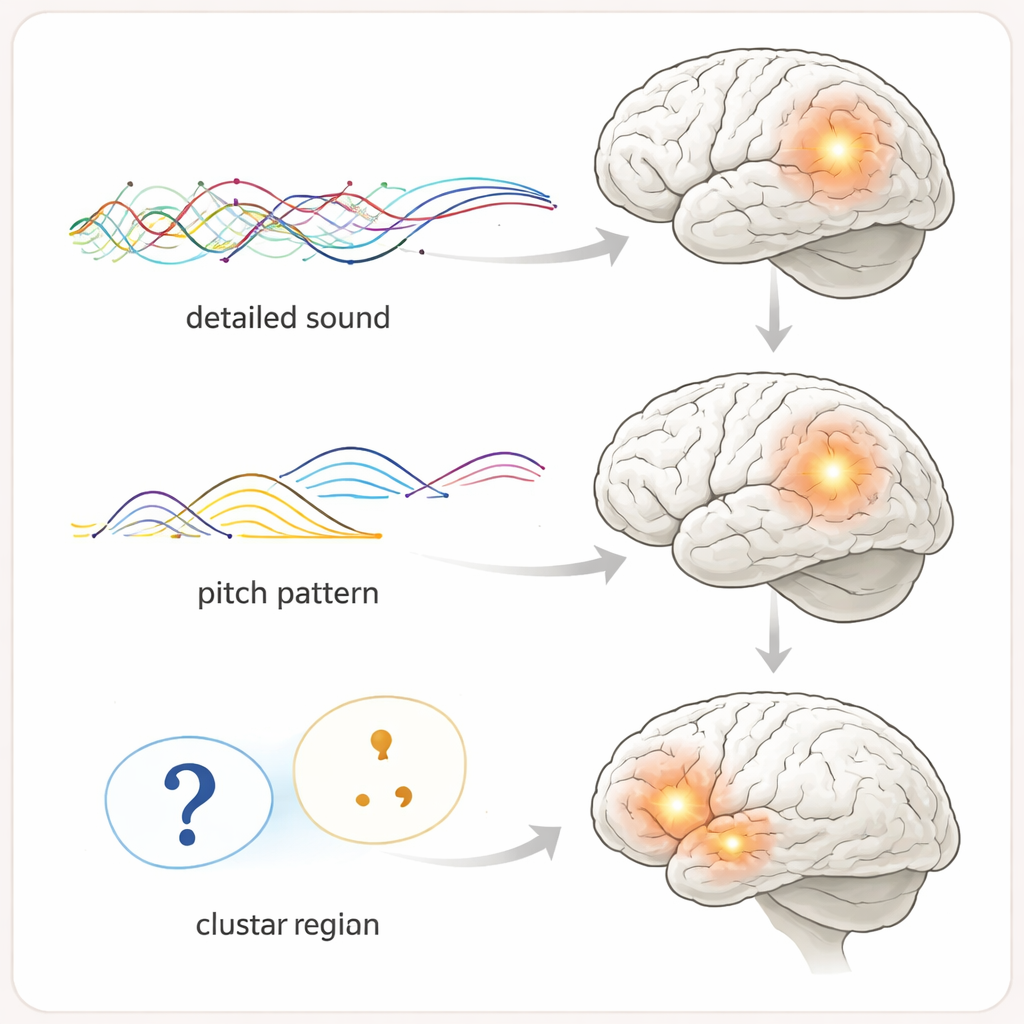

To map where these different stages arise, the team traced activity back into the brain. They found that early representations of pitch live in right auditory regions near the ear, which faithfully track both pitch height and its detailed contour. Slightly later and farther forward along the right superior temporal gyrus, the brain carries a more streamlined representation in which information about the speaker’s typical pitch has been factored out, while the overall rising or falling pattern is retained. Farthest along this path, in even more anterior right temporal regions, the brain’s activity reflects only the abstract category (“this sounds like a question” versus “this sounds like a statement”), no matter which speaker produced it. A separate region on the left side, deep in frontal and insular areas, also carries these abstract categories, closely tied to the act of making a decision.

Linking Brain Patterns to Choices

The study went further by asking how the “shape” of these brain representations relates to what people actually do. Using mathematical tools that compare patterns of brain responses across different sounds, the authors showed that only the anterior right temporal region clearly grouped together all question-like sounds and separated them from statement-like sounds, regardless of the speaker. People whose brains made this separation cleanly were better at the task: they categorized more accurately and showed sharper transitions between “question” and “statement” responses. In left frontal–insular areas, the strength of these abstract pitch categories tracked how quickly the brain could accumulate enough evidence to decide, as estimated by a decision-making model that combines both speed and accuracy.
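Comparing patterns of brain responses across sounds is often done with representational similarity analysis, and the sketch below illustrates that general idea on simulated data. Whether this matches the authors' exact pipeline is an assumption; the condition labels, the 100 simulated sensors, the noise level, and the helper names `rdm` and `upper` are all hypothetical.

```python
import numpy as np

def rdm(patterns):
    """Dissimilarity matrix: 1 minus the Pearson correlation between condition patterns."""
    return 1.0 - np.corrcoef(patterns)

def upper(matrix):
    """Return the entries above the diagonal as a flat vector."""
    i, j = np.triu_indices_from(matrix, k=1)
    return matrix[i, j]

rng = np.random.default_rng(0)

# Four hypothetical conditions: two speakers crossed with two sentence types.
category = np.array([0, 0, 1, 1])  # 0 = statement, 1 = question
speaker = np.array([0, 1, 0, 1])

# Simulated region that encodes only the category: one template per category plus noise.
templates = rng.standard_normal((2, 100))
patterns = templates[category] + 0.1 * rng.standard_normal((4, 100))

neural = rdm(patterns)

# Model matrices: 1 where two conditions differ on the feature, 0 where they match.
category_model = (category[:, None] != category[None, :]).astype(float)
speaker_model = (speaker[:, None] != speaker[None, :]).astype(float)

# How well each model explains the simulated neural dissimilarities.
category_fit = np.corrcoef(upper(neural), upper(category_model))[0, 1]
speaker_fit = np.corrcoef(upper(neural), upper(speaker_model))[0, 1]
```

In this simulation `category_fit` is high and clearly exceeds `speaker_fit`, which is the signature of a region that groups sounds by sentence type regardless of speaker.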

Teamwork Across Hemispheres

The researchers also examined how these brain areas talk to each other over time. They found fast, high-frequency communication flowing from the right anterior temporal region to the left frontal–insular region late in each trial, as if relaying a finished “sentence type” signal to a decision hub. Slower rhythmic coordination from the left frontal–insular area to a region involved in planning movements on the right side suggested that decision-related information was being fed to systems that help prepare the button presses used to report answers. Together, these interactions show that understanding the melody of speech is not confined to one patch of cortex, but emerges from a dynamic conversation between right-sided pitch processors and left-sided decision circuits.

Why This Matters for Everyday Listening

For non-specialists, the core message is simple: your brain uses a right-hemisphere pathway to peel away the messy details of pitch in speech—who is speaking, how exactly their voice wiggles—to arrive at clean, reliable categories like “question” and “statement.” These abstract pitch representations are not just theoretical constructs; their clarity predicts how well and how quickly you can tell sentence types apart. The work suggests that subtle difficulties with this pathway could affect how people interpret tone of voice, and it provides a blueprint for studying how pitch is processed in more natural conversations, different languages, and clinical conditions that alter speech perception.

Citation: Oderbolz, C., Orpella, J. & Meyer, M. Linguistic pitch is hierarchically encoded in the right ventral stream. Commun Biol 9, 267 (2026). https://doi.org/10.1038/s42003-026-09545-7

Keywords: speech perception, pitch, prosody, auditory cortex, brain networks