Scalable depression monitoring with smartphone speech using a multimodal benchmark and topic analysis

Listening to Mood in Everyday Life

Depression often ebbs and flows from week to week, but clinic visits and questionnaires catch only brief snapshots. This study explores whether the way people talk into their smartphones at home can offer a more continuous window into how depressed they feel. By turning short weekly voice messages into patterns that computers can read, the researchers ask: can ordinary speech become a practical early‑warning signal for changes in mood?

Turning Weekly Check‑Ins into Data

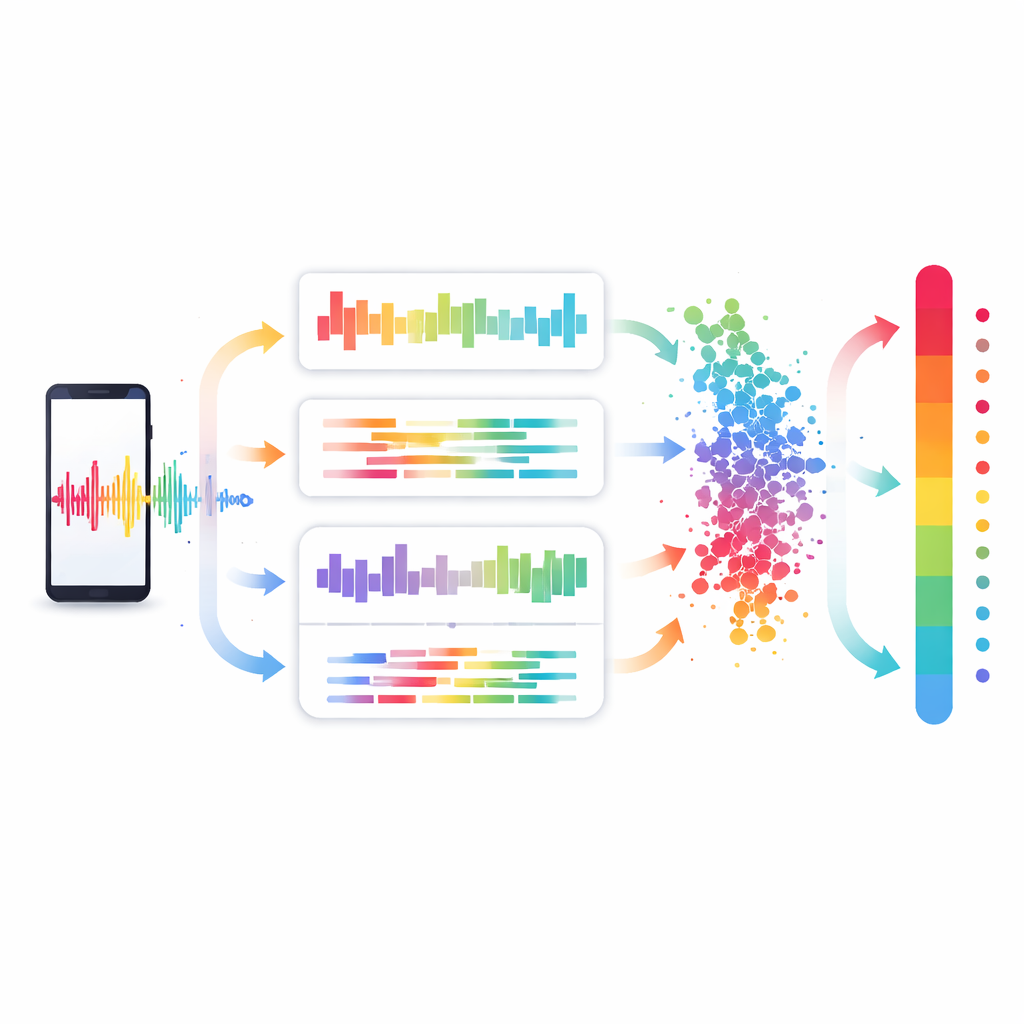

In a long-running project, 284 adults in Germany—some with a history of major depression and some without—used an app to answer the same spoken question once a week: “How did you feel last week?” Over several years they produced 3,151 short voice diaries, each paired with a depression score from the well‑known Beck Depression Inventory (BDI), a 21‑item self‑report scale. The team fed these audio recordings through a robust speech‑recognition system running locally on the phone or nearby computers, converting spoken German into text while preserving natural hesitations, fillers, and small grammatical details. From both the sound and the words, they extracted many different kinds of features, including timing measures, hand‑crafted acoustic summaries, modern audio embeddings, and dense text embeddings produced by large language models.

Finding the Most Telling Signal

To see which aspects of speech best tracked how depressed people felt, the researchers compared these feature types within the same statistical framework. They trained support‑vector regression models to predict each person’s BDI score from a given diary, carefully separating data so that a person’s diaries never appeared in both training and test sets. All models beat a dummy baseline, but one signal stood out: sentence embeddings from large language models, which compress the meaning and structure of a whole diary into a single vector. A model based on the Qwen3‑8B embedding predicted BDI scores with an average error of about 4.6 points on the 0–63 scale, explaining roughly one‑third of the score differences between diaries. Combining two text‑embedding models improved accuracy slightly further, while adding audio‑only information or simple acoustic markers contributed little beyond what the words themselves already carried.
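The person-level separation described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the study's code: the function names, the toy person IDs and scores, and the fold count are all hypothetical, and the only "model" shown is the dummy baseline that always predicts the mean training score. A real regressor (the study used support-vector regression on embeddings) would take the place of that mean prediction.

```python
import random
from statistics import mean


def person_level_folds(person_ids, n_folds=5, seed=0):
    """Assign each person (not each diary) to a fold, so that all diaries
    from one person always end up in the same fold."""
    people = sorted(set(person_ids))
    random.Random(seed).shuffle(people)
    fold_of = {person: i % n_folds for i, person in enumerate(people)}
    return [fold_of[person] for person in person_ids]


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between true and predicted scores."""
    return mean(abs(t - p) for t, p in zip(y_true, y_pred))


def dummy_baseline_mae(person_ids, scores, n_folds=5, seed=0):
    """Cross-validated error of a baseline that predicts the mean score
    of the training folds for every diary in the held-out fold."""
    folds = person_level_folds(person_ids, n_folds, seed)
    y_true, y_pred = [], []
    for k in range(n_folds):
        train = [s for s, f in zip(scores, folds) if f != k]
        test = [s for s, f in zip(scores, folds) if f == k]
        if not train or not test:
            continue  # skip empty folds in very small toy datasets
        y_true.extend(test)
        y_pred.extend([mean(train)] * len(test))
    return mean_absolute_error(y_true, y_pred)


# Toy data (made up): ten diaries from six people, with BDI-like scores.
person_ids = ["p1", "p1", "p2", "p2", "p2", "p3", "p4", "p4", "p5", "p6"]
scores = [4, 6, 22, 25, 19, 0, 11, 13, 30, 8]

folds = person_level_folds(person_ids, n_folds=3)
baseline_mae = dummy_baseline_mae(person_ids, scores, n_folds=3)
print("fold per diary:", folds)
print("dummy baseline MAE:", round(baseline_mae, 2))
```

Splitting by person rather than by diary matters because diaries from the same speaker tend to resemble each other; if they leaked across the split, a model could appear accurate simply by recognizing the speaker.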

Looking Inside the Black Box

Building trust in such tools requires more than raw accuracy. The team therefore probed how and why their models worked. First, they repeated the analysis within only the group diagnosed with major depressive disorder, showing that text embeddings still captured meaningful differences in symptom severity even among patients rather than merely separating them from healthy volunteers. Next, they deliberately scrambled the transcripts before embedding—shuffling word order, stripping away small grammatical endings, or masking most words—to see how performance changed. Predictions worsened most when topical content was removed, but also declined when syntax and function words were disrupted. This pattern suggests that the models rely on multiple levels of language, from what people talk about to how they phrase it, rather than on simple topic keywords alone.
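The transcript perturbations mentioned above might look roughly like the following sketch. Everything here is an assumption for illustration: the function names, the 80% masking fraction (the article only says "most words"), the `[MASK]` token, and the tiny German function-word list are hypothetical, and stripping grammatical endings is not shown. In an ablation of this kind, each perturbed transcript would be embedded and scored in place of the original, and the drop in accuracy compared across perturbations.

```python
import random

# Tiny illustrative list; a real analysis would need a proper German lexicon.
FUNCTION_WORDS = {"ich", "und", "der", "die", "das", "ist", "war", "nicht", "zu", "in"}


def shuffle_words(text, seed=0):
    """Destroy word order (syntax) while keeping every word."""
    words = text.split()
    random.Random(seed).shuffle(words)
    return " ".join(words)


def mask_words(text, mask_fraction=0.8, mask_token="[MASK]", seed=0):
    """Replace a fixed fraction of words with a mask token,
    removing most of the topical content."""
    words = text.split()
    n_mask = round(len(words) * mask_fraction)
    masked = set(random.Random(seed).sample(range(len(words)), n_mask))
    return " ".join(mask_token if i in masked else w for i, w in enumerate(words))


def drop_function_words(text, function_words=FUNCTION_WORDS):
    """Remove small grammatical words while keeping content words."""
    kept = [w for w in text.split() if w.lower().strip(".,!?") not in function_words]
    return " ".join(kept)


# Made-up example diary ("I was tired and did not sleep well.").
diary = "Ich war müde und habe nicht gut geschlafen."
print(shuffle_words(diary))
print(mask_words(diary))
print(drop_function_words(diary))
```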

Uncovering Common Themes in How People Talk

To add a human‑readable layer to their system, the researchers applied a modern topic‑modeling method known as BERTopic to the best text embeddings. This unsupervised approach grouped diaries into six broad themes such as general weekly updates, distress and care, physical rehabilitation and activity, and teaching or work context. When they compared these themes with BDI scores, a clear pattern emerged. Diaries dominated by distress and care—ruminating about feelings, sleep problems, treatment decisions, and coping efforts—tended to coincide with higher depression scores. In contrast, diaries centered on physical activity, rehabilitation exercises, or routine teaching work were linked to lower scores. Correlations between topics and individual BDI items, such as loss of interest or fatigue, were modest but pointed in clinically sensible directions, supporting the idea that these themes reflect genuine aspects of mood and functioning.
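Once each diary carries a topic label, comparing themes with depression scores comes down to simple grouped statistics. The sketch below assumes the topic assignment has already been done (for example by a topic model) and uses made-up labels and scores; the function names are hypothetical, and the study's actual statistical procedure may differ. It computes the mean score per topic and the correlation between membership in a topic and the score.

```python
from collections import defaultdict
from math import sqrt
from statistics import mean


def mean_score_by_topic(topics, scores):
    """Average score of the diaries assigned to each topic."""
    by_topic = defaultdict(list)
    for topic, score in zip(topics, scores):
        by_topic[topic].append(score)
    return {topic: mean(values) for topic, values in by_topic.items()}


def pearson(x, y):
    """Plain Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def topic_score_correlation(topics, scores, topic):
    """Correlate a 0/1 indicator of topic membership with the scores."""
    indicator = [1 if t == topic else 0 for t in topics]
    return pearson(indicator, scores)


# Toy data (made up): one topic label and one BDI-like score per diary.
topics = ["distress and care", "physical activity", "distress and care",
          "weekly update", "physical activity", "weekly update"]
scores = [28, 5, 22, 12, 7, 10]

topic_means = mean_score_by_topic(topics, scores)
r_distress = topic_score_correlation(topics, scores, "distress and care")
r_activity = topic_score_correlation(topics, scores, "physical activity")
print(topic_means)
print(round(r_distress, 2), round(r_activity, 2))
```

A positive correlation for a topic means diaries on that theme tend to come with higher scores, and a negative one means lower scores; neither says anything about cause.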

What This Could Mean for Everyday Care

The study shows that modern language‑based representations of short, weekly voice diaries can estimate depression severity with reasonable precision, usually staying within about one severity band (minimal, mild, moderate, or severe) on the BDI scale. Rather than serving as a stand‑alone diagnostic tool, such a system could help track trends over time, highlighting when someone’s mood appears to worsen by a meaningful margin and prompting closer attention from clinicians or patients themselves. The work still faces important hurdles, including privacy protection, adaptation to other languages and cultures, and better tracking of changes within a single person. Even so, it points toward a future where a simple spoken check‑in on a smartphone might quietly help monitor mental health between visits.

Citation: Emden, D., Richter, M., Chevance, A. et al. Scalable depression monitoring with smartphone speech using a multimodal benchmark and topic analysis. npj Digit. Med. 9, 230 (2026). https://doi.org/10.1038/s41746-026-02486-9

Keywords: depression monitoring, smartphone speech, digital phenotyping, language embeddings, mental health apps