Clear Sky Science · en

Combining federated learning and travelling model boosts performance and opens opportunities for digital health equity

Why Sharing Medical Insights Without Sharing Data Matters

Modern medicine increasingly relies on artificial intelligence to find patterns in scans and health records. But patient data are sensitive and often cannot leave the hospital where they were collected. This creates a tension: how can hospitals around the world team up to train powerful AI tools without sending raw patient data across borders or into large central servers? This study introduces a new way to do just that, aiming not only for accuracy but also for fairness between well‑resourced hospitals and smaller, under‑resourced clinics.

Two Ways to Teach an AI Without Moving Data

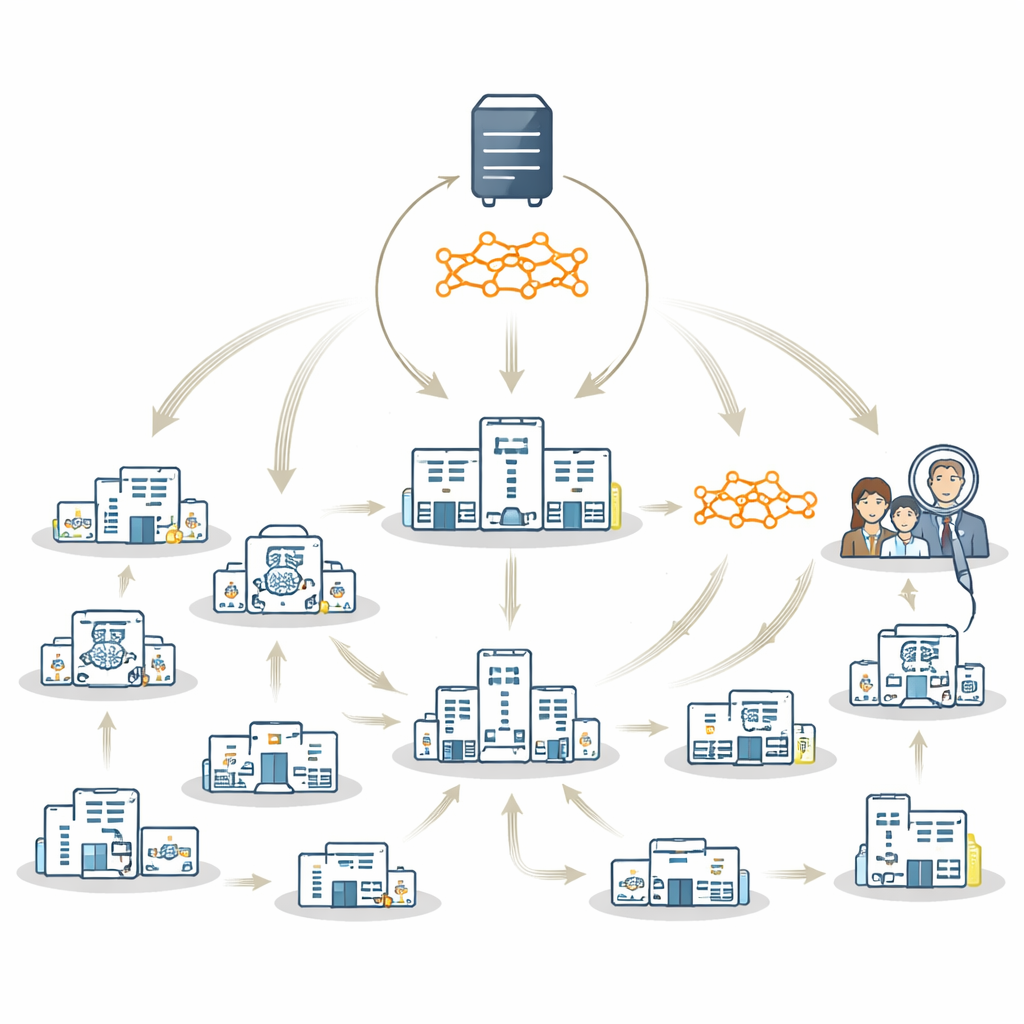

Today, two main strategies let hospitals train AI together while keeping data on site. In federated learning, each hospital trains its own local copy of a model in parallel; these local models are then combined into a shared "global" model on a central server. In a travelling model approach, there is only one model, which moves from hospital to hospital and trains at each site in turn. Both methods protect privacy, but each has drawbacks. Federated learning can struggle when some hospitals have very little data or do not see all types of patients; combining weak or unbalanced local models may lead to a poor global model that mainly reflects large, wealthy sites. The travelling model is more robust to these imbalances, but because sites train one after another rather than in parallel, it can be slower and harder to manage.
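To make the difference concrete, here is a minimal sketch of one round of each strategy, using a toy logistic-regression model in NumPy. Everything in it is an illustrative assumption rather than the study's implementation: the function names, the size-weighted averaging used to combine local models, the learning rate, and the synthetic two-feature data.

```python
import numpy as np

def local_train(weights, X, y, lr=0.1, steps=20):
    """Run a few gradient-descent steps of logistic regression on one site's data."""
    w = weights.copy()
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-X @ w))      # predicted probabilities
        w -= lr * X.T @ (p - y) / len(y)      # gradient of the mean log-loss
    return w

def federated_round(global_w, sites):
    """Federated learning: every site trains its own copy, then copies are averaged.

    Assumption: local models are averaged with weights proportional to site size.
    """
    local_ws = [local_train(global_w, X, y) for X, y in sites]
    sizes = [len(y) for _, y in sites]
    return np.average(local_ws, axis=0, weights=sizes)

def travelling_cycle(w, sites):
    """Travelling model: one model visits each site in turn and keeps its updates."""
    for X, y in sites:
        w = local_train(w, X, y)
    return w

# Synthetic, made-up data: one large site, one small site, one single-patient site.
rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])

def make_site(n):
    X = rng.normal(size=(n, 2))
    y = (X @ true_w > 0).astype(float)
    return X, y

sites = [make_site(n) for n in (200, 8, 1)]

w_fl = np.zeros(2)
w_tm = np.zeros(2)
for _ in range(5):
    w_fl = federated_round(w_fl, sites)   # raw data never leaves a site
    w_tm = travelling_cycle(w_tm, sites)  # only model weights move between sites
```

In both functions only the weight vector crosses site boundaries. The structural difference is that `federated_round` merges independently trained copies, while `travelling_cycle` lets each site build directly on what the previous site learned.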

A Hybrid Strategy That Uses the Best of Both Worlds

The authors propose FedTM, a hybrid training scheme that blends the strengths of federated learning and the travelling model. Training happens in two phases. First comes a "warmup" phase where only the largest hospitals, with more complete and balanced datasets, train the model in parallel using standard federated learning techniques. This creates a strong starting model. Then comes a "refinement" phase, where this warmed‑up model visits every site in sequence, including very small clinics that may have just a few brain scans or even only one patient. In this second phase, the model is gradually updated as it travels, incorporating knowledge from each site without ever needing their data to leave local control.
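The two phases can be sketched in a few lines of Python. This is a hedged illustration of the idea described above, not the authors' code: the name `fedtm_sketch`, the toy logistic-regression model, the choice of warmup sites purely by size, the size-weighted averaging, and the synthetic data are all assumptions made for the example.

```python
import numpy as np

def local_train(weights, X, y, lr=0.1, steps=20):
    """A few gradient-descent steps of logistic regression on one site's data."""
    w = weights.copy()
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-X @ w))
        w -= lr * X.T @ (p - y) / len(y)
    return w

def fedtm_sketch(sites, n_warmup_sites, warmup_rounds, refinement_cycles):
    """Hypothetical two-phase schedule: federated warmup, then travelling refinement."""
    dim = sites[0][0].shape[1]
    w = np.zeros(dim)

    # Phase 1 (warmup): only the largest sites train, in parallel.
    # Assumption: "largest" is decided by sample count alone.
    warmup_sites = sorted(sites, key=lambda s: len(s[1]), reverse=True)[:n_warmup_sites]
    sizes = [len(y) for _, y in warmup_sites]
    for _ in range(warmup_rounds):
        local_ws = [local_train(w, X, y) for X, y in warmup_sites]
        w = np.average(local_ws, axis=0, weights=sizes)

    # Phase 2 (refinement): the warmed-up model visits every site in sequence,
    # including sites with only a handful of samples.
    for _ in range(refinement_cycles):
        for X, y in sites:
            w = local_train(w, X, y)
    return w

# Synthetic, made-up sites of very different sizes.
rng = np.random.default_rng(1)
true_w = np.array([1.5, -2.0])

def make_site(n):
    X = rng.normal(size=(n, 2))
    y = (X @ true_w > 0).astype(float)
    return X, y

sites = [make_site(n) for n in (200, 150, 8, 3, 1)]
final_w = fedtm_sketch(sites, n_warmup_sites=2, warmup_rounds=5, refinement_cycles=3)
```

Setting `warmup_rounds=0` reduces this sketch to a pure travelling model, and setting `refinement_cycles=0` with all sites in the warmup reduces it to pure federated learning, which is one way to see why the scheme is called a hybrid.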

Testing the Method on Parkinson’s Disease Brain Scans

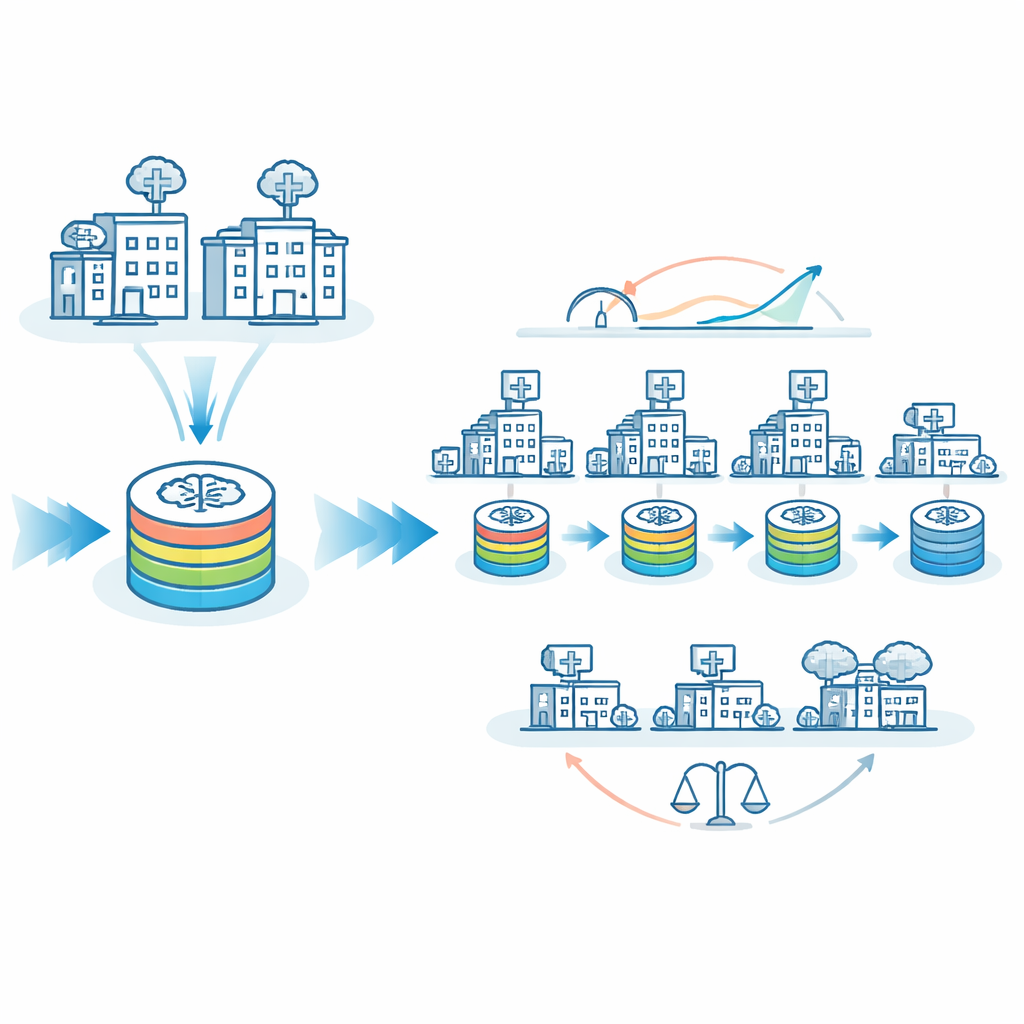

To put FedTM to the test, the researchers used 1,817 brain MRI scans drawn from 83 imaging sites worldwide to train an AI system to distinguish people with Parkinson’s disease from healthy individuals. This is a particularly challenging setting: more than half of the sites contributed fewer than ten scans, only about a third had both patient and healthy control data, and scanning protocols differed widely. Under these real‑world conditions, pure federated learning failed to learn the task well, while a pure travelling model performed better but still left room for improvement. FedTM, especially when the warmup involved the seven largest and most balanced sites, clearly outperformed both: the area under the receiver operating characteristic (ROC) curve, a standard measure of classification quality, rose from 77% with the travelling model alone to about 82% with FedTM, with similar gains across other clinically important metrics such as sensitivity, specificity, and F1‑score.
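For readers unfamiliar with the area under the ROC curve, it can be read as the probability that a randomly chosen patient receives a higher model score than a randomly chosen healthy control. The short sketch below computes it that way on four made-up scores; the function name `auroc` and the numbers are illustrative and unrelated to the study's data.

```python
import numpy as np

def auroc(y_true, scores):
    """Area under the ROC curve via pairwise comparison of positives and negatives.

    Counts the fraction of (patient, control) pairs where the patient scores
    higher, with ties counted as half.
    """
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]          # every patient vs every control
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return wins / (len(pos) * len(neg))

# Toy labels (1 = patient, 0 = control) and made-up model scores.
labels = [0, 0, 1, 1]
model_scores = [0.1, 0.4, 0.35, 0.8]
print(auroc(labels, model_scores))  # 0.75: three of the four pairs are ranked correctly
```

A value of 0.5 corresponds to random guessing and 1.0 to perfect ranking, so the reported move from 77% to about 82% means the model ranks patient and control pairs correctly more often.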

Making AI Fairer Across Big and Small Hospitals

A major concern in medical AI is equity: does a model work just as well for patients at small, rural, or under‑resourced hospitals as it does for those at large academic centers? The team examined how often the AI made wrong predictions at “larger” versus “smaller” sites. With the travelling model alone, misclassification rates differed by about 8 percentage points between these groups. With FedTM tuned appropriately, misclassification rates for larger and smaller sites became almost identical, around 26%. In other words, the model became not only more accurate overall, but also more even‑handed. FedTM also shifted most of the heavy computation into the warmup phase at better‑resourced sites, cutting the number of training cycles that small sites had to run nearly in half, while keeping total training time similar.
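The equity comparison described here boils down to computing the error rate separately for two groups of sites and looking at the difference. A minimal sketch follows, with the caveat that the grouping rule for "larger" and "smaller" sites is not specified in this summary, so the boolean flag `is_large_site`, the function name, and the eight toy predictions are all assumptions.

```python
import numpy as np

def misclassification_gap(y_true, y_pred, is_large_site):
    """Return (error rate at larger sites, error rate at smaller sites, absolute gap)."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    is_large_site = np.asarray(is_large_site, dtype=bool)
    errors = y_true != y_pred
    large_rate = errors[is_large_site].mean()
    small_rate = errors[~is_large_site].mean()
    return large_rate, small_rate, abs(large_rate - small_rate)

# Made-up example: four scans from larger sites, four from smaller sites.
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 0, 0, 1, 1, 0, 0]
is_large_site = [True, True, True, True, False, False, False, False]

large_rate, small_rate, gap = misclassification_gap(y_true, y_pred, is_large_site)
print(large_rate, small_rate, gap)  # 0.25 0.5 0.25
```

In this toy case the gap is 25 percentage points; a gap near zero, as reported for FedTM, would mean patients at smaller sites are misclassified about as often as those at larger ones.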

What This Means for Global Digital Health

FedTM offers a practical path toward AI tools that respect privacy, improve performance, and share benefits more fairly across the globe. By allowing even sites with very little data to influence the final model, this framework can help ensure that people in under‑resourced or remote settings are not left behind when new diagnostic tools are developed. While the study focused on a single type of brain scan and one disease, the approach can, in principle, be adapted to many other medical problems. As health systems increasingly adopt mobile devices and wearables, and as regulations emphasize data sovereignty, hybrid strategies like FedTM may become key to building trustworthy, inclusive, and responsible medical AI.

Citation: Souza, R., Stanley, E.A.M., Ohara, E.Y. et al. Combining federated learning and travelling model boosts performance and opens opportunities for digital health equity. npj Digit. Med. 9, 294 (2026). https://doi.org/10.1038/s41746-026-02483-y

Keywords: federated learning, travelling model, Parkinson’s disease, medical imaging AI, health equity