Clear Sky Science

Robust and interpretable unit level causal inference in neural networks for pediatric myopia

Why this matters for families and doctors

Childhood nearsightedness, or myopia, is rising at an alarming pace worldwide, especially in East Asia. Parents want to know which habits, body traits, and family factors truly cause their children’s eyesight to worsen, not just which ones happen to “go along with” bad vision. At the same time, modern artificial intelligence (AI) tools can predict who will become myopic, but they often act as opaque black boxes. This study brings these worlds together, showing how a neural network can be redesigned to reveal which specific factors likely cause myopia to develop, child by child, in a way that doctors can understand and trust.

Following thousands of children over time

The researchers analyzed data from the Anyang Childhood Eye Study, a large school-based project in central China that followed more than 3000 first-graders for six years. Each year, children underwent detailed eye examinations and answered questionnaires about their daily lives. From this rich record, the team distilled 16 key features that capture behavior (such as near work and time spent outdoors), body measurements (such as height and pulse), diet, eye structure (including axial length and corneal shape), and family history of glasses-wearing. They trained a standard feedforward neural network to predict whether a child would develop myopia at any point during the six-year follow-up, achieving accuracy comparable to or better than strong traditional models like logistic regression and random forests.

Turning a black box into a cause-and-effect map

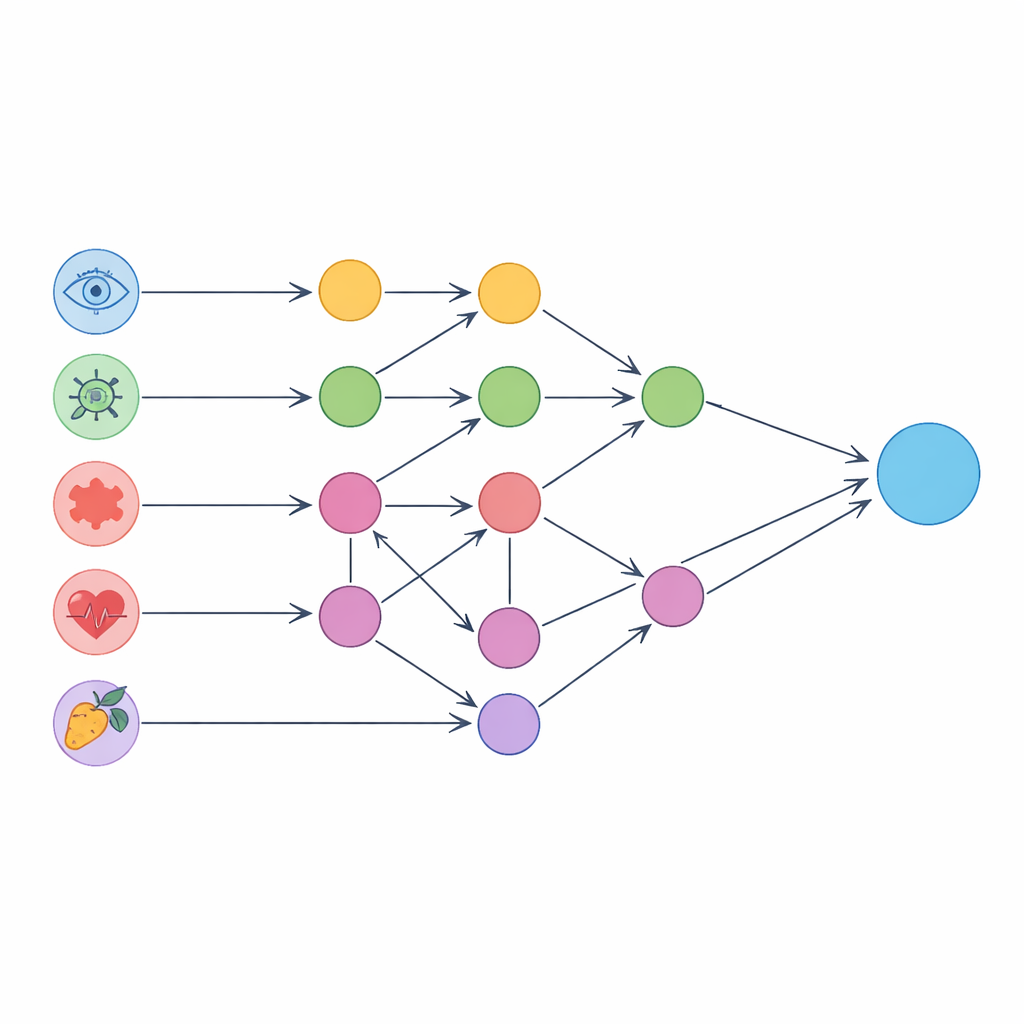

Rather than stopping at prediction, the authors asked a deeper question: which inputs are likely driving those predictions through cause-and-effect pathways? They first used a causal discovery algorithm to infer a directed network of relationships among the 16 features themselves, based purely on the observational data. This graph matched many known clinical links—for example, parental myopia, gender, focusing ability, and corneal curvature all influenced eye length and refraction, and eye length in turn affected how light focuses in the eye. The team then overlaid this graph on the neural network’s input layer, grouping each input neuron into one of three categories: isolated units that do not cause or depend on other inputs, pure units that act through clean chains of mediators, and confounded units whose effects are tangled with other variables.
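The three-way grouping can be sketched as a simple rule applied to the discovered graph. The rule below is a simplifying assumption rather than the paper's exact definition: an input with no links to other inputs is isolated, an input with at least one parent among the inputs is confounded, and an input with children but no parents is pure. The feature names and edges are a hypothetical toy graph that loosely echoes links mentioned in the text.

```python
from collections import defaultdict

def classify_inputs(features, edges):
    """Label each input as 'isolated', 'pure', or 'confounded'.

    Simplified rule (an assumption, not the paper's exact definition):
      isolated   -> no edges to or from any other input
      confounded -> has at least one parent among the inputs
      pure       -> no parents, but at least one child (acts via mediators)
    """
    parents = defaultdict(set)
    children = defaultdict(set)
    for cause, effect in edges:
        parents[effect].add(cause)
        children[cause].add(effect)

    roles = {}
    for name in features:
        if not parents[name] and not children[name]:
            roles[name] = "isolated"
        elif parents[name]:
            roles[name] = "confounded"
        else:
            roles[name] = "pure"
    return roles

# Hypothetical toy graph; each edge reads (cause, effect).
features = ["pulse", "height", "parental_myopia", "axial_length", "refraction"]
edges = [
    ("height", "axial_length"),
    ("parental_myopia", "axial_length"),
    ("parental_myopia", "refraction"),
    ("axial_length", "refraction"),
]

roles = classify_inputs(features, edges)
for name, role in roles.items():
    print(f"{name}: {role}")
```

Under this rule the toy graph reproduces the grouping described in the article: pulse is isolated, height and parental myopia are pure, and axial length and refraction are confounded.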

Peering into different types of inputs

For isolated units, such as pulse rate or certain dietary measures, the authors estimated how changing just that one feature would shift the network’s output toward “myopic” or “non-myopic.” Higher pulse, which may reflect better blood flow, emerged as protective against myopia, consistent with earlier medical studies. Some other isolated factors, like carbonated drink and egg intake, showed patterns that clashed with prior reports, likely because of imbalanced diets in specific subgroups of the cohort. For pure units, including height, gender, parental myopia, focusing ability, and corneal curvature, the team traced both direct and indirect paths through the causal graph. They confirmed, for example, that taller children tended to have longer eyes and were more prone to myopia, not because height itself is harmful, but because eye growth accompanies body growth.
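For an isolated input, the idea of "changing just that one feature" can be illustrated by fixing the feature at a chosen value for every child and averaging the model's output. The sketch below is a minimal illustration, not the authors' code: `toy_model` is a hypothetical stand-in for the trained network, and its coefficients and the four example rows are invented for demonstration.

```python
import math

def toy_model(pulse, near_work):
    """Hypothetical stand-in for the trained network: returns P(myopia)."""
    z = 0.5 * near_work - 0.04 * (pulse - 80)
    return 1.0 / (1.0 + math.exp(-z))

def average_output_under_intervention(rows, feature, value, model):
    """Set one feature to `value` for every child and average the output."""
    total = 0.0
    for row in rows:
        changed = dict(row)       # copy so the original data is untouched
        changed[feature] = value  # the intervention: overwrite one input
        total += model(**changed)
    return total / len(rows)

# Invented example data, one dict per child.
rows = [
    {"pulse": 72, "near_work": 3.0},
    {"pulse": 85, "near_work": 1.5},
    {"pulse": 90, "near_work": 2.0},
    {"pulse": 78, "near_work": 4.0},
]

low = average_output_under_intervention(rows, "pulse", 70, toy_model)
high = average_output_under_intervention(rows, "pulse", 95, toy_model)
effect = high - low

print(f"mean predicted risk at pulse=70: {low:.3f}")
print(f"mean predicted risk at pulse=95: {high:.3f}")
print(f"difference (high - low): {effect:.3f}")
```

Overwriting a single input is only reasonable here because an isolated feature neither causes nor depends on the other inputs. For pure or confounded features, the downstream or upstream variables would have to be accounted for as well.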

Handling tangled influences with smarter statistics

The most challenging factors—axial length and cycloplegic refraction—are both central to myopia and heavily intertwined with other eye traits. To deal with these confounded units, the researchers built a domain-adaptive meta-learning system that rebalanced the data using techniques similar to those in modern causal inference. By estimating how likely each child was to fall into different “treatment” levels of eye length or refraction, and by using an ensemble of tree-based models, they could estimate how changes in these measures would causally affect predicted myopia risk. The resulting patterns, such as longer eyes raising risk and weaker focusing power aligning with more myopia, agreed well with long-standing clinical knowledge. A battery of “refutation” tests—adding fake confounders, resampling data, and using placebo variables—showed that these causal estimates were stable and not artifacts of overfitting.
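The rebalancing idea can be shown with inverse probability weighting, a standard causal inference technique. This is a deliberately simplified sketch, not the authors' pipeline: it uses a binary "treatment" (for example, long versus short eye length), a single binary confounder, and per-stratum propensities instead of multiple treatment levels and tree-based ensembles. The cohort counts are invented so that the true effect is 0.25 within each stratum. A shuffled-label placebo check is included as one simple form of refutation test.

```python
import random
from collections import defaultdict

def ipw_effect(rows):
    """Inverse-probability-weighted difference in mean outcome.

    rows: list of (confounder, treated, outcome) tuples with 0/1 entries.
    The propensity P(treated=1 | confounder) is estimated per stratum.
    """
    n = defaultdict(int)
    n_treated = defaultdict(int)
    for c, t, _ in rows:
        n[c] += 1
        n_treated[c] += t
    propensity = {c: n_treated[c] / n[c] for c in n}

    sums = {0: 0.0, 1: 0.0}     # weighted outcome totals per arm
    weights = {0: 0.0, 1: 0.0}  # total weight per arm
    for c, t, y in rows:
        e = propensity[c]
        w = 1.0 / e if t == 1 else 1.0 / (1.0 - e)
        sums[t] += w * y
        weights[t] += w
    return sums[1] / weights[1] - sums[0] / weights[0]

def naive_effect(rows):
    """Unadjusted difference in mean outcome between the two arms."""
    treated = [y for _, t, y in rows if t == 1]
    control = [y for _, t, y in rows if t == 0]
    return sum(treated) / len(treated) - sum(control) / len(control)

def make_rows():
    """Invented cohort: (stratum, treated, n_children, n_with_outcome)."""
    cells = [(0, 1, 20, 10), (0, 0, 80, 20), (1, 1, 80, 60), (1, 0, 20, 10)]
    rows = []
    for c, t, size, positives in cells:
        rows += [(c, t, 1)] * positives + [(c, t, 0)] * (size - positives)
    return rows

rows = make_rows()
naive = naive_effect(rows)
adjusted = ipw_effect(rows)
print(f"naive difference: {naive:.3f}")
print(f"IPW difference:   {adjusted:.3f}")

# Placebo check: shuffle the treatment labels so they carry no real signal.
rng = random.Random(0)
labels = [t for _, t, _ in rows]
rng.shuffle(labels)
placebo_rows = [(c, fake_t, y) for (c, _, y), fake_t in zip(rows, labels)]
placebo = ipw_effect(placebo_rows)
print(f"placebo estimate: {placebo:.3f}")
```

In this toy cohort the confounder makes treated children look worse than they should, so the naive comparison overstates the effect, while the weighted estimate recovers the within-stratum difference. A placebo estimate that lands close to zero is the kind of evidence a refutation test looks for.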

What this means for clearer, fairer medical AI

In the end, the study demonstrates that a deep neural network for pediatric myopia can be pulled apart into meaningful building blocks that echo real biology rather than opaque numerical tricks. By classifying inputs into isolated, pure, and confounded roles and then applying tailored causal methods to each, the framework reveals which lifestyle factors seem genuinely protective, which body measurements act as early warning signals, and where the model’s internal logic conflicts with medical consensus. While the work does not replace clinical trials, it offers a powerful lens for checking and improving AI tools before they guide care. More broadly, the approach is model-agnostic and could be applied to other health problems, pushing medical AI toward systems that are not only accurate, but also transparent, testable, and aligned with the goals of precision and equitable healthcare.

Citation: Jin, Z., Kang, M., Zhao, W. et al. Robust and interpretable unit level causal inference in neural networks for pediatric myopia. npj Digit. Med. 9, 263 (2026). https://doi.org/10.1038/s41746-026-02442-7

Keywords: pediatric myopia, causal inference, explainable AI, neural networks, digital medicine