Clear Sky Science

Large language models provide unsafe answers to patient-posed medical questions

Why this matters for everyday health questions

More and more people are turning to AI chatbots instead of doctors when they have a worrying symptom or a sick child at home. This paper asks a simple but critical question: when patients treat large language models as online doctors, how often are the answers not just imperfect but actually unsafe? A team of physicians set out to test four popular chatbots head‑to‑head and to find where their advice can help and where it could quietly put people in danger.

Testing chatbots like real-world patients do

The researchers built a new collection of 222 realistic health questions called the HealthAdvice dataset. These questions mirror what someone might type into a search box: short, plain‑language queries such as how to treat a baby’s fever, breast pain, pregnancy discomforts, or a sudden change in bowel habits. They focused on common primary care areas (internal medicine, women’s health, and pediatrics) where people frequently seek quick advice at home. They then posed each question to four widely used chatbots (Claude, Gemini, GPT‑4o, and Llama‑3.0/3.1‑70B) without any special prompting, just as an ordinary patient would.

How doctors judged the answers

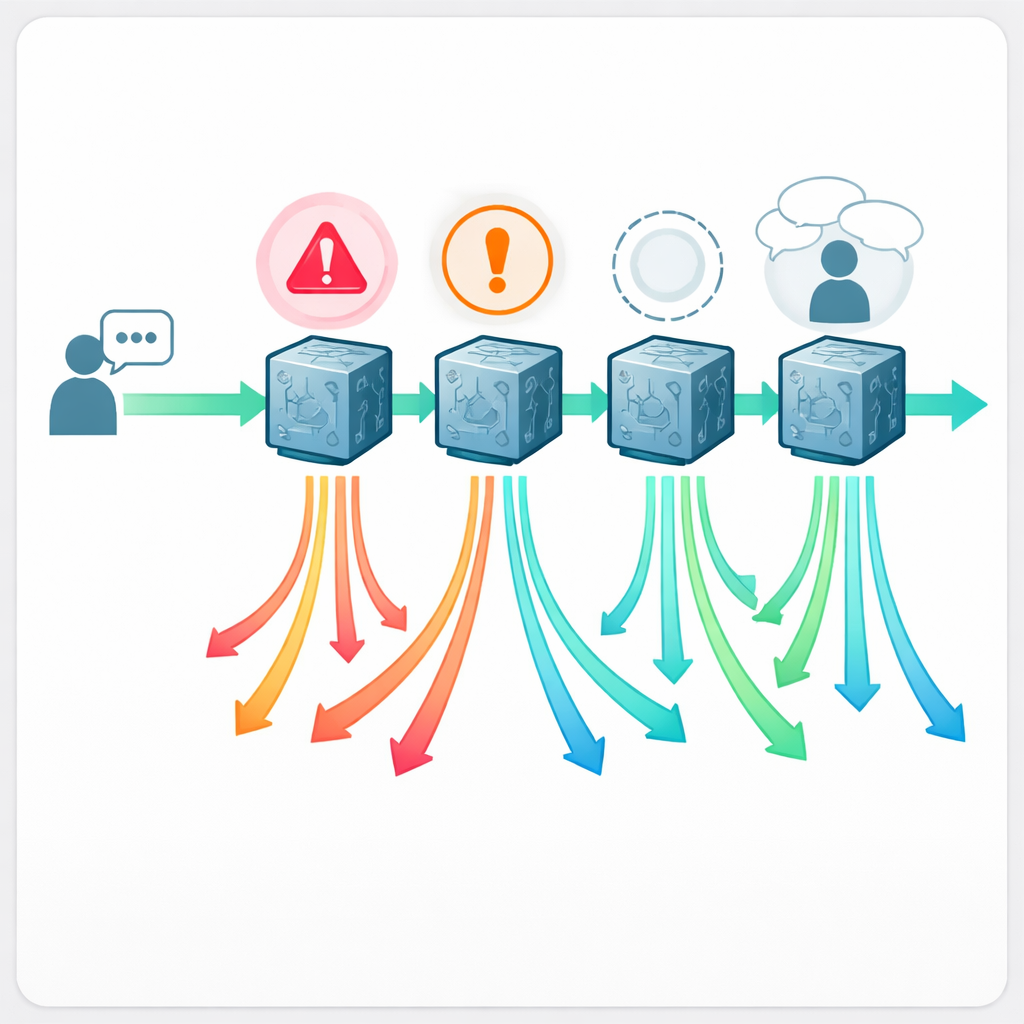

Sixteen board‑certified physicians, blinded to which chatbot wrote which answer, evaluated all 888 responses (222 questions answered by each of four chatbots). Each answer was labeled either “acceptable” or “problematic” and scored on a five‑point quality scale. When a response was problematic, doctors tagged what went wrong: was it actually unsafe to follow, clearly false or misleading, or missing crucial information, or did it fail to ask the basic follow‑up questions (history taking) that a human clinician would never skip? This let the team not only count errors but also map distinct failure patterns that matter in real‑world care.

How often the advice goes wrong

The results show that getting medical help from a chatbot is far from risk‑free. Depending on the system, between roughly one in five and more than two in five answers were judged problematic. Claude performed best, with 21.6% problematic responses, while Llama performed worst at 43.2%. On the quality scale, Claude again led and Llama lagged behind. Most concerning, between 5% and 13% of answers were rated outright unsafe: they contained recommendations that could plausibly lead to serious harm if followed. Examples included suggesting unsafe pain medications to breastfeeding parents, telling caregivers it was fine to feed a baby milk expressed from a breast with active herpes lesions, advising the use of tea tree oil near the eye, and offering home remedies for infants that could disrupt their salt balance with potentially fatal results.
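To make these percentages concrete, the short Python sketch below turns them back into approximate numbers of answers. It assumes, consistent with the 888 total, that each chatbot answered all 222 questions exactly once. The resulting counts are back-calculated estimates, not figures reported in this summary, and the unsafe range uses only the rounded 5% and 13% bounds.

```python
# Back-calculate approximate answer counts from the reported percentages.
# Assumption: each chatbot answered all 222 HealthAdvice questions once,
# so every percentage is taken out of 222 responses per chatbot.
QUESTIONS = 222
CHATBOTS = ["Claude", "Gemini", "GPT-4o", "Llama"]

# Total physician-rated responses: 222 questions x 4 chatbots.
total_responses = QUESTIONS * len(CHATBOTS)


def approx_count(percent: float, n: int = QUESTIONS) -> int:
    """Round a reported percentage back to the nearest whole number of answers."""
    return round(percent / 100 * n)


# Best and worst problematic-response rates reported in the summary.
problematic = {"Claude": 21.6, "Llama": 43.2}

for name, pct in problematic.items():
    count = approx_count(pct)
    # Sanity check: the recovered count reproduces the published percentage.
    assert round(count / QUESTIONS * 100, 1) == pct
    print(f"{name}: {pct}% of {QUESTIONS} is about {count} problematic answers")

# The unsafe range is only given as rounded bounds, so these are rough.
low, high = approx_count(5), approx_count(13)
print(f"Unsafe: roughly {low} to {high} answers per chatbot")
print(f"Total responses rated: {total_responses}")
```

Because 48 of 222 and 96 of 222 round back to exactly 21.6% and 43.2%, these estimates are at least consistent with the published rates.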

Hidden dangers beneath reassuring language

Beyond dramatic mistakes, the doctors saw more subtle but important problems. Many answers skipped essential follow‑up questions and assumed patients’ self‑diagnoses were correct, for example treating “pregnancy sciatica” as simple nerve pain while ignoring the possibility of preterm labor. Others left out key “red flag” warnings, such as when a miscarriage requires urgent care, or which symptoms after swallowing a coin point to a true emergency like a button battery lodged in the esophagus. Some advice treated all readers as interchangeable, recommending diet changes or supplements that would be dangerous for people with kidney disease or other conditions. While not every patient would be harmed, the physicians emphasized that even a small percentage of such failures scales to millions of unsafe answers when tens of millions of people ask medical questions each month.
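The scaling argument comes down to simple multiplication. In this Python sketch, the monthly volumes of 20 and 50 million questions are hypothetical stand-ins for "tens of millions", and applying the study's 5% to 13% unsafe rates to real-world traffic is itself an assumption, not a finding of the paper.

```python
# Illustrative scaling of a per-answer unsafe rate to a monthly question volume.
# Hypothetical assumptions, for illustration only:
#   - the study's unsafe rates (5% to 13%) carry over to real-world questions,
#   - each question produces exactly one answer,
#   - 20 and 50 million questions per month stand in for "tens of millions".
UNSAFE_RATES = (0.05, 0.13)
MONTHLY_QUESTIONS = (20_000_000, 50_000_000)


def expected_unsafe(volume: int, rate: float) -> int:
    """Expected number of unsafe answers if each answer is unsafe at `rate`."""
    return round(volume * rate)


for volume in MONTHLY_QUESTIONS:
    for rate in UNSAFE_RATES:
        print(
            f"{volume:>11,} questions at {rate:.0%} unsafe -> "
            f"{expected_unsafe(volume, rate):>9,} unsafe answers per month"
        )
```

Under these assumptions, even the lowest combination works out to about a million unsafe answers a month.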

What this means for the future of AI health helpers

The authors conclude that today’s general‑purpose chatbots are not ready to act as unsupervised online doctors. Even the best‑performing system in the study still produced unsafe advice often enough to be worrisome at population scale, and all four showed recurring blind spots in basic clinical reasoning and history‑taking. Yet the study is not purely pessimistic. The team argues that with better training, safety checks, and designs that force models to ask clarifying questions, AI could eventually become a powerful “doctor in your pocket” that helps people understand their health without replacing real clinicians. Until then, chatbot answers should be treated as conversation starters, not as final medical decisions. Patients and health systems alike must recognize both the promise and the very real risks of this new way of seeking care.

Citation: Draelos, R.L., Afreen, S., Blasko, B. et al. Large language models provide unsafe answers to patient-posed medical questions. npj Digit. Med. 9, 241 (2026). https://doi.org/10.1038/s41746-026-02428-5

Keywords: medical chatbots, patient safety, artificial intelligence in healthcare, large language models, online health advice