Clear Sky Science

Anatomy-guided visual prompt tuning for cross-modal breast cancer understanding

Smarter Screening for a Common Cancer

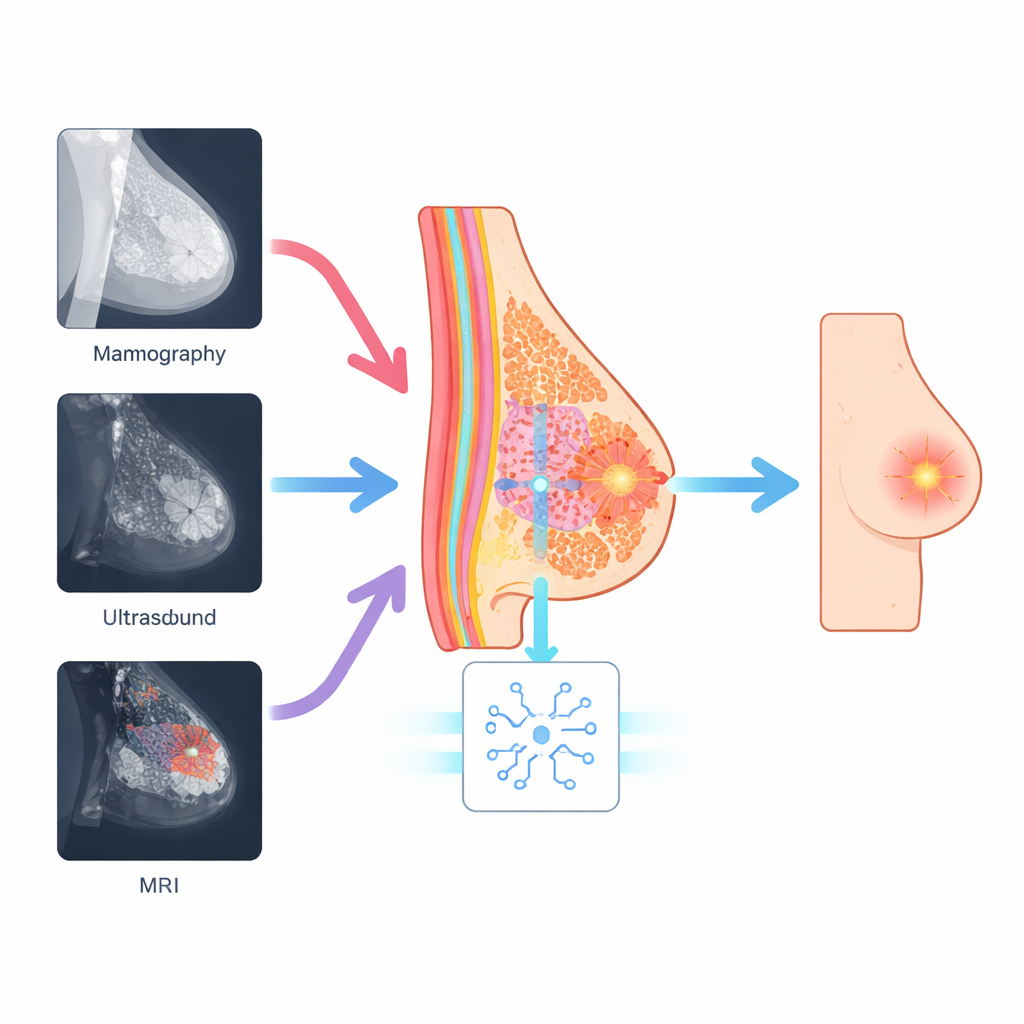

Breast cancer is one of the leading causes of cancer death in women, and doctors increasingly rely on computer programs to help read complex medical images. But mammograms, ultrasound scans, and MRI pictures all show the breast in very different ways, making it hard for current artificial intelligence systems to stay reliable across machines and hospitals. This study introduces a new AI approach that "thinks" about the underlying breast anatomy rather than just the brightness patterns in each image, leading to more accurate and more consistent detection of suspicious areas.

Why Different Scans Confuse Computers

Mammography, ultrasound, and MRI each use different physics to look inside the breast. A cancer that appears as a bright speckle on an ultrasound image might show up as a subtle shadow on a mammogram or as a glowing patch on MRI. Many modern AI systems, including powerful vision transformers and vision–language models, learn mainly from overall image appearance. They often miss tiny but important details like microcalcifications or irregular borders, and their performance can drop sharply when moved from one type of scanner or hospital to another. This gap between training conditions and real-world clinics has limited the trust doctors can place in such tools.

Using the Breast Itself as a Guide

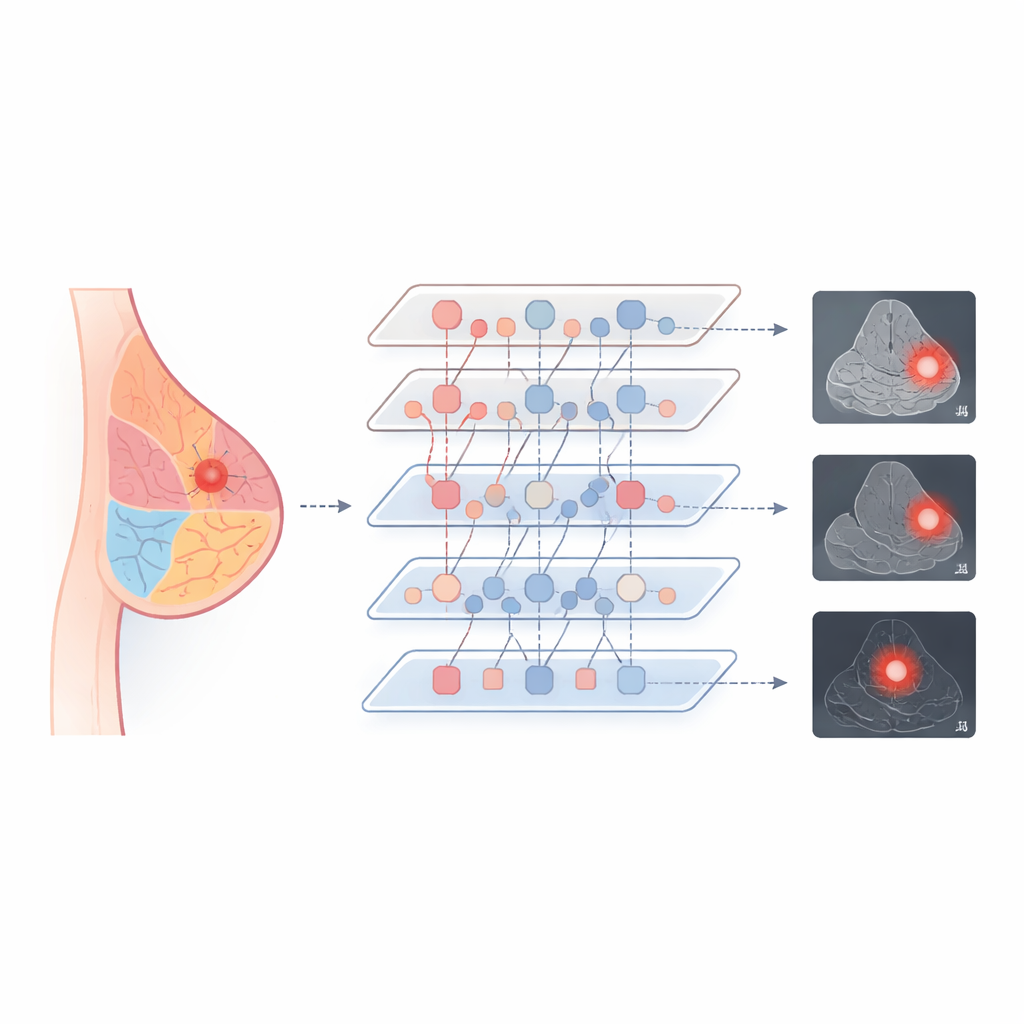

The researchers argue that, even though the pictures look different, the actual biology of the breast does not change between scans. Every image still contains glandular tissue, fat, and ductal structures arranged in a recognizable pattern. Their method, called Anatomy-Guided Visual Prompt Tuning (A-VPT), builds this basic map of the breast directly into the AI model. Instead of adjusting millions of internal weights, the system adds a small set of extra "prompt" signals that tell the network which tissue regions it is looking at. These prompts are generated from coarse anatomical maps or learned tissue cues and then injected layer by layer into a frozen, pre-trained transformer. In effect, the model is constantly reminded where the ducts, glands, and fat are, so it can judge suspicious areas in the right context.
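The layer-by-layer prompt injection described above can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the authors' implementation: the `frozen_layer` stand-in, the three-class anatomy map, and the rule that scales each tissue's prompt by its share of the map are all hypothetical choices made to keep the example small.

```python
import random

TISSUES = ("fat", "gland", "duct")  # hypothetical tissue classes

def tissue_fractions(anatomy_map):
    """Share of pixels carrying each tissue label in a coarse 2-D label map."""
    flat = [label for row in anatomy_map for label in row]
    return [flat.count(i) / len(flat) for i in range(len(TISSUES))]

def make_prompts(fractions, prompt_table):
    """One prompt per tissue: its trainable embedding scaled by the tissue share (assumed rule)."""
    return [[frac * w for w in emb] for frac, emb in zip(fractions, prompt_table)]

def frozen_layer(tokens):
    """Stand-in for a frozen transformer block: mixes every token with the sequence mean."""
    dim = len(tokens[0])
    mean = [sum(t[d] for t in tokens) / len(tokens) for d in range(dim)]
    return [[0.5 * t[d] + 0.5 * mean[d] for d in range(dim)] for t in tokens]

def forward(patch_tokens, anatomy_map, prompt_tables):
    """Prepend fresh anatomy prompts before each layer and drop them afterwards."""
    fractions = tissue_fractions(anatomy_map)
    tokens = patch_tokens
    for table in prompt_tables:          # one small trainable table per layer
        prompts = make_prompts(fractions, table)
        mixed = frozen_layer(prompts + tokens)
        tokens = mixed[len(prompts):]    # keep only the image patch tokens
    return tokens

random.seed(0)
dim, n_layers = 4, 3
patches = [[random.random() for _ in range(dim)] for _ in range(6)]
anatomy = [[0, 0, 1], [0, 1, 2]]         # 0 = fat, 1 = gland, 2 = duct
tables = [[[random.gauss(0, 1) for _ in range(dim)] for _ in TISSUES]
          for _ in range(n_layers)]
out = forward(patches, anatomy, tables)
print(len(out), len(out[0]))
```

Only the entries in `tables` would be trained in this sketch. The layer itself never changes, which is the sense in which the backbone stays frozen.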

Teaching One System to Speak Many Imaging Languages

To make the model work across imaging types, the team designed a training scheme that forces the AI to treat similar tissues similarly, no matter how they are scanned. They align the internal representations, or "fingerprints," of fatty, glandular, and ductal regions taken from mammography, ultrasound, and MRI, nudging them together in a shared space. Where text reports are available, the system also links these tissue patterns to short descriptive phrases, tying visual features to medical language. During processing, specialized interaction modules let the anatomy prompts and the image features exchange information in both directions, with a gating step that controls how strongly anatomy influences each layer. This combination helps the model focus on the right structures while staying stable and efficient.
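As a rough illustration of the two ideas in this section, the sketch below pairs a contrastive alignment loss with a simple gate. This summary does not name the exact loss or gate the authors use, so the InfoNCE-style formulation, the sigmoid gate, the `temperature` value, and the toy tissue vectors are all assumptions.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def alignment_loss(protos_a, protos_b, temperature=0.1):
    """InfoNCE-style loss: tissue i in modality A should be closest to tissue i in modality B."""
    total = 0.0
    for i, anchor in enumerate(protos_a):
        logits = [cosine(anchor, other) / temperature for other in protos_b]
        log_norm = math.log(sum(math.exp(x) for x in logits))
        total += log_norm - logits[i]    # negative log-probability of the matching tissue
    return total / len(protos_a)

def gated_update(feature, anatomy_signal, gate_logit):
    """Add anatomy information to a feature; a sigmoid gate sets how strongly."""
    gate = 1.0 / (1.0 + math.exp(-gate_logit))
    return [f + gate * a for f, a in zip(feature, anatomy_signal)]

# Toy tissue "fingerprints" (fat, gland, duct), hypothetical values.
mammo = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
us_aligned = [[0.9, 0.1, 0.0], [0.1, 0.9, 0.0], [0.0, 0.1, 0.9]]
us_shuffled = [us_aligned[1], us_aligned[2], us_aligned[0]]

print(alignment_loss(mammo, us_aligned) < alignment_loss(mammo, us_shuffled))
print(gated_update([1.0, 2.0], [2.0, 2.0], gate_logit=0.0))
```

The loss is low when the same tissue looks alike across the two scan types and high when the pairing is scrambled, which is the pressure that nudges the fingerprints together. A very negative `gate_logit` would switch the anatomy signal almost entirely off for that layer.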

Better Accuracy with a Lighter Touch

The authors tested A-VPT on three well-known breast imaging collections covering all three modalities. Compared with traditional deep networks and several popular ways of fine‑tuning large models, their method achieved the highest scores both for classifying lesions as benign or malignant and for outlining their boundaries. It did particularly well when asked to use knowledge from one scan type to interpret another (for example, training on mammograms and then evaluating on ultrasound), a setting where older methods often stumbled. A-VPT reached these results while updating less than 2% of the model’s parameters, which reduces computing needs and makes it easier to deploy in real hospitals. Visualizations of where the model "looked" showed that it concentrated on realistic glandular and peritumor regions, suggesting that its decisions are consistent with how radiologists read these images.
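To give a feel for what "less than 2% of the parameters" means, here is a back-of-the-envelope calculation. Every number in it is an illustrative assumption, not a figure from the paper: a frozen backbone of roughly 86 million parameters (about the size of a ViT-Base model), ten prompt vectors per layer, and a hypothetical budget for the interaction modules.

```python
def trainable_fraction(frozen_params, trainable_params):
    """Fraction of all parameters that are updated during training."""
    return trainable_params / (frozen_params + trainable_params)

# All values below are illustrative assumptions, not numbers reported by the authors.
backbone = 86_000_000          # frozen backbone, roughly ViT-Base sized
layers, prompts_per_layer, width = 12, 10, 768
prompt_params = layers * prompts_per_layer * width
interaction_params = 1_200_000  # hypothetical size of the gated interaction modules

fraction = trainable_fraction(backbone, prompt_params + interaction_params)
print(f"prompt parameters: {prompt_params:,}")
print(f"trainable share:   {fraction:.2%}")
```

Under these assumptions the prompts themselves are a tiny part of the total, and most of the trainable budget would sit in the added modules.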

What This Means for Patients and Clinics

In plain terms, this work shows that teaching AI systems about basic anatomy can make them both smarter and more understandable. By anchoring its reasoning to the real structure of the breast, A-VPT is better at spotting and outlining tumors across different imaging methods, while adjusting far fewer model parameters and behaving more transparently. If further validated, this strategy could support more consistent screening and diagnosis in diverse settings, from large medical centers to smaller clinics, and could be extended to other organs such as the lung or liver. Ultimately, anatomy-aware AI may become a key partner in earlier and more reliable cancer detection.

Citation: Zhao, S., Meng, Q., He, Y. et al. Anatomy-guided visual prompt tuning for cross-modal breast cancer understanding. npj Digit. Med. 9, 240 (2026). https://doi.org/10.1038/s41746-026-02417-8

Keywords: breast cancer imaging, medical AI, vision transformers, cross-modal learning, anatomy-guided prompts