Clear Sky Science

Independent and collaborative performance of large language models and healthcare professionals in diagnosis and triage

Why this matters for your next doctor visit

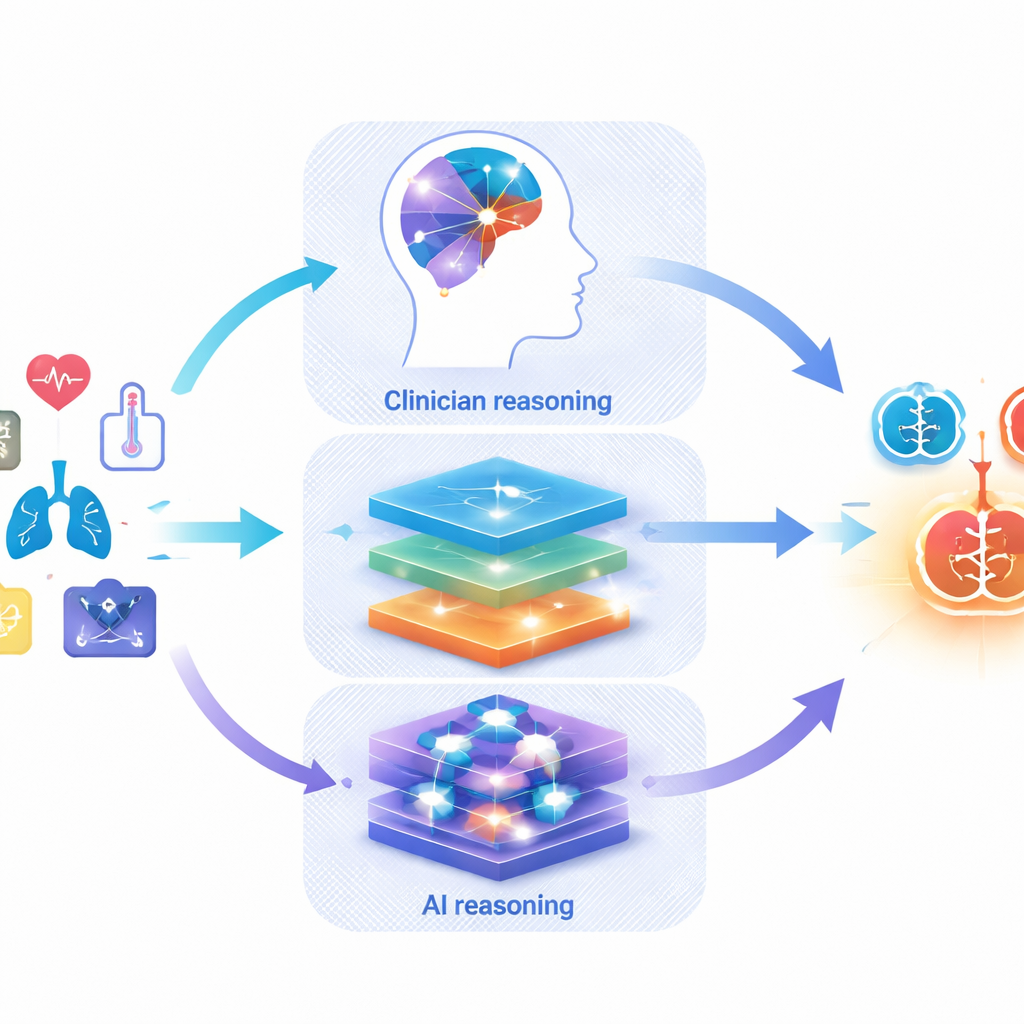

When you type your symptoms into an online chatbot or ask an AI app what might be wrong, you are using the same kind of technology that doctors are now testing in hospitals: large language models, or LLMs. This study asks a simple but vital question: how well do these tools actually diagnose illness and decide how urgent a case is, compared with real healthcare professionals, and what happens when the two work together?

How the researchers took a big-picture look

The authors did not test a single chatbot in one clinic. Instead, they combined evidence from 50 separate studies carried out around the world between 2020 and 2025. These studies covered many specialties, from eye disease and brain scans to emergency care. In each one, doctors and one or more LLMs were given the same descriptions of real or carefully designed patient cases. The LLMs had to suggest possible diagnoses or decide how quickly a patient needed care, while the doctors did the same. In some studies, doctors were also shown the AI’s suggestions to see whether this helped them perform better.

How good are AI systems on their own?

Across all studies, the AI tools could often place the right diagnosis somewhere on their list, but they still usually fell short of doctors when forced to pick just one answer. When only the top guess was counted, LLMs were about 11% less accurate than healthcare professionals on average. As the list of allowed guesses grew longer, that gap shrank and eventually disappeared—by the time ten possible diagnoses were allowed, the AI systems were at least as likely as doctors to include the correct one. For triage decisions—judging how urgent a case was and what level of care was needed—AI and humans performed similarly overall. However, results varied widely across individual models and testing setups, hinting that some tools are much more dependable than others.
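To make the idea of "counting only the top guess" versus "allowing a longer list" concrete, here is a minimal Python sketch of how such a top-k accuracy score can be computed. It is an illustration only, not the authors' analysis code: the function name `top_k_accuracy`, the toy cases, and the diagnoses are all hypothetical and invented for this example.

```python
from typing import Sequence


def top_k_accuracy(
    ranked_lists: Sequence[Sequence[str]],
    truths: Sequence[str],
    k: int,
) -> float:
    """Fraction of cases whose correct diagnosis appears among the first k guesses."""
    if k < 1:
        raise ValueError("k must be at least 1")
    if len(ranked_lists) != len(truths):
        raise ValueError("need exactly one ranked list per case")
    if not truths:
        raise ValueError("need at least one case")
    # A case counts as a hit if the true diagnosis is anywhere in the top k.
    hits = sum(truth in guesses[:k] for guesses, truth in zip(ranked_lists, truths))
    return hits / len(truths)


# Hypothetical toy data: each inner list is one ranked differential diagnosis.
cases = [
    ["migraine", "tension headache", "sinusitis"],
    ["gastritis", "appendicitis", "gastroenteritis"],
    ["asthma", "bronchitis", "pneumonia"],
    ["anemia", "hypothyroidism", "depression"],
]
truths = ["migraine", "appendicitis", "pneumonia", "diabetes"]

for k in (1, 2, 3):
    print(f"top-{k} accuracy: {top_k_accuracy(cases, truths, k):.2f}")
```

In this toy example the score rises from 0.25 to 0.50 to 0.75 as the list grows from one to three guesses, which mirrors the general pattern described above: a longer list gives more chances to include the correct answer, so it can narrow or close a gap that is visible when only the top guess counts.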

What happens when doctors use AI as a teammate?

Nine studies looked directly at collaboration: doctors first worked alone and then repeated the task with help from an LLM. Here the news was encouraging. When supported by AI, doctors were more accurate overall, especially when allowed to give several possible diagnoses. For example, with help from an LLM, their accuracy for short candidate lists improved by around 10–40%, depending on how many options were considered. This suggests that AI is particularly useful as a brainstorming partner that widens the set of possibilities and nudges clinicians to consider less obvious conditions, while the human expert still makes the final call.

Why today’s results may look better than real life

Even though the numbers sound promising, the authors warn that most existing studies are far from perfect. Many relied on neat, textbook-style case summaries or rare cases chosen for teaching, not the messy, incomplete stories that patients present with in real clinics. Only a handful tested the tools on real patients in real time. Details about how cases were selected, how the AI tools were configured, and how answers were judged were often missing. Visual information such as scans or skin photos was used less often, and when images alone were tested, experienced clinicians clearly outperformed the AI. The researchers also highlight that junior clinicians and experts may respond differently to AI advice, and that issues like data privacy, hidden bias, and over-trust in machine suggestions remain largely untested in day-to-day practice.

What this means for patients and the future of care

Overall, the study suggests that current chatbots and LLMs are not ready to replace your doctor, but they may soon become valuable assistants. Used wisely, they can help generate broader lists of possible diagnoses and support more accurate decision-making, especially when doctors remain in charge and treat AI as a second opinion rather than a final verdict. Before these tools are woven into routine care, however, the authors argue that we need better-designed, real-world trials, clearer reporting standards, and strong safeguards around safety, fairness, and privacy. For patients, this means that AI may eventually help your care team think more broadly and act faster, but any trustworthy system must be tested just as rigorously as a new drug or medical device.

Citation: Chen, M., Wu, Y., Ma, J. et al. Independent and collaborative performance of large language models and healthcare professionals in diagnosis and triage. npj Digit. Med. 9, 222 (2026). https://doi.org/10.1038/s41746-026-02409-8

Keywords: medical diagnosis AI, clinical triage, large language models, doctor AI collaboration, digital health safety