Clear Sky Science

CFG-MambaNet: Contextual and Frequency-Guided Mamba Network for medical image segmentation

Why clearer medical images matter

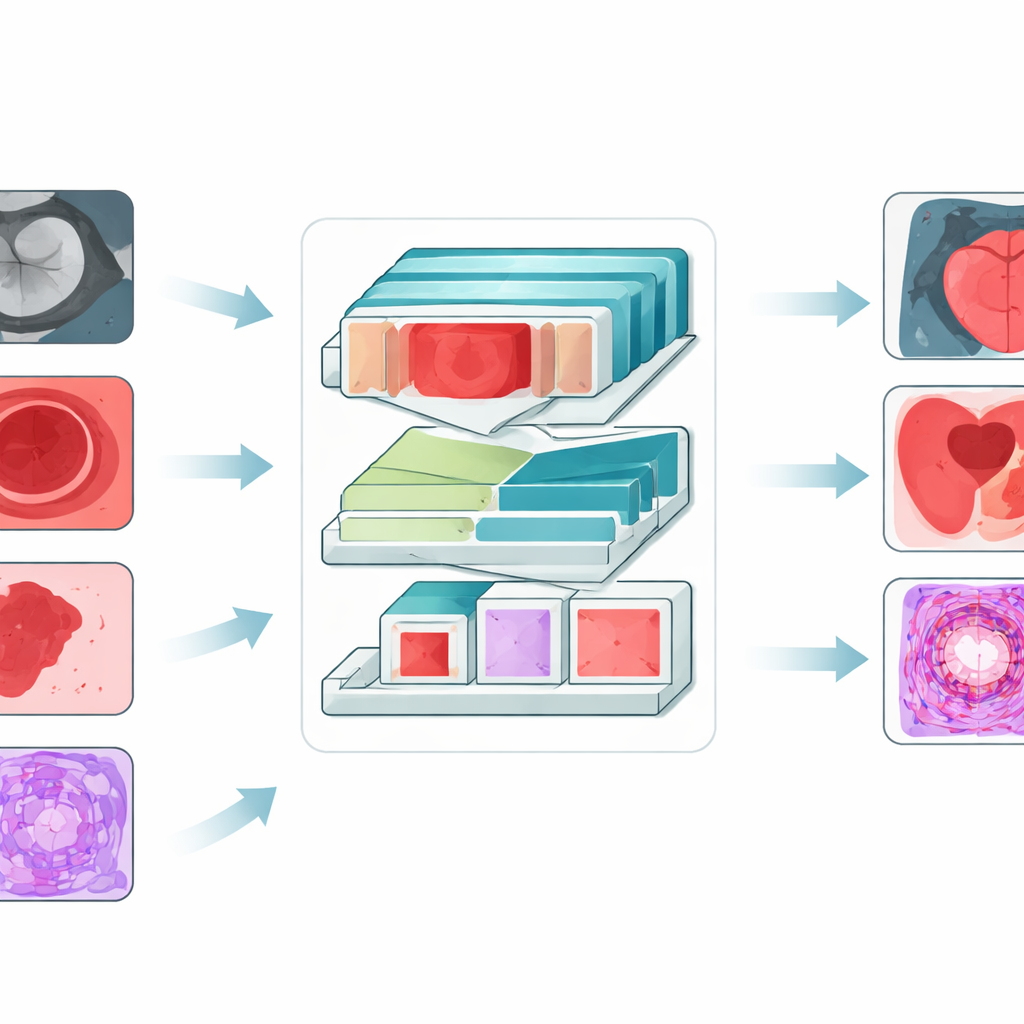

When doctors examine heart scans, colonoscopies, skin photos, or tissue slides, they often need a computer’s help to outline exactly where a tumor, organ, or suspicious spot begins and ends. This outlining step, called segmentation, underpins diagnosis, treatment planning, and even decisions about surgery. The paper introduces CFG‑MambaNet, a new artificial intelligence (AI) system designed to draw these boundaries more accurately and more reliably across many kinds of medical images.

The challenge of drawing precise borders

Modern AI tools can already label medical images, but they stumble in tricky situations that are common in real clinics. Convolutional networks, for instance, examine only small neighborhoods of pixels at a time, so they can miss the bigger picture. Transformer-based models can relate every part of an image to every other part, but their computing cost grows roughly with the square of the number of image patches, which makes them hard to use on large, detailed scans. Many methods also struggle when the region of interest is faint, blurred, very small, or oddly shaped. As a result, existing systems may cut off part of a heart wall, misjudge the size of a polyp in the colon, or overlook the thin edge of a skin lesion. Such errors can ripple into incorrect measurements or delayed diagnosis.

A new way for AI to see the whole picture

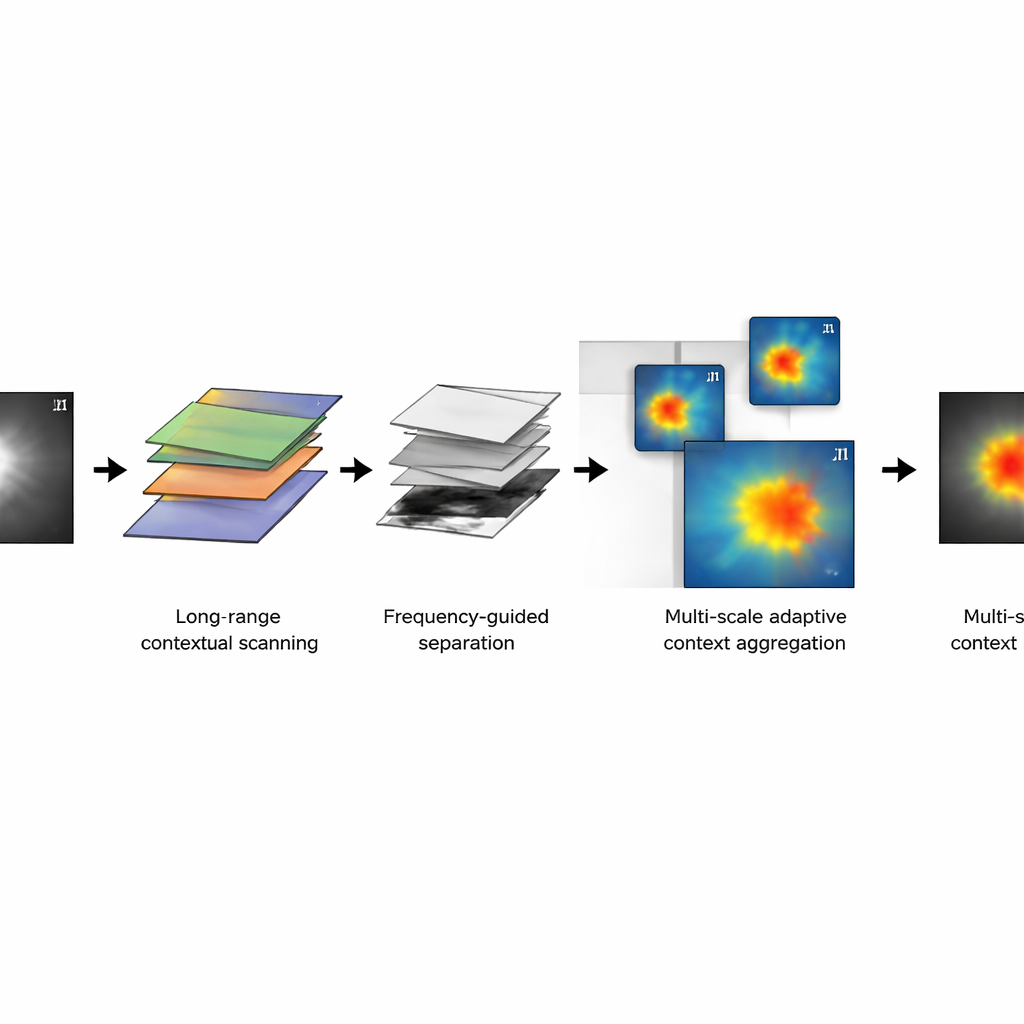

CFG‑MambaNet tackles these problems by rethinking how an AI network "looks" at an image. At its core is a visual state space block based on a recent architecture called Mamba. Many Transformer-based models compare every pixel (or image patch) with every other one, a step whose cost grows quadratically as images get larger. The Mamba-based block instead scans across the image in an ordered way, carrying a compact running summary that keeps track of long-range patterns while its cost grows only linearly with image size. This lets the network understand how distant parts of an image relate to each other, such as the full shape of a ventricle in a heart scan, without slowing to a crawl on high‑resolution data.
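To make the idea of an ordered scan concrete, here is a minimal Python sketch, assuming a toy linear state space recurrence with fixed, hypothetical constants `a`, `b` and `c`. Mamba itself computes these parameters from the input at every step, and visual Mamba blocks typically scan along several directions; the hypothetical helper `four_way_scan` only loosely imitates that and is not the authors' implementation.

```python
import numpy as np

def ssm_scan(x, a=0.9, b=1.0, c=1.0):
    # Toy linear state space recurrence: h_t = a*h_(t-1) + b*x_t, y_t = c*h_t.
    # a, b, c are fixed, hypothetical constants; Mamba instead computes them
    # from the input at every step (the "selective" part).
    h = 0.0
    y = np.empty(len(x), dtype=float)
    for t, xt in enumerate(x):
        h = a * h + b * xt  # fold the new pixel into the running summary
        y[t] = c * h        # y[t] reflects every pixel seen so far
    return y

def four_way_scan(img, **kw):
    # Scan the flattened image in four orders (rows forward/backward,
    # columns forward/backward), then add the results on the 2-D grid.
    H, W = img.shape
    rows = img.reshape(-1)    # row-major pixel order
    cols = img.T.reshape(-1)  # column-major pixel order
    out = ssm_scan(rows, **kw).reshape(H, W)
    out += ssm_scan(rows[::-1], **kw)[::-1].reshape(H, W)
    out += ssm_scan(cols, **kw).reshape(W, H).T
    out += ssm_scan(cols[::-1], **kw)[::-1].reshape(W, H).T
    return out

img = np.zeros((4, 4))
img[0, 0] = 1.0               # one bright pixel in the top-left corner
out = four_way_scan(img)
print(out[3, 3] > 0)          # True: its influence reaches the far corner
```

Each pixel is visited once per direction, so a 256 × 256 image (65,536 pixels) takes 65,536 steps per scan, whereas comparing every pixel with every other pixel would involve about 4.3 billion pairs. Because `a` is below 1 here, a pixel's influence fades with distance; an input-dependent version lets the model decide what to keep in its summary.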

Separating overall shape from fine details

A second idea in CFG‑MambaNet is to treat each image a bit like a piece of music, with low notes and high notes. In the frequency‑guided representation module, the network splits the image’s information into low-frequency components (smooth, slowly changing values that capture overall organ shape) and high-frequency components (rapid changes that capture edges and textures). By adjusting these two parts separately and then recombining them, the system can sharpen fuzzy borders while still keeping the larger structure correct. This is especially useful for lesions whose edges fade into the background, such as some skin spots or subtle tissue changes in pathology slides.
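The low-note/high-note split can be illustrated with a Fourier transform. The sketch below assumes an ideal circular low-pass filter with a hypothetical `radius` and fixed, hypothetical gains; the paper's module may use a different decomposition and learns how to adjust each part rather than using fixed numbers.

```python
import numpy as np

def frequency_split(img, radius):
    # Centre the 2-D Fourier spectrum so the zero frequency sits in the middle.
    H, W = img.shape
    spec = np.fft.fftshift(np.fft.fft2(img))
    yy, xx = np.ogrid[:H, :W]
    dist = np.sqrt((yy - H // 2) ** 2 + (xx - W // 2) ** 2)
    # Keep only frequencies within `radius` of the centre: the smooth part.
    low = np.real(np.fft.ifft2(np.fft.ifftshift(spec * (dist <= radius))))
    high = img - low  # whatever the low-pass filter removed: edges, texture
    return low, high

def reweight(img, radius=4, low_gain=1.0, high_gain=1.5):
    # Hypothetical fixed gains; a learned module would predict them instead.
    low, high = frequency_split(img, radius)
    return low_gain * low + high_gain * high

img = np.zeros((32, 32))
img[8:24, 8:24] = 1.0  # a bright square with sharp borders
low, high = frequency_split(img, radius=4)
sharpened = reweight(img, high_gain=2.0)
```

With `low_gain=1`, the recombined image equals `img + (high_gain - 1) * high`, a classic unsharp-masking style of sharpening: the square's overall shape comes from `low`, while its borders are emphasized through `high`.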

Adapting to tiny spots and large structures

Medical images often mix very large and very small structures: a full heart and a thin heart wall, a wide colon view and a tiny polyp. CFG‑MambaNet includes a multi‑scale adaptive context aggregation module that looks at each scene through several "zoom levels" at once. One branch focuses on broad background structure, another flexibly follows irregular shapes, and a third captures mid‑range patterns. The network then learns how much to trust each zoom level in different situations, highlighting the regions that matter most. Two training techniques further stabilize learning and refine boundaries: a combined loss function that balances region accuracy against edge sharpness, and supervision at several depths of the network (often called deep supervision), so that intermediate layers are also checked against the correct outline.
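The zoom-level weighting and the training objective can be sketched as follows, under clearly stated assumptions: the three branches are plain mean filters of hypothetical sizes 1, 3 and 7 standing in for the paper's more elaborate branches, the per-pixel branch scores (`logits`) are supplied by hand rather than learned, and the combined loss pairs a soft Dice term (region) with boundary-weighted cross-entropy (edges). The authors' exact loss terms and weights are not given in this summary, so `edge_weight` and the per-depth weights are hypothetical.

```python
import numpy as np

def box_blur(x, k):
    # k x k mean filter (k odd) with edge padding: a crude "zoom level".
    p = k // 2
    xp = np.pad(x, p, mode="edge")
    out = np.zeros(x.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += xp[dy:dy + x.shape[0], dx:dx + x.shape[1]]
    return out / (k * k)

def fuse_branches(x, logits, sizes=(1, 3, 7)):
    # logits has shape (len(sizes), H, W): one score map per branch, which a
    # trained network would produce. A per-pixel softmax turns the scores
    # into weights that sum to 1, i.e. "how much to trust" each zoom level.
    e = np.exp(logits - logits.max(axis=0, keepdims=True))
    w = e / e.sum(axis=0, keepdims=True)
    branches = np.stack([box_blur(x, k) for k in sizes])
    return (w * branches).sum(axis=0), w

def boundary_mask(t):
    # A pixel lies on the boundary if any 4-neighbour has a different label.
    tp = np.pad(t, 1, mode="edge")
    c = tp[1:-1, 1:-1]
    return ((tp[:-2, 1:-1] != c) | (tp[2:, 1:-1] != c) |
            (tp[1:-1, :-2] != c) | (tp[1:-1, 2:] != c))

def combined_loss(prob, target, edge_weight=5.0, eps=1e-6):
    # Region term: soft Dice loss, 0 when prediction and target overlap fully.
    inter = (prob * target).sum()
    dice_loss = 1 - (2 * inter + eps) / (prob.sum() + target.sum() + eps)
    # Edge term: binary cross-entropy with extra weight on boundary pixels.
    p = np.clip(prob, eps, 1 - eps)
    bce = -(target * np.log(p) + (1 - target) * np.log(1 - p))
    w = 1 + edge_weight * boundary_mask(target)
    return dice_loss + (w * bce).sum() / w.sum()

def deep_supervision_loss(side_probs, target, weights):
    # Add up the loss on predictions taken at several depths (each already
    # resized to the target's shape), scaled by per-depth weights.
    return sum(wt * combined_loss(p, target) for wt, p in zip(weights, side_probs))

target = np.zeros((16, 16))
target[4:12, 4:12] = 1.0
fused, w = fuse_branches(target, np.zeros((3, 16, 16)))  # equal trust in all branches
loss = deep_supervision_loss([target, fused], target, weights=[1.0, 0.5])
```

The softmax keeps the branch weights positive and summing to one at every pixel, so the network can shift attention between zoom levels from place to place rather than using one global mix.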

Proven gains across four types of medical images

To test CFG‑MambaNet, the authors evaluated it on four public datasets covering heart MRI scans, colonoscopy images, skin lesion photos, and microscopic pathology slides. In all four settings, the new method outperformed a wide range of leading segmentation models, including classic convolutional networks, Transformer‑based systems, and other Mamba‑style designs. It achieved higher overlap between predicted and true regions, smaller average distance between predicted and actual boundaries, and better sensitivity to hard‑to‑see lesions. This means sharper outlines of heart chambers, more accurate polyp masks in the colon, clearer borders for irregular skin lesions, and more faithful tracing of cancerous tissue under the microscope.
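The two kinds of accuracy described above can be computed with a short sketch. It assumes the widely used Dice coefficient for region overlap and an average symmetric boundary distance in pixels; this summary does not say exactly which metrics or settings the paper reports, so treat these as illustrative. The brute-force distance computation is only suitable for small masks.

```python
import numpy as np

def dice(pred, truth):
    # Overlap score: 2|A ∩ B| / (|A| + |B|); 1.0 means a perfect match.
    pred, truth = pred.astype(bool), truth.astype(bool)
    denom = pred.sum() + truth.sum()
    return 1.0 if denom == 0 else 2.0 * (pred & truth).sum() / denom

def boundary_points(mask):
    # Foreground pixels with at least one 4-neighbour in the background.
    m = np.pad(mask.astype(bool), 1, mode="constant")
    core = m[1:-1, 1:-1]
    interior = m[:-2, 1:-1] & m[2:, 1:-1] & m[1:-1, :-2] & m[1:-1, 2:]
    return np.argwhere(core & ~interior)

def avg_boundary_distance(pred, truth):
    # Average symmetric boundary distance in pixels: for every boundary point
    # of each mask, the distance to the nearest boundary point of the other.
    # Assumes both masks are non-empty.
    a, b = boundary_points(pred), boundary_points(truth)
    d = np.sqrt(((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1))
    return (d.min(axis=1).sum() + d.min(axis=0).sum()) / (len(a) + len(b))

truth = np.zeros((10, 10), dtype=bool)
truth[2:6, 2:6] = True          # 4 x 4 ground-truth region
pred = np.zeros_like(truth)
pred[2:6, 3:7] = True           # same shape, shifted one pixel to the right
print(dice(pred, truth))                   # 0.75
print(avg_boundary_distance(pred, truth))  # 0.5
```

A one-pixel shift already costs a quarter of the Dice score on this tiny 4 × 4 region, which is one reason boundary distances are reported alongside overlap: they express the error directly as "how far off the outline is".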

What this means for future care

In plain terms, CFG‑MambaNet is a smarter, more efficient "outlining assistant" for doctors. By seeing both the big picture and the fine details, and by working well on several very different imaging types, it moves automated segmentation closer to routine clinical use. While more testing in larger, real‑world patient groups is still needed, this approach could ultimately support more reliable measurements, earlier detection of disease, and better planning of treatments across cardiology, gastroenterology, dermatology, and cancer care.

Citation: Ren, G., Chen, Z., Su, P. et al. CFG-MambaNet: Contextual and Frequency-Guided Mamba Network for medical image segmentation. npj Digit. Med. 9, 202 (2026). https://doi.org/10.1038/s41746-026-02393-z

Keywords: medical image segmentation, deep learning, Mamba network, multi-scale imaging, clinical diagnostics