Clear Sky Science

Human–large language model collaboration in clinical medicine: a systematic review and meta-analysis

Why This Matters for Everyday Healthcare

Doctors are increasingly turning to powerful AI chatbots, called large language models, to help them think through complex cases, write notes, and interpret medical tests. This study asks a simple but crucial question: when doctors team up with these tools, do patients actually stand to benefit? By pulling together results from the best available trials, the authors show that the answer is more complicated than the hype suggests—sometimes the partnership helps, sometimes it does little, and in a few situations it may even get in the way.

What the Researchers Looked At

The team systematically searched major medical databases for studies in which clinicians worked either with or without help from an AI system based on large language models such as GPT-4. To be included, a study had to pit a “doctor plus AI” workflow directly against usual care by doctors alone, and sometimes against the AI working on its own. The clinical tasks spanned a range of real problems: figuring out what might be wrong with a critically ill patient, interpreting brain scans, writing and reading clinic notes, and deciding how to manage chest pain and other common complaints. In total, 10 peer‑reviewed trials formed the backbone of the analysis, with a few extra preprints used only to check how robust the conclusions were.

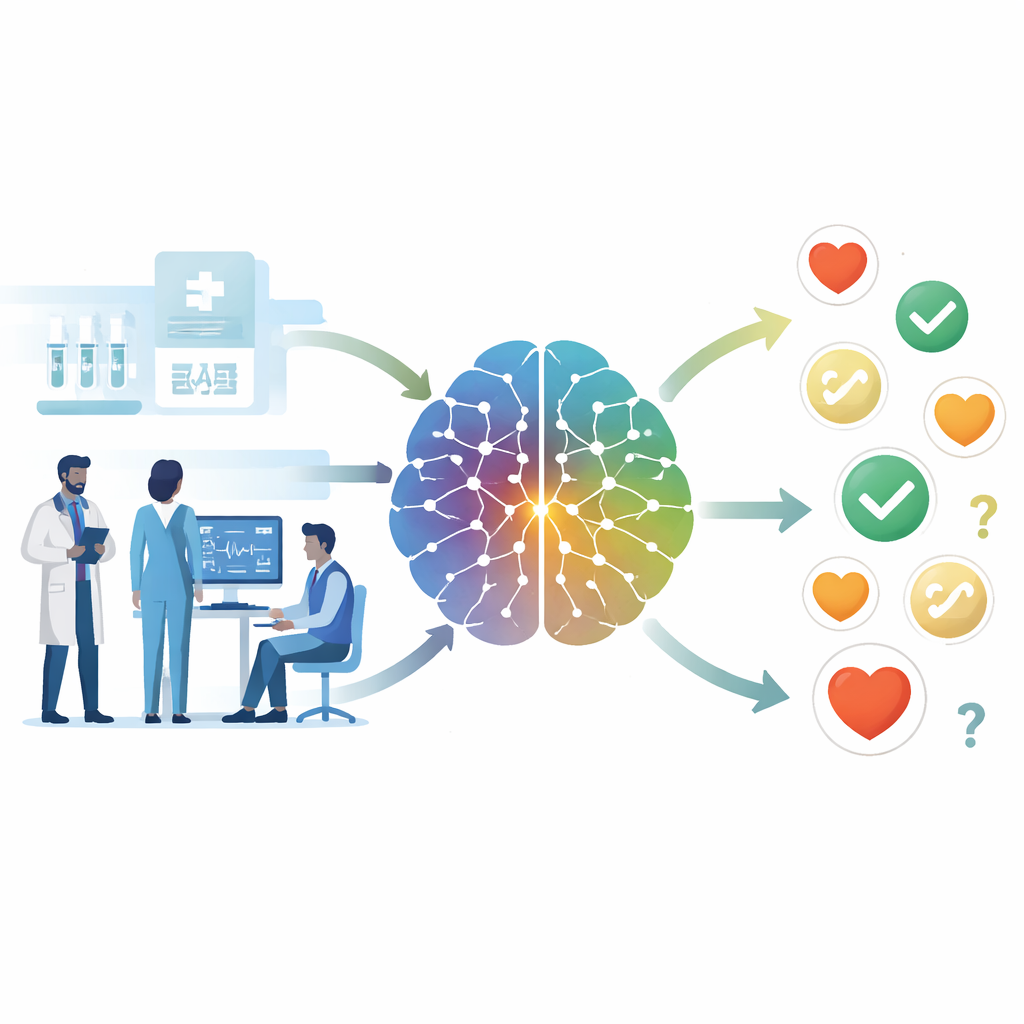

How Well the Doctor–AI Teams Performed

Across these studies, pairing doctors with AI produced small but noticeable improvements in some measures of diagnostic and management quality. In two randomized trials that used detailed scoring systems for case decisions, the doctor–AI teams scored about five percentage points higher than doctors alone. As a rough illustration, if doctors working solo made about 100 key decisions, adding AI might prevent roughly five of those decisions from being wrong. However, the authors stress that the underlying data are thin: only a couple of trials contributed to these estimates, and the range of plausible real‑world results is wide enough to include no benefit, or even harm, in other settings.

Speed, Documentation, and Hidden Errors

Many hope AI will free up doctors’ time. Here, the evidence was underwhelming. When the researchers combined three trials that measured how long tasks took, they found essentially no overall time savings. In some simulated exercises doctors were a little faster with AI; in a real clinic study, the net effect on visit length was almost zero, though some subgroups saw modest gains. Documentation told a mixed story. AI assistance often made notes look clearer and more structured, and it helped non‑specialists better understand technical eye‑care reports. Yet when researchers checked facts, they found that about one in three AI‑supported notes still contained mistakes. This split, with better‑looking records that can still be wrong, raises clear safety concerns.

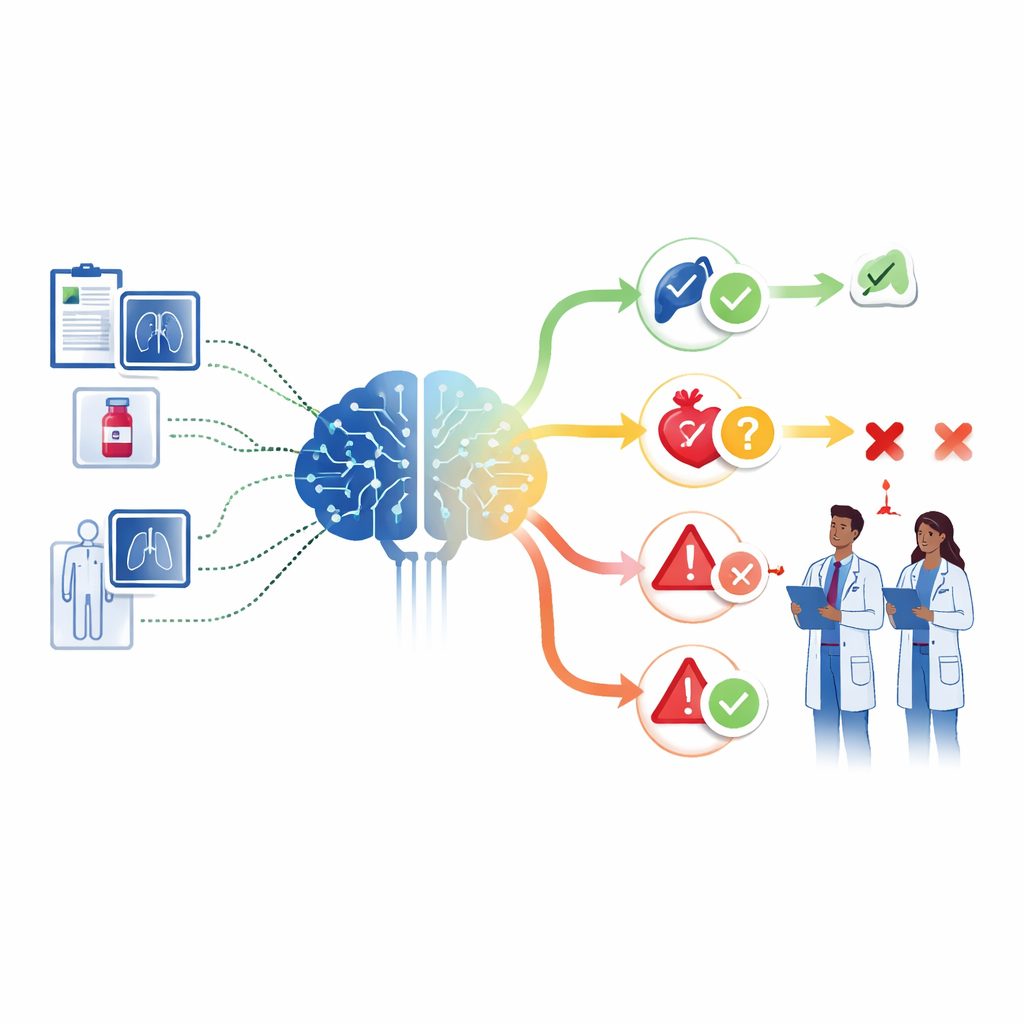

When Teaming Up Fails to Beat the Machine

A striking finding came from trials that also tested the AI alone. In one study of critically ill patients, the AI by itself did about as well as the doctor–AI team, and better than many doctors working solo. In another, AI‑generated test reports were clearly worse than those produced by human experts, whether or not the AI was used as an assistant. Together, these results expose what the authors call a “collaboration paradox”: simply inserting a human into the loop does not guarantee an improvement over a strong AI, and in some situations the partnership can dilute the strengths of both. Factors such as how advice is presented, how much doctors trust or distrust it, and how the tool is embedded in daily workflow all influence whether collaboration helps or hinders.

What This Means for the Future of Doctor–AI Teams

Overall, the review paints a picture of cautious promise rather than a revolution already achieved. Doctor–AI teams can modestly improve certain decision scores and make medical writing easier to read, but they do not reliably save time, and they still generate a worrying number of factual mistakes. The authors argue that health systems should roll out these tools gradually, with strong safeguards that focus on catching errors rather than just boosting efficiency. They also call for larger, real‑world clinical trials that test AI assistance in busy hospitals and clinics, not just in controlled case simulations. Until such evidence arrives, the safest path is to treat large language models as powerful but fallible assistants—and to design workflows where clinicians act as critical reviewers and gatekeepers, not passive acceptors, of AI advice.

Citation: Wang, G., Zhang, K., Jiang, J. et al. Human–large language model collaboration in clinical medicine: a systematic review and meta-analysis. npj Digit. Med. 9, 195 (2026). https://doi.org/10.1038/s41746-026-02382-2

Keywords: human–AI collaboration, clinical decision support, large language models, diagnostic accuracy, medical documentation