Clear Sky Science

Naturalistic facial dynamics enable quantitative clinical assessment of atypical expression phenotypes in children with autism spectrum disorder

Why everyday smiles and frowns matter

Parents, teachers, and clinicians often sense that children with autism express their feelings “differently,” but those differences are hard to put into words or measure. This study shows that ordinary video of children playing and chatting, recorded without any scripted tests, can be turned into detailed, objective measures of how their faces move over time. Those measures could help flag autism earlier and gauge symptom severity more precisely.

Watching real-life moments, not staged tests

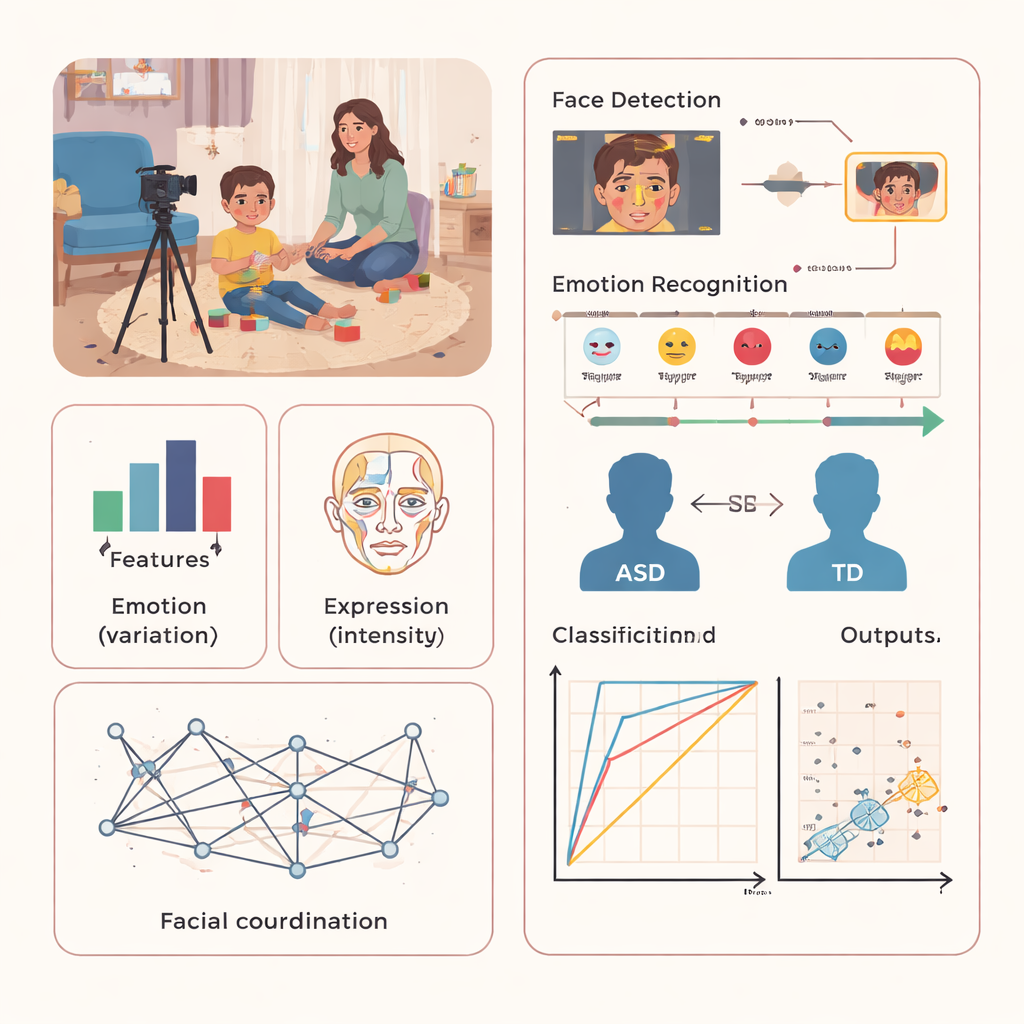

Instead of asking children to imitate faces or react to specific pictures, the researchers recorded 186 youngsters aged three to ten in relaxed, unscripted sessions that felt like home or school. Toys, picture books, and cartoons were available, and an adult simply interacted with each child while a camera captured the child’s face. Ninety-nine children had an autism diagnosis, and 85 were typically developing peers. Parents had already completed standard checklists about autism-related behaviors, giving the team reference scores for how strongly each child was affected.

Turning video into emotion “signatures”

From these videos, computer vision tools automatically found each child’s face in every frame and estimated which of five basic emotions it showed: neutral, happy, surprise, sad, or anger. The team then went beyond simple emotion counts. They measured how emotions changed over time (emotion variation), how strongly different facial muscles were activated (expression intensity), and how well muscles across the face moved together (facial coordination). These three ingredients created a kind of emotional “fingerprint” for each child that captured both big-picture mood swings and tiny, moment-to-moment adjustments in facial movement.
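To make the idea of "emotion variation" concrete, here is a minimal sketch in Python. It assumes each video has already been reduced to one emotion label per frame. The function names (`emotion_proportions`, `transition_probabilities`) and the choice of a simple frame-to-frame transition table are illustrative assumptions, not the study's actual pipeline.

```python
from collections import Counter

# The five emotion categories named in the article.
EMOTIONS = ["neutral", "happy", "surprise", "sad", "anger"]


def emotion_proportions(labels):
    """Share of frames assigned to each emotion (hypothetical helper)."""
    if not labels:
        raise ValueError("need at least one frame label")
    counts = Counter(labels)
    return {emotion: counts[emotion] / len(labels) for emotion in EMOTIONS}


def transition_probabilities(labels):
    """Row-normalised table of frame-to-frame emotion changes.

    probs[src][dst] is the fraction of frames labelled `src` that are
    immediately followed by a frame labelled `dst`. Rows for emotions
    that never have a following frame are left as all zeros.
    """
    pair_counts = Counter(zip(labels, labels[1:]))
    probs = {}
    for src in EMOTIONS:
        row_total = sum(pair_counts[(src, dst)] for dst in EMOTIONS)
        probs[src] = {
            dst: (pair_counts[(src, dst)] / row_total if row_total else 0.0)
            for dst in EMOTIONS
        }
    return probs


if __name__ == "__main__":
    # Made-up label sequence, for illustration only.
    frames = ["neutral", "neutral", "happy", "neutral", "sad", "sad", "anger"]
    print(emotion_proportions(frames))
    print(transition_probabilities(frames)["sad"])
```

A table like this is one simple way to express statements such as "less likely to shift from sad back to neutral" as numbers that can be compared between groups.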

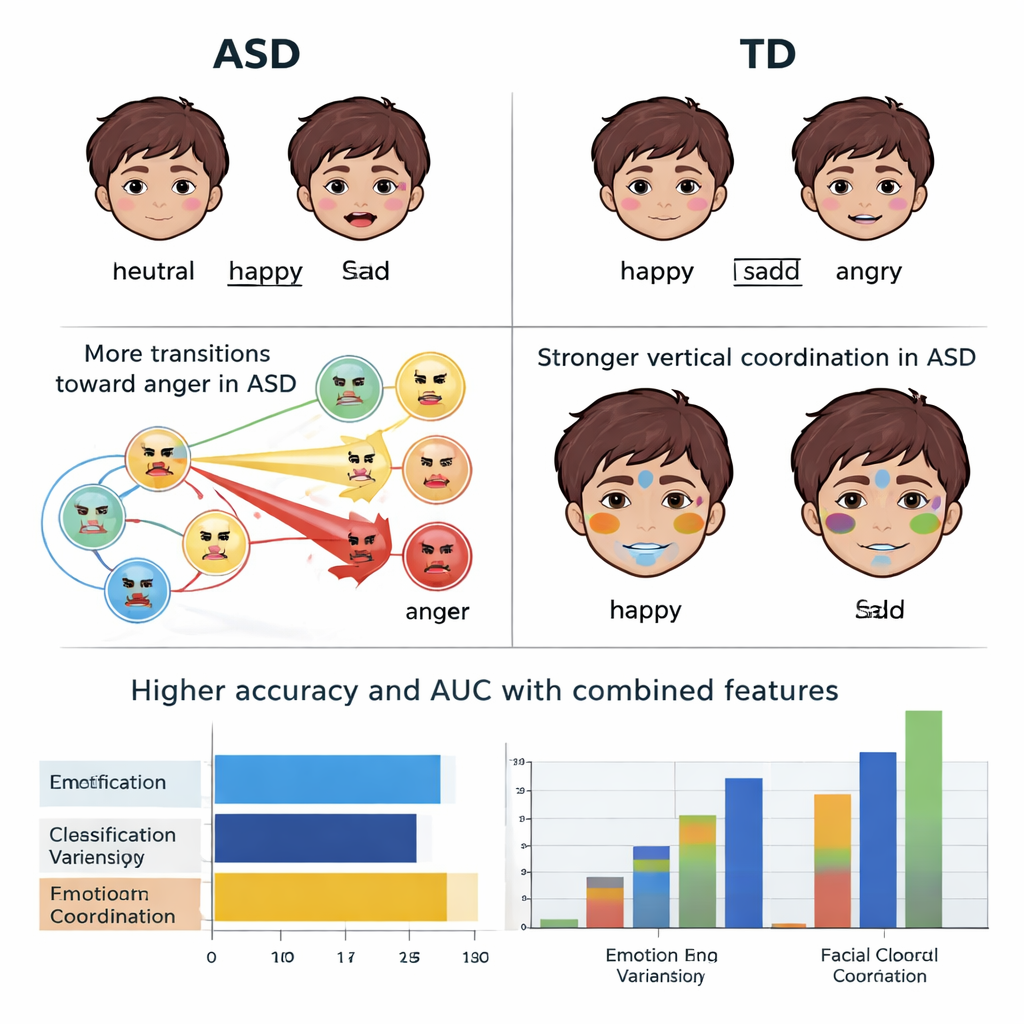

How autistic and non-autistic faces differ

When the researchers compared the two groups, one pattern stood out: anger-like expressions were more prominent and lasted longer in the autistic children, even in a generally friendly setting. The paths between emotions also differed. For example, children with autism were less likely to shift from sad back to neutral, and more likely to move into anger from other feelings. At the muscle level, their expressions tended to be stronger overall, especially in facial areas that are not usually central to a given emotion. This overuse of “non-core” muscles may help explain why their expressions can look unclear or unusual. Coordination across the face was also altered, with stronger coupling between upper and lower facial regions, suggesting that some parts of the face move together in a more rigid, less flexible way.
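The coupling between upper and lower facial regions can be illustrated with a small sketch. The article does not say how coordination was computed, so the Pearson correlation used here is a stand-in chosen for simplicity, and the two input series (per-frame activation intensity for an upper-face and a lower-face region) are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length numeric series.

    Returns 0.0 when either series is constant, since the correlation
    is undefined in that case.
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


if __name__ == "__main__":
    # Made-up intensity traces, for illustration only.
    upper_face = [0.1, 0.4, 0.6, 0.3, 0.2]
    lower_face = [0.2, 0.5, 0.7, 0.4, 0.2]
    print(pearson(upper_face, lower_face))
```

Under this assumption, a value near 1 would mean the two regions rise and fall together almost in lockstep, which is one way to read "more rigid" coupling.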

From subtle movements to screening tools

These detailed facial patterns turned out to be powerful signals. When the computer model used only the broad ups and downs of emotion, it could distinguish autism from typical development with modest accuracy. But when emotion variation was combined with expression intensity and coordination, the system correctly classified children about 92 percent of the time and produced a very high area under the ROC curve (AUC), a standard measure of how well a classifier separates two groups. The same features could also estimate how severe a child’s symptoms were on common parent questionnaires, capturing around 40 percent of the variation in scores. That is a meaningful start, though not perfect.
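For readers curious about the two performance measures mentioned here, the following sketch shows how each can be computed from scratch. It uses the standard definitions of AUC (the chance that a randomly chosen positive case scores higher than a randomly chosen negative one) and of R², the "share of variation explained". The function names and the sample numbers are illustrative and are not taken from the study.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison.

    `labels` holds 1 for the positive group and 0 for the negative
    group; `scores` holds the model's output for each case. Ties
    between a positive and a negative score count as half a win.
    """
    pos = [s for label, s in zip(labels, scores) if label == 1]
    neg = [s for label, s in zip(labels, scores) if label == 0]
    if not pos or not neg:
        raise ValueError("need at least one case from each group")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def r_squared(actual, predicted):
    """Fraction of the variance in `actual` captured by `predicted`."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("series must be non-empty and equal in length")
    mean = sum(actual) / len(actual)
    ss_total = sum((a - mean) ** 2 for a in actual)
    ss_residual = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    if ss_total == 0:
        raise ValueError("actual values are constant; R^2 is undefined")
    return 1.0 - ss_residual / ss_total


if __name__ == "__main__":
    # Made-up values, for illustration only.
    print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))
    print(r_squared([10, 20, 30], [12, 19, 27]))
```

An AUC of 0.5 corresponds to guessing and 1.0 to perfect separation, while an R² of about 0.4 matches the article's "around 40 percent of the variation".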

What this means for families and clinicians

To a layperson, the message is that the “hard-to-describe” facial differences often noticed in children with autism are real, measurable, and surprisingly informative. By quietly analyzing ordinary interactions instead of relying on brief, expert-led tests, this approach may one day support large-scale, low-burden screening in clinics, schools, or even homes. It will not replace full clinical evaluations, but it could help identify children who need them sooner and offer a more objective window into how their emotional expressions differ from those of their peers.

Citation: Du, M., Shi, P., Liu, Z. et al. Naturalistic facial dynamics enable quantitative clinical assessment of atypical expression phenotypes in children with autism spectrum disorder. npj Digit. Med. 9, 183 (2026). https://doi.org/10.1038/s41746-026-02375-1

Keywords: autism spectrum disorder, facial expressions, computer vision, digital health screening, child development