Clear Sky Science

DARE-FUSE: domain aligned evidence guided learning for joint brain tumor MRI segmentation and classification

Why Smarter Brain Scans Matter

Brain tumors are among the most feared diagnoses in medicine, and magnetic resonance imaging (MRI) is the main tool doctors use to see where a tumor begins and ends. Yet even expert radiologists can struggle to precisely outline a tumor and judge how it is changing over time, especially when its edges blur into swollen brain tissue. This paper introduces DARE-FUSE, a new artificial intelligence system designed to read brain MRI scans more reliably, drawing sharper tumor boundaries and offering clearer explanations of its decisions to support surgeons, oncologists, and patients.

Blurry Edges and Busy Clinics

In real hospitals, brain MRI scans are messy. Tumors often blend into surrounding swelling, metal implants can distort the image, and different hospitals use slightly different scanning settings. Radiologists must manually scroll through hundreds of images, mark out the tumor slice by slice, and then decide how it is behaving. That work is time-consuming, tiring, and subject to disagreement between experts. Existing AI tools can help outline tumors or label scans as “tumor” or “no tumor,” but most systems handle these tasks separately, and many falter when the images come from new centers or contain subtle, irregular growth at the margins.

A Unified AI Assistant for Tumor Maps and Labels

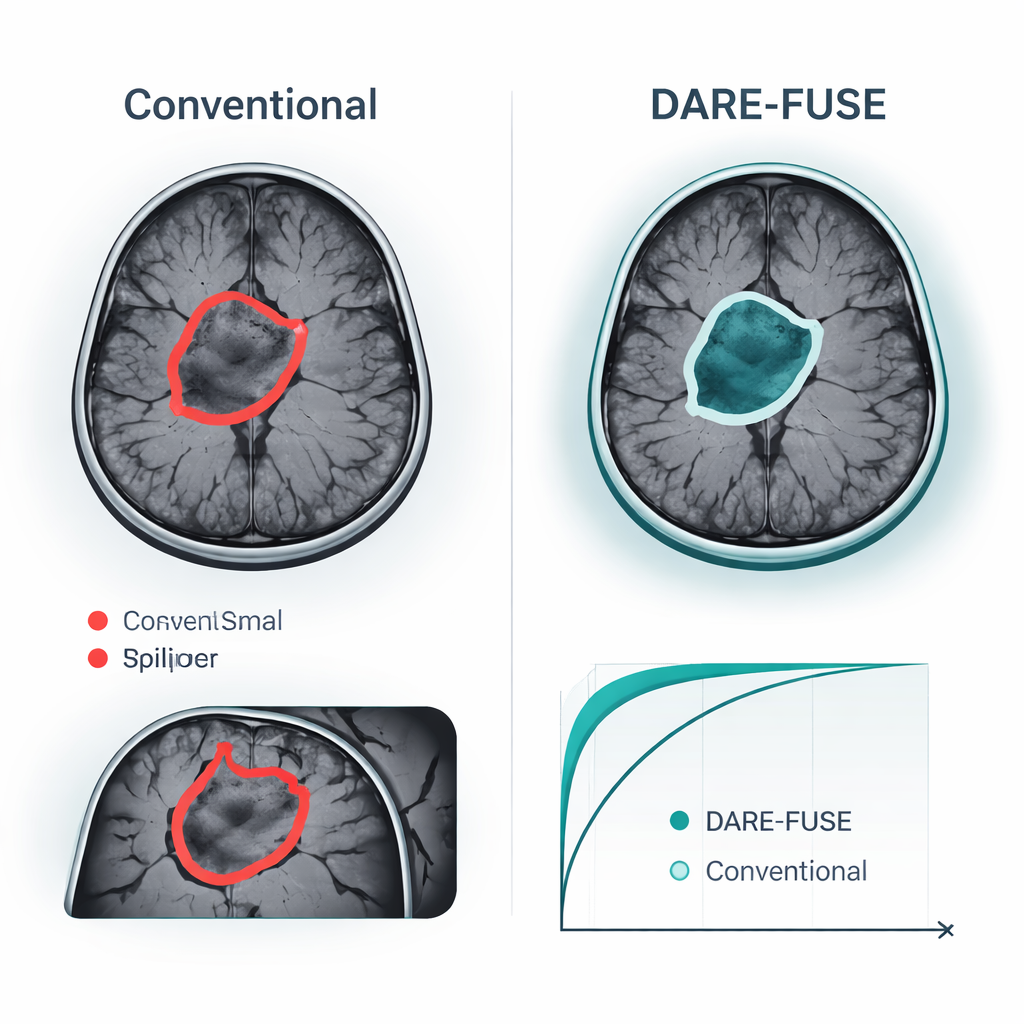

DARE-FUSE tackles several of these hurdles at once. It is built as a single pipeline that both traces the tumor on each MRI slice (segmentation) and classifies whole images into diagnostic groups (classification). At its core are two cooperating “views”: one network tuned to detailed shapes and boundaries, and another tuned to global patterns that distinguish different tumor types. A special alignment module keeps these views in sync across hospitals and scanners, so features learned from one dataset do not damage performance on another. The system also estimates its own uncertainty, essentially flagging areas where it is less sure about the exact tumor outline, which is vital for safe clinical use.
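The summary does not say how DARE-FUSE computes its uncertainty. As an illustration only, here is a minimal Python sketch of one common approach, which is assumed here rather than taken from the paper. The segmentation model is run several times with randomness left on (for example, Monte Carlo dropout), the per-pixel tumor probabilities are averaged, and pixels whose predictive entropy is high are flagged for review. The function names and the 0.8-bit threshold are hypothetical.

```python
import math
from typing import List, Tuple


def binary_entropy(p: float) -> float:
    """Entropy in bits of a yes/no tumor prediction with probability p (0 = certain, 1 = maximally unsure)."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))


def uncertainty_map(
    samples: List[List[float]], flag_threshold: float = 0.8
) -> Tuple[List[float], List[float], List[bool]]:
    """Combine several stochastic predictions for the same flattened MRI slice.

    samples[k][i] is the tumor probability for pixel i on the k-th forward pass
    (hypothetically obtained with dropout kept active at inference time).
    Returns the mean probability, the per-pixel entropy, and a "please review" flag.
    """
    n_samples = len(samples)
    n_pixels = len(samples[0])
    mean_prob = [sum(s[i] for s in samples) / n_samples for i in range(n_pixels)]
    entropy = [binary_entropy(p) for p in mean_prob]
    flags = [h >= flag_threshold for h in entropy]
    return mean_prob, entropy, flags


# Three passes over a toy three-pixel "slice": a clear tumor pixel,
# an ambiguous edge pixel, and a clear background pixel.
samples = [[0.95, 0.6, 0.05], [0.9, 0.4, 0.1], [0.97, 0.5, 0.0]]
mean_prob, entropy, flags = uncertainty_map(samples)
print(flags)  # [False, True, False]
```

Only the edge pixel, where the passes disagree and the averaged probability sits at 0.5, is flagged. This matches the behavior the article describes: confident regions pass through, and doubtful margins are sent back to the clinician.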

Using Clues from Heatmaps and “Tumor-Free” Reconstructions

Rather than trusting a single signal, DARE-FUSE learns from multiple kinds of evidence. One branch produces heatmaps that show which parts of the brain most strongly support the AI’s classification decision. Another branch uses a generative model to imagine what the same scan might look like if the tumor were removed, then compares that “tumor-free” version with the original. The differences between the two highlight subtle structural changes and edges that might not light up strongly on a standard heatmap. A fusion module then combines these clues into a continuous “tumor prior” map: regions where several sources agree are treated as core tumor, while less certain regions are added more cautiously and down-weighted when the model’s uncertainty is high. This blended prior guides the final contour, helping avoid both missed pockets of tumor and spurious islands in healthy tissue.
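The fusion step can be illustrated with a toy calculation. The sketch below uses a hypothetical rule, not the authors' published formula. Each evidence map is rescaled to the range 0 to 1. The counterfactual evidence is the absolute difference between the original scan and its “tumor-free” reconstruction. Where the heatmap and the reconstruction difference agree, that shared part is kept in full. Any extra support that comes from only one source is multiplied by (1 - uncertainty). Inputs are flattened per-pixel lists, and all names are illustrative.

```python
from typing import List


def min_max_normalize(values: List[float]) -> List[float]:
    """Rescale a map to [0, 1]; a flat map carries no evidence, so it becomes all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def fuse_tumor_prior(
    heatmap: List[float],
    original: List[float],
    tumor_free: List[float],
    uncertainty: List[float],
) -> List[float]:
    """Hypothetical fusion of two evidence maps into a continuous tumor prior in [0, 1].

    heatmap:     classifier attribution per pixel (any scale)
    original:    original MRI intensities
    tumor_free:  generative "tumor removed" reconstruction of the same slice
    uncertainty: per-pixel model uncertainty in [0, 1]
    """
    h = min_max_normalize(heatmap)
    d = min_max_normalize([abs(o - t) for o, t in zip(original, tumor_free)])
    prior = []
    for h_i, d_i, u_i in zip(h, d, uncertainty):
        agreement = min(h_i, d_i)  # evidence both sources share: treated as core tumor
        support = max(h_i, d_i)    # strongest single-source evidence
        # Single-source evidence is added cautiously and shrinks as uncertainty grows.
        prior.append(agreement + (support - agreement) * (1.0 - u_i))
    return prior


prior = fuse_tumor_prior(
    heatmap=[0.0, 1.0, 1.0, 0.0],
    original=[0.2, 0.9, 0.8, 0.5],
    tumor_free=[0.2, 0.3, 0.8, 0.5],
    uncertainty=[0.0, 0.0, 0.5, 0.0],
)
print(prior)  # [0.0, 1.0, 0.5, 0.0]
```

Under this rule, the second pixel is flagged by both the heatmap and the reconstruction difference, so it keeps a full prior. The third pixel is supported only by the heatmap and has moderate uncertainty, so its prior is halved. This mirrors the cautious, agreement-first behavior the article describes.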

Proven Gains on Public Brain Tumor Datasets

The authors tested DARE-FUSE on six editions of the large, multi-center BraTS brain tumor challenge and on four public MRI collections used for image-level classification. Across all BraTS editions, the system matched or exceeded the best recent deep-learning models. It achieved slightly higher overlap between its predicted tumor masks and expert drawings, and consistently smaller boundary errors, measured as the distance between its predicted tumor surface and the expert-drawn one. These gains were most striking in difficult cases: small tumors, low-contrast edges, and complex, irregular shapes. In classification tasks, such as deciding whether a scan shows a glioma, meningioma, pituitary tumor, or no tumor, DARE-FUSE also edged out strong transformer and weakly supervised baselines on accuracy and on a standard discrimination measure (AUC). Importantly, when the researchers artificially reduced the number of detailed annotations, the new system degraded gracefully and kept its advantage over semi-supervised and weakly supervised competitors.
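For readers curious about the two headline measures, here is a minimal sketch of how they are commonly computed. It assumes that the mask overlap reported for BraTS is the Dice coefficient, the usual choice for that challenge, although the summary does not name it. It also shows AUC for a single yes/no decision. A four-class task such as glioma, meningioma, pituitary tumor, or no tumor would typically average one-versus-rest AUCs. Masks and scores are plain Python lists.

```python
from typing import Sequence


def dice(pred: Sequence[int], truth: Sequence[int]) -> float:
    """Dice overlap between two binary masks: 2 * |A and B| / (|A| + |B|)."""
    intersection = sum(1 for p, t in zip(pred, truth) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in truth if t)
    if total == 0:
        return 1.0  # both masks empty: a common convention treats this as perfect agreement
    return 2.0 * intersection / total


def binary_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUC as the probability that a random positive case outscores a random negative one.

    Ties count as half a win (the Mann-Whitney formulation of ROC AUC).
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


print(dice([1, 1, 0, 0], [1, 0, 1, 0]))                  # 0.5
print(binary_auc([0.9, 0.8, 0.3, 0.1], [1, 0, 1, 0]))    # 0.75
```

A Dice of 1.0 means the predicted and expert masks coincide exactly. An AUC of 0.5 is no better than chance. The pairwise AUC loop is quadratic in the number of cases, which is fine for illustration. Library implementations use a sort-based equivalent.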

What This Could Mean for Patients

For patients and clinicians, the main promise of DARE-FUSE is not a flashy new algorithm, but more dependable, interpretable imaging support. In practice, the system could propose a tumor outline, highlight regions where it is less confident, and display heatmaps explaining which image regions drive its classification. Doctors might accept low-uncertainty regions as a starting contour, then focus their attention on the flagged areas instead of redrawing everything from scratch. More accurate and consistent measurements of tumor volume and shape could improve treatment planning, radiotherapy targeting, and tracking of response over time. The authors emphasize that their tool is meant to assist expert judgment, not replace it. Still, their results point toward AI systems that can both see tumors more clearly and communicate their level of confidence in ways clinicians can act on.

Citation: Liu, Y., Sun, C., Niu, Y. et al. DARE-FUSE: domain aligned evidence guided learning for joint brain tumor MRI segmentation and classification. npj Digit. Med. 9, 178 (2026). https://doi.org/10.1038/s41746-026-02365-3

Keywords: brain tumor MRI, medical image segmentation, deep learning in radiology, clinical decision support, uncertainty-aware AI