Clear Sky Science

LLM-driven collaborative framework for knowledge-enhanced cancer pain assessment and management

Why Smarter Pain Care Matters

Cancer pain is not just an unpleasant side effect—it can dominate a person’s final months or years, making sleep, movement, and even simple conversations difficult. Although powerful painkillers exist, using them safely and effectively is tricky, especially when every patient’s cancer, other illnesses, and medicines differ. This article describes OncoPainBot, a new artificial-intelligence framework built on large language models (LLMs) that aims to help doctors sort through complex records, follow up-to-date guidelines, and design safer, more personalized pain plans for people living with cancer.

A Tough Problem in Everyday Cancer Care

Pain in cancer comes from many sources: tumors pressing on bones or nerves, operations, chemotherapy, and radiotherapy. Up to 70% of people with advanced cancer live with significant pain, yet relief is often incomplete. Doctors must juggle opioid drugs, non-opioid medicines, and add-on treatments while watching for dangerous side effects, especially in patients with fragile liver or kidney function. Current pain assessment tools rely heavily on brief scales and free-text notes, which can differ from one clinician to another and from one hospital to the next. As a result, treatment decisions may vary widely, and opportunities to improve comfort can be missed.

Turning Medical Text into Actionable Insight

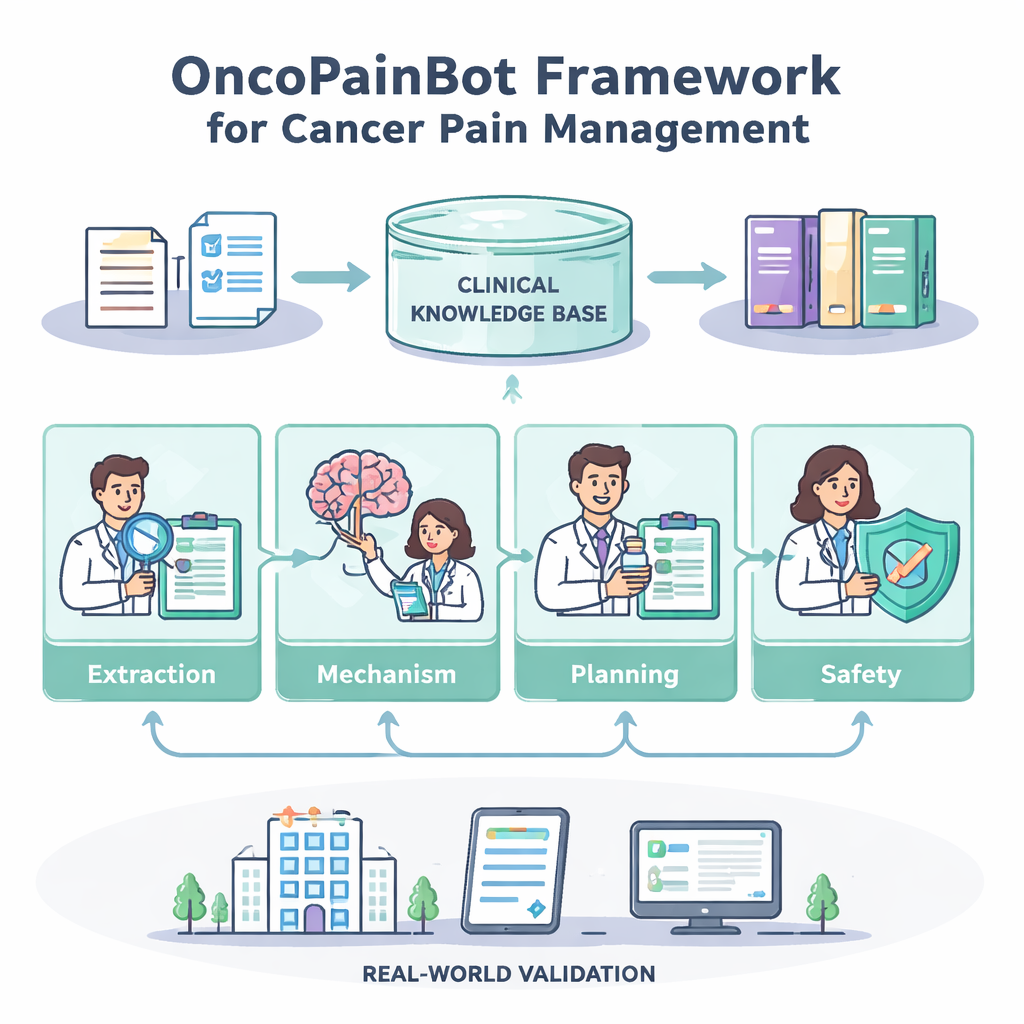

LLMs like ChatGPT and Claude can read and summarize long, messy documents, making them appealing for medical work. But ordinary “chatbots” are unsafe to rely on for cancer pain decisions because they can invent details, miss drug conflicts, or ignore the latest guidelines. OncoPainBot tackles these problems in two ways: it pairs the LLM with a curated knowledge base built from major cancer organizations’ pain guidelines, and it splits the work among four cooperating “agents,” each mirroring a real clinical role. One agent pulls out key facts about a patient’s pain from electronic records, another reasons about what type of pain is present, a third drafts a treatment plan, and a fourth performs a safety check focused on drug interactions, organ function, and monitoring needs.

How the Four-Agent Team Works

The Pain-Extraction agent reads free-text notes and turns them into a structured picture: where the pain is, how strong it feels, what makes it better or worse, and which medicines have already been tried. The Pain-Mechanism Reasoning agent then uses that picture to infer whether the pain is mainly from tissue damage, nerve damage, or a mixture—an important clue for choosing the right drugs. Next, the Treatment-Planning agent consults the guideline-based knowledge base through a technique called retrieval-augmented generation, which lets the model pull in specific, up-to-date passages rather than relying on memory alone. It proposes stepwise plans—typically anchored on the World Health Organization’s “pain ladder”—including starting doses, ways to adjust them, and rescue doses for sudden pain flares. Finally, the Safety-Check agent acts like a cautious pharmacist, scanning for dose problems, risky combinations, and missing lab information, and flagging cases where the data are too thin to support a firm recommendation.
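For readers who think in code, the four-step hand-off described above can be sketched roughly as follows. This is only an illustration of the data flow, not the authors' implementation: every function name, the keyword lists, and the score cut-offs for the ladder steps are assumptions made for this example, and simple rules stand in for the LLM calls and guideline retrieval that the real system uses. It deliberately leaves out drug names and doses.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical keyword lists; the real agents use an LLM, not word matching.
NEUROPATHIC_WORDS = {"burning", "shooting", "tingling", "electric"}
NOCICEPTIVE_WORDS = {"aching", "throbbing", "dull", "pressure"}


@dataclass
class PainSummary:
    location: Optional[str]
    intensity: Optional[int]  # 0-10 rating, if the note documents one
    descriptors: List[str]
    labs_available: bool


@dataclass
class Report:
    summary: PainSummary
    mechanism: str
    plan: str
    warnings: List[str] = field(default_factory=list)


def extract_pain(note: str) -> PainSummary:
    """Agent 1 stand-in: turn a free-text note into structured fields."""
    text = note.lower()
    score = re.search(r"(\d{1,2})\s*/\s*10", text)
    site = re.search(r"pain in (?:the )?([a-z ]+?)[,.;]", text)
    known = sorted(NEUROPATHIC_WORDS | NOCICEPTIVE_WORDS)
    return PainSummary(
        location=site.group(1).strip() if site else None,
        intensity=int(score.group(1)) if score else None,
        descriptors=[w for w in known if w in text],
        labs_available="creatinine" in text,
    )


def infer_mechanism(summary: PainSummary) -> str:
    """Agent 2 stand-in: tissue damage, nerve damage, or a mixture."""
    neuro = any(w in NEUROPATHIC_WORDS for w in summary.descriptors)
    noci = any(w in NOCICEPTIVE_WORDS for w in summary.descriptors)
    if neuro and noci:
        return "mixed"
    if neuro:
        return "neuropathic"
    if noci:
        return "nociceptive"
    return "undetermined"


def draft_plan(summary: PainSummary, mechanism: str) -> str:
    """Agent 3 stand-in: pick a ladder step (cut-offs are assumed)."""
    if summary.intensity is None:
        return "no plan: pain intensity not documented"
    step = 1 if summary.intensity <= 3 else 2 if summary.intensity <= 6 else 3
    return f"WHO ladder step {step} ({mechanism} pain); dosing left to the clinician"


def safety_check(summary: PainSummary) -> List[str]:
    """Agent 4 stand-in: flag data too thin for a firm recommendation."""
    warnings = []
    if summary.intensity is None:
        warnings.append("pain intensity missing")
    if not summary.labs_available:
        warnings.append("kidney function labs not found; recommendation is provisional")
    return warnings


def run_pipeline(note: str) -> Report:
    """Chain the four stand-in agents in the order the article describes."""
    summary = extract_pain(note)
    mechanism = infer_mechanism(summary)
    plan = draft_plan(summary, mechanism)
    return Report(summary, mechanism, plan, safety_check(summary))


# Invented example note, not taken from the study data.
note = "Patient reports burning, shooting pain in the left leg, rated 7/10. No recent labs documented."
report = run_pipeline(note)
print(report.mechanism)
print(report.plan)
print(report.warnings)
```

The point of the structure is that each stage receives a typed result from the previous one, so the final safety stage can see exactly which fields were missing instead of re-reading the raw note.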

Putting the System to the Test

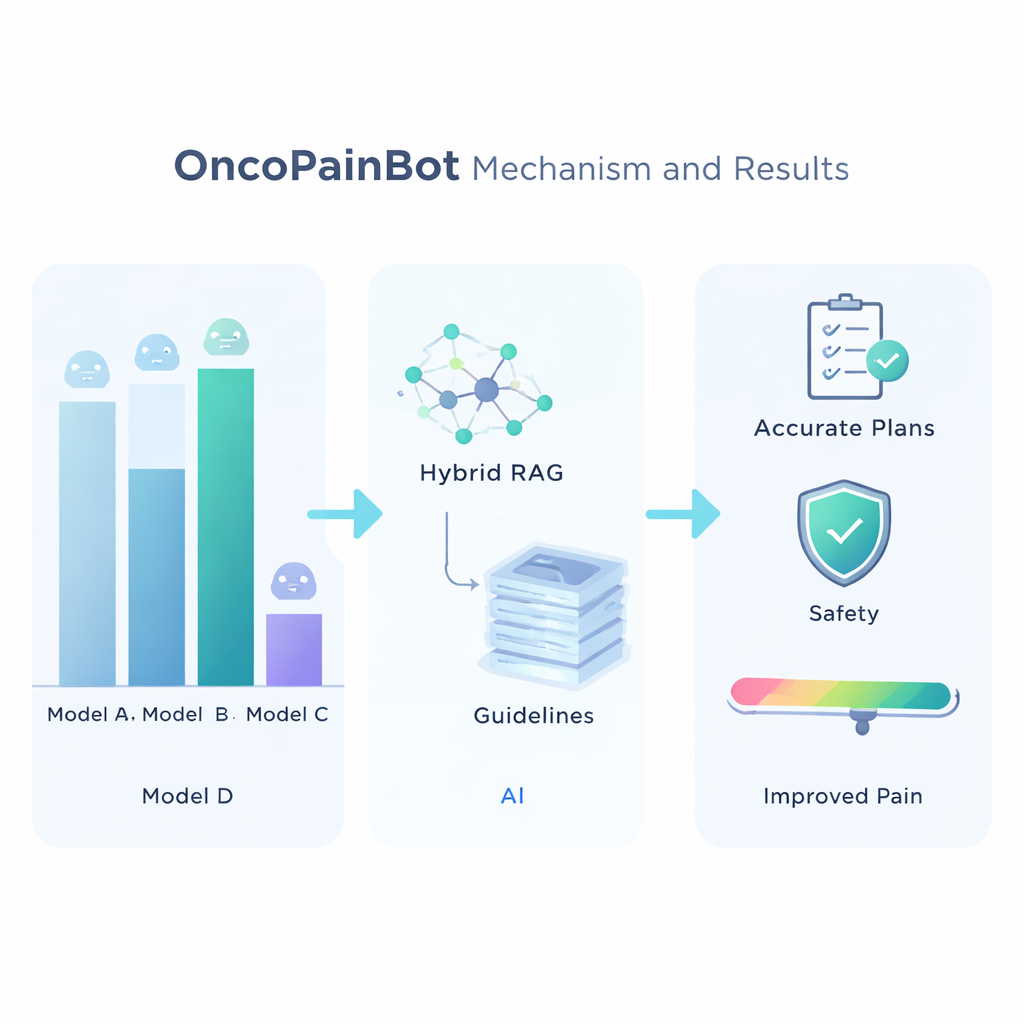

To choose the best underlying language model, the researchers compared seven leading systems on several medical question-answering tests. Claude 4 came out as the most accurate, though not the fastest, and was therefore selected as the “brain” of OncoPainBot. They then evaluated different ways of connecting this brain to the guideline library and found that a “Hybrid” retrieval strategy—using both keyword matching and deeper semantic search—gave the most reliable answers. With this setup in place, the team ran OncoPainBot on 516 real cancer pain records from a major Chinese hospital. The system’s written reports closely matched clinicians’ own notes in language and content, and its pain-treatment suggestions agreed with doctors’ real prescriptions in about 84% of cases. Importantly, most mismatches came from subtle, patient-specific nuances—such as undocumented opioid tolerance or complex organ failure—rather than from obviously wrong drug choices.
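The idea behind a hybrid retrieval strategy can be shown with a toy example. The sketch below is an assumption-laden illustration, not the method used in the study: it blends an exact keyword-overlap score with a cosine similarity over character trigrams, which here merely stands in for the neural embeddings a real semantic search would use. The function names, the `alpha` weight, and the three passages are all invented for this example; the passages are placeholder sentences, not quotations from any guideline.

```python
import math
from collections import Counter
from typing import List, Tuple


def tokens(text: str) -> List[str]:
    """Lowercase the text and split it into words, dropping basic punctuation."""
    return text.lower().replace(",", " ").replace(".", " ").split()


def keyword_score(query: str, passage: str) -> float:
    """Fraction of query words that appear verbatim in the passage."""
    q, p = set(tokens(query)), set(tokens(passage))
    return len(q & p) / len(q) if q else 0.0


def trigram_vector(text: str) -> Counter:
    """Character-trigram counts: a crude stand-in for a learned embedding."""
    s = " ".join(tokens(text))
    return Counter(s[i:i + 3] for i in range(len(s) - 2))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a if k in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def hybrid_search(query: str, passages: List[str], alpha: float = 0.5,
                  top_k: int = 2) -> List[Tuple[float, str]]:
    """Rank passages by a weighted blend of keyword and similarity scores."""
    qv = trigram_vector(query)
    scored = [
        (alpha * keyword_score(query, p) + (1 - alpha) * cosine(qv, trigram_vector(p)), p)
        for p in passages
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Placeholder passages written for this example only.
passages = [
    "Assess kidney function before adjusting opioid doses.",
    "Neuropathic pain may respond to adjuvant medicines.",
    "Schedule follow-up visits to reassess pain scores.",
]
results = hybrid_search("opioid dose adjustment with poor kidney function", passages)
for score, passage in results:
    print(round(score, 3), passage)
```

Blending the two signals means a passage can rank well either because it shares exact terms with the question or because it is worded similarly, which is the intuition behind combining keyword matching with semantic search.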

What This Could Mean for Patients

For people living with cancer, the promise of OncoPainBot is not that a machine will take over their treatment, but that it will give their care team a sharper, more consistent second opinion. The framework is designed as a “clinician-in-the-loop” tool: it highlights pain features that might otherwise be buried in notes, suggests guideline-aligned options, and calls attention to safety concerns, while leaving the final decisions to human doctors. The authors emphasize that their work is still in an early, retrospective stage and has been tested at only one center; real-time trials across multiple hospitals are still needed. Even so, their results suggest that carefully designed AI—grounded in solid evidence and transparent reasoning—could help standardize cancer pain care, reduce dangerous dosing mistakes, and, most importantly, make it more likely that patients spend less time suffering and more time living their lives.

Citation: Liu, H., Hu, Y., Li, D. et al. LLM-driven collaborative framework for knowledge-enhanced cancer pain assessment and management. npj Digit. Med. 9, 180 (2026). https://doi.org/10.1038/s41746-026-02362-6

Keywords: cancer pain management, clinical decision support, large language models, opioid therapy, retrieval-augmented generation