Clear Sky Science

Towards a speech-based digital biomarker for cognitive impairment: speech as a proxy for cognitive assessment

Why everyday talk can reveal brain health

Most of us take chatting with friends or describing a picture for granted. But as we age, subtle changes in how we choose words, form sentences, and pause between phrases can hint at how well our brain is working. This study asks a simple but powerful question: could a short recording of ordinary speech, collected at home with a laptop, act as an early warning sign for problems like dementia—without the need for long clinic visits and paper-and-pencil tests?

Listening instead of lengthy testing

Today, diagnosing cognitive decline usually depends on in‑person testing by specialists. These sessions are time‑consuming, expensive, and hard to repeat often or at large scale. At the same time, millions of older adults are at risk of conditions such as Alzheimer’s disease, where early detection matters: medications and lifestyle changes tend to work best before severe symptoms appear. Speech is an attractive alternative source of information. It is cheap to record, can be captured remotely, and naturally reflects many mental abilities, from memory to attention and planning. The researchers set out to test whether short, everyday speech samples could stand in as a “digital biomarker” of cognitive health.

Turning casual speech into measurable signals

The team recruited 1003 English‑speaking adults aged 60 and older from the United States and United Kingdom. Participants completed standard online thinking tests that measured four broad areas: language, executive function (planning and mental flexibility), memory, and speed. They also completed three simple speaking tasks at home: describing two well‑known black‑and‑white scenes used in clinical language testing, and talking about their past week. Using automatic speech‑recognition software, the scientists turned audio into text and then extracted dozens of measurable properties from both the sound and the words—such as how quickly people spoke, how often they paused, how varied their vocabulary was, and how frequently they used different kinds of words like nouns, verbs, or pronouns.
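To make the idea of "measurable properties" concrete, here is a minimal sketch of how a few such features could be computed from a transcript with word timings. It is an illustration only, not the study's pipeline: the `TimedWord` type, the `speech_features` function, the 0.25-second pause threshold, and the toy transcript are all assumptions made for this example.

```python
from typing import NamedTuple


class TimedWord(NamedTuple):
    """One recognized word with start/end times in seconds (hypothetical format)."""
    text: str
    start: float
    end: float


def speech_features(words, pause_threshold=0.25):
    """Compute a few simple fluency and vocabulary features.

    pause_threshold is an assumed cutoff: silent gaps between words that
    last at least this many seconds are counted as pauses.
    """
    if not words:
        raise ValueError("need at least one word")

    duration = words[-1].end - words[0].start
    # Silent gap between the end of each word and the start of the next.
    gaps = [nxt.start - cur.end for cur, nxt in zip(words, words[1:])]
    pauses = [g for g in gaps if g >= pause_threshold]
    tokens = [w.text.lower() for w in words]

    return {
        # Words per second over the whole sample.
        "speech_rate_wps": len(words) / duration if duration > 0 else 0.0,
        "pause_count": len(pauses),
        "mean_pause_s": sum(pauses) / len(pauses) if pauses else 0.0,
        # Type-token ratio: distinct words divided by total words.
        "type_token_ratio": len(set(tokens)) / len(tokens),
    }


# Made-up four-word sample, purely for illustration.
words = [
    TimedWord("the", 0.0, 0.2),
    TimedWord("boy", 0.3, 0.6),
    TimedWord("takes", 1.2, 1.5),
    TimedWord("the", 1.6, 1.8),
]
features = speech_features(words)
print(features)
```

A real system would draw the word timings from the speech-recognition output and would compute many more features, including the part-of-speech counts mentioned above.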

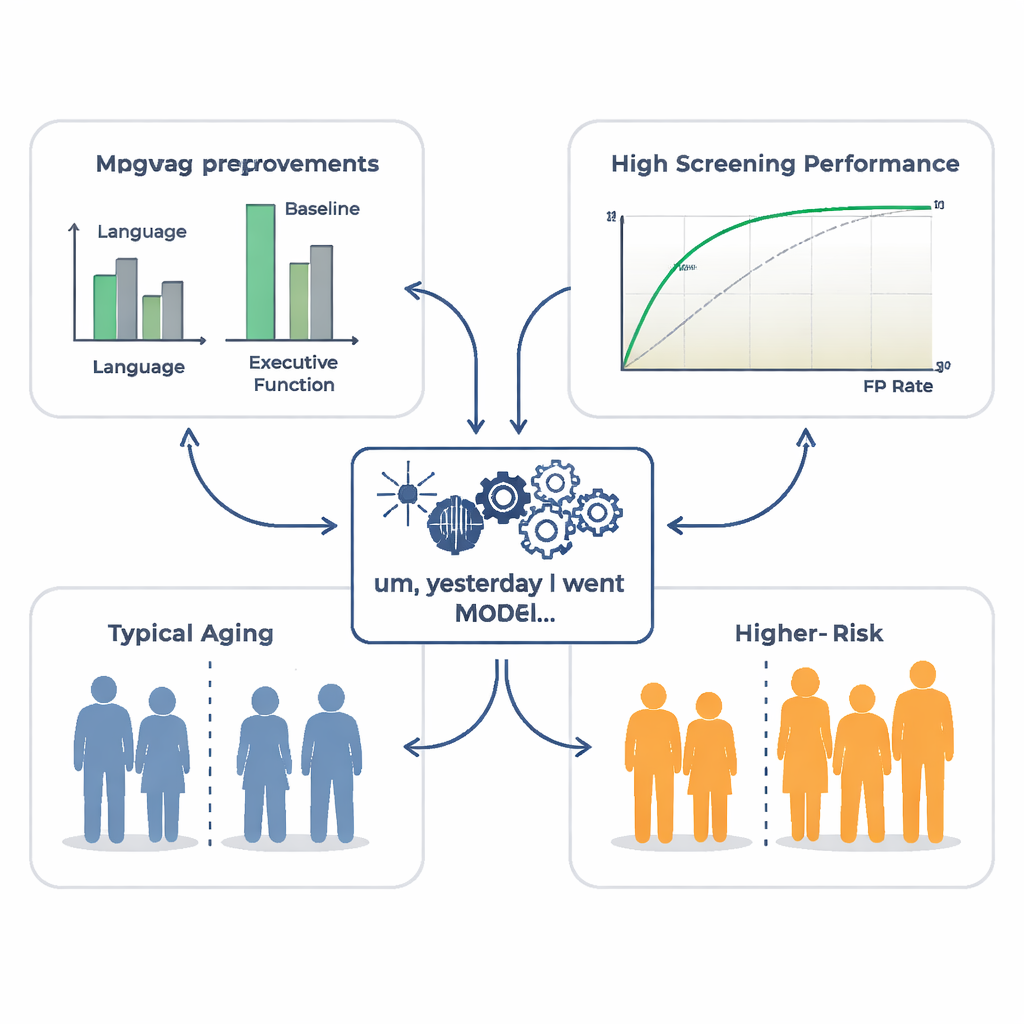

Teaching computers to estimate thinking skills

With these speech features in hand, the researchers trained machine‑learning models to predict each person’s cognitive test scores. They compared models that used only basic background information (age, gender, education, and country) with models that also used speech features. Adding speech made a striking difference: for language ability, the speech‑based model explained about 27% of the differences between people, more than four times the share explained by demographics alone. It also captured a meaningful share of the variation in executive function and thinking speed, but much less of the variation in memory. Detailed analysis showed that rich, specific word use and smoother, more fluent delivery (faster speech rate and fewer or shorter pauses) tended to go hand‑in‑hand with stronger test scores.
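A figure such as "explained about 27% of the differences between people" is usually a coefficient of determination, written R². The sketch below assumes that reading, which this summary does not confirm, and shows how the number is computed. The `r_squared` function and the toy scores are invented for the example and are not data from the study.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: share of variance in y_true explained by y_pred."""
    mean_y = sum(y_true) / len(y_true)
    # Squared error left over after using the model's predictions.
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    # Squared error of always guessing the average score.
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("y_true has no variance")
    return 1 - ss_res / ss_tot


# Made-up test scores and two sets of made-up predictions.
actual = [1.0, 2.0, 3.0, 4.0]
guess_the_average = [2.5, 2.5, 2.5, 2.5]   # a model that knows nothing
closer_predictions = [1.5, 2.0, 3.0, 3.5]  # a model that tracks the scores

baseline_r2 = r_squared(actual, guess_the_average)
better_r2 = r_squared(actual, closer_predictions)
print(baseline_r2, better_r2)
```

On this scale, 0 means the model does no better than guessing the average score for everyone, and 1 means it predicts every score exactly. Comparing the value for a demographics-only model with the value for a model that also uses speech is one way to express how much speech adds.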

Spotting those who may be slipping

Beyond estimating scores on a continuous scale, the team asked whether speech could help flag individuals whose performance was unexpectedly low for their age and education, people who might be at higher risk of developing dementia. Using the same speech features, they trained a separate computer model to distinguish these “cognitive low performers” from others. For language ability in particular, the model showed good screening performance, meaning that a simple picture‑description recording could help identify a subgroup of older adults who merit closer clinical attention or might be strong candidates for participation in treatment trials.
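One way to picture "unexpectedly low for their age" is to compare each person's score with the score expected for someone of the same age, then flag those who fall far below it. The sketch below illustrates only that labeling idea, not the study's method or its speech-based classifier. It uses age alone for simplicity, and the `flag_low_performers` function, the cutoff of 1.5 standard deviations, and the data are all assumptions.

```python
from statistics import mean, pstdev


def flag_low_performers(ages, scores, z_cutoff=-1.5):
    """Flag people whose score is far below what their age predicts.

    Fits a straight line (score vs. age) by least squares, then marks anyone
    whose residual is below z_cutoff standard deviations. The cutoff is an
    assumed value chosen for illustration.
    """
    mean_age, mean_score = mean(ages), mean(scores)
    sxx = sum((a - mean_age) ** 2 for a in ages)
    if sxx == 0:
        raise ValueError("ages must not all be identical")

    slope = sum((a - mean_age) * (s - mean_score)
                for a, s in zip(ages, scores)) / sxx
    intercept = mean_score - slope * mean_age

    # Residual = actual score minus the score expected at that age.
    residuals = [s - (intercept + slope * a) for a, s in zip(ages, scores)]
    spread = pstdev(residuals)
    if spread == 0:
        return [False] * len(scores)
    return [r / spread < z_cutoff for r in residuals]


# Made-up data: scores decline gently with age, except for one person
# (age 66) who scores far lower than the trend would suggest.
ages = [60, 62, 64, 66, 68, 70, 72, 74]
scores = [30, 29, 28, 17, 26, 25, 24, 23]
flags = flag_low_performers(ages, scores)
print(flags)
```

In the study, labels of this kind were the target, and a model trained on speech features tried to predict who belonged to the low-performing group.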

Putting the approach to the test in real patients

To see whether their models captured differences that matter clinically, the researchers applied them, without any retraining, to an independent dataset of people with Alzheimer’s disease and healthy peers who had performed the same picture‑description task decades earlier. Even though the recordings were older and noisier, the speech‑based scores came out clearly lower for the Alzheimer’s group across all four cognitive areas, especially language and executive function. This suggests that the patterns learned from a large group of mostly healthy older adults still make sense when applied to patients with diagnosed dementia.

What this could mean for everyday care

For non‑specialists, the key message is that short samples of ordinary speech hold a surprising amount of information about how well an older person’s brain is functioning, particularly for language and higher‑order thinking. While this method cannot replace full clinical evaluation—and is less informative for memory on its own—it could become a low‑cost, non‑intrusive way to monitor changes over time, prompt timely check‑ups, and help researchers find the right participants for clinical trials. In the future, a routine phone or video call might quietly analyze how we speak, offering an early nudge to seek help long before serious problems are obvious.

Citation: Heitz, J., Engler, I.M. & Langer, N. Towards a speech-based digital biomarker for cognitive impairment: speech as a proxy for cognitive assessment. npj Digit. Med. 9, 179 (2026). https://doi.org/10.1038/s41746-026-02360-8

Keywords: speech-based cognitive screening, digital biomarkers, Alzheimer’s disease, aging and dementia, machine learning in medicine