Clear Sky Science

Deep learning for malignancy and tumor origin prediction using cytology or histopathology whole slide images

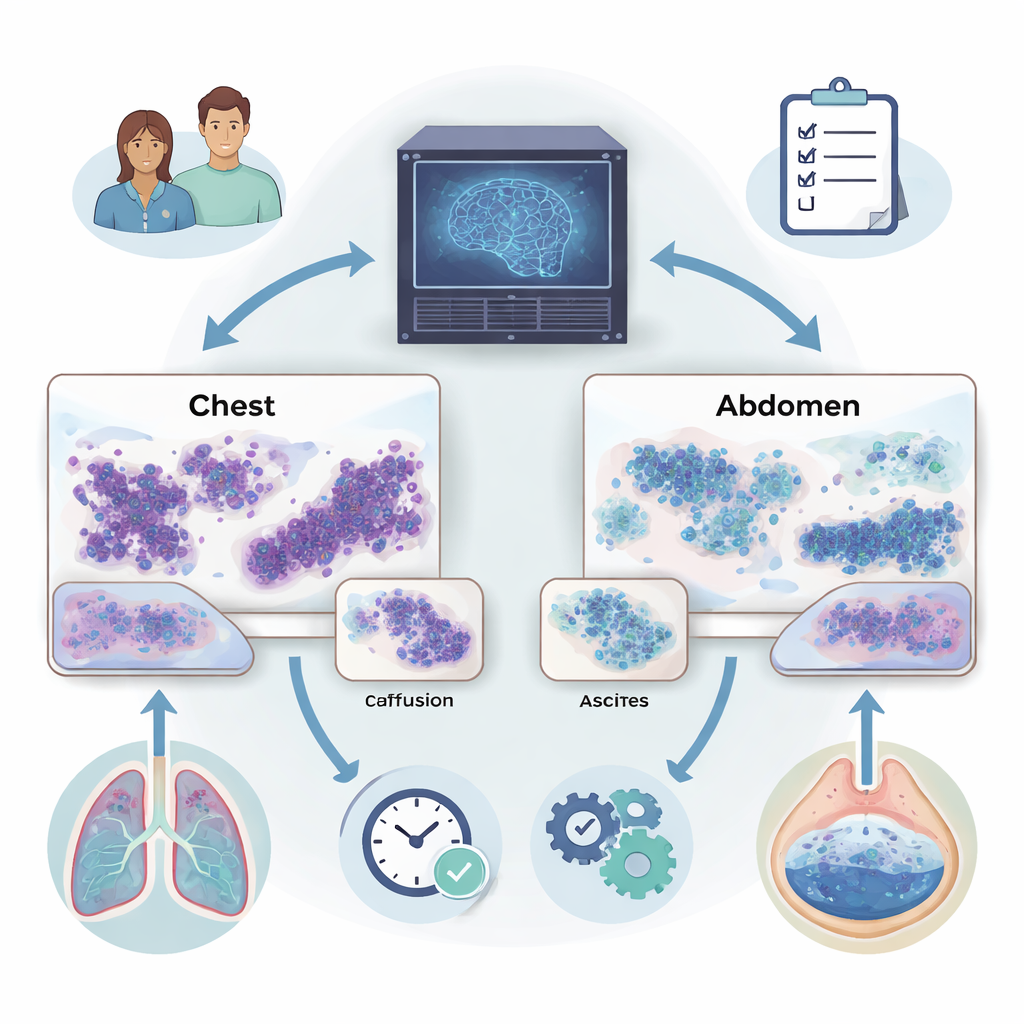

Why fluids around the lungs and belly matter

When fluid builds up around the lungs (pleural effusion) or in the abdomen (ascites), it can be an early sign that cancer has spread. Doctors examine these fluids under a microscope to look for cancer cells, but the task is painstaking and even experts sometimes disagree. This study describes a new artificial intelligence (AI) system that can scan entire digital slides of these fluids, help decide whether cancer is present, and even suggest where in the body the tumor likely began.

Turning microscope slides into digital maps

Modern pathology labs can scan glass slides into ultra‑high‑resolution digital images, each containing millions of cells. The researchers used these whole slide images from two kinds of preparations: thin “smears” of cells and compact “cell blocks” that resemble tiny tissue samples. They focused on fluids from the chest and abdomen collected at a major hospital, along with additional tissue samples from a large international cancer database. Because manually marking every cancer cell is impossible at this scale, the team built a method that could learn from slide‑level labels such as “malignant” or “benign” without detailed annotations.

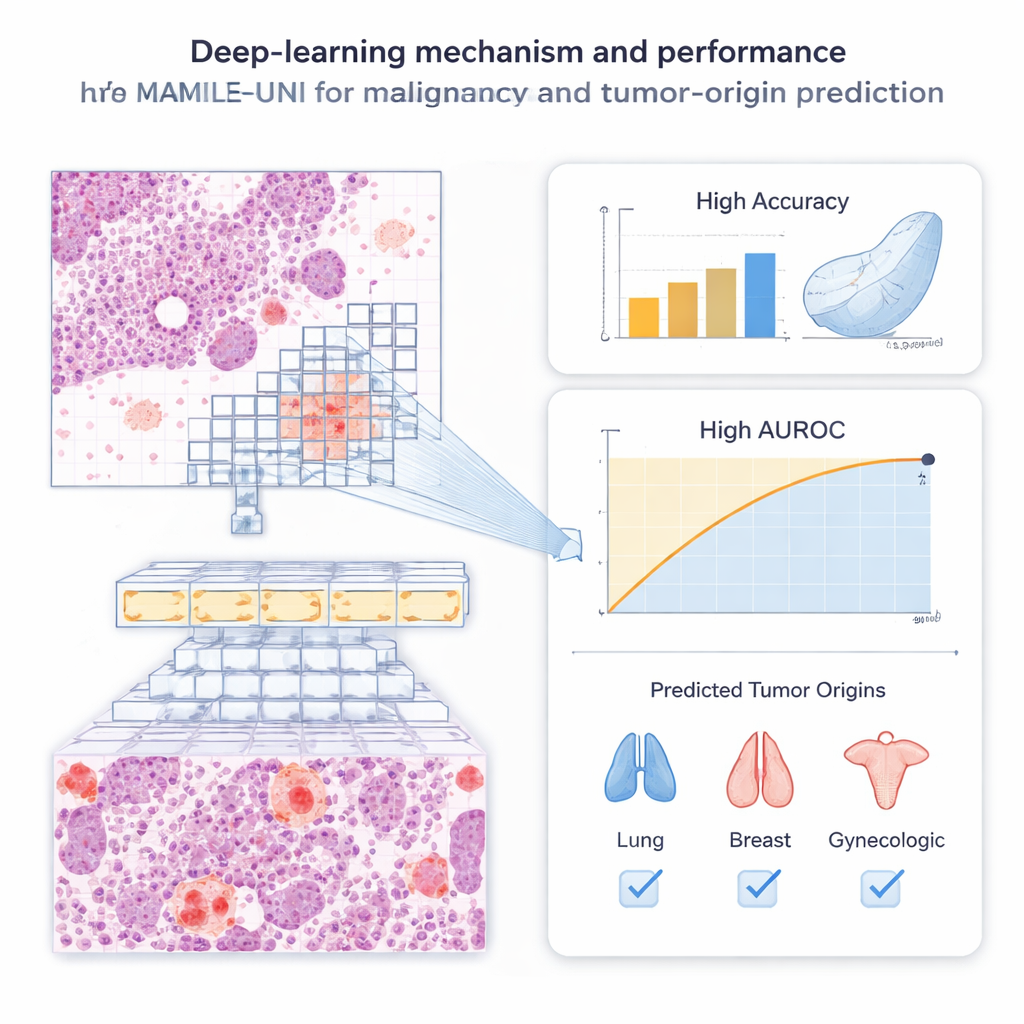

An AI that teaches itself what to look for

The system, called MAMILE‑UNI, combines two key ideas. First, it breaks each slide into many small image patches and passes them through a powerful “transformer” network that has been pre‑trained, without human labels, on millions of pathology images. This self‑supervised pre‑training lets the model discover useful visual patterns, such as cell clusters and tissue textures, on its own. Second, an attention module learns which patches on a slide matter most for diagnosis, effectively mimicking how a pathologist scans for suspicious areas. Patches that strongly influence the decision are highlighted, producing heatmaps that show where the algorithm “looked” when it labeled a slide as cancerous or not.
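To make the attention idea concrete, here is a minimal Python sketch of attention-weighted pooling over patch features. It is an illustration only, not the authors' architecture: the function names, the single `scoring_vector`, and the toy two-number features are all assumptions, and a real system would learn the scoring function and use feature vectors with hundreds of dimensions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(patch_features, scoring_vector):
    """Pool per-patch feature vectors into one slide-level vector.

    patch_features: list of equal-length feature vectors, one per patch.
    scoring_vector: hypothetical learned vector used to score each patch.
    Returns (slide_vector, attention_weights).
    """
    # One relevance score per patch (a dot product in this toy version).
    scores = [
        sum(w * x for w, x in zip(scoring_vector, feats))
        for feats in patch_features
    ]
    # Normalise the scores so they are positive and sum to 1.
    weights = softmax(scores)
    # The slide vector is the attention-weighted average of the patches.
    dim = len(patch_features[0])
    slide_vector = [
        sum(a * feats[d] for a, feats in zip(weights, patch_features))
        for d in range(dim)
    ]
    return slide_vector, weights


# Toy slide with three patches; the third is made up to look "suspicious".
patches = [[0.1, 0.0], [0.2, 0.1], [3.0, 2.5]]
slide_vec, attn = attention_pool(patches, [1.0, 1.0])
```

In this toy run the third patch receives almost all of the attention weight. Drawing such weights back onto the slide at each patch's position is one way to produce the kind of heatmap described above.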

Detecting cancer in chest and belly fluids

The team evaluated MAMILE‑UNI on 1,250 fluid slides from pleural effusions and ascites. Compared with five leading deep‑learning methods, the new system was consistently more accurate. For pleural effusions, it correctly distinguished malignant from benign slides about 9 out of 10 times for both smears and cell blocks. For ascites, it reached similar accuracy and was especially strong at maintaining both high sensitivity (catching true cancers) and high specificity (avoiding false alarms). Statistical tests showed that its predictions closely matched the true diagnoses and were significantly better than the competing AI models. Importantly, the system remained reliable even when cancer cells were rare on a slide, a situation that often challenges human readers.
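Sensitivity and specificity are simple ratios, and a short Python sketch shows how they are computed from slide-level predictions. The labels below are invented for illustration (1 means malignant, 0 means benign) and are not data from the study.

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels, 1 = malignant."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # cancers caught
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # cancers missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # benign cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one malignant and one benign slide")
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical example: 4 malignant and 4 benign slides.
true_labels = [1, 1, 1, 0, 0, 0, 0, 1]
predicted = [1, 1, 0, 0, 0, 1, 0, 1]
sens, spec = sensitivity_specificity(true_labels, predicted)
```

Here one cancer is missed and one benign slide is flagged, so both sensitivity and specificity come out at 0.75.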

Tracing where the cancer came from

Beyond simply flagging malignancy, the authors asked whether AI could infer where a metastatic tumor started, a major challenge when the primary site is unknown. Using cytology smears from pleural effusions and ascites, the model learned to assign slides to broad origin groups such as lung, breast, gastrointestinal tract, or gynecologic organs. It was particularly accurate for lung and breast cancers, while performance was more modest for rarer or visually varied tumors. To test generality, the researchers also applied MAMILE‑UNI to 1,196 tissue sections from 69 hospitals worldwide. On these histology slides, the system identified tumor origin with strikingly high accuracy, approaching perfect agreement with ground‑truth diagnoses.

Speed, efficiency, and support for clinicians

Pathologists often spend at least ten minutes carefully reviewing a single digital cytology slide. In contrast, MAMILE‑UNI can process a whole slide and return a prediction in under two minutes on a standard graphics card, after compressing gigabyte‑sized images into compact feature sets. Curve‑based evaluations showed that the model tends to rank truly malignant cases near the top of its priority list, offers a favorable balance between benefits and harms across decision thresholds, and produces probability scores that align well with real‑world outcomes. Attention maps overlapped closely with areas marked by expert pathologists, suggesting that the AI’s focus is clinically meaningful rather than arbitrary.
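The summary does not name the specific curves used, so the following Python sketch rests on an assumption: that the ranking claim corresponds to the area under the ROC curve, and the benefit-versus-harm claim to the net benefit used in decision curve analysis. Both are standard definitions; the scores and labels are made up for illustration.

```python
def rank_auc(labels, scores):
    """Chance that a random malignant slide outscores a random benign one."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one malignant and one benign slide")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))


def net_benefit(labels, scores, threshold):
    """Net benefit of acting on every slide scored at or above threshold."""
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    n = len(labels)
    tp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 1)
    fp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 0)
    # False positives are penalised by the odds implied by the threshold.
    return tp / n - (fp / n) * (threshold / (1.0 - threshold))


# Hypothetical model outputs for six slides (1 = malignant).
toy_labels = [1, 1, 1, 0, 0, 0]
toy_scores = [0.9, 0.8, 0.3, 0.6, 0.2, 0.1]
auc = rank_auc(toy_labels, toy_scores)
benefit = net_benefit(toy_labels, toy_scores, 0.5)
```

With these toy numbers, eight of the nine malignant-benign pairs are ranked correctly, and acting at a threshold of 0.5 yields two true positives against one false positive. Repeating the net benefit calculation over a range of thresholds traces out a decision curve.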

What this means for patients and doctors

For patients with fluid in the chest or abdomen, timely and accurate diagnosis strongly shapes treatment decisions, yet current testing can be slow, subjective, and expensive. This study shows that a carefully designed AI system can reliably screen digital fluid and tissue slides for signs of cancer and provide clues about where the disease started, while using modest computing resources. The authors emphasize that MAMILE‑UNI is not a replacement for pathologists, but a support tool that could reduce workload, improve consistency, and expand access to high‑quality cancer diagnostics—especially in settings where specialist expertise and advanced laboratory tests are limited.

Citation: Wang, CW., Chu, TC., Wu, TK. et al. Deep learning for malignancy and tumor origin prediction using cytology or histopathology whole slide images. npj Digit. Med. 9, 175 (2026). https://doi.org/10.1038/s41746-026-02359-1

Keywords: cytology AI, pleural effusion, ascites, tumor origin prediction, digital pathology