Clear Sky Science

Melan-Dx: a knowledge-enhanced vision-language framework improves differential diagnosis of melanocytic neoplasm pathology

Why smarter melanoma diagnosis matters

Melanoma, a dangerous form of skin cancer, can often be cured if caught early—but only if doctors reading tissue samples under the microscope recognize it correctly. Unfortunately, even experienced specialists sometimes disagree about what they see, especially for borderline growths that look almost, but not quite, malignant. This article describes Melan‑Dx, a new artificial intelligence (AI) system that aims to support skin cancer experts by combining thousands of expert‑labeled microscope images with structured medical knowledge, offering faster, more consistent, and more transparent diagnoses.

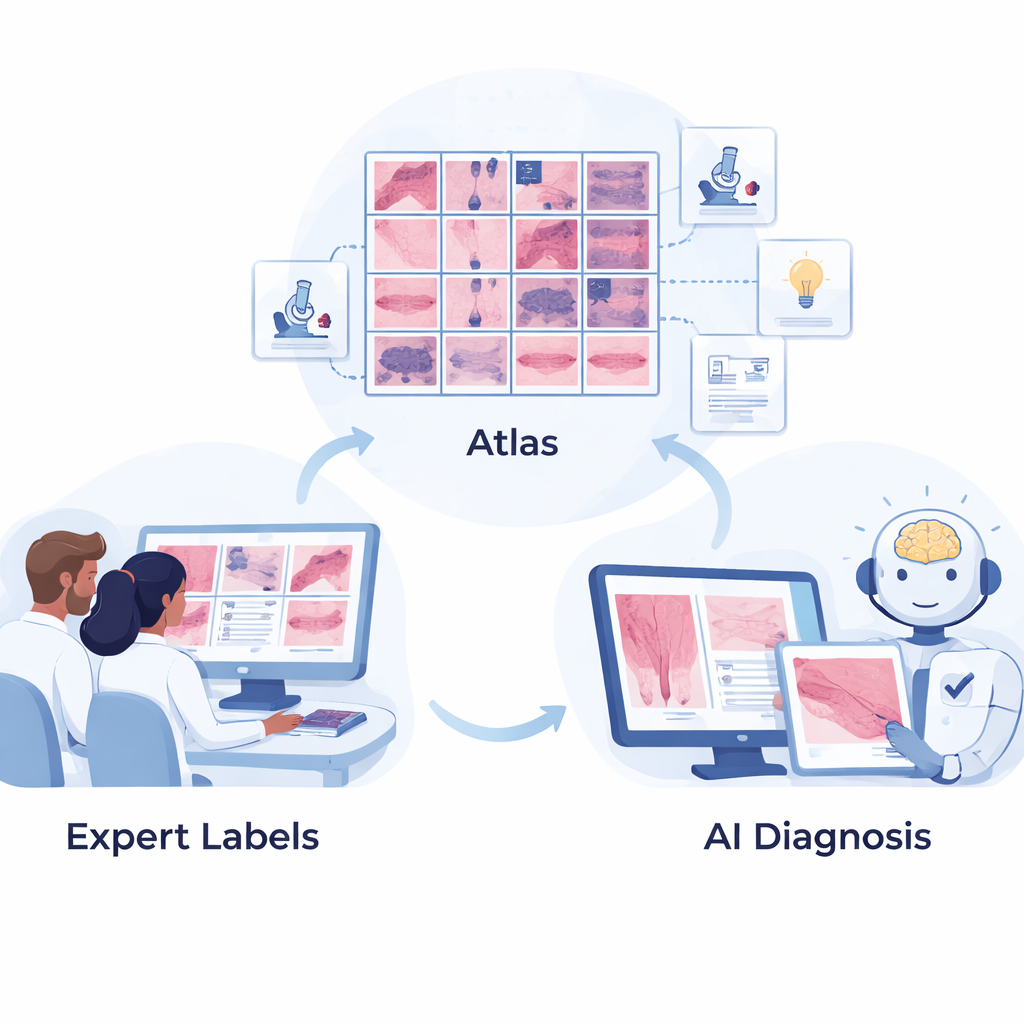

Building a rich atlas of skin tumor images

The first step was to assemble a high‑quality “atlas” of melanocytic tumors—the broad family of growths that includes harmless moles and life‑threatening melanomas. Dermatopathologists at the University of Pennsylvania carefully selected and labeled 2,893 microscope images covering 44 different types of melanocytic lesions, from common benign nevi to rare, aggressive melanomas. Each image focuses on a region of interest and is mapped into a three‑level hierarchy based on World Health Organization (WHO) tumor classifications, grouping diseases first by broad category, then by subtype, and finally by specific diagnosis. This structured layout mirrors how specialists think about these lesions in daily practice.
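The three-level layout described above can be pictured as a small lookup table. The sketch below is only an illustration: the label names are placeholders (not the real WHO entries or the atlas's 44 classes), the "benign"/"malignant" top level is an assumption, and `HierarchyPath` and `shared_levels` are hypothetical helper names.

```python
from typing import NamedTuple


class HierarchyPath(NamedTuple):
    """One diagnosis located at three levels: category > subtype > diagnosis."""
    category: str
    subtype: str
    diagnosis: str


# Placeholder labels for illustration only; these are NOT the real WHO
# entries or the classes used in the Melan-Dx atlas.
HIERARCHY = {
    "diagnosis_a1": HierarchyPath("benign", "subtype_a", "diagnosis_a1"),
    "diagnosis_a2": HierarchyPath("benign", "subtype_a", "diagnosis_a2"),
    "diagnosis_b1": HierarchyPath("benign", "subtype_b", "diagnosis_b1"),
    "diagnosis_m1": HierarchyPath("malignant", "subtype_m", "diagnosis_m1"),
}


def shared_levels(label_x: str, label_y: str) -> int:
    """Count how many levels two diagnoses share, starting from the top."""
    count = 0
    for x, y in zip(HIERARCHY[label_x], HIERARCHY[label_y]):
        if x != y:
            break
        count += 1
    return count


print(shared_levels("diagnosis_a1", "diagnosis_a2"))  # same category and subtype
print(shared_levels("diagnosis_a1", "diagnosis_m1"))  # different broad category
```

A higher count means two diagnoses sit closer together in the hierarchy, which is one simple way to express that some confusions are less serious than others.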

Teaching AI with medical knowledge, not just pixels

Melan‑Dx goes beyond typical image‑only AI by pairing pictures with text descriptions drawn from authoritative medical sources. For every disease type, the team compiled short, structured entries that describe what pathologists look for—such as cell shape, growth pattern, and special stain results—and how those features distinguish one lesion from another. A large language model helped organize this information, but human experts reviewed it for accuracy. Together, the images and text are converted into numerical “embeddings” and stored in a searchable database. This allows the AI to not only recognize visual patterns, but also link them to explicit diagnostic criteria, much like a doctor consulting a well‑indexed, illustrated textbook.
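To make the idea of a searchable embedding database concrete, here is a minimal sketch that assumes cosine similarity as the matching score. The article does not say which similarity measure or index Melan-Dx uses. The tiny two-dimensional vectors, the entry names, and the functions `cosine_similarity` and `top_k` are all made up for illustration.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def top_k(query, database, k=3):
    """Return the k database entries most similar to the query, best first."""
    scored = [(cosine_similarity(query, vec), key) for key, vec in database.items()]
    scored.sort(reverse=True)
    return [(key, score) for score, key in scored[:k]]


# Toy "database": in a real system each vector would come from an image
# or text encoder and have hundreds of dimensions.
database = {
    "entry_a": [1.0, 0.0],
    "entry_b": [0.0, 1.0],
    "entry_c": [1.0, 1.0],
}

for key, score in top_k([1.0, 0.1], database, k=2):
    print(key, round(score, 3))
```

A brute-force scan like this is fine for a few thousand entries; larger collections usually rely on an approximate nearest-neighbor index instead.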

How the Melan‑Dx system reasons about a new case

When Melan‑Dx sees a new biopsy image, it processes it through two coordinated branches. In the image branch, a vision model encodes the picture and retrieves the most similar examples from the atlas, emphasizing those that match best and blending them into an enhanced representation. In the knowledge branch, the same image is used to pull up the most relevant text snippets describing possible diagnoses. Special “expert” modules for each disease type weigh which reference images and knowledge entries matter most, and fusion blocks combine these clues. The system is trained so that, for a correct diagnosis, the enhanced image and text representations line up closely, while mismatched pairs are pushed apart. This contrastive learning helps the AI separate dozens of subtly different tumor types while remaining grounded in medical knowledge.
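Two of the ideas in this section, weighting retrieved examples by how well they match and training with a contrastive objective, can be sketched in a few lines. This is a generic illustration, not the authors' implementation. It assumes softmax weighting over cosine similarities and a one-directional InfoNCE-style loss, and it leaves out the per-disease expert modules and fusion blocks entirely. The `temperature` and `alpha` parameters and all function names are assumptions.

```python
import math


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def softmax(scores):
    """Numerically stable softmax: turns scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def blend_retrieved(query, neighbors, temperature=0.1, alpha=0.5):
    """Mix a query embedding with a similarity-weighted average of its neighbors.

    alpha (assumed) controls how much of the original query is kept.
    """
    weights = softmax([cosine(query, n) / temperature for n in neighbors])
    pooled = [sum(w * n[d] for w, n in zip(weights, neighbors))
              for d in range(len(query))]
    enhanced = [alpha * q + (1 - alpha) * p for q, p in zip(query, pooled)]
    return enhanced, weights


def contrastive_loss(image_embs, text_embs, temperature=0.1):
    """InfoNCE-style loss: image i should match text i better than any other text."""
    losses = []
    for i, img in enumerate(image_embs):
        probs = softmax([cosine(img, txt) / temperature for txt in text_embs])
        losses.append(-math.log(probs[i]))
    return sum(losses) / len(losses)


enhanced, weights = blend_retrieved([1.0, 0.0], [[0.9, 0.1], [0.0, 1.0]])
print("neighbor weights:", [round(w, 4) for w in weights])

images = [[1.0, 0.0], [0.0, 1.0]]
print("aligned loss:", contrastive_loss(images, [[1.0, 0.0], [0.0, 1.0]]))
print("swapped loss:", contrastive_loss(images, [[0.0, 1.0], [1.0, 0.0]]))
```

The loss is small when each image embedding sits next to its own text embedding and large when the pairs are swapped, which is the "line up closely, push apart" behavior described above.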

Testing accuracy, safety, and efficiency

The researchers then compared Melan‑Dx to several leading pathology AI models across multiple tasks. For the basic question “melanoma or not?”, Melan‑Dx achieved up to 87% accuracy, outperforming both models that were lightly adapted and those that were fully retrained. In a tougher 40‑way classification across many melanoma and mole subtypes, it reached nearly 70% accuracy on the top guess and over 87% when allowed three guesses, again beating competing approaches. The system also respected the disease hierarchy: when it was wrong, it was more likely to confuse closely related conditions than to mix up benign and malignant categories, which better reflects real‑world clinical stakes. On whole‑slide images (large digital scans of entire tissue sections), Melan‑Dx improved cancer detection both when training data were scarce and when plenty of examples were available, and it did so while cutting training time by roughly 90–97%, because the core vision model does not need to be retrained.
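The "top guess" and "three guesses" figures are what is usually called top-1 and top-3 accuracy. The sketch below shows how such a score is computed from ranked predictions. The labels and predictions are toy values, not results from the study, and `top_k_accuracy` is a hypothetical helper name.

```python
def top_k_accuracy(ranked_predictions, true_labels, k):
    """Fraction of cases whose true label appears among the first k guesses."""
    hits = sum(
        1 for guesses, truth in zip(ranked_predictions, true_labels)
        if truth in guesses[:k]
    )
    return hits / len(true_labels)


# Toy data: each inner list is a model's guesses, most confident first.
ranked_predictions = [
    ["a", "b", "c"],
    ["b", "a", "c"],
    ["c", "b", "a"],
    ["a", "c", "b"],
]
true_labels = ["a", "a", "a", "b"]

print(top_k_accuracy(ranked_predictions, true_labels, k=1))
print(top_k_accuracy(ranked_predictions, true_labels, k=3))
```

Top-3 accuracy can never be lower than top-1 accuracy, since any case counted as correct on the first guess is also correct within three.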

What this means for patients and doctors

For patients, the promise of Melan‑Dx is not an all‑knowing robot doctor, but a smarter second opinion that can help reduce missed melanomas and unnecessary scares from overdiagnosis. For clinicians, the system offers not just a label but also evidence: it shows similar past cases and the key written criteria that support its suggestion, making its reasoning easier to scrutinize. Although the current work focuses on melanocytic tumors and relies on a carefully curated dataset from one center, the same strategy—linking images with structured medical knowledge and using retrieval to guide AI—could be extended to many other diseases. As a lightweight, explainable tool designed for human‑AI collaboration, Melan‑Dx points toward a future in which pathologists remain in charge, but are better equipped to deliver accurate, timely skin cancer diagnoses.

Citation: Yao, J., Li, S., Liang, P. et al. Melan-Dx: a knowledge-enhanced vision-language framework improves differential diagnosis of melanocytic neoplasm pathology. npj Digit. Med. 9, 171 (2026). https://doi.org/10.1038/s41746-026-02357-3

Keywords: melanoma diagnosis, computational pathology, medical AI, vision language models, skin cancer detection