Clear Sky Science

Consensus-based reporting guideline for participatory development and evaluation of digital health interventions

Why how we build health apps matters

From fitness trackers to mental health apps, digital tools are reshaping how people manage their health. But behind every successful app is a careful process of designing and testing it with the people who will actually use it. This paper looks at how experts from around the world joined forces to agree on a clear set of rules for reporting how such tools are developed and evaluated together with patients, professionals, and other stakeholders. Their new guideline aims to make digital health research more transparent, trustworthy, and easier to learn from.

The promise and problem of health technology

Digital health interventions—such as smartphone apps, online programs, or connected devices—are widely seen as one way to tackle rising healthcare pressures from aging populations, chronic illness, and staff shortages. These tools can improve access to care, support shared decision-making, and strengthen patient involvement. Yet in real life, people often do not use these tools as intended, struggle to stick with them, or simply abandon them. One major reason is that many tools are not designed closely enough with their intended users, or the process of involving users is not described clearly enough for others to copy or improve upon.

Why involving people in design needs clearer reporting

To avoid digital tools that miss the mark, many researchers now turn to participatory approaches: methods that actively involve patients, the public, health professionals, software developers, and policymakers in shaping and testing digital health tools. These approaches stress mutual learning, shared decision-making, and creativity. However, the language used—such as “co-design,” “user-centered design,” or “participatory health research”—is varied and overlapping. Studies often describe these processes in vague or inconsistent ways. As a result, it is hard to compare projects, judge how robust their methods were, or reuse what was learned. Existing reporting checklists for health research do not fully cover the specific challenges of participatory digital health work, leaving an important gap.

Bringing experts together to agree on what to report

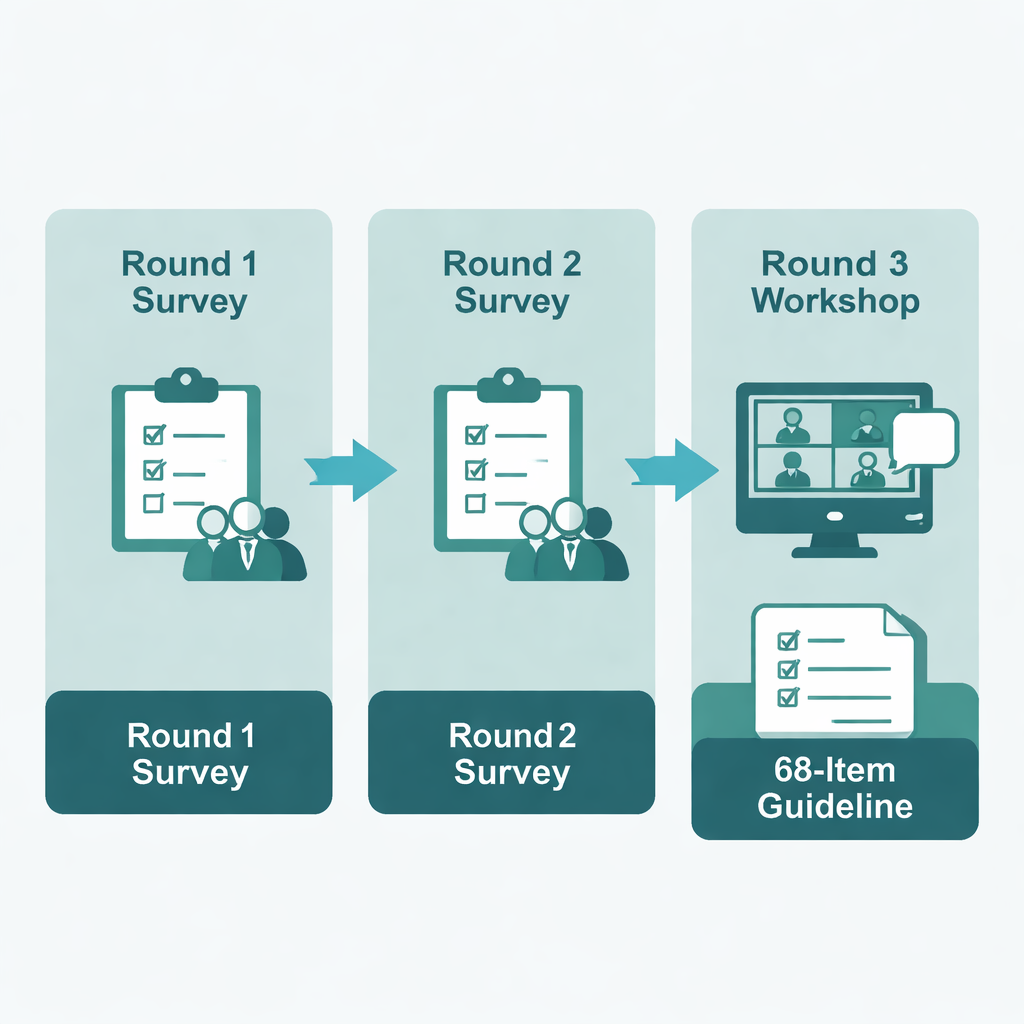

To close this gap, the authors developed a new reporting guideline called ParDE-DHI (Participatory Development & Evaluation of Digital Health Interventions). They followed established recommendations for creating reporting standards and used a structured consensus method known as a Delphi study. First, the team drafted 64 potential checklist items based on a scoping review and on existing reporting guidelines. Then they invited a large and diverse panel of experts—researchers, industry representatives, and healthcare providers from 23 countries—to rate how important each item was and to suggest changes or additions. The process unfolded over three rounds: two online surveys and one interactive workshop, with opportunities for both scoring and free-text feedback in each stage.

From many ideas to one detailed checklist

In the first survey, 66 experts rated the draft items. Of these items, 42 reached the preset consensus threshold, while the others were revised or expanded based on almost 200 comments. New suggestions from participants led to the addition of several items. In the second survey, 35 experts reconsidered updated and new items, again offering scores and comments. Remaining disagreements were brought to a third round, a virtual workshop, where a smaller group discussed the outstanding issues in breakout groups and plenary sessions. This open discussion allowed the panel to refine wording, merge or remove items, and add one last new item. Rather than shrinking the list at all costs, the group prioritized completeness and real-world usefulness, arriving at a final set of 68 reporting items. The checklist is accompanied by an introductory text, and a fuller explanation document is in development.
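For readers curious about how a consensus threshold works in a Delphi round, the idea can be sketched in a few lines of Python. This is only an illustration. The summary does not state the rating scale or the threshold the authors used, so the 1 to 5 scale, the cut-off score of 4, the 75% agreement level, and the example items below are all assumptions made up for this sketch.

```python
from typing import Dict, List, Tuple

# Assumptions for illustration only (not taken from the paper):
# ratings use a 1-5 scale, a score of 4 or 5 counts as "important",
# and an item reaches consensus when at least 75% of raters say so.
IMPORTANT_MIN_SCORE = 4
CONSENSUS_SHARE = 0.75


def agreement_share(scores: List[int]) -> float:
    """Return the fraction of raters who scored the item as important."""
    if not scores:
        return 0.0
    important = sum(1 for s in scores if s >= IMPORTANT_MIN_SCORE)
    return important / len(scores)


def split_by_consensus(ratings: Dict[str, List[int]]) -> Tuple[List[str], List[str]]:
    """Separate items that meet the threshold from those needing another round."""
    accepted, revisit = [], []
    for item, scores in ratings.items():
        if agreement_share(scores) >= CONSENSUS_SHARE:
            accepted.append(item)
        else:
            revisit.append(item)
    return accepted, revisit


# Hypothetical checklist items and scores, invented for this example.
example_ratings = {
    "define participation": [5, 4, 5, 4],      # 4 of 4 raters: important
    "describe recruitment": [5, 4, 3, 4],      # 3 of 4 raters: important
    "report app colour scheme": [2, 3, 4, 1],  # 1 of 4 raters: important
}

accepted, revisit = split_by_consensus(example_ratings)
print("Reached consensus:", accepted)
print("Carry to next round:", revisit)
```

Items in the second list would be reworded, merged, or discussed again in the next round, which mirrors the revise-and-rerate cycle described above.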

What the new guideline offers

The ParDE-DHI guideline tells authors what information to include when they describe how a digital health tool was developed and evaluated with stakeholders. It covers key elements such as how participation was defined, who was involved, how they were recruited, what level of influence they had, which evaluation methods and criteria were used, and which existing frameworks or standards were followed. The guideline is intended to sit alongside other reporting tools, such as those for describing interventions or economic evaluations, and includes example references to help less experienced researchers. By making development and evaluation processes more visible and comparable, ParDE-DHI aims to improve the quality, transparency, and repeatability of participatory digital health research.

What this means for patients and the public

For lay readers, the key message is that good health apps and digital services do not just appear: they are built and tested in partnership with the people who will rely on them. This new guideline gives researchers a detailed checklist to follow when reporting on that partnership work. Over time, this should lead to clearer studies, fewer hidden weaknesses, and digital tools that are better matched to real needs. Ultimately, by shining more light on how digital health tools are created and judged, ParDE-DHI supports more trustworthy, effective technologies and strengthens the voice of patients and citizens in shaping the future of healthcare.

Citation: Weirauch, V., Mainz, A., Nitsche, J. et al. Consensus-based reporting guideline for participatory development and evaluation of digital health interventions. npj Digit. Med. 9, 169 (2026). https://doi.org/10.1038/s41746-026-02355-5

Keywords: digital health interventions, participatory design, reporting guidelines, Delphi study, patient engagement