Clear Sky Science

Scaling medical device regulatory science using large language models

Why this matters for patients and doctors

Modern medicine is rapidly filling up with "smart" devices that use artificial intelligence to read scans, track vital signs, and help doctors make decisions. In the United States alone, more than a thousand such tools have already been cleared or approved by the Food and Drug Administration (FDA). Each device leaves a paper trail of complex reports and safety records. Today, most of that information is still sifted by hand, a process that is slow and expensive and that quickly falls behind the growing number of devices. This paper explores whether large language models, the same kind of AI behind advanced chatbots, can reliably read those documents at scale and turn them into usable data, helping regulators, researchers, and the public understand how well these devices are built and how safely they perform.

The problem of too many complex documents

Every AI-powered medical device comes with thick decision summaries, safety reports, and recall notices. These documents are long, written in dense jargon, and often include tables, images, and inconsistent formatting. Past research has shown that answering basic questions, such as how a device was tested before approval or what exactly went wrong when it malfunctioned, has required teams of experts to read hundreds of PDFs line by line. Simple search tools and pattern-matching can find obvious details like ID numbers, but they struggle with deeper questions that require judgment, such as whether a study was done across multiple hospitals or whether a device truly contributed to a patient's injury or death. As the number of AI-enabled devices has exploded, manual review can no longer keep up.

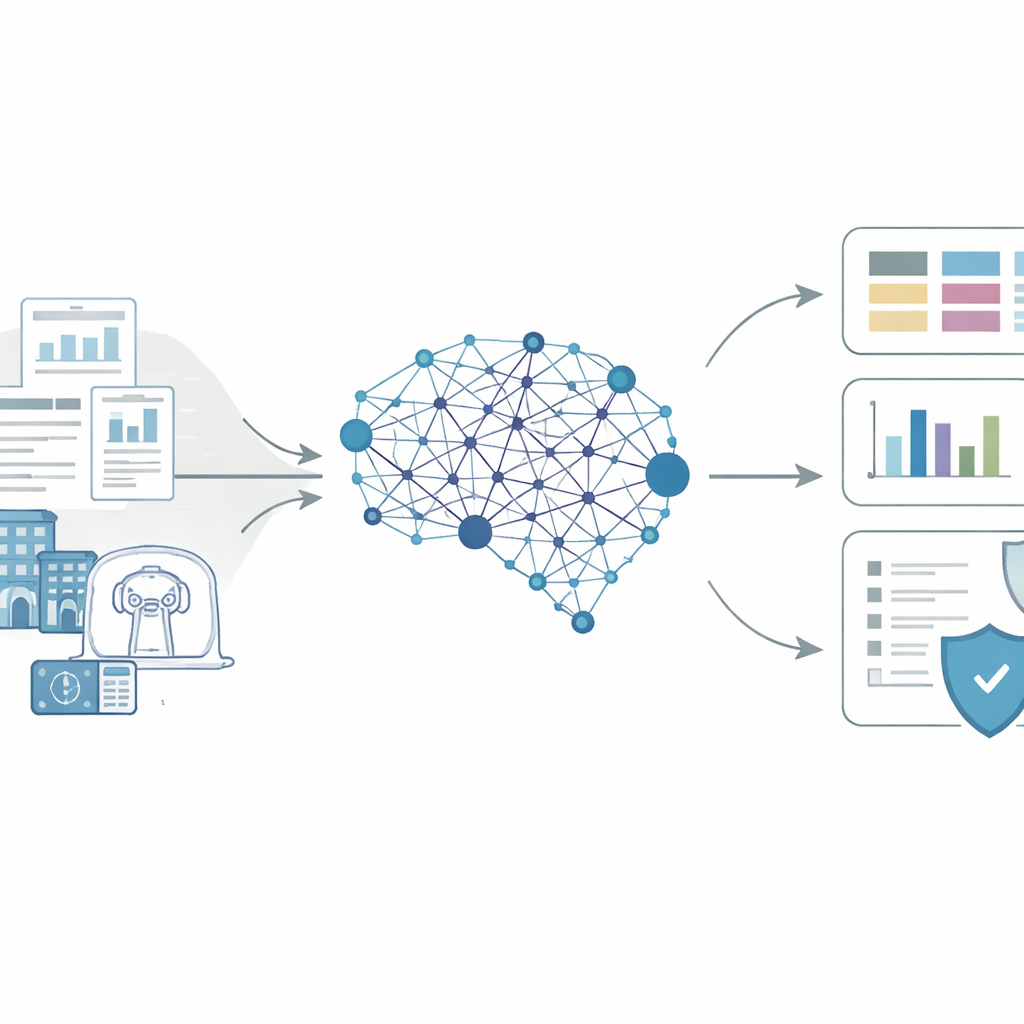

An AI pipeline that reads like an expert

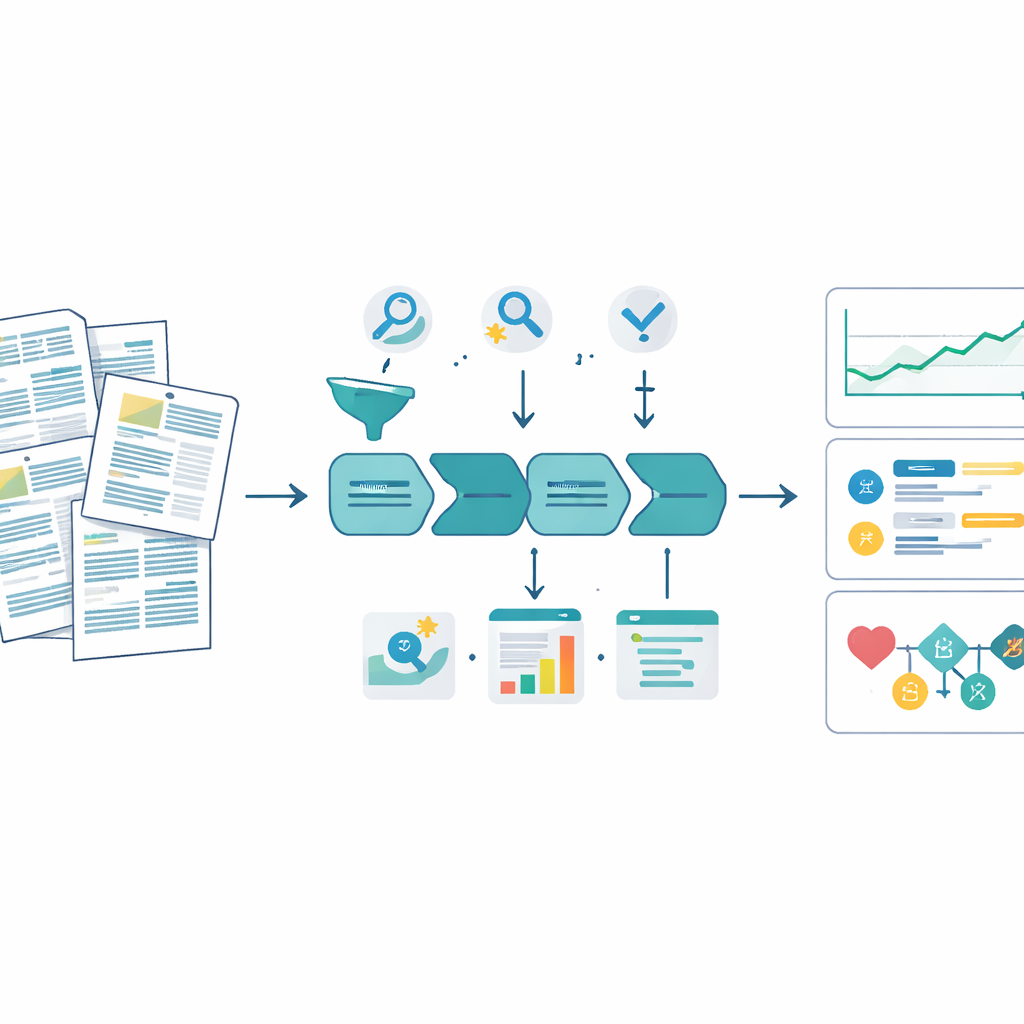

The authors built a general pipeline based on a state-of-the-art large language model to tackle this challenge. First, they pulled together all publicly available FDA decision summaries and safety reports for 1,247 AI or machine-learning devices and 1,852 related adverse event reports up to mid-2025, cleaning the PDFs and using optical character recognition when needed. Then, instead of asking the model to answer broad questions all at once, they broke the work into smaller, well-defined subtasks. For each document type, the model received detailed instructions grounded in official FDA guidance plus examples of how humans would label information. The model was asked to reason step by step and to output its answers in a strict, structured format, turning free‑form text into clear fields such as “number of study sites,” “type of safety event,” or “kind of device change.”
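The "strict, structured format" step can be illustrated with a minimal sketch. It assumes the model returns JSON and shows how such output might be checked before it enters a dataset. The field names (`number_of_study_sites`, `event_type`), the allowed categories, and the `parse_model_output` helper are hypothetical illustrations, not the authors' actual schema or code.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Hypothetical label set, loosely based on the event types named in the text.
ALLOWED_EVENT_TYPES = {"malfunction", "injury", "death"}


@dataclass(frozen=True)
class ExtractedRecord:
    """One structured row produced from a free-text document."""
    number_of_study_sites: Optional[int]
    event_type: Optional[str]


def parse_model_output(raw: str) -> ExtractedRecord:
    """Validate a JSON string (assumed model output) against the schema."""
    data = json.loads(raw)

    sites = data.get("number_of_study_sites")
    if sites is not None:
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(sites, bool) or not isinstance(sites, int) or sites < 0:
            raise ValueError(f"invalid number_of_study_sites: {sites!r}")

    event = data.get("event_type")
    if event is not None and event not in ALLOWED_EVENT_TYPES:
        raise ValueError(f"invalid event_type: {event!r}")

    return ExtractedRecord(number_of_study_sites=sites, event_type=event)


if __name__ == "__main__":
    raw = '{"number_of_study_sites": 3, "event_type": "malfunction"}'
    print(parse_model_output(raw))
```

Rejecting out-of-schema answers rather than silently accepting them is one way to keep the resulting data auditable: every stored field either matches a known category or is explicitly missing.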

Checking accuracy in real regulatory questions

To see whether this system could be trusted, the team ran three case studies where earlier researchers had already spent months on manual review. First, they revisited how devices are tested before approval by asking if trials were run prospectively (collecting data going forward) and whether they involved multiple hospitals. Comparing model outputs to expert labels, they saw agreement rates often in the 80 to 90 percent range or higher, comparable to agreement between human annotators themselves. Second, they used the model to re-label safety reports describing malfunctions, injuries, or deaths, and to classify what went wrong with the device. When human reviewers compared the manufacturer's original codes with those suggested by the model, without knowing which was which, they preferred the model's choices the large majority of the time, especially for sensitive categories like death versus malfunction. Third, the researchers linked details from pre-approval documents to later safety reports to explore which early choices, such as picking a predecessor device with past recalls or making major hardware changes, were statistically tied to higher risk of future problems.
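As a rough illustration of the first case study's comparison, the sketch below computes a simple agreement rate between two sets of labels. It also computes Cohen's kappa, a standard chance-corrected agreement measure that is included here as an assumption; the summary does not say which statistics the authors used. The label lists are made-up toy data, not results from the paper.

```python
from collections import Counter
from typing import Sequence


def percent_agreement(a: Sequence[str], b: Sequence[str]) -> float:
    """Fraction of items on which two labelers gave the same label."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("label lists must be non-empty and of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a: Sequence[str], b: Sequence[str]) -> float:
    """Agreement corrected for the amount expected by chance alone."""
    n = len(a)
    observed = percent_agreement(a, b)
    counts_a, counts_b = Counter(a), Counter(b)
    labels = set(counts_a) | set(counts_b)
    # Chance agreement: probability both pick the same label independently.
    expected = sum(counts_a[l] * counts_b[l] for l in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both labelers used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


if __name__ == "__main__":
    # Toy example: was the study prospective? (hypothetical labels)
    expert = ["yes", "no", "yes", "yes"]
    model = ["yes", "no", "no", "yes"]
    print(percent_agreement(expert, model))
    print(cohens_kappa(expert, model))
```

Reporting a chance-corrected number alongside raw agreement matters when one label dominates, as with rare prospective studies, because two labelers who both answer "no" almost every time will agree often even without reading the documents carefully.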

What the findings reveal about safety and oversight

Once validated, the pipeline allowed the team to scale these analyses from dozens of devices to the entire known population of AI-enabled medical tools. They found, for example, that prospective clinical evaluations have remained relatively rare over three decades, hovering around one in ten devices, while mentions of multi‑site testing have grown substantially. In safety reports, the model uncovered patterns where the type of problem described in the text did not match the code submitted to the FDA—for instance, situations where hardware faults were labeled as image quality issues. When they linked pre‑approval features to later safety events, devices whose predecessors already had recalls or adverse event histories showed much higher hazards of new reports, whereas devices backed by clinical testing tended to have lower risk. These results are exploratory but illustrate the kinds of questions that can now be asked routinely rather than as one‑off projects.

Limits, safeguards, and the road ahead

The authors emphasize that their approach is not flawless and should not replace expert judgment. Accuracy around 80 percent may be more than sufficient for analyzing big-picture trends but not for making decisions about any single device or patient. Performance can vary across device types and years, and the quality of the underlying FDA documents and safety databases remains a major bottleneck. Still, this study shows that carefully designed language-model systems can turn mountains of unstructured regulatory text into structured, auditable data in days instead of years. For lay readers, the takeaway is that the same AI technologies powering consumer chatbots can also help watchdogs and researchers track how AI medical devices are built, tested, and monitored—potentially leading to faster detection of problems and better evidence to shape safer rules and products.

Citation: Li, H., He, X., Subbaswamy, A. et al. Scaling medical device regulatory science using large language models. npj Digit. Med. 9, 221 (2026). https://doi.org/10.1038/s41746-026-02353-7

Keywords: AI medical devices, regulatory science, large language models, FDA safety reports, health technology oversight