Clear Sky Science

Enhanced language models for predicting and understanding HIV care disengagement: a case study in Tanzania

Why keeping people in HIV care matters

Staying on HIV treatment is one of the most powerful tools we have to keep people healthy and to stop the virus from spreading. Yet in many parts of the world, especially in sub-Saharan Africa, some patients stop picking up their medicines or miss clinic visits, often for complex social and economic reasons. This study explores whether a new kind of artificial intelligence, called a large language model, can help doctors in Tanzania spot who is most at risk of dropping out of care so that support can reach them before problems arise.

Turning medical records into a helpful story

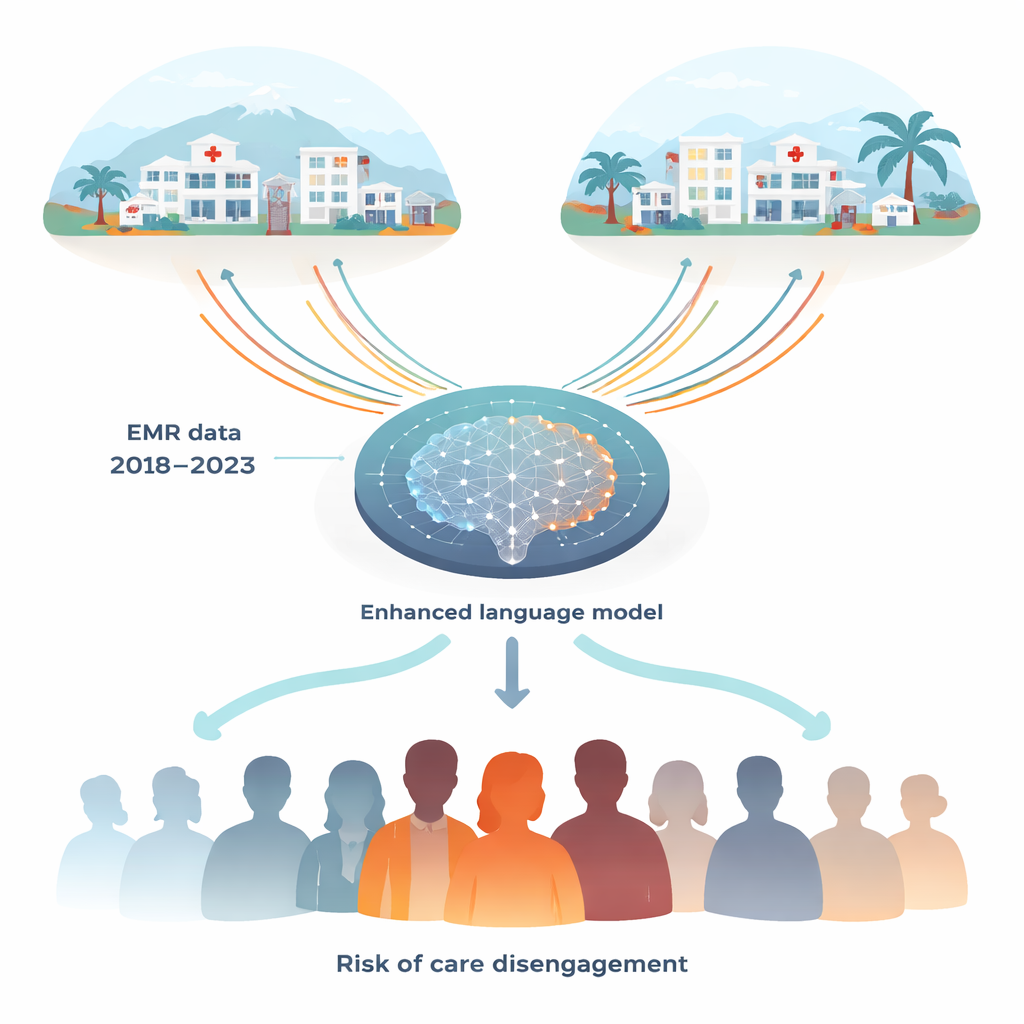

The researchers worked with more than 4.8 million electronic medical records from over 260,000 people living with HIV who received care in Tanzania between 2018 and 2023. These records included age, sex, clinic visit dates, number of pills dispensed, lab results such as viral load, and details about the health facilities. Instead of looking at single snapshots in time, the team focused on entire care histories, capturing patterns such as missed or delayed appointments and gaps in taking antiretroviral therapy. They then translated these data into plain-language summaries that a language model could read almost like a patient biography.
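The translation step described above can be illustrated with a small sketch. It is not the study's actual pipeline: the `Visit` fields, the `summarize_history` function, and the sentence wording are all assumptions made for illustration, since the article does not give the real template.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Visit:
    """One clinic visit (hypothetical fields, not the study's schema)."""
    visit_date: date
    scheduled_date: date               # when the visit was supposed to happen
    pills_dispensed: int
    viral_load: Optional[int] = None   # copies/mL; None if not measured


def summarize_history(age: int, sex: str, visits: List[Visit]) -> str:
    """Render a care history as plain-language text, oldest visit first."""
    lines = [
        f"The patient is a {age}-year-old {sex} with {len(visits)} recorded visits."
    ]
    for v in sorted(visits, key=lambda item: item.visit_date):
        delay = (v.visit_date - v.scheduled_date).days
        if delay > 0:
            timing = f"{delay} days late"
        elif delay < 0:
            timing = f"{-delay} days early"
        else:
            timing = "on schedule"
        sentence = (
            f"On {v.visit_date.isoformat()} the patient attended {timing} "
            f"and received {v.pills_dispensed} pills."
        )
        if v.viral_load is not None:
            sentence += f" Viral load was {v.viral_load} copies/mL."
        lines.append(sentence)
    return "\n".join(lines)
```

Writing each visit as a sentence keeps the order and timing of events visible to the model, which is what lets it pick up on patterns such as repeated late appointments.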

Teaching an AI to think like a careful clinician

The team adapted an open-source language model (Llama 3.1) and fine-tuned it on the Tanzanian records so it could answer a specific question: in the coming year, is this patient likely to miss treatment for weeks, develop an unsuppressed viral load, or be lost to follow-up? To do this consistently, the model was instructed to respond in a fixed sentence format describing three outcomes: whether the virus would be suppressed or detectable, whether the person would likely be lost to follow-up for more than 28 days, and whether their risk of treatment non-adherence would be high, moderate, low, or none. Because the input was also written as standardized text, the system could both process complex histories and explain its reasoning in human-readable language.
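A fixed response format is useful because software can read it back reliably. The sketch below shows one way that might look. The sentence template in `PATTERN` and the `parse_prediction` helper are hypothetical; the article names the three outcomes and their possible values but not the exact wording the model was asked to use.

```python
import re
from typing import Optional

# Hypothetical template: the article does not give the exact sentence format.
PATTERN = re.compile(
    r"Viral load: (?P<viral_load>suppressed|detectable)\. "
    r"Lost to follow-up: (?P<ltfu>yes|no)\. "
    r"Non-adherence risk: (?P<risk>high|moderate|low|none)\."
)


def parse_prediction(response: str) -> Optional[dict]:
    """Extract the three outcomes from a model response.

    Returns None when the response does not follow the expected format,
    so callers can flag it for review instead of guessing.
    """
    match = PATTERN.search(response.strip())
    if match is None:
        return None
    return {
        "viral_load": match["viral_load"],
        "lost_to_follow_up": match["ltfu"] == "yes",
        "non_adherence_risk": match["risk"],
    }
```

Using `search` rather than a strict full match lets the model add a written explanation after the structured sentence without breaking the parser.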

How the new model stacks up against older tools

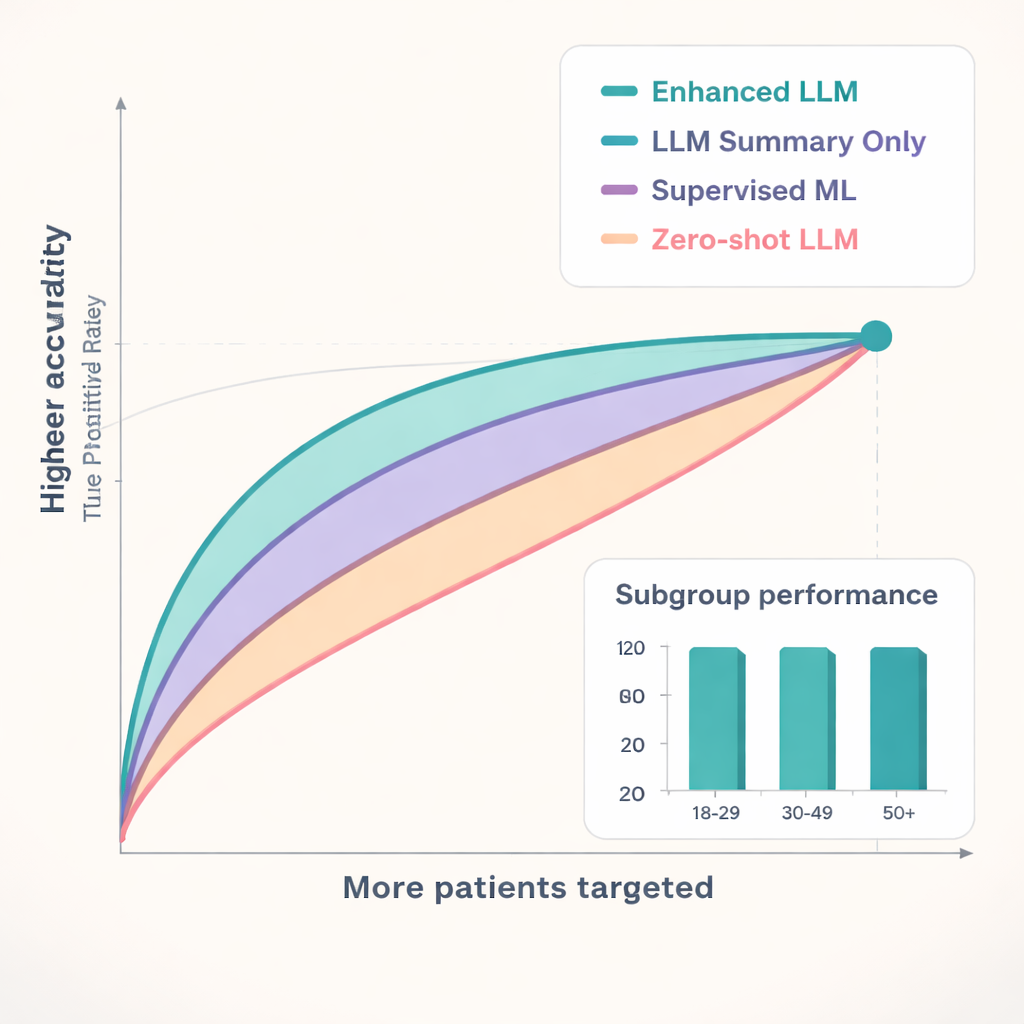

The enhanced language model was tested in two Tanzanian regions: Kagera, where it was trained, and Geita, whose data it had never seen. Its performance was compared to a strong traditional machine-learning method and to the same language model used “out of the box” without fine-tuning. Across key outcomes, the enhanced model consistently ranked patients more accurately. For predicting who would be lost to follow-up (a disruption of 28 days or more in care), it reached AUC scores of 0.77 in Kagera and 0.71 in Geita, higher than both the conventional model and the untuned language model. AUC measures how well a model ranks patients by risk: 0.5 is no better than chance and 1.0 is a perfect ranking. When health programs can only focus on a fraction of patients, this matters: among the 25% of patients the enhanced model flagged as highest-risk, roughly three out of four truly became lost to follow-up, allowing scarce resources to be aimed where they are most needed.
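The two measures in this section, AUC and the share of true cases among the top-flagged 25%, can be computed in a few lines. The sketch below is a generic illustration, not the study's evaluation code; the function names are made up, and any scores and labels passed in would be toy data rather than results from the paper.

```python
def auc(scores, labels):
    """Chance that a random positive case outranks a random negative one.

    Ties count as half a win. Labels are 1 (event happened) or 0 (did not).
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def precision_at_top(scores, labels, fraction=0.25):
    """Share of true positives among the highest-scoring fraction of patients."""
    k = max(1, int(len(scores) * fraction))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    return sum(y for _, y in ranked[:k]) / k
```

The pairwise AUC loop is quadratic in the number of patients, which is fine for a demonstration but too slow for hundreds of thousands of records; a rank-based formula or a library routine would be the practical choice at that scale.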

What the AI “pays attention” to

Because language models use attention mechanisms, the researchers could see which pieces of information most influenced predictions. The model focused strongly on factors related to care continuity: long gaps between visits, delayed or missed appointments, signals of poor pill-taking, and how long someone had lived with HIV. Age and sex also played roles, with especially strong performance in predicting loss to follow-up among older adults and people who had not been in care in 2021. Compared with the traditional model, which leaned more on basic demographics and pill counts, the enhanced language model drew a richer picture of patient engagement over time. Tanzanian HIV physicians who reviewed a sample of cases agreed with the model’s judgments 65% of the time, and in most of those aligned cases they found the AI’s written explanations clinically sensible.

Balancing promise, privacy, and practicality

The study also wrestled with real-world concerns about privacy and deployment. All data were de-identified and stored on a secure local computing cluster, and the team tested additional safeguards such as shifting visit dates slightly while preserving timelines. They note that using such advanced AI introduces technical and maintenance challenges, and that a model developed and tested in only two Tanzanian regions may need adaptation elsewhere. Still, because the enhanced model was better at identifying high-risk patients even when such cases were relatively rare, it could make outreach programs more efficient, helping clinicians act earlier, before treatment lapses lead to viral rebound and greater risk of transmission.
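One common way to shift dates while preserving timelines is to move every date for a given patient by the same random offset, so the gaps between visits stay intact. The sketch below assumes that approach; the article does not say how the study implemented it, and `shift_dates` and the 14-day limit are illustrative choices.

```python
import random
from datetime import date, timedelta


def shift_dates(visit_dates, max_shift_days=14, rng=None):
    """Shift all of one patient's dates by a single random offset.

    Because the same offset is applied to every date, the intervals
    between visits are unchanged. The offset may happen to be zero.
    """
    rng = rng or random.Random()
    offset = rng.randint(-max_shift_days, max_shift_days)
    return [d + timedelta(days=offset) for d in visit_dates]
```

Drawing a fresh offset per patient, rather than one for the whole dataset, makes it harder to recover real dates from a single known visit.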

What this means for people living with HIV

For a lay observer, the bottom line is that this kind of AI acts like an extra set of expert eyes scanning thousands of patient histories at once. It does not replace doctors or nurses, but it can alert them when someone’s pattern of visits and lab results suggests they may soon slip out of care. Used carefully and ethically, such tools could help health workers in Tanzania and similar settings direct phone calls, home visits, or financial support to those who need them most, boosting treatment success rates and bringing the world closer to long-standing goals of controlling the HIV epidemic.

Citation: Wei, W., Shao, J., Lyu, R.Q. et al. Enhanced language models for predicting and understanding HIV care disengagement: a case study in Tanzania. npj Digit. Med. 9, 165 (2026). https://doi.org/10.1038/s41746-026-02349-3

Keywords: HIV care retention, large language models, electronic medical records, sub-Saharan Africa, antiretroviral therapy adherence