Clear Sky Science

PrysmNet: a polyp refining system using salience and multimodal guidance for reproducible cross-domain segmentation

Why spotting tiny growths matters

Colorectal cancer often begins as small, harmless-looking bumps called polyps on the lining of the colon. Catching and removing these polyps early can prevent cancer, but even expert doctors miss a noticeable share of them during colonoscopy, especially when the growths are tiny or their edges are hard to see. This study introduces PrysmNet, a new artificial intelligence (AI) system designed to help doctors find and outline polyps more reliably across different hospitals, cameras, and patient groups, while keeping the system fast enough for real-time use during procedures.

A smarter helper for colonoscopy

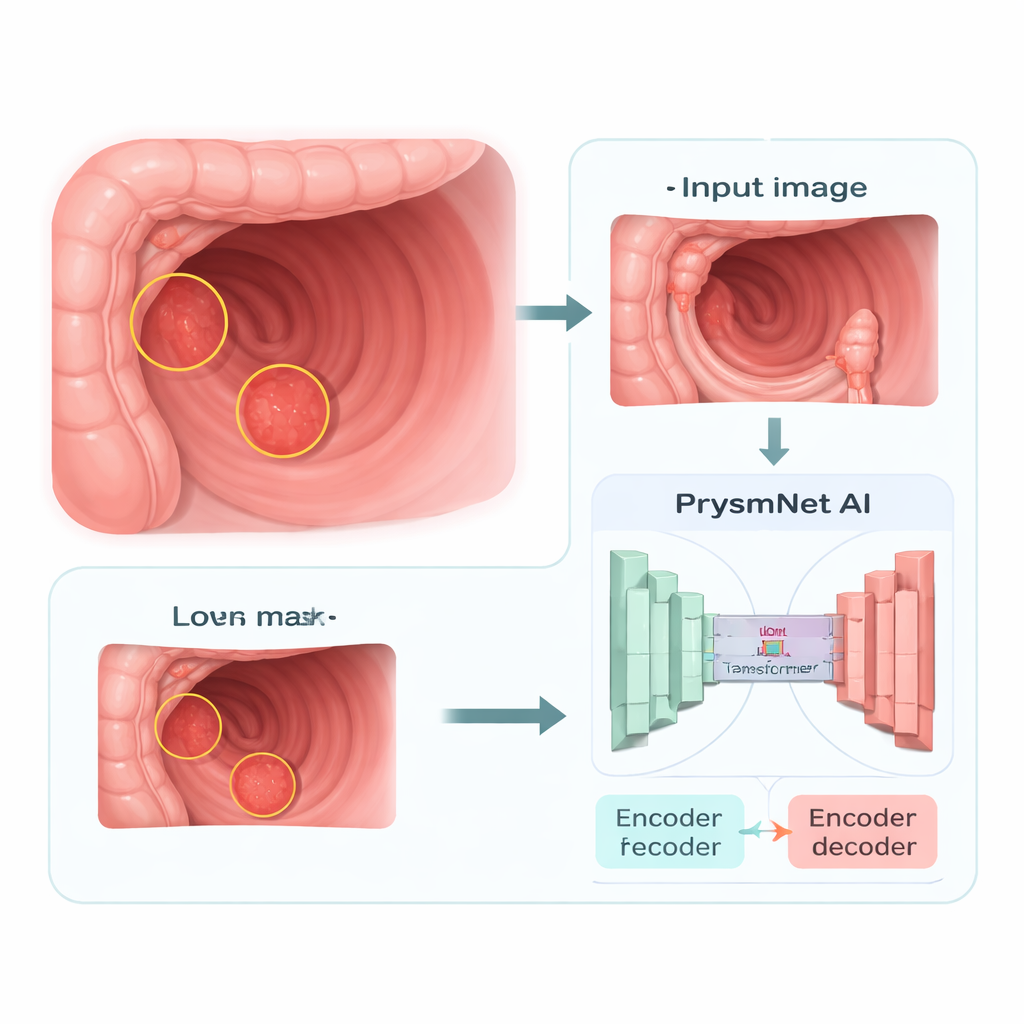

PrysmNet is a computer vision system that takes colonoscopy images as input and produces a detailed map showing which pixels belong to a polyp. Unlike many earlier tools that work best only on the kind of images they were trained on, this system is built to stay accurate when exposed to new equipment, lighting, and patient populations. It uses a modern “transformer” backbone, a type of AI originally developed for language and now popular in image analysis, to look at the whole scene at once and reason about where a polyp is likely to be, even when it occupies only a very small part of the frame or blends into the surrounding tissue.

Borrowing tricks from human vision

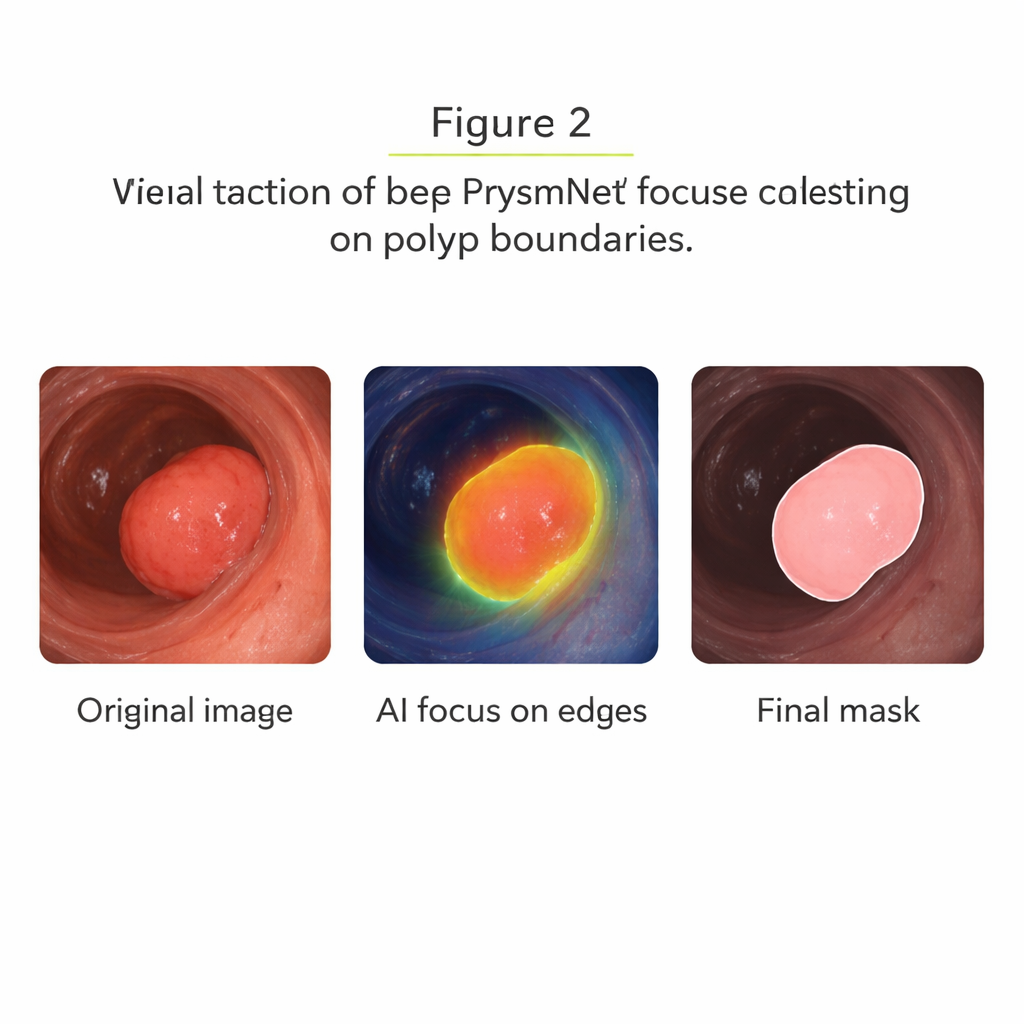

A key innovation in PrysmNet is a boundary-focused component inspired by how our own visual system detects edges and contrast. The authors add a “salience module” that scans the image features at several scales to highlight where intensity and texture change sharply, which often corresponds to the border of a polyp. Instead of treating all regions equally, the network is nudged to concentrate its effort along these borders, sharpening the outline it draws. This is especially important for flat or faint polyps, whose boundaries are easy to miss both for humans and for machines. By explicitly supervising this module on known polyp edges during training, the system learns to draw cleaner, more clinically useful masks.
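
To make the idea of "known polyp edges" concrete, here is a minimal sketch of how a boundary target could be derived from an expert-drawn mask. The function name `boundary_map` and the 4-neighbour rule are illustrative assumptions; the summary does not say how the authors actually build their edge labels.

```python
def boundary_map(mask):
    """Mark polyp pixels that touch at least one background pixel.

    `mask` is a list of rows holding 0 (background) or 1 (polyp).
    Assumption for illustration: a 4-neighbour rule defines the edge.
    """
    h, w = len(mask), len(mask[0])
    edges = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if mask[y][x] != 1:
                continue
            for dy, dx in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                ny, nx = y + dy, x + dx
                # Pixels outside the image are treated as background.
                outside = not (0 <= ny < h and 0 <= nx < w)
                if outside or mask[ny][nx] == 0:
                    edges[y][x] = 1
                    break
    return edges


# A toy 3x3 "polyp" inside a 5x5 frame.
mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
edges = boundary_map(mask)
for row in edges:
    print(row)
```

In this toy case the eight outer polyp pixels are marked as boundary and the single interior pixel is not, which is the kind of thin outline a boundary-focused module could be trained to highlight.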

Learning from a giant and using extra cues

To further improve robustness, the researchers let PrysmNet learn from an even larger, general-purpose segmentation model called the “Segment Anything Model,” which was trained on over a billion object outlines from everyday photos. During training, they run both systems on the same colonoscopy images and encourage PrysmNet to mimic the larger model’s overall shapes, boundaries, and internal features, while still respecting the expert-drawn medical labels. In parallel, they feed in simple extra views of each frame—edge maps and texture patterns—through a temporary guidance branch. This extra information helps the network become less sensitive to changes in color or lighting. Crucially, these guidance parts are turned off once training is complete, so the final system remains lightweight and fast for use in real clinics.
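
The "mimic the larger model while respecting the labels" idea can be sketched as a two-part training loss. Everything specific here is an assumption for illustration: the names `training_loss` and `distill_weight`, the use of binary cross-entropy for the label term, and mean squared error for the mimicry term. The summary does not state which loss functions or weights the authors use.

```python
import math


def bce(pred, target, eps=1e-7):
    """Binary cross-entropy between predicted probabilities and 0/1 labels."""
    total = 0.0
    for p, t in zip(pred, target):
        p = min(max(p, eps), 1 - eps)  # clamp to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(pred)


def mse(a, b):
    """Mean squared difference between two equally long sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def training_loss(student, teacher, label, distill_weight=0.5):
    """Label term plus a weighted 'agree with the teacher' term (hypothetical form)."""
    return bce(student, label) + distill_weight * mse(student, teacher)


label = [1, 0]             # expert annotation for two pixels
student = [0.9, 0.1]       # the smaller model's predicted probabilities
agreeing_teacher = [0.9, 0.1]
differing_teacher = [0.6, 0.4]

loss_agree = training_loss(student, agreeing_teacher, label)
loss_differ = training_loss(student, differing_teacher, label)
print(loss_agree, loss_differ)
```

Because the teacher term only appears in the loss, it can be dropped entirely at inference time, which is consistent with the guidance parts being switched off after training.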

Proving it works in the real world

The team tested PrysmNet on several widely used polyp image collections, both in the same setting it was trained on and, more demandingly, on data from different hospitals and camera systems. On standard benchmarks, the model matched or slightly exceeded the accuracy of the best existing methods. The more striking results came from a “cross-domain” test, in which PrysmNet was trained on only two datasets and then evaluated on a third, independent multi-center set. Here it achieved higher overlap scores and noticeably cleaner boundaries than previous systems, including a strong recent competitor specifically tuned for polyp edges. Side-by-side visual examples show that PrysmNet better captures tiny and low-contrast polyps, and that its attention maps concentrate around true lesion borders instead of spreading diffusely.
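
For readers wondering what an "overlap score" measures, the sketch below computes two metrics commonly used in segmentation, the Dice coefficient and intersection over union (IoU), on a toy pair of masks. Whether these are the exact metrics reported in the paper is an assumption; the function name `overlap_scores` is hypothetical.

```python
def overlap_scores(pred, truth):
    """Return (dice, iou) for two binary masks given as lists of 0/1 rows."""
    inter = sum(p & t for pr, tr in zip(pred, truth) for p, t in zip(pr, tr))
    pred_area = sum(map(sum, pred))
    truth_area = sum(map(sum, truth))
    union = pred_area + truth_area - inter
    # Two empty masks agree perfectly, so score them as 1.0.
    dice = 2 * inter / (pred_area + truth_area) if (pred_area + truth_area) else 1.0
    iou = inter / union if union else 1.0
    return dice, iou


pred = [[0, 1, 1],
        [0, 1, 1]]   # model output: 4 polyp pixels
truth = [[0, 1, 0],
         [0, 1, 1]]  # expert label: 3 polyp pixels

dice, iou = overlap_scores(pred, truth)
print(round(dice, 3), iou)  # 0.857 0.75
```

Here three pixels are shared, so Dice is 2·3/(4+3) = 6/7 and IoU is 3/4. Both scores reach 1.0 only when the predicted and expert masks coincide exactly.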

Remaining challenges and what this means for patients

Despite its advances, PrysmNet is not perfect. It can still be fooled by bright reflections that resemble tissue, and it occasionally misses extremely flat or nearly invisible lesions. These failures are rare in the tests—roughly a few percent of cases—but they highlight that AI should be seen as an assistant, not a replacement, for skilled endoscopists. Overall, this work demonstrates that combining a globally aware AI backbone with boundary-aware refinement and smart training guidance can make computer-aided colonoscopy more reliable. If integrated safely into endoscopy systems, tools like PrysmNet could help doctors spot more dangerous polyps, define cleaner removal margins, and ultimately reduce the risk of colorectal cancer for patients.

Citation: Xiao, J., Han, Y., Wang, L. et al. PrysmNet: a polyp refining system using salience and multimodal guidance for reproducible cross-domain segmentation. npj Digit. Med. 9, 158 (2026). https://doi.org/10.1038/s41746-026-02345-7

Keywords: colonoscopy AI, polyp detection, medical image segmentation, colorectal cancer prevention, deep learning in endoscopy