Clear Sky Science

Structure-aware multi-task learning with domain generalization for robust vertebrae analysis in spinal CT

Why Smarter Spine Scans Matter

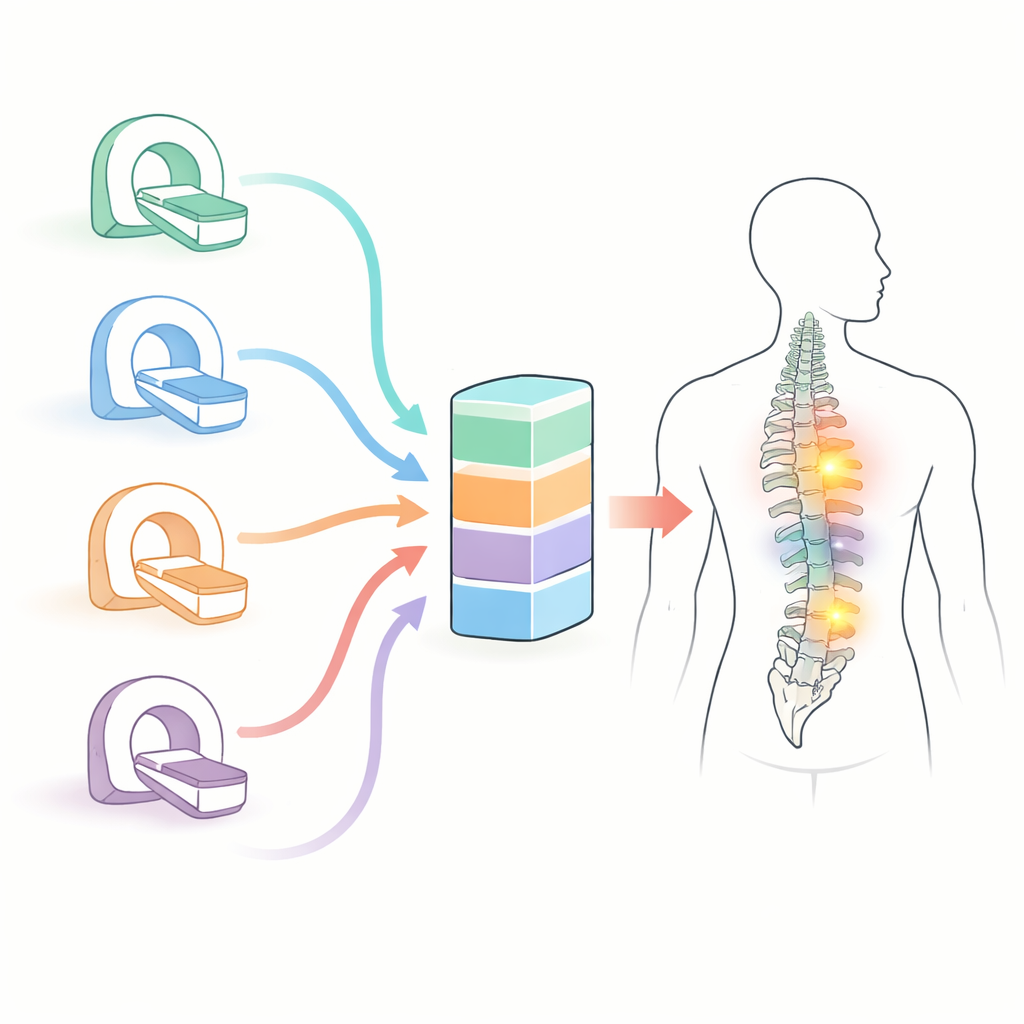

Back pain, fractures, and spinal tumors affect millions of people, yet reading spinal CT scans is painstaking work for radiologists. Every scan can contain dozens of vertebrae and subtle signs of damage that are easy to miss—especially when images come from many different hospitals and machines. This study introduces a new artificial intelligence (AI) system, called VertebraFormer, designed to automatically outline each vertebra, assign its correct position in the spine, and highlight suspicious lesions, all while staying reliable across a wide variety of real-world scans.

One System for Many Spine Problems

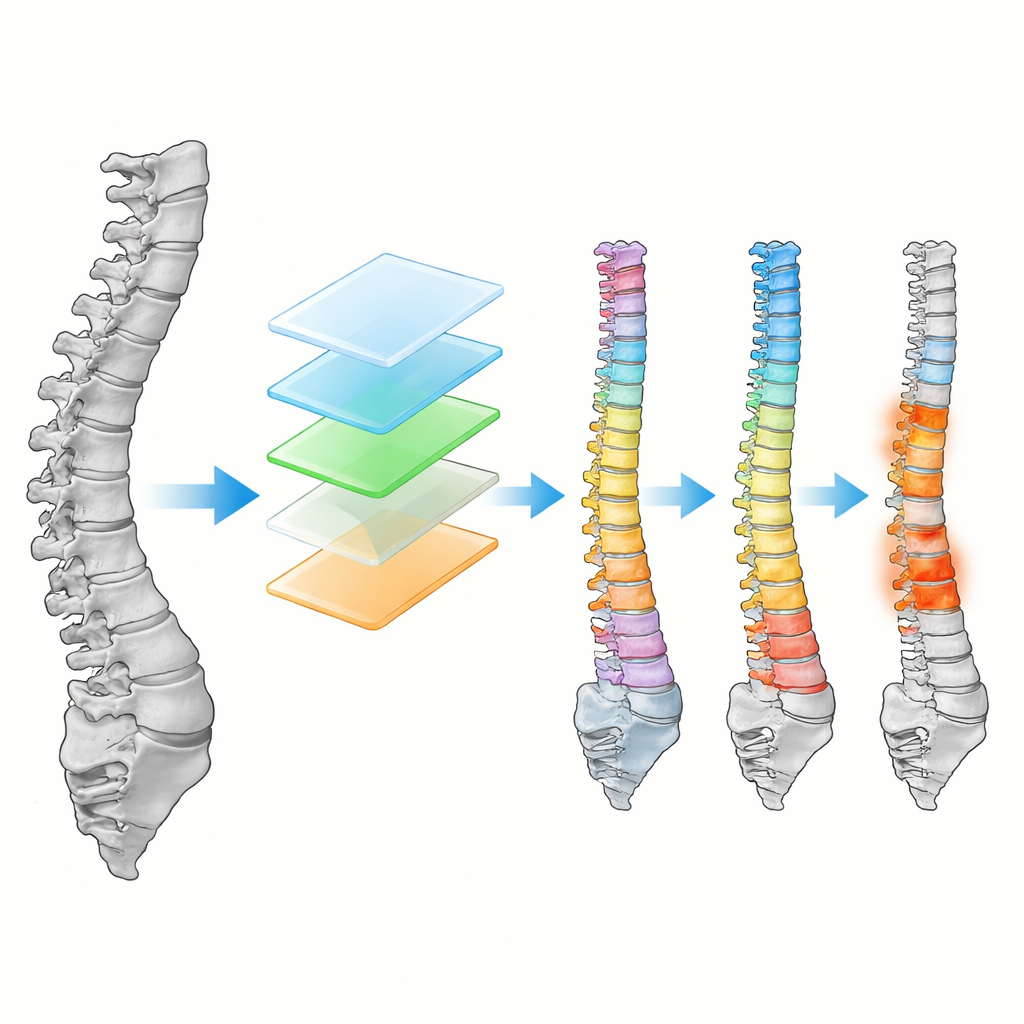

Instead of building separate algorithms for each task, the researchers created a unified model that tackles three jobs at once: drawing precise outlines of every vertebra, numbering them from the neck down to the lower back, and pointing to areas that may represent fractures, spread of cancer, or other damage. VertebraFormer is built on a modern “transformer” architecture, originally popularized in language and image understanding, which is especially good at seeing long-range patterns. This is crucial for the spine, where the shape of any one vertebra makes sense only in the context of the whole column.

A Diverse Benchmark of Real-World Scans

To test whether their system would hold up beyond a single lab or hospital, the team assembled a new benchmark they call MultiSpine. It combines six different datasets, including large public collections and private hospital cohorts, covering the neck, chest, and lower back regions, and in some cases both CT and MRI. The scans were acquired on various scanner brands with different imaging protocols, and expert radiologists annotated vertebral outlines, their anatomical labels, and—where available—pathology regions. The authors also went to unusual lengths to ensure there were no hidden duplicates across datasets, carefully tracking scan identifiers and using “perceptual hashing” to catch near-identical images.
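The duplicate check described above can be illustrated with a small sketch. The article does not say which perceptual hash the authors used, so this example assumes the simplest common variant, an "average hash" computed on a single 2D slice given as a list of rows. The function names and the distance threshold are illustrative choices, not taken from the study.

```python
from statistics import mean


def average_hash(image, hash_size=8):
    """Return a hash_size*hash_size-bit fingerprint of a 2D grayscale image.

    The image is block-averaged down to a hash_size x hash_size grid, and
    each cell contributes one bit: 1 if it is brighter than the grid mean.
    """
    rows, cols = len(image), len(image[0])
    if rows < hash_size or cols < hash_size:
        raise ValueError("image is smaller than the hash grid")
    cells = []
    for i in range(hash_size):
        r0, r1 = i * rows // hash_size, (i + 1) * rows // hash_size
        for j in range(hash_size):
            c0, c1 = j * cols // hash_size, (j + 1) * cols // hash_size
            block = [image[r][c] for r in range(r0, r1) for c in range(c0, c1)]
            cells.append(mean(block))
    threshold = mean(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (1 if value > threshold else 0)
    return bits


def hamming_distance(hash_a, hash_b):
    """Count the bits in which two fingerprints differ."""
    return bin(hash_a ^ hash_b).count("1")


def is_near_duplicate(image_a, image_b, max_distance=5):
    """Flag two images as near-identical (max_distance is an assumed cutoff)."""
    return hamming_distance(average_hash(image_a), average_hash(image_b)) <= max_distance


# Toy data: a smooth gradient, a slightly brighter copy, and an inverted copy.
gradient = [[r * 16 + c for c in range(16)] for r in range(16)]
brighter = [[v + 3 for v in row] for row in gradient]
inverted = [[255 - v for v in row] for row in gradient]
```

Because the fingerprint depends only on which regions are brighter than average, a uniformly brightened copy hashes identically to the original, while an unrelated or inverted image lands far away in Hamming distance. That is the property that makes this kind of hash useful for catching the same scan appearing in two collections under different identifiers.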

How the AI Learns Spine Structure and Lesions

Inside VertebraFormer, a shared encoder first converts a 3D spinal scan into a set of patches and learns how these pieces relate across the entire column. On top of this shared backbone sit three specialized branches. One reconstructs a detailed 3D mask of all vertebrae. Another focuses on each vertebra in turn, using its location and surroundings to decide whether it is, for example, T11 or L3. A third branch produces heatmaps that glow brightest where a lesion is likely. Crucially, the model also includes a “dynamic modulation” unit that senses the imaging style—differences between scanners, protocols, or even CT versus MRI—and subtly adjusts its internal processing, aiming to remain accurate even when confronted with an unfamiliar type of scan.
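To make the data flow concrete, here is a toy sketch of one shared feature set feeding three task branches, with a domain-dependent adjustment applied first. It is not the authors' implementation: the article does not describe the modulation unit's internals, so this example assumes a feature-wise scale and shift selected by a domain name, and every weight, domain name, and label below is a made-up placeholder. For simplicity the patch tokens are treated as belonging to a single vertebra.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def modulate(tokens, gamma, beta):
    """Scale and shift each feature channel of every patch token."""
    return [[g * x + b for x, g, b in zip(tok, gamma, beta)] for tok in tokens]


# Hypothetical per-domain (gamma, beta) pairs; a trained model would learn these.
DOMAIN_PARAMS = {
    "site_a": ([1.0, 1.0, 1.0], [0.0, 0.0, 0.0]),
    "site_b": ([0.5, 2.0, 1.0], [0.2, -0.1, 0.0]),
}
# Hypothetical head weights, one vector per output.
SEG_WEIGHTS = [1.5, -0.5, 0.2]
LESION_WEIGHTS = [-0.3, 0.8, 1.1]
ID_WEIGHTS = {
    "T11": [0.9, 0.1, -0.2],
    "T12": [0.2, 0.7, 0.1],
    "L1": [-0.4, 0.3, 0.9],
}


def forward(tokens, domain):
    """Run the three task heads on one set of domain-modulated features."""
    gamma, beta = DOMAIN_PARAMS[domain]
    shared = modulate(tokens, gamma, beta)
    # Average over tokens to get one feature vector for the identification head.
    pooled = [sum(channel) / len(shared) for channel in zip(*shared)]
    label_probs = softmax([dot(pooled, w) for w in ID_WEIGHTS.values()])
    return {
        "segmentation": [sigmoid(dot(t, SEG_WEIGHTS)) for t in shared],
        "identification": dict(zip(ID_WEIGHTS, label_probs)),
        "lesion_heatmap": [sigmoid(dot(t, LESION_WEIGHTS)) for t in shared],
    }


patch_tokens = [[0.2, 0.4, 0.1], [1.0, -0.3, 0.5]]
outputs = forward(patch_tokens, "site_a")
```

The point of the sketch is the sharing: all three outputs are computed from the same modulated features, so changing the domain setting changes every task's prediction at once.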

Putting Robustness to the Test

The researchers benchmarked VertebraFormer against leading spine-analysis models on the MultiSpine dataset. It consistently achieved higher accuracy in outlining vertebrae, correctly numbering them, and detecting lesions. In a tougher “zero-shot” test, the model was trained on several datasets and then evaluated on a completely unseen one, mimicking deployment at a new hospital. Here, too, VertebraFormer outperformed alternatives and showed only modest performance drops. The team probed the design through ablation studies, demonstrating that each added component—the identification branch, lesion detector, and especially the domain-modulation block—contributed measurable gains. Despite its sophistication, the model processes about 14 full 3D volumes per second on modern hardware, beating an equivalently fast multi-network pipeline on all three tasks.
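The "zero-shot" protocol amounts to a leave-one-dataset-out split: train on all collections but one, then test on the one left out. A minimal sketch follows, with placeholder dataset names and scan identifiers; the real MultiSpine components are not named in this summary.

```python
def leave_one_dataset_out(scans_by_dataset):
    """Yield (held_out_name, train_scans, test_scans) for each dataset in turn."""
    for held_out in sorted(scans_by_dataset):
        train = [
            scan
            for name in sorted(scans_by_dataset)
            if name != held_out
            for scan in scans_by_dataset[name]
        ]
        test = list(scans_by_dataset[held_out])
        yield held_out, train, test


# Placeholder collections standing in for the benchmark's component datasets.
collections = {
    "dataset_a": ["a_001", "a_002"],
    "dataset_b": ["b_001"],
    "dataset_c": ["c_001", "c_002"],
}

for held_out, train, test in leave_one_dataset_out(collections):
    print(f"held out {held_out}: {len(train)} training scans, {len(test)} test scans")
```

Splitting by dataset rather than by individual scan is what makes the test meaningful: no image from the held-out source, and therefore none of its scanner or protocol quirks, is seen during training.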

Handling Noisy and Shifted Data

Real clinical scans are far from perfect, so the authors stressed the model with simulated perturbations such as extra noise, intensity shifts, thicker slices, and metal artifacts. VertebraFormer remained stable under moderate degradations and only faltered under extreme conditions. The authors also showed that performance drops when the domain information is mis-specified, confirming that the modulation mechanism is meaningful rather than decorative. At the same time, alternative on-the-fly adaptation strategies, such as adjusting feature statistics or minimizing prediction uncertainty during testing, helped recover some performance when domain labels were unreliable or unavailable.
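The two adaptation ideas mentioned above can be sketched in a few lines. This is an illustration under assumptions, not the study's procedure: "adjusting feature statistics" is shown as re-standardizing each feature channel using the incoming test batch itself, and "prediction uncertainty" is shown as the entropy of a predicted probability distribution, the quantity an entropy-minimizing method would try to reduce. The function names and toy numbers are invented for the example.

```python
import math


def renormalize(batch, eps=1e-5):
    """Standardize each feature channel with the test batch's own mean and variance."""
    n = len(batch)
    columns = list(zip(*batch))
    means = [sum(col) / n for col in columns]
    variances = [
        sum((x - m) ** 2 for x in col) / n for col, m in zip(columns, means)
    ]
    return [
        [(x - m) / math.sqrt(v + eps) for x, m, v in zip(row, means, variances)]
        for row in batch
    ]


def prediction_entropy(probs):
    """Shannon entropy in nats; higher values mean a less certain prediction."""
    return -sum(p * math.log(p) for p in probs if p > 0)


# Toy features from a familiar scanner and the same features with an intensity offset.
familiar = [[1.0, 10.0], [3.0, 30.0], [5.0, 20.0]]
shifted = [[x + 100.0 for x in row] for row in familiar]

confident = prediction_entropy([0.98, 0.01, 0.01])
uncertain = prediction_entropy([0.34, 0.33, 0.33])
```

Re-standardizing removes a constant offset entirely, so the familiar and shifted batches end up with the same normalized features. Entropy, in turn, gives a label-free signal: a model can be nudged at test time toward settings that make its predictions more decisive, which is why such methods do not need domain labels.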

What This Means for Patients and Clinicians

For non-specialists, the key message is that VertebraFormer brings many pieces of spinal image analysis into a single, faster, and more reliable AI tool. By learning the overall structure of the spine, adapting to different scanners and hospitals, and simultaneously spotting both anatomy and disease, it reduces the need for multiple separate systems and can provide radiologists with clear outlines, consistent numbering, and intuitive heatmaps of suspicious areas. While it still needs prospective testing in live clinical workflows and broader training on rare conditions and multi-modal scans, this work lays important groundwork toward automated spine assessments that are accurate, interpretable, and robust enough to help doctors wherever the scans are taken.

Citation: Du, J., Ge, H., Zhang, R. et al. Structure-aware multi-task learning with domain generalization for robust vertebrae analysis in spinal CT. npj Digit. Med. 9, 217 (2026). https://doi.org/10.1038/s41746-025-02288-5

Keywords: spinal CT, vertebra segmentation, lesion detection, medical imaging AI, domain generalization