Clear Sky Science

The application of pre-trained large visual-language models for preliminary diagnosis of esophageal whitish plaques in large-scale esophageal cancer screening

Why these throat spots matter

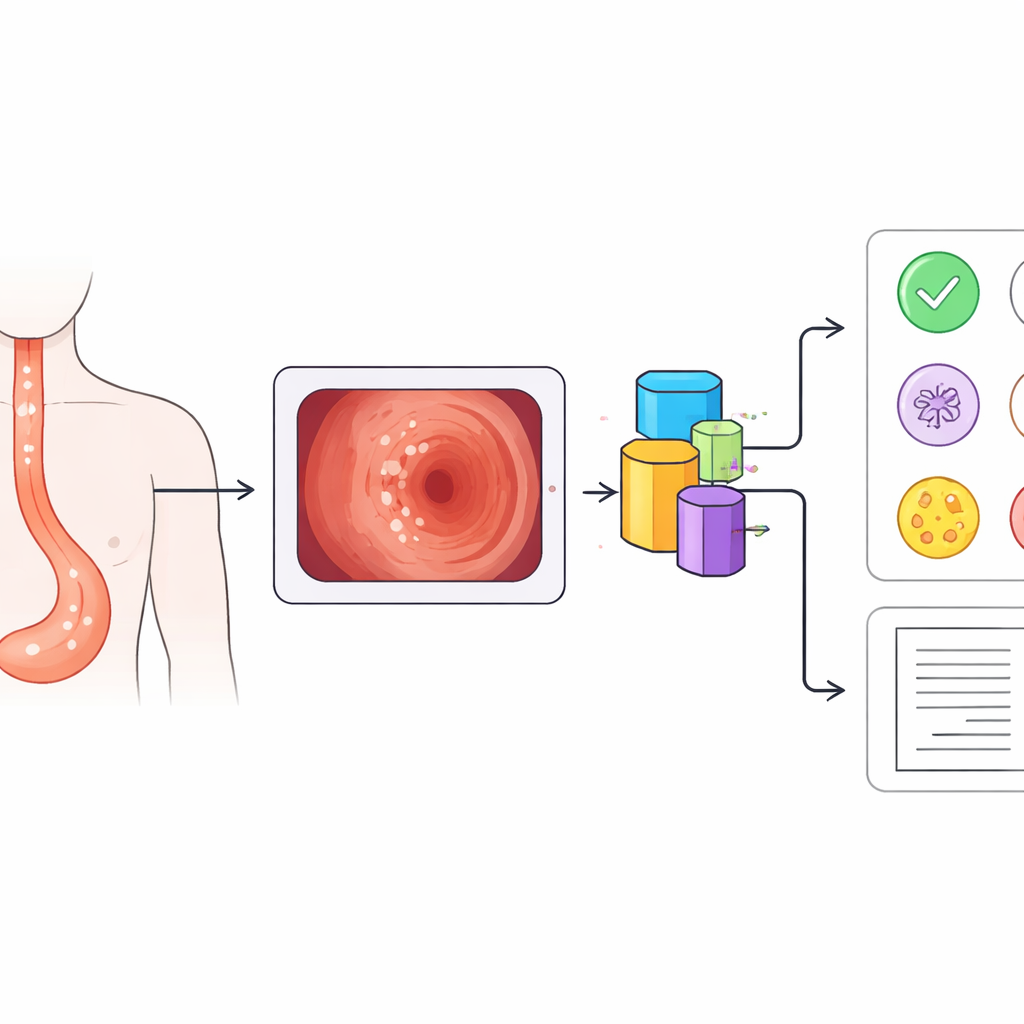

During routine stomach and throat exams, doctors often see small white patches inside the food pipe, or esophagus. Most are harmless, but some signal early cancer that can be cured if caught in time. Telling these look‑alike spots apart in busy screening programs is hard, even for experts. This study explores whether an advanced artificial intelligence (AI) system can help doctors quickly sort dangerous patches from harmless ones, and even describe what it sees in plain language.

Common white spots with very different risks

White patches in the esophagus are surprisingly common: in the large screening program behind this study, more than one in five patients had them. Yet these plaques can stem from very different problems. Some are early esophageal cancers, appearing as slightly raised, rough white areas that cannot be wiped away. Others are caused by fungal infection, which forms soft white coatings that may peel off to reveal raw tissue underneath. Still others are tiny benign growths called papillomas, or flat grainy patches known as glycogenic acanthosis, both usually harmless and suitable for simple follow‑up. Because the next step ranges from urgent biopsy to simple observation, getting this first visual judgment right is crucial.

Turning endoscope pictures into smart guidance

The researchers built a computer‑aided diagnosis system on top of a powerful vision‑language model known as BLIP, originally trained on huge collections of images and text. They fed the system 13,922 endoscopic images from more than 2,000 patients, covering the four main causes of whitish plaques and using both standard white‑light views and a special contrast mode called narrow‑band imaging. Unlike earlier tools that simply assign a disease label, this system does two things at once: it predicts which of the four conditions is present and generates a short written description of what it “sees” in the image, such as the location and appearance of the plaques.

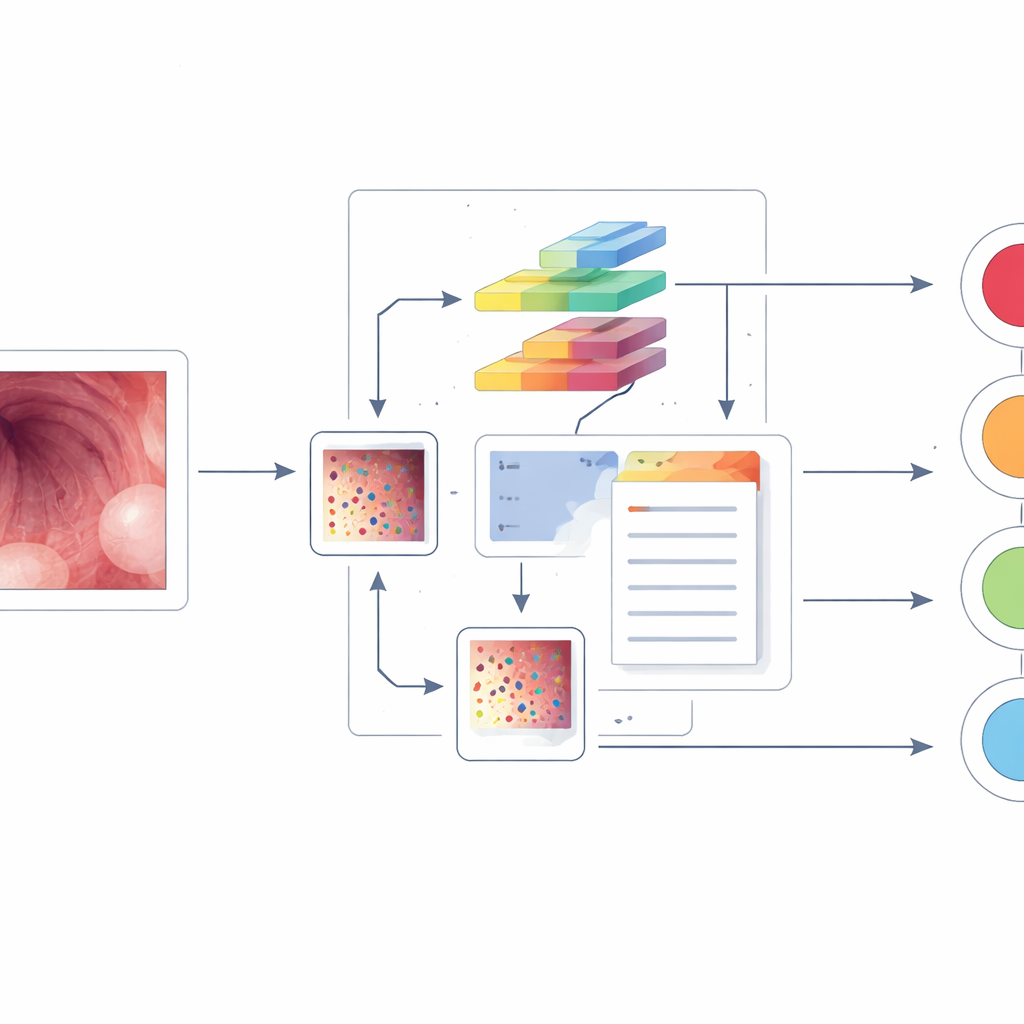

Teaching AI more with limited medical data

Medical image collections are small compared with everyday photo archives, which can limit AI performance. To address this, the team added special “positive‑incentive noise” modules to the BLIP model. In simple terms, these modules create gentle, data‑driven variations of each image and of the model’s internal feature maps, nudging the system to learn more robust patterns without overwhelming it with random changes. The model was then fine‑tuned so that its image understanding aligned closely with the expert diagnoses and text descriptions provided by experienced endoscopists.
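For readers who like to see the idea in code, here is a loose sketch of what "data‑driven" noise can mean. It is not the authors' positive‑incentive noise module, whose details are not given here. It only illustrates the general principle that the size of each perturbation is tied to how much the data themselves vary, rather than being purely random. The function name `data_scaled_noise`, the `strength` parameter, and the toy feature values are all assumptions made for illustration.

```python
import random
import statistics


def data_scaled_noise(features, strength=0.1, seed=0):
    """Return a gently perturbed copy of `features` (a list of equal-length rows).

    Hypothetical illustration only: the noise added to each dimension is
    Gaussian, with a standard deviation proportional to that dimension's
    spread across the rows, so dimensions that never vary are left untouched.
    """
    rng = random.Random(seed)  # fixed seed makes the sketch reproducible
    n_dims = len(features[0])
    # Spread (population standard deviation) of each dimension across rows.
    spreads = [
        statistics.pstdev([row[d] for row in features]) for d in range(n_dims)
    ]
    return [
        [value + rng.gauss(0.0, strength * spreads[d]) for d, value in enumerate(row)]
        for row in features
    ]


# Toy feature rows, invented for this example (not data from the study).
toy_features = [[1.0, 5.0], [3.0, 5.0]]
noisy = data_scaled_noise(toy_features, strength=0.1, seed=1)
```

In this toy case the second dimension is identical in every row, so it receives no noise at all, while the first dimension is nudged by a small amount relative to its spread. That captures the "gentle" part of the description above in a very simplified way.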

Outperforming both rival models and human experts

When tested, the new system beat several leading image‑only AI models on all key measures of performance for all four diseases, using both endoscopy modes. It also outshone a specialized medical vision‑language model called LLaVA‑Med at the task of generating accurate diagnostic keywords within its text descriptions. In a direct “reading competition” against four endoscopists—two senior and two junior—the AI achieved higher overall accuracy in classifying images. Most strikingly, it was better than all of the doctors at catching early esophageal cancer, especially in terms of recall, meaning it missed fewer cancer cases while maintaining solid precision.
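Recall and precision are simple ratios, and a small sketch may make the distinction concrete. Recall asks what share of the true cancers a reader caught; precision asks what share of the reader's cancer calls were actually cancer. The labels below are made up for illustration and are not results from the study, and the helper name `precision_recall` is an assumption.

```python
def precision_recall(y_true, y_pred, positive):
    """Compute precision and recall for one class treated as 'positive'."""
    pairs = list(zip(y_true, y_pred))
    true_pos = sum(1 for t, p in pairs if t == positive and p == positive)
    false_pos = sum(1 for t, p in pairs if t != positive and p == positive)
    false_neg = sum(1 for t, p in pairs if t == positive and p != positive)
    # Guard against division by zero when a class is never predicted or never present.
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return precision, recall


# Invented example: six images, their true diagnoses, and one reader's calls.
truth = ["cancer", "cancer", "fungal", "papilloma", "cancer", "acanthosis"]
reader = ["cancer", "fungal", "fungal", "papilloma", "cancer", "cancer"]

precision, recall = precision_recall(truth, reader, positive="cancer")
```

Here the reader catches two of the three true cancers (recall of two thirds) and is right on two of three cancer calls (precision of two thirds). In a screening setting, a missed cancer is usually the costlier error, which is why the recall comparison carries so much weight.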

What this could mean for future checkups

The study suggests that carefully adapted vision‑language AI could become a valuable assistant in large‑scale screening programs. Such a system could flag suspicious white patches in real time, reduce missed early cancers, and spare many patients from unnecessary biopsies by reassuring doctors when a lesion looks safely benign. The work still needs to be tested on endoscopy videos, on rarer kinds of white plaques, and across multiple hospitals, but it points toward a future where AI not only spots trouble in medical images, but also explains its reasoning in language that supports faster, more consistent clinical decisions.

Citation: Li, Y., Li, X., Zhang, D. et al. The application of pre-trained large visual-language models for preliminary diagnosis of esophageal whitish plaques in large-scale esophageal cancer screening. npj Precis. Onc. 10, 94 (2026). https://doi.org/10.1038/s41698-026-01301-8

Keywords: esophageal cancer screening, endoscopy AI, vision-language models, computer-aided diagnosis, whitish esophageal plaques