Clear Sky Science

Multi-omics deep learning improves FDG PET-CT-based long-term prognostication of breast cancer

Why this matters for patients and families

When someone is diagnosed with breast cancer, one of the first questions they ask is, “What does this mean for my future?” Today’s staging systems and lab tests offer only rough estimates. This study explores whether combining medical scans, radiologists’ written reports, and basic clinical information with advanced artificial intelligence can give a clearer, more personalized outlook on long‑term survival and on the risk of the cancer coming back.

Looking inside the body’s fuel use

A key tool in this research is a scan called FDG PET-CT. It shows not only the shape of tissues, like a regular CT, but also how much sugar they consume, which reveals how active a tumor is. Doctors already know that certain numbers from these scans—such as how “bright” the tumor looks or how big it is—are linked to outcomes. However, these traditional measurements capture only a small slice of the rich information hidden in the images and often depend on labor‑intensive work by specialists to outline tumors by hand.

Teaching computers to read scans and reports

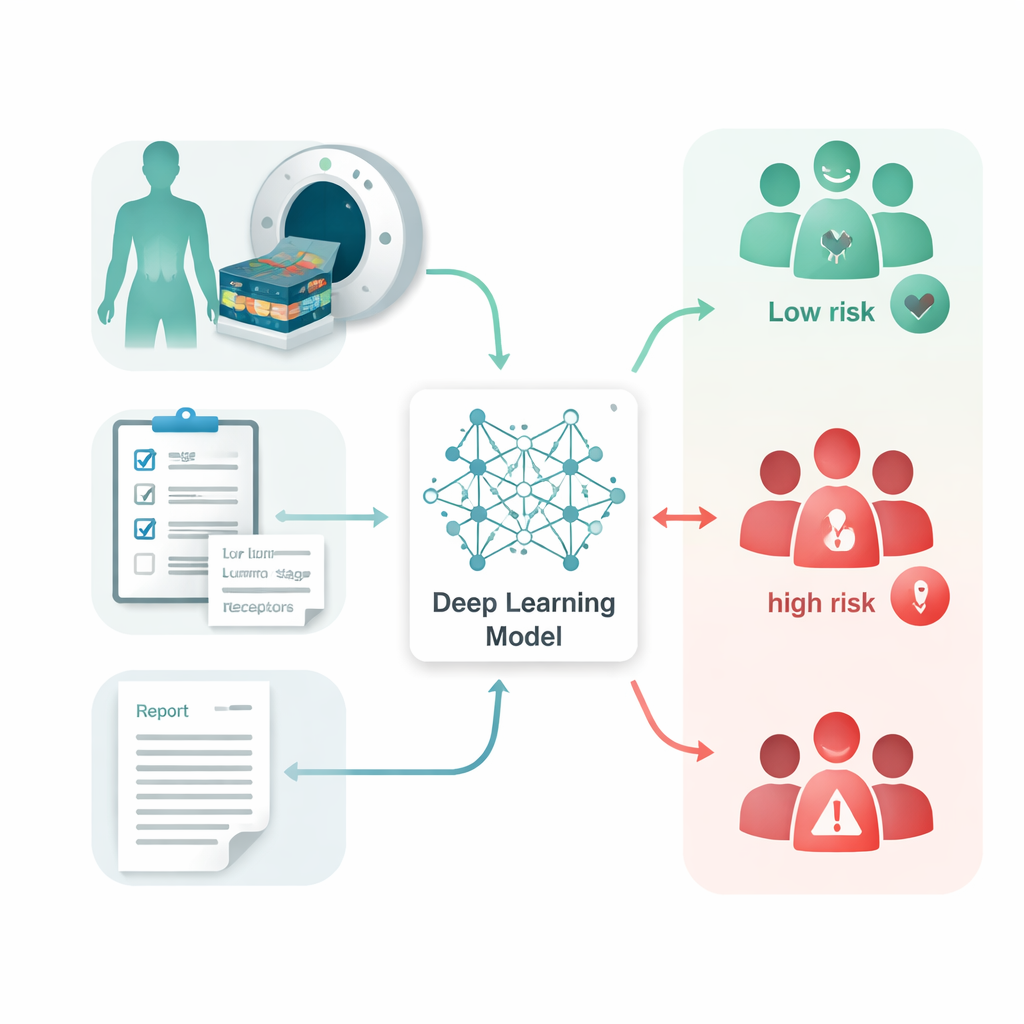

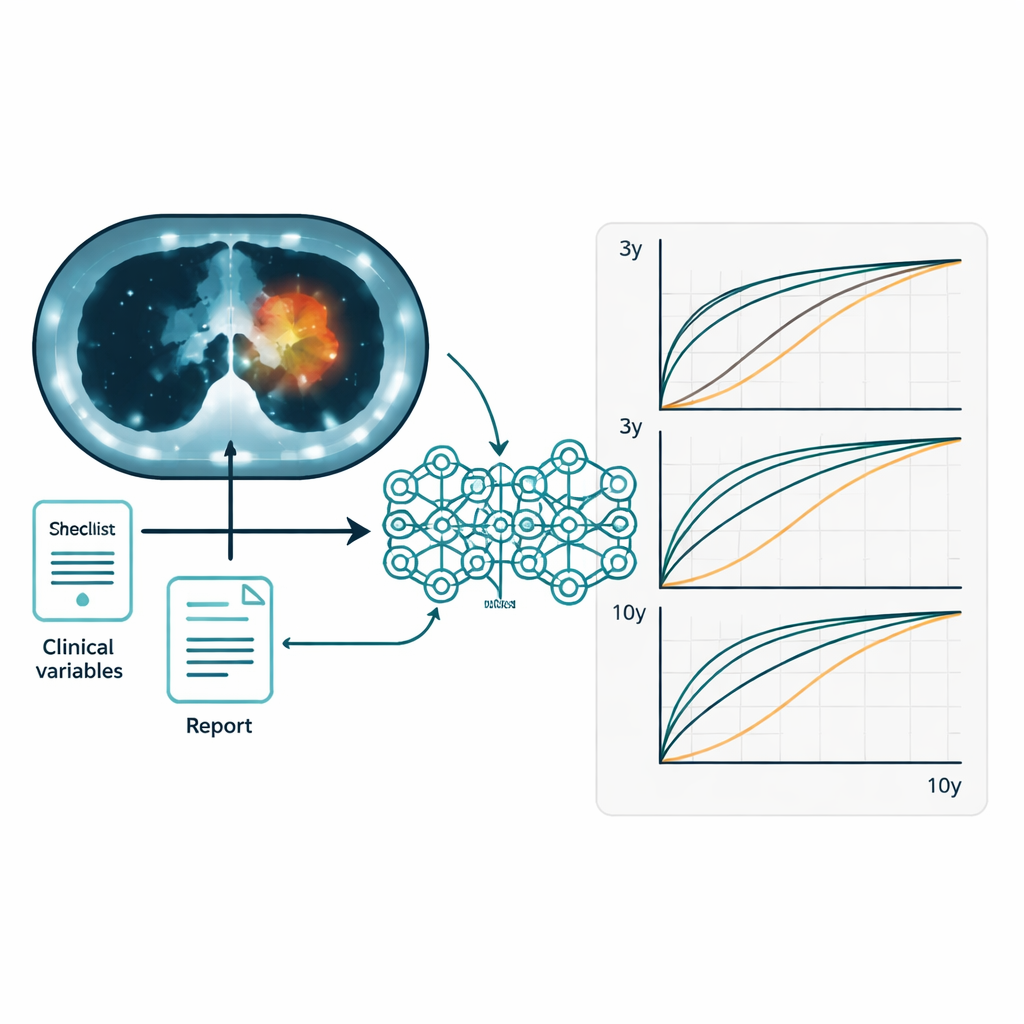

The researchers gathered FDG PET-CT scans, the matching radiology reports, and routine clinical data from 1,210 women with breast cancer treated at one Dutch cancer center over 15 years. None had visible distant metastases at diagnosis. From these data they built the Multi-Omics Prognostic Stratification (MOPS) model, which uses deep learning, a type of artificial intelligence that learns patterns from large datasets. MOPS combines three kinds of information: the scans themselves, the written reports describing what radiologists saw, and clinical factors such as age, tumor size, lymph node status, and hormone receptor status. An automated program first outlined the breast tumors and affected lymph nodes, so the model could concentrate on the most relevant regions without manual tracing.

Getting more from combining many clues

The team first checked how well the usual scan-based numbers predicted who would live longer and whose cancer might return. Measures that reflect the overall tumor burden, such as metabolic tumor volume and total lesion glycolysis, did better than a simple peak brightness measure, but their accuracy was still modest. A deep learning model that analyzed the entire chest on PET-CT outperformed these traditional parameters. Next, the researchers tested three data “streams” separately: the images, the written reports, and the clinical information. Of these, clinical data alone were the strongest single source of prognostic information. Yet when all three were fused in the MOPS system, performance improved further, giving more reliable predictions of both overall survival and disease-free survival at 3, 5, and 10 years.
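For readers curious how the traditional scan numbers relate to one another, the short Python sketch below computes them for tumors that have already been outlined. It is an illustration under stated assumptions, not the study's code: the 41%-of-maximum threshold for counting a voxel as metabolically active is one common convention chosen here for the example, the helper names are hypothetical, and the SUV (standardized uptake value) numbers are made up. SUVmax is the single brightest voxel, one common peak-brightness measure; metabolic tumor volume (MTV) is the volume of the active voxels; and total lesion glycolysis (TLG) is the mean SUV of those voxels multiplied by MTV. That is why MTV and TLG reflect overall tumor burden rather than one hot spot.

```python
import numpy as np

def lesion_metrics(suv_values, voxel_volume_ml, threshold_fraction=0.41):
    """Hypothetical helper: SUVmax, MTV and TLG for one already-outlined lesion.

    threshold_fraction is an assumed convention (41% of the lesion's SUVmax),
    not a value taken from the study.
    """
    suv = np.asarray(suv_values, dtype=float)
    suv_max = float(suv.max())
    # Keep only voxels bright enough to count as metabolically active.
    active = suv[suv >= threshold_fraction * suv_max]
    mtv_ml = active.size * voxel_volume_ml          # active volume in millilitres
    tlg = float(active.mean()) * mtv_ml             # mean SUV of active voxels x MTV
    return {"suv_max": suv_max, "mtv_ml": mtv_ml, "tlg": tlg}

def total_burden(lesions, voxel_volume_ml):
    """Hypothetical helper: combine per-lesion values into whole-body measures."""
    per_lesion = [lesion_metrics(l, voxel_volume_ml) for l in lesions]
    return {
        "suv_max": max(m["suv_max"] for m in per_lesion),   # hottest lesion only
        "total_mtv_ml": sum(m["mtv_ml"] for m in per_lesion),
        "total_tlg": sum(m["tlg"] for m in per_lesion),
    }

# Made-up voxel SUVs for a breast tumor and one involved lymph node.
breast_tumor = [1.0, 2.0, 8.0, 10.0]
lymph_node = [3.0, 4.0, 4.0]
print(total_burden([breast_tumor, lymph_node], voxel_volume_ml=0.5))
```

Summing MTV and TLG across the breast tumor and involved lymph nodes turns per-lesion values into burden measures, while the peak value only reports the hottest lesion, so it ignores how much active disease there is in total.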

Opening the black box

Because doctors must be able to trust and explain any tool that influences treatment decisions, the team designed MOPS with interpretability in mind. Heatmaps overlaid on CT slices showed that the model focused on the primary breast tumors and involved lymph nodes, rather than irrelevant parts of the image. For clinical data, the model highlighted familiar high-impact factors such as tumor size (T stage), lymph node status, and family history. In the text reports, it tended to emphasize words describing lymph nodes, tumor location, and metabolic activity, echoing radiologists’ own reasoning. Across different tumor stages and biological subtypes, the model was able to divide patients into higher- and lower-risk groups, though the distinction was naturally less pronounced for very small, early-stage tumors that already have excellent survival rates.
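Dividing patients into higher- and lower-risk groups requires a cutoff on the model's continuous risk score. The summary does not say which cutoff MOPS used, so this Python sketch assumes a split at the cohort median, with hypothetical function names and made-up scores. Its crude event fraction also ignores censoring (patients being followed for different lengths of time); a real survival comparison would use Kaplan-Meier curves and a log-rank test instead.

```python
import numpy as np

def split_by_median(risk_scores):
    """Hypothetical helper: label each patient 'high' or 'low' relative to the median score."""
    scores = np.asarray(risk_scores, dtype=float)
    cutoff = float(np.median(scores))
    groups = np.where(scores > cutoff, "high", "low")
    return groups, cutoff

def event_fraction(groups, events, label):
    """Crude share of patients in one group who had an event (ignores censoring)."""
    mask = groups == label
    return float(np.asarray(events, dtype=float)[mask].mean())

# Made-up risk scores and outcomes (1 = recurrence or death observed).
risk = [0.1, 0.2, 0.3, 0.7, 0.8, 0.9]
events = [0, 0, 1, 1, 1, 0]
groups, cutoff = split_by_median(risk)
print(cutoff, list(groups))
print("high-risk event fraction:", event_fraction(groups, events, "high"))
print("low-risk event fraction:", event_fraction(groups, events, "low"))
```

A median split always yields two equal-sized groups; when nearly everyone has an excellent outlook, as with very small early-stage tumors, the two groups' outcomes can end up close together, which matches the weaker separation the study reports for that subgroup.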

What this could mean for care

In practical terms, this work suggests that thoughtfully combining imaging, radiology reports, and standard clinical information can sharpen estimates of a breast cancer patient’s long-term outlook beyond what any single source can provide. If validated in other hospitals and scanner types, a tool like MOPS could help doctors identify patients who truly need closer follow-up or more intensive treatment, while sparing lower-risk patients from unnecessary therapies and anxiety. Rather than replacing clinicians, the system acts as a second set of eyes, distilling complex data into an individualized risk score that supports clearer conversations about prognosis and next steps.

Citation: Liang, X., Zhang, T., Braga, M. et al. Multi-omics deep learning improves FDG PET-CT-based long-term prognostication of breast cancer. npj Precis. Onc. 10, 74 (2026). https://doi.org/10.1038/s41698-026-01283-7

Keywords: breast cancer prognosis, PET-CT imaging, deep learning, multi-omics, survival prediction