Clear Sky Science

Methods for randomized, blinded, controlled evaluation of putative disease interventions in multilaboratory, preclinical assessment networks

Why this matters for everyday health

Many promising medical treatments look powerful in animal studies but then flop in large, expensive clinical trials. This paper shows, in concrete detail, how scientists can redesign those early animal tests so that their results are more trustworthy and more likely to predict what will happen in real patients—using stroke as a test case.

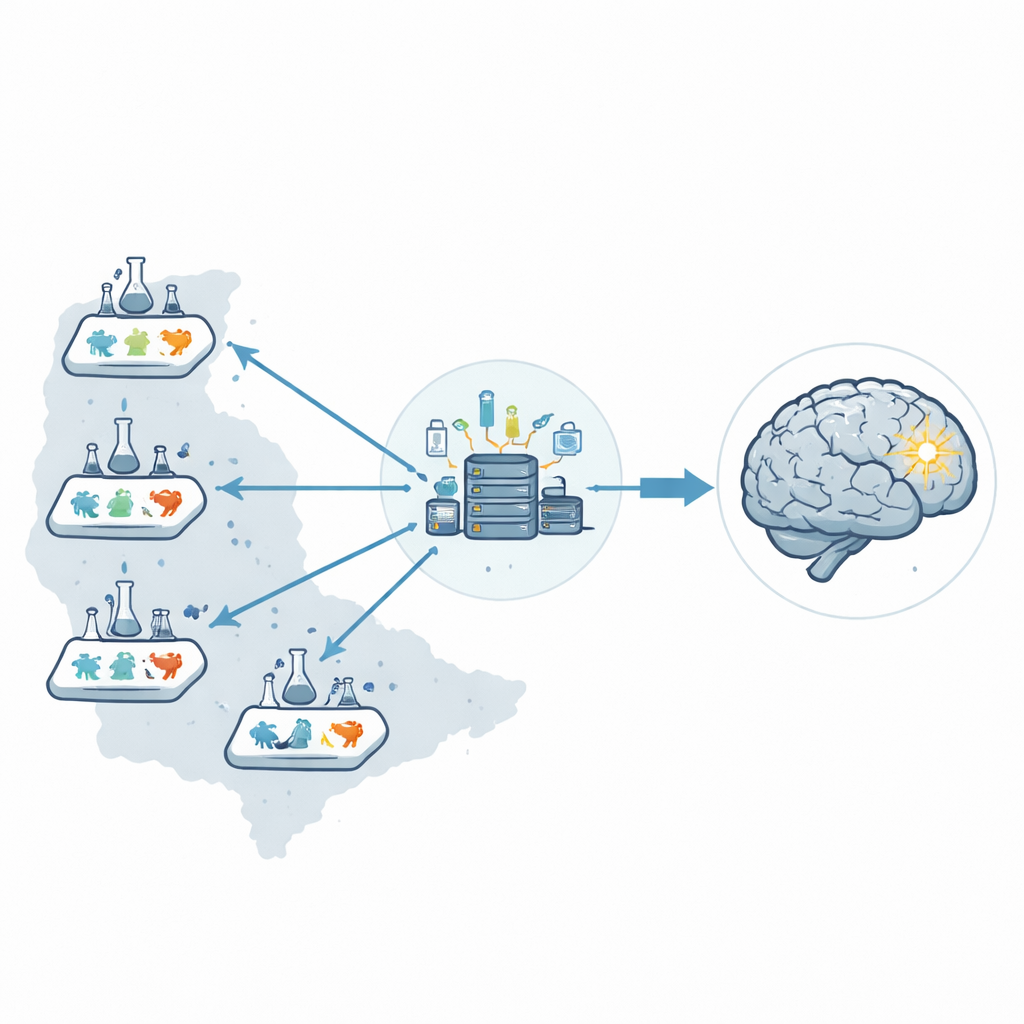

Building a network instead of a single lab

Rather than relying on one laboratory, the researchers created a six-lab preclinical network across the United States, called the Stroke Preclinical Assessment Network. A central coordinating center managed the entire operation: shipping coded drug vials, assigning treatments at random, receiving all data, and running the statistics. By separating those roles from the people performing surgery or assessing outcomes, they reduced the chance that human expectations could subtly influence results.

Putting fairness and concealment into practice

To mimic the rules of a good clinical trial, every animal was enrolled, tagged, and tracked from the moment it arrived in a lab. Treatments were concealed in identical vials so surgeons could not tell real drugs from placebos while inducing stroke and giving therapy. A structured randomization plan ensured that males and females, different stroke models, and all six sites contributed evenly to each treatment group. Even if an animal died or a procedure failed, it stayed in the record so that losses could not be quietly ignored, helping to avoid hidden biases.
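
One common way to get this kind of even contribution is permuted-block randomization within strata. The sketch below is illustrative only and is not taken from the paper: it assumes each stratum is a (site, model, sex) combination, that there is a single shared control arm, and that blocks contain each arm exactly once. The class name, arm labels, site and model names, and seed are all hypothetical.

```python
import random
from collections import Counter


class StratifiedBlockRandomizer:
    """Permuted-block randomization within each (site, model, sex) stratum."""

    def __init__(self, arms, seed=2024):
        self.arms = list(arms)
        self.rng = random.Random(seed)  # fixed seed so the schedule is reproducible
        self.blocks = {}  # stratum -> assignments left in the current block

    def assign(self, site, model, sex):
        stratum = (site, model, sex)
        if not self.blocks.get(stratum):
            # Start a new block holding every arm exactly once, in random order.
            block = self.arms[:]
            self.rng.shuffle(block)
            self.blocks[stratum] = block
        return self.blocks[stratum].pop()


# Hypothetical arm labels: six coded treatments plus one control (an assumption).
ARMS = ["control", "T1", "T2", "T3", "T4", "T5", "T6"]

randomizer = StratifiedBlockRandomizer(ARMS)
# Enroll 21 animals in one stratum (three complete blocks of seven).
assignments = [randomizer.assign("site_A", "young_mouse", "F") for _ in range(21)]
counts = Counter(assignments)
```

Because every block holds each arm once, any stratum that completes its blocks ends up with equal numbers per arm, and no stratum can drift more than one block out of balance. In a setup like the one described, only the coordinating center would hold the mapping from coded vial to arm.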

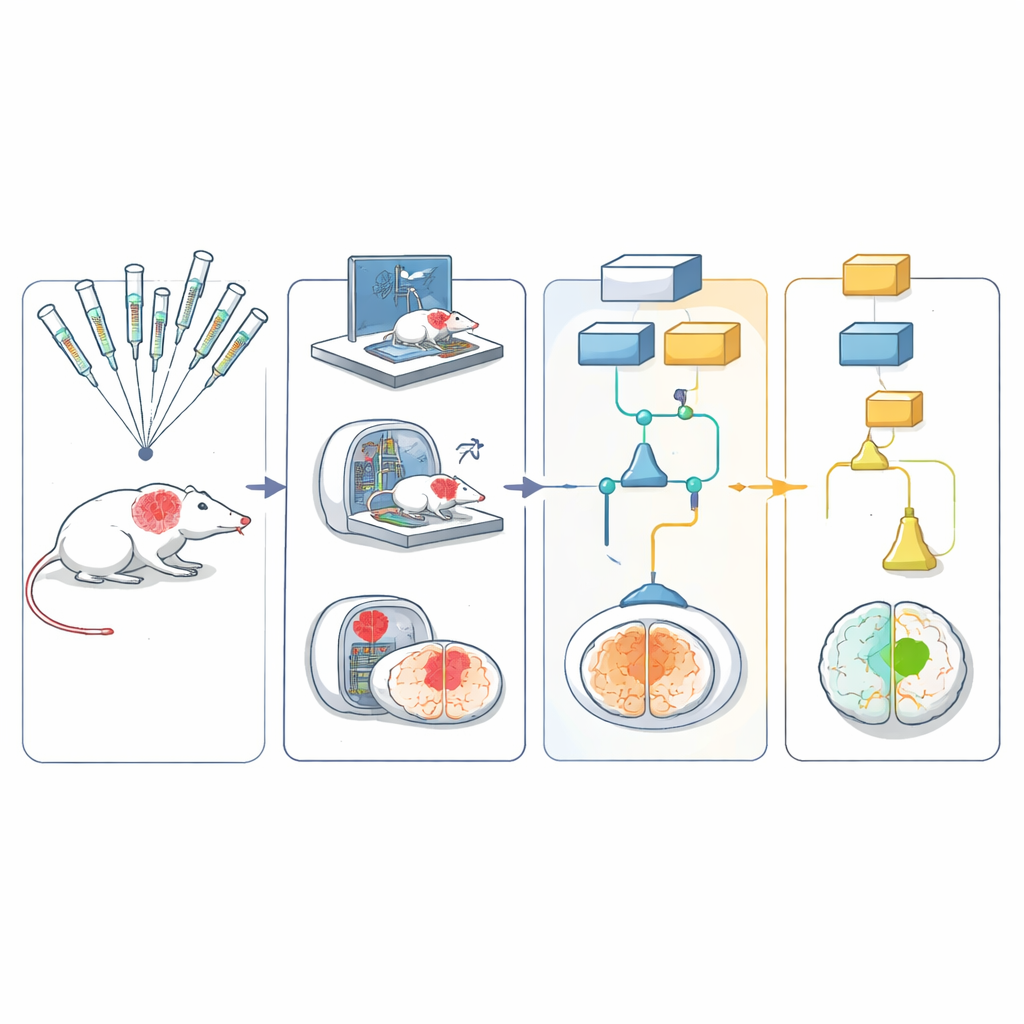

Testing treatments in realistic stroke models

The network used five different rodent models that together captured important aspects of human stroke, including age, high blood pressure, and diet-induced obesity. Stroke was produced in the same way across sites by briefly blocking a major brain artery, then restoring blood flow—similar to modern clot-removal procedures in people. Animals received one of six candidate protective treatments or a matching control. The team then followed them with simple movement tests, such as how they turned in a corner or walked on a grid, and with brain scans to measure the size of the injury over 30 days.

Blinded scoring, shared data, and smart statistics

To keep judgments unbiased, behavior tests were recorded on video and uploaded to a central archive. These videos, stripped of any identifying information, were sent to trained raters at other laboratories, who scored them without knowing which treatment the animal had received or where it was tested. Magnetic resonance images were run through an automated analysis pipeline that segmented the brain and injury area with minimal human input. All results fed into a multiarm, multistage statistical design that allowed several treatments to be tested in parallel: weak or clearly ineffective candidates could be dropped early, while promising ones continued to later stages.
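
The early-dropping logic of a multiarm, multistage design can be sketched as a simple rule applied at each interim look. This is a simplified illustration, not the network's actual analysis: it assumes each active arm is summarized by one test statistic against control (higher meaning more benefit), and it assumes both a futility bound and an efficacy bound. The bounds, arm labels, and statistics below are made up.

```python
def interim_decision(z, futility_bound, efficacy_bound):
    """Classify one arm from its test statistic versus control."""
    if z >= efficacy_bound:
        return "stop_for_efficacy"
    if z <= futility_bound:
        return "drop_for_futility"
    return "continue"


def run_interim(z_by_arm, futility_bound, efficacy_bound):
    """Apply the rule to every active arm; return (decisions, arms that continue)."""
    decisions = {
        arm: interim_decision(z, futility_bound, efficacy_bound)
        for arm, z in z_by_arm.items()
    }
    still_active = [arm for arm, d in decisions.items() if d == "continue"]
    return decisions, still_active


# Made-up statistics for three hypothetical arms at one interim look.
z_by_arm = {"T1": -0.4, "T2": 1.1, "T3": 2.9}
decisions, still_active = run_interim(
    z_by_arm, futility_bound=0.0, efficacy_bound=2.5  # hypothetical bounds
)
```

With these invented numbers, `T1` is dropped, `T3` crosses the efficacy bound, and only `T2` carries on to the next stage. A real design would choose the bounds in advance so that the overall error rates across all looks and arms stay controlled.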

What the results showed about stroke therapies

Across four stages and 2,615 animals, the system proved workable even during the disruptions of the COVID-19 pandemic. The methods consistently kept treatment groups balanced, minimized errors in dosing, and showed improving data quality as sites climbed the learning curve. In the end, five of six treatments were ruled out, while one—uric acid, a free-radical scavenger—met the preplanned bar for benefit. At the same time, the work exposed limitations of some popular models, such as very high death rates in aged mice, suggesting they may not be practical or realistic for future studies.

Big picture: a template for more reliable preclinical science

For a lay reader, the key message is that how we test treatments in animals matters as much as what we test. By importing the safeguards of modern clinical trials (randomization, blinding, full accounting of every subject, and careful statistics) into animal research, this network shows that early studies can be both more rigorous and more efficient. The detailed playbook the authors provide can be adapted to other diseases, offering a path toward lab findings that stand up to replication and give doctors, patients, and funders more confidence that a therapy truly has a chance to work in the clinic.

Citation: Lamb, J., Nagarkatti, K., Diniz, M.A. et al. Methods for randomized, blinded, controlled evaluation of putative disease interventions in multilaboratory, preclinical assessment networks. Lab Anim 55, 74–82 (2026). https://doi.org/10.1038/s41684-026-01683-z

Keywords: stroke, preclinical trials, animal models, research rigor, multicenter studies