Clear Sky Science · en

Early detection of metastatic risk in primary cutaneous melanoma using weakly supervised learning

Why this matters for patients and doctors

Skin melanoma can be deadly not because of the spot on the skin itself, but because some tumors quietly spread to other organs. Today, doctors mostly rely on how thick the tumor is and whether the surface is broken to guess which patients are at greatest risk. This study asks whether modern artificial intelligence (AI) can read far more from routine microscope images of the original skin tumor and flag dangerous cancers earlier, especially in patients who still appear to have relatively small tumors.

Looking for silent warning signs in tissue images

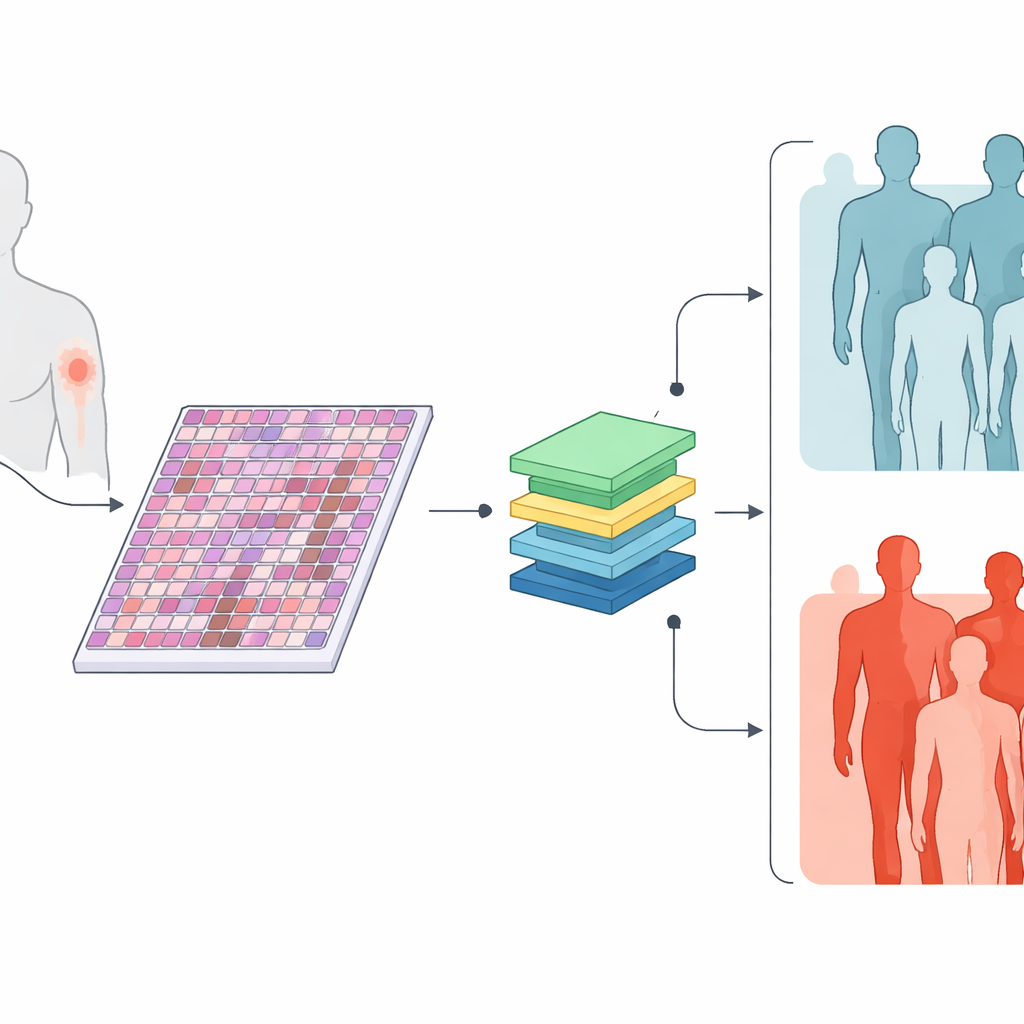

The researchers collected digital versions of standard microscope slides from 426 primary skin melanomas, along with basic information such as tumor thickness, ulceration, cell division rate, and tumor size. Roughly three in five of these tumors later spread to lymph nodes or distant organs, while the rest showed no spread during at least three years of follow-up. Instead of asking pathologists to mark specific areas by hand, the team let the computer examine every part of each slide, cutting each giant image into many small patches. The question was simple: can a computer, trained only on whether each patient ultimately developed spread, learn to spot visual patterns that separate high‑risk from low‑risk tumors?
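For readers curious what "cutting each giant image into many small patches" and "trained only on whether each patient developed spread" look like in practice, here is a minimal sketch. It assumes a 256‑pixel square patch and simply drops partial edge strips; the function names and the patch size are hypothetical illustrations, not the study's actual pipeline, and real pipelines usually also discard tiles that contain only background glass.

```python
from typing import List, Tuple

def tile_coordinates(width: int, height: int, patch: int) -> List[Tuple[int, int]]:
    """Return top-left (x, y) corners of non-overlapping patch-by-patch tiles.

    Edge strips narrower than one full patch are dropped (an assumed,
    common choice; some pipelines pad them instead).
    """
    if patch <= 0:
        raise ValueError("patch size must be positive")
    return [
        (x, y)
        for y in range(0, height - patch + 1, patch)
        for x in range(0, width - patch + 1, patch)
    ]

def make_bag(slide_id: str, width: int, height: int, patch: int, metastasized: bool) -> dict:
    """Bundle all tiles of one slide with a single slide-level label.

    This is the "weak" part of weak supervision: the label says only whether
    the patient later developed spread, never which tiles are responsible.
    """
    return {
        "slide_id": slide_id,
        "tiles": tile_coordinates(width, height, patch),
        "label": 1 if metastasized else 0,  # one label for the whole bag of tiles
    }

# Toy slide far smaller than a real whole-slide image (hypothetical values).
bag = make_bag("slide_001", width=1000, height=600, patch=256, metastasized=True)
print(len(bag["tiles"]), bag["tiles"][:3], bag["label"])  # 6 [(0, 0), (256, 0), (512, 0)] 1
```

Treating a slide as a "bag" of tiles with one label is what lets a model learn without hand-drawn outlines: it must work out for itself which tiles matter.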

Teaching machines to read tissue like a map

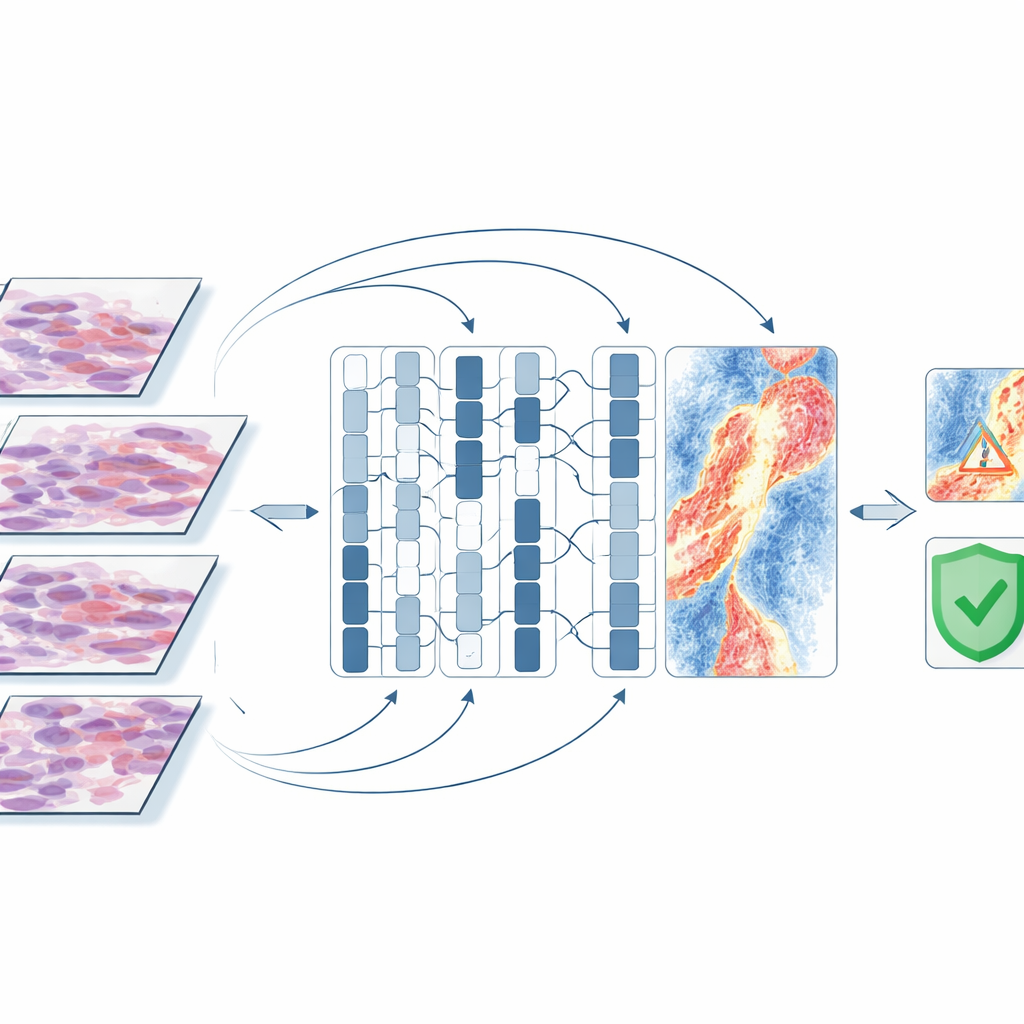

The team started from recent AI models that had been pretrained on enormous collections of medical images and text, then adapted them to melanoma. One model, called TransMIL, looked only at the tissue images. Another, MultiTrans, combined the image information with a compact text description of the tumor’s clinical features. A third, simpler model, BertMLP, used only those clinical features and ignored the images. When tested on a separate group of slides that none of the models had seen during training, both image-based models correctly separated metastatic from non‑metastatic tumors about 85% of the time and were more accurate overall than the clinical‑data‑only model. This suggests that the microscope images contain rich clues about future behavior that current routine measurements do not fully capture.
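How can one prediction come from thousands of patches, and how can clinical information be added on top? The sketch below shows one simple, widely used idea, attention pooling: each patch gets a score, the scores are turned into weights that sum to one, and the slide is summarized as a weighted average of patch features. This is a simplified stand-in, not TransMIL itself (which uses transformer layers), and the concatenation step is an assumed way of fusing image and clinical data, not necessarily how MultiTrans does it. Here the clinical features are plain numbers rather than a text description, and all weights are hand-set toy values instead of learned ones.

```python
import math
from typing import List, Sequence, Tuple

def softmax(scores: Sequence[float]) -> List[float]:
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))

def attention_pool(patch_features: List[List[float]],
                   attn_vector: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Summarize a slide as an attention-weighted average of its patch features."""
    weights = softmax([dot(f, attn_vector) for f in patch_features])
    dim = len(patch_features[0])
    slide_embedding = [sum(w * f[d] for w, f in zip(weights, patch_features))
                       for d in range(dim)]
    return slide_embedding, weights

def predict_risk(slide_embedding: Sequence[float], clinical: Sequence[float],
                 classifier_w: Sequence[float], bias: float) -> float:
    """Fuse image and clinical features by concatenation, then apply a logistic output."""
    fused = list(slide_embedding) + list(clinical)
    if len(fused) != len(classifier_w):
        raise ValueError("classifier weights must match the fused feature length")
    return 1.0 / (1.0 + math.exp(-(dot(fused, classifier_w) + bias)))

# Toy 2-D features for three patches (real features come from a pretrained image encoder).
patch_features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
embedding, weights = attention_pool(patch_features, attn_vector=[1.0, 1.0])
# Hypothetical clinical inputs, e.g. thickness in mm and an ulceration flag.
risk = predict_risk(embedding, clinical=[1.2, 1.0],
                    classifier_w=[0.5, 0.5, 0.3, 0.8], bias=-1.0)
print(weights, risk)
```

The third patch scores highest, so it receives the largest weight and dominates the slide summary. Those same per-patch weights are what later make it possible to show which regions influenced a prediction.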

Stronger help where decisions are hardest

The advantage of the image-based AI was most evident in medium‑thickness tumors, a group where doctors struggle most to decide who needs aggressive treatment. In these T2 melanomas, the image models clearly outperformed the clinical‑only model, which tended to label too many tumors as low‑risk. The image-based systems also performed well across thicker tumors, but those cases are already known to be dangerous by standard measures. In several patients who were initially classified as non‑metastatic but later developed spread, the AI models correctly flagged the primary tumors as high risk years earlier, hinting at how such tools might one day support earlier, more targeted therapy.

What the AI “looks at” inside the tumor

To understand what the computer was using as clues, the researchers generated attention maps that highlight slide regions most influential for a given prediction. In tumors that eventually spread, the models often focused not on dense clumps of tumor cells, but on the surrounding environment: blood vessels, areas where the skin surface had broken down, and bands of inflammatory cells deeper in the skin. In tumors that did not spread, the highlighted regions tended to be intact surface layers with few signs of damage. Misclassified cases often contained bland connective tissue, fat, or artifacts from slide preparation, suggesting that the computer struggled when clear tissue signals were weak. These patterns line up with current understanding of how melanoma cells escape into lymphatic channels and the bloodstream, lending biological credibility to the AI’s choices.
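An attention map is, at heart, a way of painting per-patch importance back onto the slide. The minimal sketch below assumes the model exposes one attention weight per tile (as attention-pooling models do); it rescales those weights to a 0 to 1 range for coloring and lists the most influential tiles. The function names, tile positions, and weight values are hypothetical, and the study's own maps may be computed differently, for example from transformer attention layers.

```python
from typing import Dict, List, Tuple

Coord = Tuple[int, int]  # top-left (x, y) corner of a tile, in pixels

def attention_heatmap(tiles: List[Coord], weights: List[float]) -> Dict[Coord, float]:
    """Rescale attention weights to [0, 1] so each tile can be drawn as a color."""
    lo, hi = min(weights), max(weights)
    span = hi - lo
    return {t: (w - lo) / span if span > 0 else 0.0 for t, w in zip(tiles, weights)}

def top_regions(tiles: List[Coord], weights: List[float], k: int) -> List[Coord]:
    """Return the k tiles with the highest attention, most influential first."""
    ranked = sorted(zip(tiles, weights), key=lambda tw: tw[1], reverse=True)
    return [t for t, _ in ranked[:k]]

# Hypothetical 2 x 2 grid of 256-pixel tiles with made-up attention weights.
tiles = [(0, 0), (256, 0), (0, 256), (256, 256)]
weights = [0.10, 0.55, 0.05, 0.30]
print(top_regions(tiles, weights, k=2))  # [(256, 0), (256, 256)]
heat = attention_heatmap(tiles, weights)
```

A pathologist can then inspect the top-ranked tiles to judge whether they show meaningful tissue, such as vessels or ulcerated surface, or merely fat and preparation artifacts.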

Limits, next steps, and what this could mean

This work was done at a single hospital on a few hundred tumors, and the models have not yet been tested across different centers or used to predict survival time. The approach also does not replace the pathologist; instead, it adds a new layer of risk information extracted automatically from routine slides. Still, the findings show that weakly supervised AI can uncover meaningful warning signs of spread directly from primary melanoma tissue, without labor‑intensive manual marking. If validated in larger, multi‑center studies and combined with other data such as skin photographs and gene activity tests, such tools could help doctors better identify patients with seemingly early‑stage melanoma who quietly carry a high risk of metastasis, and offer them closer follow‑up or earlier preventive treatment.

Citation: Dahlén, F., Shujski, I., Yacob, F. et al. Early detection of metastatic risk in primary cutaneous melanoma using weakly supervised learning. Sci Rep 16, 11234 (2026). https://doi.org/10.1038/s41598-026-45588-w

Keywords: melanoma, metastatic risk, digital pathology, artificial intelligence, weakly supervised learning