Clear Sky Science · en

An ensemble of vision and swin transformers with LLM-based explanations for sugarcane leaf disease diagnosis

Why spotting sick sugarcane leaves matters

Sugarcane is a backbone crop for sugar, biofuels, and many rural livelihoods, but its leaves are vulnerable to a range of diseases that quietly erode yield. Farmers usually rely on visual inspection, which can be slow, inconsistent, and hard to scale across large fields. This paper explores how modern artificial intelligence can automatically read leaf photos to detect multiple sugarcane diseases with high accuracy, and then use a language model to turn those predictions into plain-language advice for farmers.

How the leaf photos are turned into data

The researchers built their system using an open sugarcane leaf image collection from Kaggle, containing nearly twenty thousand color photos. Each image belongs to one of six categories: healthy, or one of five common diseases (Bacterial Blight, Mosaic, Red Rot, Rust, and Yellow Leaf Disease). The photos were taken under real farm conditions, so they include changing light, shadows, and cluttered backgrounds. To prepare the data, the team removed duplicates and corrupted images, then split the dataset into training, validation, and test sets while keeping the same balance of disease types in each. During training, they augmented only the training images with rotations, flips, and zooming to mimic different camera angles and distances. Because the validation and test images were left untouched, this makes the system more robust without inflating its test performance.
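The class-balanced split described above can be sketched in a few lines of Python. This is only an illustration: the 70/15/15 fractions, the toy label counts, and the function name `stratified_split` are assumptions made here, not details taken from the paper.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split sample indices into train/val/test while keeping each
    class's share roughly the same in all three sets.
    The fractions are an assumed example, not the paper's values."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_train = round(len(indices) * fractions[0])
        n_val = round(len(indices) * fractions[1])
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

# Toy labels standing in for the real image annotations (invented counts).
labels = ["Healthy"] * 100 + ["Rust"] * 60 + ["Mosaic"] * 40
train_idx, val_idx, test_idx = stratified_split(labels)
```

Augmentation would then be applied only to the images indexed by `train_idx`, so the validation and test images stay exactly as photographed.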

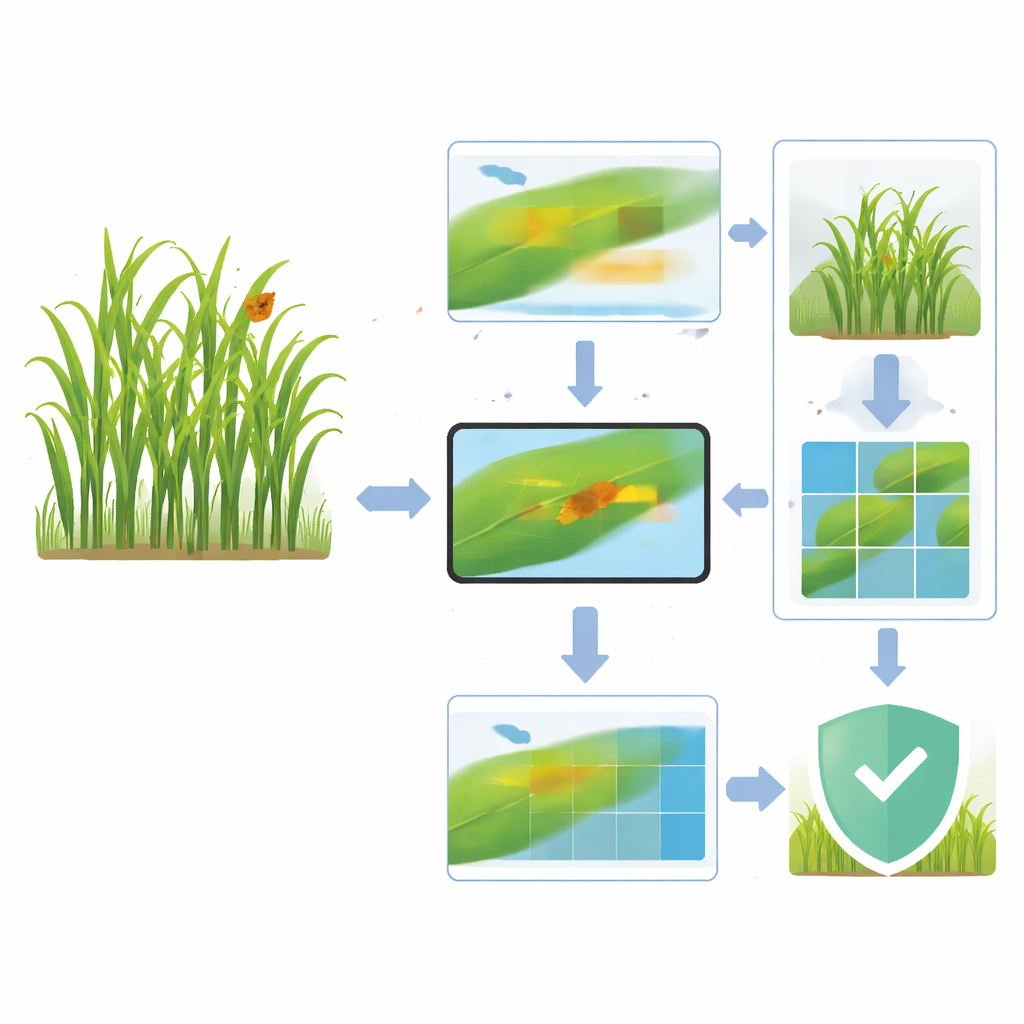

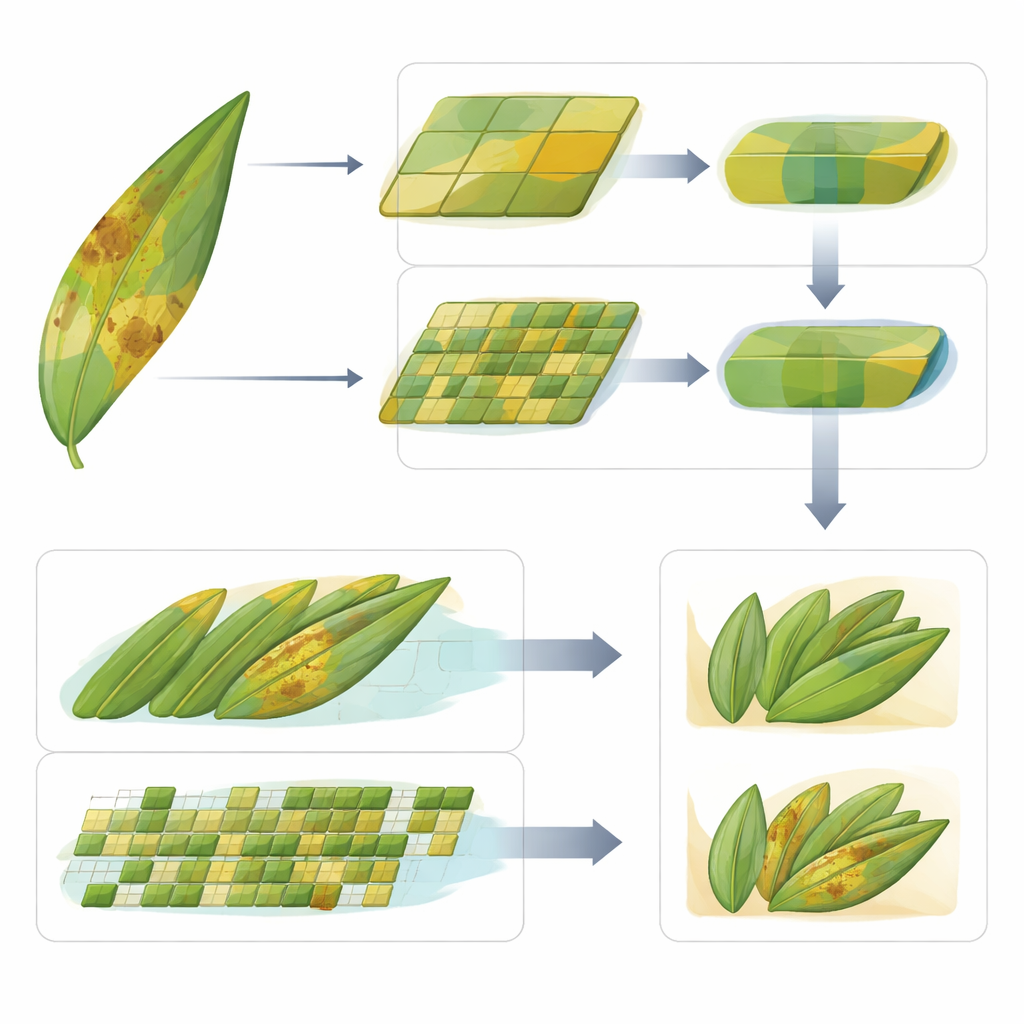

Two complementary ways of looking at a leaf

At the heart of the study is an “ensemble” that combines two advanced vision models known as transformers. One, the Vision Transformer (ViT), splits each image into a grid of patches and learns patterns across the entire leaf at once. This global view is well suited to diseases that spread as large, diffuse areas of discoloration. The other, the Swin Transformer, restricts its attention to small local windows whose positions shift from one layer to the next, building a layered understanding of fine textures and small spots. This local focus helps with diseases that appear as tiny lesions, streaks, or specks. By design, ViT is sensitive to broad color changes while Swin pays attention to small, clustered details, two sides of how real leaf diseases show up in the field.

How the two models join forces

Rather than building a complicated new network, the authors combine ViT and Swin in a simple and transparent way. Each model first examines the same leaf image and produces its own probability scores for the six classes. These scores are then averaged, with no extra trainable weights, and the highest combined probability decides the final diagnosis. This averaging strategy balances the strengths of each model and avoids overfitting on a dataset that, while reasonably large, still reflects a specific set of regions and conditions. Experiments show that replacing Swin with a traditional convolutional network removes crucial local detail, and using only ViT misses subtle cues—evidence that the gain comes from the true synergy of global and local attention, not just from stacking more models.
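The averaging step is simple enough to show directly. The sketch below assumes each model outputs six raw scores (logits) that are turned into probabilities with a softmax. The class order, the function names, and the example numbers are invented for illustration and are not the authors' code or results.

```python
import math

# Assumed class order; the paper's actual label order may differ.
CLASSES = ["Healthy", "Bacterial Blight", "Mosaic",
           "Red Rot", "Rust", "Yellow Leaf Disease"]

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def ensemble_predict(vit_logits, swin_logits):
    """Average the two models' probabilities with equal weight
    and return the top class plus the averaged scores."""
    vit_probs = softmax(vit_logits)
    swin_probs = softmax(swin_logits)
    averaged = [(v + s) / 2 for v, s in zip(vit_probs, swin_probs)]
    best = max(range(len(averaged)), key=averaged.__getitem__)
    return CLASSES[best], averaged

# Made-up scores for one leaf: ViT leans toward Mosaic,
# Swin leans more strongly toward Yellow Leaf Disease.
vit_logits = [0.2, 0.1, 2.0, 0.0, 0.3, 1.8]
swin_logits = [0.1, 0.0, 1.0, 0.2, 0.1, 2.5]
label, probs = ensemble_predict(vit_logits, swin_logits)
```

In this toy case ViT alone would pick Mosaic, but once the two probability lists are averaged the ensemble settles on Yellow Leaf Disease, because Swin's stronger vote outweighs ViT's narrow preference.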

How well the system works in practice

On the held-out test set of nearly three thousand images, the ensemble reaches an accuracy of about 97 percent, with similarly high precision, recall, and F1-scores across all six classes. It outperforms strong convolutional baselines like ResNet, EfficientNet, MobileNet, and DenseNet, as well as the individual ViT and Swin models. The confusion matrix shows that most errors occur between visually similar diseases, such as Yellow Leaf and Mosaic, but overall misclassification rates remain low. Receiver operating characteristic curves for each class are almost perfect, indicating that the ensemble's scores reliably separate healthy from diseased leaves, and the different disease types from one another, across a wide range of decision thresholds.
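For readers curious how accuracy, precision, recall, and F1 relate to a confusion matrix, here is a minimal sketch. The three-class matrix uses invented counts purely for illustration; it does not reproduce the paper's six-class results.

```python
def accuracy(confusion):
    """Share of all images that land on the diagonal (correct guesses)."""
    correct = sum(confusion[k][k] for k in range(len(confusion)))
    total = sum(sum(row) for row in confusion)
    return correct / total

def per_class_metrics(confusion):
    """confusion[i][j] = images of true class i predicted as class j.
    Returns one (precision, recall, f1) tuple per class."""
    n = len(confusion)
    results = []
    for k in range(n):
        tp = confusion[k][k]
        fn = sum(confusion[k]) - tp                       # missed cases of class k
        fp = sum(confusion[i][k] for i in range(n)) - tp  # false alarms for class k
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        results.append((precision, recall, f1))
    return results

# Toy matrix with invented counts (rows: true class, columns: predicted).
toy = [
    [48, 1, 1],
    [2, 45, 3],
    [0, 4, 46],
]
overall = accuracy(toy)
metrics = per_class_metrics(toy)
```

Off-diagonal cells are where confusions between look-alike classes show up, which is how a pattern like the Yellow Leaf and Mosaic mix-ups becomes visible.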

Turning predictions into farmer-friendly guidance

To move beyond raw labels, the authors link their image ensemble to a large language model (LLM) hosted online. Once a leaf photo is classified, the predicted disease name is sent to the LLM, which returns a short explanation of likely symptoms and general management suggestions, intended for farmers and extension workers. A web interface built on the Hugging Face platform allows users to upload a leaf image, see the predicted disease, and read the AI-generated guidance within a few seconds. The authors emphasize that these recommendations are advisory and should be checked with agronomy experts, because LLMs can sometimes generate overconfident or incomplete advice. Still, this language layer makes the system more approachable for non-specialists.
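The hand-off from classifier to language model can be pictured roughly as follows. Everything in this sketch is hypothetical: the prompt wording, the `explain` helper, and the `fake_llm` stand-in are assumptions for illustration, and the article does not specify which LLM service or API the authors call.

```python
from typing import Callable

# Reminder attached to every answer, reflecting the authors' caution
# that the guidance is advisory and needs expert checking.
ADVISORY_NOTE = ("Advisory only: please confirm with a local agronomy "
                 "expert before acting.")

def build_prompt(disease: str) -> str:
    """Turn a predicted class name into a plain-language request (assumed wording)."""
    return (
        f"A sugarcane leaf image was classified as '{disease}'. "
        "In plain language, briefly describe the likely symptoms and "
        "general management suggestions for farmers and extension workers."
    )

def explain(disease: str, ask_llm: Callable[[str], str]) -> str:
    """Send the prompt to whatever LLM client is supplied and append the note."""
    answer = ask_llm(build_prompt(disease))
    return f"{answer.strip()}\n\n{ADVISORY_NOTE}"

def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a hosted LLM; simply echoes the prompt."""
    return "Placeholder explanation for: " + prompt

message = explain("Red Rot", fake_llm)
```

Passing the LLM client in as a function keeps the sketch independent of any particular provider, so a real hosted model could be swapped in for `fake_llm` without changing the rest.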

What this means for future smart farming tools

In plain terms, the study shows that combining two “ways of seeing” the same leaf—one that sees the forest, one that sees the trees—can yield a very reliable digital scout for sugarcane disease. The ensemble of ViT and Swin Transformers captures both broad and fine-grained symptoms, while the attached language model helps translate technical predictions into human-friendly suggestions. Although the models still need testing on more regions, lighting conditions, and devices, and the language outputs require expert vetting, this work points toward practical phone or tablet tools that could help farmers spot problems early, reduce guesswork, and support more precise use of treatments in sugarcane and, eventually, many other crops.

Citation: Saritha, M., Rasane, K. An ensemble of vision and swin transformers with LLM-based explanations for sugarcane leaf disease diagnosis. Sci Rep 16, 10707 (2026). https://doi.org/10.1038/s41598-026-45453-w

Keywords: sugarcane disease detection, transformer vision models, precision agriculture, plant leaf imaging, AI decision support