Clear Sky Science · en

Using machine learning algorithms to predict MACE in peritoneal dialysis patients

Why this matters for people on home dialysis

For many people with kidney failure, peritoneal dialysis offers the freedom to treat themselves at home instead of in a clinic. Yet these patients face a high risk of serious heart and blood vessel problems, such as heart attacks and strokes. This study asks a practical question with real-life stakes: can we use modern computer techniques to spot, early on, which peritoneal dialysis patients are most likely to run into major heart trouble, so doctors can step in before disaster strikes?

Who was studied and what was measured

The researchers looked back at the medical records of 1,006 adults who started peritoneal dialysis at two hospitals in China between 2010 and 2016. All patients had been on this treatment for at least three months. At the time dialysis began, the team collected 86 pieces of information for each person, including age, other illnesses such as diabetes or heart failure, blood pressure, lab tests, heart ultrasound results, and medications. Everyone was then followed for up to roughly ten years to see who experienced a major cardiac or cerebrovascular event, a group of problems the authors call “MACE,” including heart attack, severe chest pain, stroke, cardiac arrest, hospital stays for heart failure or dangerous heart rhythms, and death from any cause.

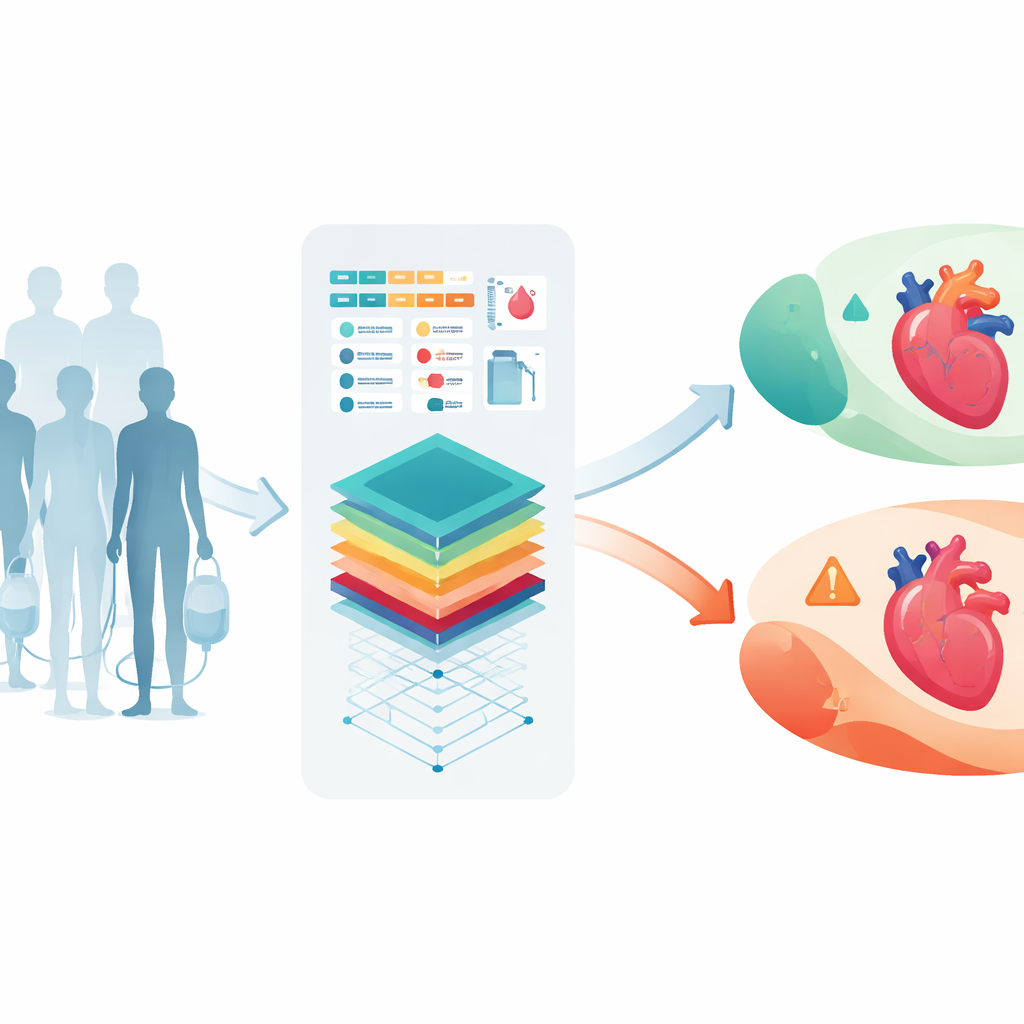

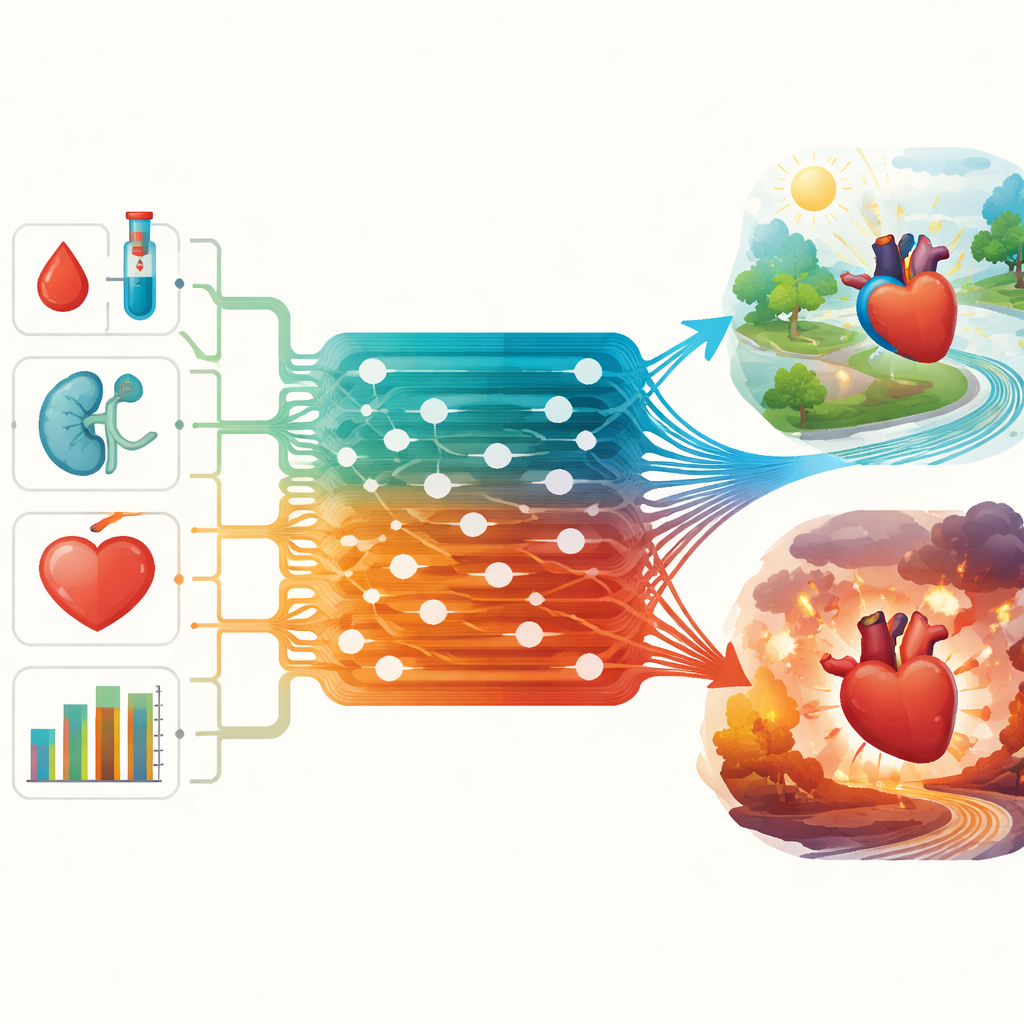

Smarter prediction with machine learning

Instead of relying only on traditional statistics, the team turned to three machine learning approaches that can uncover complex patterns in large datasets: Random Forest, XGBoost, and AdaBoost. They trained and tested the models on patients from one hospital, then checked how well the models performed on patients from the second hospital. The goal was to see how well each approach could predict who would suffer a major event at any time during follow-up, within the first year, and within the first five years after starting peritoneal dialysis. Each model was judged with a standard score called the area under the curve (AUC): a value of 0.5 means the model ranks patients no better than chance, and values closer to 1.0 mean better discrimination between high- and low-risk patients.
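
The three prediction horizons require turning each patient's follow-up record into a yes/no label. The summary does not say how the authors handled patients who left follow-up early, so the Python sketch below shows only one common approach, under stated assumptions: the `(follow_up_years, had_event)` record format, the function names, and the five patients are all hypothetical, and a patient who drops out event-free before a horizon is left unlabeled for that horizon.

```python
from typing import List, Optional, Tuple

# Hypothetical record format (not from the study): (follow_up_years, had_event).
# If had_event is True, follow_up_years is the time to the first MACE;
# otherwise it is how long the patient was observed event-free (censored).
Record = Tuple[float, bool]


def horizon_label(record: Record, horizon: Optional[float]) -> Optional[int]:
    """Label one patient for a prediction horizon given in years.

    Returns 1 if the event happened within the horizon, 0 if the patient was
    observed event-free through the horizon, and None if the patient left
    follow-up before the horizon without an event (outcome unknown).
    horizon=None means "at any time during follow-up".
    """
    years, had_event = record
    if horizon is None:
        return 1 if had_event else 0
    if had_event and years <= horizon:
        return 1
    if years >= horizon:
        # Observed up to the horizon; an event after it does not count here.
        return 0
    return None


def build_labels(records: List[Record], horizon: Optional[float]) -> List[Optional[int]]:
    """Apply horizon_label to every patient."""
    return [horizon_label(r, horizon) for r in records]


# Five invented patients, for illustration only.
patients = [(0.5, True), (3.0, True), (2.0, False), (7.5, False), (6.0, True)]
print(build_labels(patients, None))  # any time:   [1, 1, 0, 0, 1]
print(build_labels(patients, 1.0))   # first year: [1, 0, 0, 0, 0]
print(build_labels(patients, 5.0))   # five years: [1, 1, None, 0, 0]
```

The third patient shows why the horizon matters: observed for only two event-free years, they count as event-free for the one-year label but cannot be labeled for the five-year one.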

What the models learned about risk

Across the entire follow-up period, 409 of the 606 patients in the main development group had a major event. For predicting these overall events, the Random Forest method worked best, with an AUC of about 0.80. Put simply, when given one patient who had an event and one who did not, it ranked the patient who had the event as higher risk about 80 percent of the time. In this long-term view, the most influential signals were parathyroid hormone levels (a marker tied to bone and blood vessel health), a history of congestive heart failure, and age. When the focus narrowed to events in the first year, only 114 patients were affected, and XGBoost came out ahead with an AUC of 0.86. Here, “good” protective cholesterol (HDL), age, and blood calcium levels stood out. For the five-year horizon, Random Forest again performed best, and age, blood creatinine, and estimated glomerular filtration rate (eGFR) rose to the top; the latter two are indicators of remaining kidney function and dialysis adequacy.
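
An AUC can be read as a ranking probability: pick one patient who had a major event and one who did not, and ask how often the model gives the first a higher risk score. The minimal Python sketch below computes that definition directly; the function name `auc` and the eight risk scores are invented toy values, not numbers from the study.

```python
from typing import Sequence


def auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUC as the share of (event, no-event) patient pairs in which the
    patient with the event received the higher risk score; ties count half."""
    with_event = [s for s, y in zip(scores, labels) if y == 1]
    without_event = [s for s, y in zip(scores, labels) if y == 0]
    if not with_event or not without_event:
        raise ValueError("need patients both with and without an event")
    credit = 0.0
    for p in with_event:
        for n in without_event:
            if p > n:
                credit += 1.0  # pair ranked correctly
            elif p == n:
                credit += 0.5  # tie: no better than a coin flip
    return credit / (len(with_event) * len(without_event))


# Invented risk scores for eight patients (1 = had a major event).
scores = [0.90, 0.80, 0.70, 0.60, 0.55, 0.40, 0.30, 0.20]
labels = [1, 1, 0, 1, 0, 1, 0, 0]
print(auc(scores, labels))  # 0.8125: 13 of 16 pairs ranked correctly
```

A model that assigns scores at random lands near 0.5, and a perfect ranker reaches 1.0. Comparing every pair is fine for a toy example; for larger datasets, library routines such as scikit-learn's `roc_auc_score` compute the same quantity more efficiently.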

Checking reliability and real-world performance

To make sure these results were not a fluke, the authors compared their machine learning tools with a more familiar time-to-event method called Cox regression and tested everything in a separate group of 400 patients from another hospital. The key risk factors identified by the newer methods closely matched those found with traditional analysis, but the machine learning models generally did a better job of ranking patients by risk. In the external hospital group, the main model still performed well, correctly classifying outcomes in roughly seven out of ten patients. The study also highlighted the importance of other intertwined factors—such as overall illness burden, body weight, blood fats, albumin (a marker of nutrition), urine output, and blood pressure—that together shape heart risk in this vulnerable population.

What this means for patients and care teams

The authors conclude that carefully designed machine learning tools can help doctors estimate, at the very start of peritoneal dialysis, which patients face especially high odds of serious heart and blood vessel problems in the coming years. Age consistently mattered, but several factors tied to mineral balance, blood fats, dialysis adequacy, and overall health also played major roles—and many of these can be monitored and treated. While the study is retrospective and needs confirmation in future prospective work, it points toward a future in which home dialysis care is guided by quiet background algorithms that flag those in danger early, enabling targeted treatment to prolong life and reduce hospital stays.

Citation: Xu, L., Zhang, Y., Abbas Al-Janabi, A.A. et al. Using machine learning algorithms to predict MACE in peritoneal dialysis patients. Sci Rep 16, 10553 (2026). https://doi.org/10.1038/s41598-026-45362-y

Keywords: peritoneal dialysis, cardiovascular risk, machine learning, kidney failure, risk prediction