Clear Sky Science

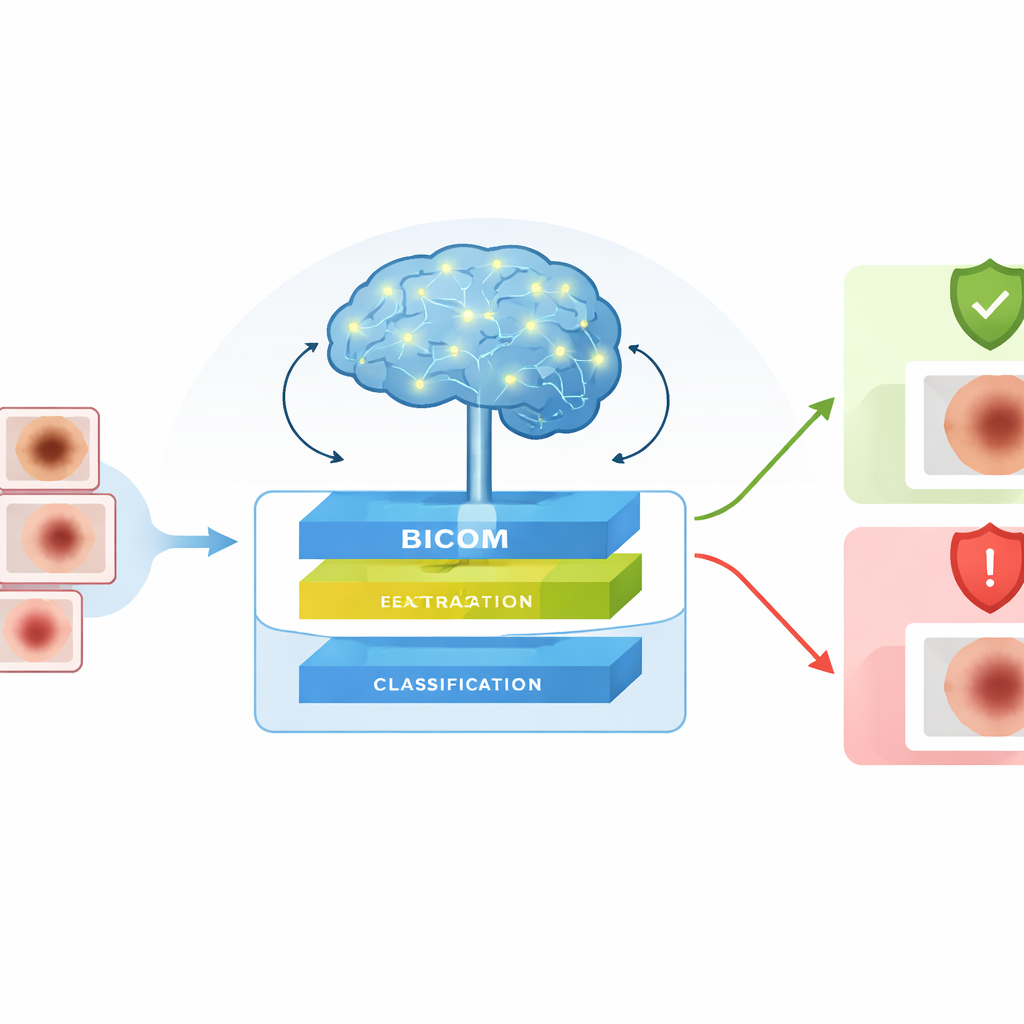

A brain-inspired computational framework for image-based risk assessment

Why this research matters for skin health

Skin cancer is one of the few cancers that people can literally see on their own bodies, yet early signs are often subtle enough to fool the naked eye. This study presents a new computer system, inspired by how the brain works, that examines close-up skin images to estimate cancer risk. The goal is not to replace dermatologists, but to give them a fast, consistent second opinion that works both in large hospitals and smaller clinics, helping catch dangerous lesions earlier while avoiding unnecessary alarms.

A smart helper for doctors, not a replacement

The authors introduce Bicom, a complete framework that looks at dermoscopic images—special magnified photos of skin spots—and judges whether a lesion is likely benign or malignant. Bicom is designed to fit into real clinical workflows, either running on secure hospital servers or at the point of care. It focuses on three practical needs: handling very detailed images without slowing to a crawl, recognizing lesions of many shapes and sizes, and dealing honestly with uncertainty when the image is ambiguous. Instead of making a single, rigid decision, the system can flag doubtful cases for extra internal review before giving its final risk estimate.

Seeing both the big picture and tiny details

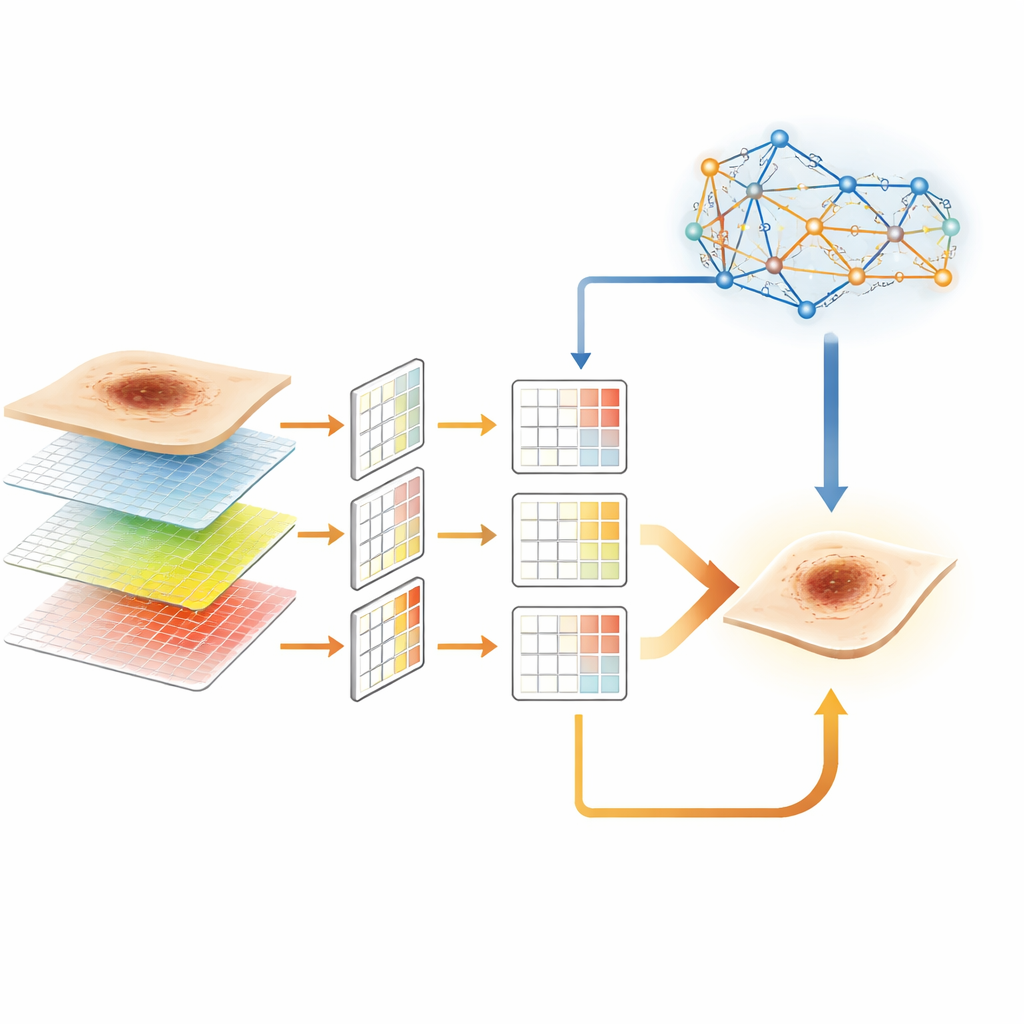

To read skin images well, a computer must pay attention to both broad patterns and fine details at the same time. Bicom tackles this by upgrading an existing image-analysis backbone into a new module called F-ResNeSt. This part of the system builds a “pyramid” of features from each image, capturing information at multiple scales, from overall lesion shape down to small irregularities at the border. At the same time, an efficient attention mechanism allows the model to link distant image regions without the heavy computing cost that normally comes with such global comparisons. The result is a compact yet rich description of each lesion that is better suited to subtle medical differences than standard networks.
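
The "pyramid" idea can be illustrated with a toy sketch. The code below is not F-ResNeSt; it only shows, under simplifying assumptions, how one image can be represented at several scales by repeated 2x2 averaging. The function names `avg_pool_2x2` and `build_pyramid` and the 4x4 toy image are invented for this illustration.

```python
def avg_pool_2x2(img):
    """Halve height and width by averaging each non-overlapping 2x2 block."""
    h = len(img) // 2 * 2        # drop a trailing odd row, if any
    w = len(img[0]) // 2 * 2     # drop a trailing odd column, if any
    return [
        [(img[r][c] + img[r][c + 1] + img[r + 1][c] + img[r + 1][c + 1]) / 4.0
         for c in range(0, w, 2)]
        for r in range(0, h, 2)
    ]


def build_pyramid(img, levels=3):
    """Return the image at full, half, quarter, ... resolution."""
    pyramid = [img]
    for _ in range(levels - 1):
        last = pyramid[-1]
        if len(last) < 2 or len(last[0]) < 2:
            break                # too small to pool again
        pyramid.append(avg_pool_2x2(last))
    return pyramid


# Toy 4x4 "image" (hypothetical data): a bright patch in the top-left corner.
image = [
    [1.0, 1.0, 0.0, 0.0],
    [1.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
pyramid = build_pyramid(image, levels=3)
shapes = [(len(p), len(p[0])) for p in pyramid]
print(shapes)
```

The finest level keeps the exact outline of the patch, while the coarsest level only records that about a quarter of the image is bright. A real feature pyramid applies learned filters at each level rather than plain averaging.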

Making fast, scalable, and careful decisions

Once these layered features are extracted, Bicom passes them to an improved classifier called L-CoAtNet. This stage blends strengths of two worlds: the local sensitivity of traditional image filters and the global awareness of attention-based models. By using a streamlined form of attention, L-CoAtNet keeps memory and computation demands modest, which is crucial for high-resolution medical images and clinics without top-tier hardware. Together, F-ResNeSt and L-CoAtNet form a hierarchical pipeline that can be trained end to end, turning raw images into an initial estimate of cancer risk while remaining practical for real-world deployment.
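
This summary does not say which streamlined form of attention L-CoAtNet uses. As one well-known possibility, and purely as an assumption, the sketch below shows kernelized "linear" attention: by summarizing the keys and values first, it gives the same answer as the straightforward version without ever building a table that compares every image position with every other. All names and numbers here are illustrative.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def phi(m):
    """Positive feature map elu(x) + 1, applied elementwise."""
    return [[x + 1.0 if x > 0 else math.exp(x) for x in row] for row in m]


def quadratic_attention(q, k, v):
    """Reference version: builds the full N x N weight matrix."""
    scores = matmul(phi(q), transpose(phi(k)))             # N x N
    weights = [[s / sum(row) for s in row] for row in scores]
    return matmul(weights, v)


def linear_attention(q, k, v):
    """Same result, but never forms an N x N matrix."""
    qf, kf = phi(q), phi(k)
    kv = matmul(transpose(kf), v)                          # d x d_v summary
    k_sum = [sum(col) for col in zip(*kf)]                 # length d
    out = []
    for q_row in qf:
        norm = sum(a * b for a, b in zip(q_row, k_sum))    # row normalizer
        out.append([x / norm for x in matmul([q_row], kv)[0]])
    return out


# Hypothetical queries, keys, and values for 3 positions with 2 features each.
q = [[0.2, -0.1], [1.0, 0.5], [-0.3, 0.8]]
k = [[0.1, 0.4], [-0.6, 0.2], [0.9, -0.5]]
v = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

slow = quadratic_attention(q, k, v)
fast = linear_attention(q, k, v)
max_diff = max(abs(a - b) for r1, r2 in zip(slow, fast) for a, b in zip(r1, r2))
```

With only three positions the saving is invisible, but for a high-resolution image the number of positions N is far larger than the feature size d, so avoiding the N x N table is what keeps memory use modest.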

Letting a brain-like module double-check the hard cases

Where Bicom differs most from many earlier systems is in how it handles uncertainty. After the main classifier produces a risk score, the framework computes a confidence value that measures how far the prediction is from a “coin-flip” situation. If the model is unsure, the case is routed to a brain-inspired spiking neural network module. Instead of using continuous signals, this module works with brief, spike-like activations similar to nerve impulses, which are naturally suited to sparse, energy-efficient processing. It re-examines the internal features for tricky images—such as blurry, low-contrast, or borderline lesions—and refines the decision, especially near the boundary between benign and malignant classes.
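
A minimal sketch of this routing idea follows. The exact confidence formula, the review threshold, and the neuron model used in Bicom are not given in this summary, so everything below is an assumption: confidence is taken as the rescaled distance of the malignancy probability from 0.5, the threshold of 0.4 is arbitrary, and the spiking unit is a textbook leaky integrate-and-fire neuron.

```python
def confidence(p_malignant):
    """Distance from a coin flip, rescaled so 0.5 -> 0 and 0 or 1 -> 1."""
    return abs(p_malignant - 0.5) * 2.0


def needs_review(p_malignant, threshold=0.4):
    """Route the case to the refinement module when confidence is low.

    The threshold value is hypothetical, chosen only for illustration.
    """
    return confidence(p_malignant) < threshold


def lif_spike_count(inputs, leak=0.9, v_thresh=1.0):
    """Leaky integrate-and-fire neuron: count spikes over an input sequence.

    The membrane potential decays by `leak` each step, adds the new input,
    and resets to zero whenever it reaches `v_thresh` and emits a spike.
    """
    v = 0.0
    spikes = 0
    for x in inputs:
        v = leak * v + x
        if v >= v_thresh:
            spikes += 1
            v = 0.0
    return spikes


for p in (0.55, 0.95):
    route = "refine with spiking module" if needs_review(p) else "accept as is"
    print(f"p={p:.2f} confidence={confidence(p):.2f} -> {route}")

strong = lif_spike_count([0.6, 0.6, 0.0, 0.6, 0.6])  # sustained input
weak = lif_spike_count([0.05] * 5)                   # faint input, leaks away
```

The neuron stays silent for faint input and fires only when evidence accumulates, which is the sparse behavior that makes spiking models attractive for energy-efficient processing.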

How well the system works in practice

The researchers tested Bicom on thousands of public skin lesion images and an additional subject dataset, comparing it with widely used image models and several specialized disease-risk systems. They measured not only overall accuracy but also how often the model correctly identifies cancers, how well it avoids false alarms, and how reliably it separates benign from malignant cases across many decision thresholds. In all these measures, Bicom either matched or surpassed strong baselines, including modern hybrid networks. Careful ablation experiments showed that each piece—the multi-scale feature pyramid, the efficient attention, and the spiking refinement—adds measurable benefit, and together they yield the best and most stable performance.
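
The three kinds of measurement described here correspond to sensitivity (cancers correctly identified), specificity (false alarms avoided), and the area under the ROC curve (separation across all thresholds). The sketch below computes them on six made-up cases; the labels and scores are illustrative only and are not results from the study.

```python
def sensitivity_specificity(y_true, y_score, threshold=0.5):
    """Return (sensitivity, specificity) at one decision threshold."""
    tp = fn = tn = fp = 0
    for y, s in zip(y_true, y_score):
        predicted_malignant = s >= threshold
        if y == 1 and predicted_malignant:
            tp += 1
        elif y == 1:
            fn += 1
        elif predicted_malignant:
            fp += 1
        else:
            tn += 1
    return tp / (tp + fn), tn / (tn + fp)


def roc_auc(y_true, y_score):
    """Probability that a random malignant case outscores a random benign one.

    This pairwise form equals the area under the ROC curve; ties count half.
    """
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical labels (1 = malignant, 0 = benign) and model scores.
y_true = [1, 1, 1, 0, 0, 0]
y_score = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]

sens, spec = sensitivity_specificity(y_true, y_score)
auc = roc_auc(y_true, y_score)
print(f"sensitivity={sens:.3f} specificity={spec:.3f} auc={auc:.3f}")
```

Sensitivity and specificity depend on the chosen cutoff, whereas the area under the curve summarizes ranking quality without fixing one, which is why studies usually report all three.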

What this means for patients and clinics

To a lay reader, the main message is that the authors have built a more thoughtful kind of computer assistant for skin cancer risk: one that looks at lesions from multiple angles, uses its computing power efficiently, and knows when it might be wrong. By blending ideas from modern artificial intelligence with concepts borrowed from brain science, Bicom moves beyond one-shot guesses toward a more cautious, layered decision process. If validated on larger, more varied patient groups and made lightweight enough for everyday devices, systems like this could help clinicians spot dangerous lesions earlier and give patients more reliable reassurance when a suspicious spot is, in fact, safe.

Citation: Zhou, F., Hu, S., Du, X. et al. A brain-inspired computational framework for image-based risk assessment. Sci Rep 16, 10720 (2026). https://doi.org/10.1038/s41598-026-45033-y

Keywords: skin cancer, dermoscopic imaging, medical AI, risk prediction, brain-inspired computing