Clear Sky Science

The influence of structured reporting on the accuracy of head and neck sonographies

Why clear scan reports matter

When doctors use ultrasound to examine the head and neck, they rely on written reports to decide how to treat patients. If those reports are incomplete or confusing, important details can be missed, leading to delayed diagnoses or less effective surgery plans. This study asks a simple but crucial question: can a more structured, checklist-style way of writing ultrasound reports make them not only more complete, but also more correct?

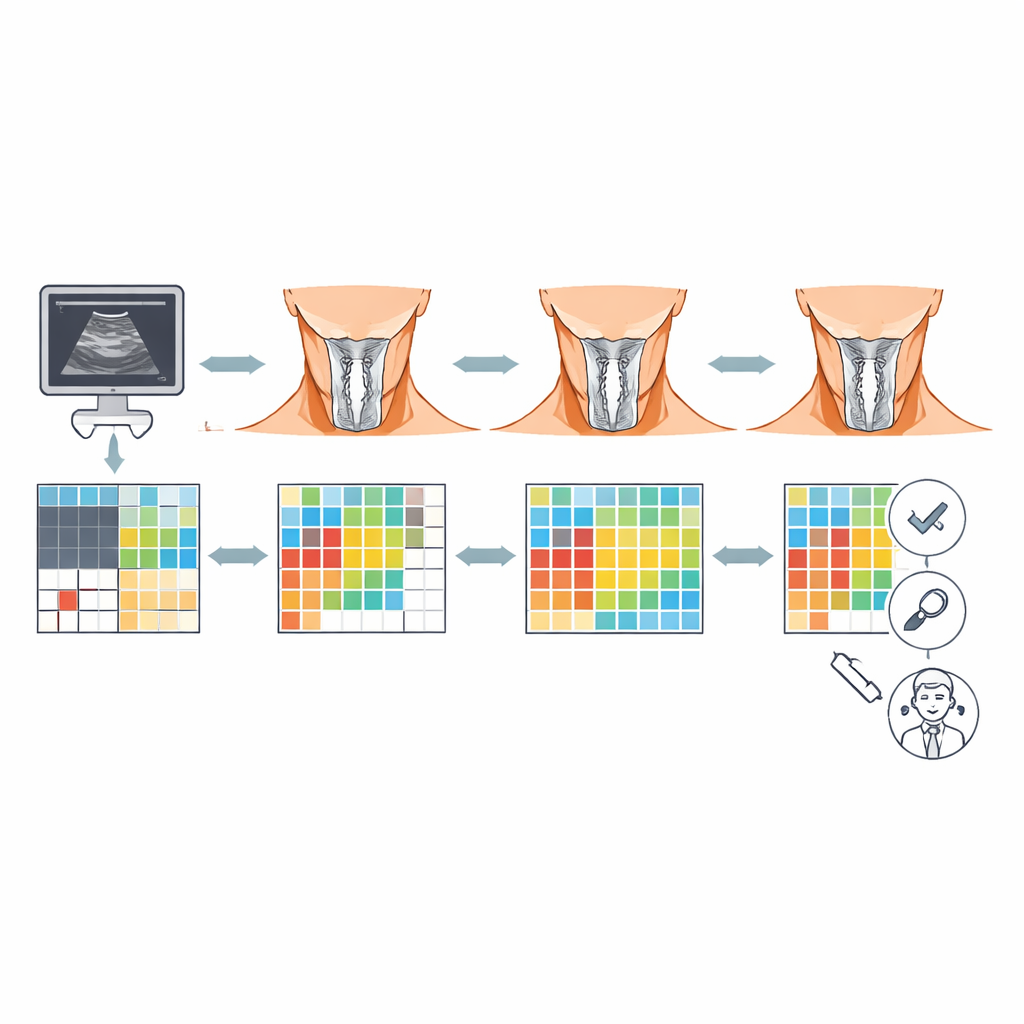

From free writing to guided checklists

Traditionally, many doctors describe ultrasound findings in free text, much like dictating a short letter. This flexible style allows personal phrasing but can easily skip key details or use unclear wording. In contrast, structured reporting guides the examiner through a standardized template: important regions of the head and neck are listed in order, and the doctor is prompted to confirm normal findings, point out abnormalities, and describe them in a uniform way. The authors suspected that such structured reports might not only cover more ground but also better reflect what expert examiners would write.
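To make the idea of a guided template concrete, here is a minimal sketch of how a checklist-style report could be represented in software. It is not the template used in the study: the region list, the class names, and the rule that abnormal findings need a description are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical region list; the study's actual template is not reproduced here.
REGIONS = ["thyroid", "salivary glands", "cervical lymph nodes"]


@dataclass
class RegionFinding:
    normal: bool
    description: str = ""


@dataclass
class StructuredReport:
    findings: dict = field(default_factory=dict)

    def record(self, region, normal, description=""):
        """Store the finding for one region of the checklist."""
        if region not in REGIONS:
            raise KeyError(f"unknown region: {region}")
        # Assumed rule: an abnormality must always be described.
        if not normal and not description:
            raise ValueError("abnormal findings need a description")
        self.findings[region] = RegionFinding(normal, description)

    def missing_regions(self):
        """List checklist regions the examiner has not addressed yet."""
        return [r for r in REGIONS if r not in self.findings]


report = StructuredReport()
report.record("thyroid", normal=True)
report.record("salivary glands", normal=False, description="stone in the duct")
still_open = report.missing_regions()
```

In this sketch, `still_open` contains the one region that has not been addressed, which is the kind of prompt a free-text report cannot give.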

How the study was set up

The researchers enrolled 128 doctors in training who were attending certified head and neck ultrasound courses. These participants were already familiar with ultrasound but usually wrote free-text reports in their everyday work. The group was randomly split: one half wrote conventional free-text reports, and the other half used a dedicated structured reporting template provided through an online platform. Each participant received two patient cases with brief clinical histories and real ultrasound images showing typical head and neck problems, such as inflamed lymph nodes, salivary stones, neck cysts, or thyroid disease. Their task was to write reports based only on the provided information, just as they would in a busy clinic.

Measuring completeness and correctness

To judge how good the reports were, the team first created expert “master reports” for each case. These master versions listed everything that should be mentioned: which areas were normal, which were diseased, and how exactly the findings should be described. They then built scoring sheets that broke reports into many small items—such as identifying a structure as normal, ruling out a disease, or accurately describing the size, location, and appearance of a lesion. Two experienced head and neck ultrasound specialists independently scored all reports. One score captured completeness (did the doctor mention all the necessary elements?), and a separate score captured accuracy (were the mentioned elements correctly described compared to the expert standard?). To make scores comparable across cases of different complexity, the researchers used percentages rather than raw point totals.
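The reason for using percentages can be shown with a short sketch. It assumes that each scoring sheet is simply a list of items marked as fulfilled or not; the function name and the example sheets are hypothetical, and the study's actual sheets may have weighted items differently.

```python
def percent_score(item_results):
    """Return the share of fulfilled items as a percentage (0 to 100)."""
    if not item_results:
        raise ValueError("a scoring sheet needs at least one item")
    # True counts as 1 and False as 0 when summed.
    return 100.0 * sum(item_results) / len(item_results)


# Hypothetical sheets: a simple case with 8 items and a complex one with 20.
simple_case = [True] * 6 + [False] * 2
complex_case = [True] * 15 + [False] * 5

simple_pct = percent_score(simple_case)
complex_pct = percent_score(complex_case)
```

The raw totals differ (6 points versus 15), yet both reports fulfil the same share of their sheet, so the percentage puts them on the same scale. The same function could be applied separately to a completeness sheet and an accuracy sheet.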

What the numbers revealed

The difference between the two reporting styles was striking. On average, structured reports contained far more of the information that should be there: about 72% completeness versus only about 21% for free-text reports. Accuracy followed a similar pattern: structured reports reached roughly 77% accuracy compared with about 13% for free text. This advantage held true for each of the ten clinical cases examined. Statistical analyses confirmed that using structured reporting was the only factor that reliably predicted better completeness and accuracy; gender, medical specialty, formal ultrasound certification level, and the number of prior exams did not change the outcome. Interestingly, within the structured group, more complete reports also tended to be more accurate, whereas in the free-text group, mentioning more items did not clearly translate into describing them correctly.

Limits and future directions

The authors note that the study has limitations. Participants reported on preselected cases based on images, rather than performing live scans themselves, so the results reflect how well people write up findings, not how well they acquire them. The structured-report group also received brief training on how to use the template, which might have given them a small learning advantage. Finally, the measure of “report accuracy” captured how closely a report matched the expert master report, including the correct documentation of normal regions. It did not directly test whether doctors reached the correct final diagnosis in real-world practice. Future research, the authors suggest, should study structured reporting in everyday practice, including its impact on true diagnostic accuracy, teamwork between specialties, and ultimately patient outcomes.

What this means for patients and doctors

This study provides strong evidence that using structured templates for head and neck ultrasound reporting leads to reports that are both more complete and more correct than traditional free text. For patients, that can translate into clearer communication among doctors, better surgical planning, and fewer missed details. For healthcare systems, structured reports also create cleaner, more uniform data that can feed into big-data research and future artificial intelligence tools. In simple terms, moving from free-form notes to well-designed checklists appears to be a powerful step toward safer, more consistent care in head and neck imaging.

Citation: Weimer, J.M., Künzel, J., Raczek, C. et al. The influence of structured reporting on the accuracy of head and neck sonographies. Sci Rep 16, 8560 (2026). https://doi.org/10.1038/s41598-026-43561-1

Keywords: structured reporting, head and neck ultrasound, diagnostic accuracy, medical education, clinical documentation