Clear Sky Science

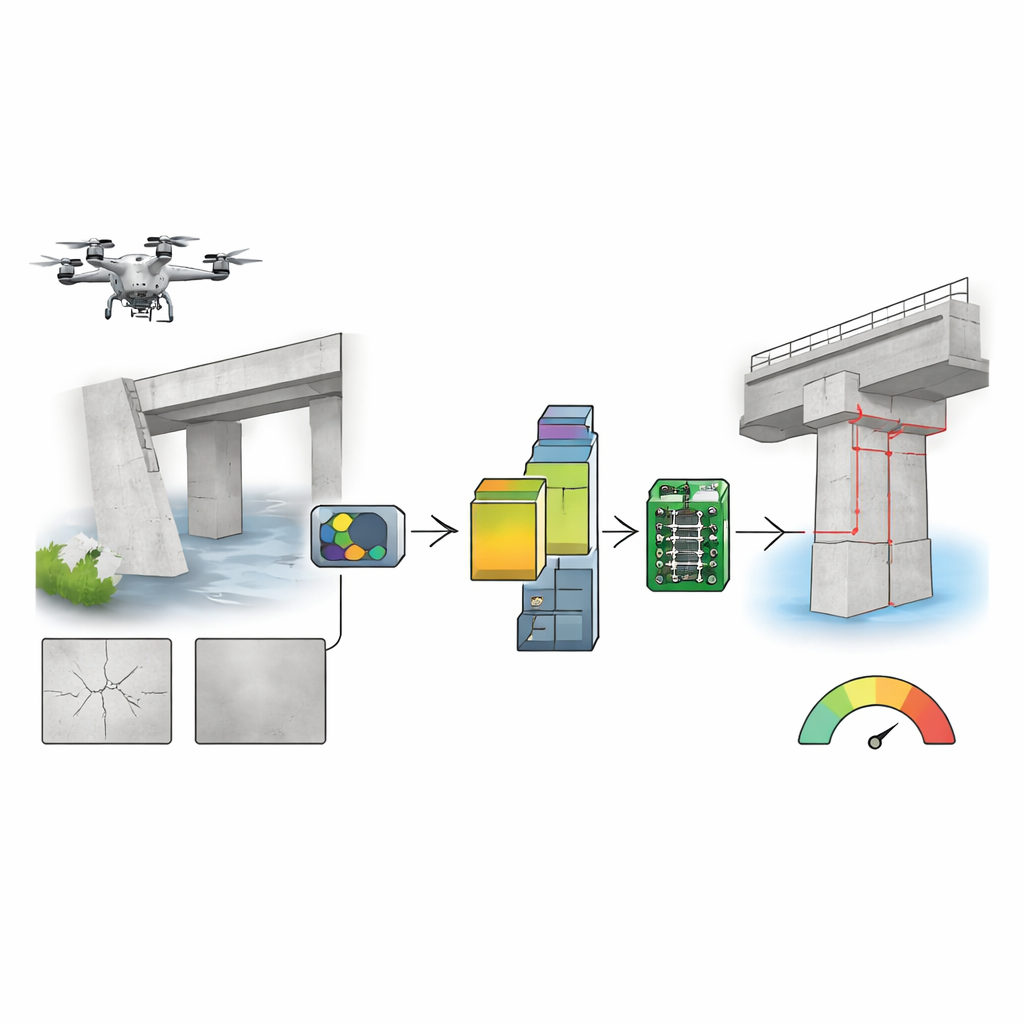

TinyML pipeline for efficient crack classification in UAV-based structural health inspections

Smarter Eyes in the Sky

Bridges, dams, and other critical structures age just like people do, and tiny cracks can be early warning signs of future failures. Engineers increasingly send small drones to photograph these surfaces, but today many of those images must be sent to distant servers for analysis, burning battery power and risking data privacy. This paper explores how to shrink the crack-detecting "brains" into a tiny, milliwatt-scale chip that can ride on the drone itself, making inspections faster, safer, and far more efficient.

Why Cracks Matter

Traditional methods of tracking the health of structures often rely on contact sensors bolted or glued to concrete and steel. These systems can be expensive to install and tend to pick up problems only after damage has progressed. Visual inspection offers a more direct view, but sending human inspectors onto scaffolding or into traffic lanes is slow, risky, and subjective. Small unmanned aerial vehicles (UAVs) equipped with cameras promise a better way: they can quickly sweep along bridge decks and walls, capturing thousands of detailed photos that reveal hairline fractures. The challenge is what to do with all that data when the drone has only limited battery life and often unreliable network connections.

The Problem with Sending Everything to the Cloud

Most current systems follow an "edge acquisition–cloud inference" pattern. The drone simply acts as a flying camera, streaming images to a powerful computer elsewhere that runs a deep-learning model to decide whether each patch of concrete contains a crack. This approach makes sense from a computing standpoint, but it comes with major drawbacks. High-quality image streaming drains the drone’s battery, cutting flight time dramatically. If the wireless link drops or weakens, the inspection mission can stall at exactly the wrong moment. And piping detailed images of strategic infrastructure to remote servers raises understandable privacy and security concerns. These tensions motivate a different approach: putting the intelligence directly on the drone, on hardware barely more powerful than a digital watch.

Shrinking the Brain to Fit a Tiny Chip

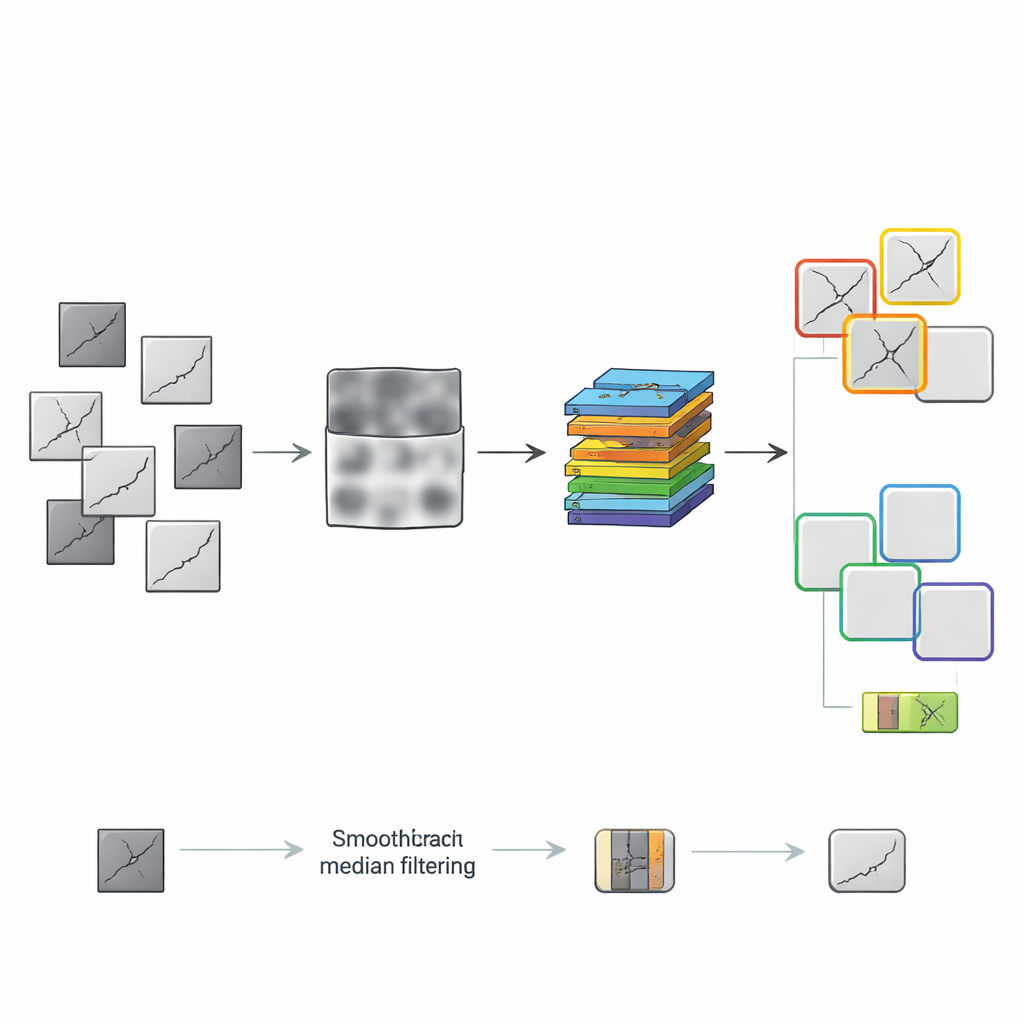

The authors built an end-to-end pipeline that runs on a low-power microcontroller, the STM32H7, using a compact neural network called MobileNetV1x0.25, a version of MobileNetV1 whose layers are slimmed to a quarter of their usual width. Rather than inventing a new model, they focused on everything around it: how the images are preprocessed and how the model is compressed. They used a widely studied dataset of over 50,000 concrete images, split into small patches labeled "crack" or "no crack," then trained and tested different ways of preparing these patches for the tiny model. One route followed a handcrafted sequence of steps: converting to grayscale, boosting contrast, removing noise, smoothing, and finally turning images into stark black-and-white silhouettes. Another route let a "greedy" search strategy build a preprocessing chain step by step, extending the chain only when the added step actually improved the model's performance.
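To make the "greedy" idea concrete, here is a minimal sketch of greedy forward selection over preprocessing steps. It is an illustration under stated assumptions, not the authors' code: the function `greedy_chain`, the step names, and the scores in `TOY_SCORES` are all hypothetical, and in a real study the `score` function would train and validate the model on patches processed by the candidate chain.

```python
from typing import Callable, Sequence


def greedy_chain(
    candidates: Sequence[str],
    score: Callable[[tuple[str, ...]], float],
    max_len: int = 5,
) -> tuple[tuple[str, ...], float]:
    """Grow a preprocessing chain one step at a time (hypothetical helper)."""
    chain: tuple[str, ...] = ()
    best = score(chain)  # baseline: no preprocessing at all
    while len(chain) < max_len:
        # Try appending every step that is not in the chain yet.
        trials = [(score(chain + (c,)), chain + (c,))
                  for c in candidates if c not in chain]
        if not trials:
            break
        top_score, top_chain = max(trials)
        if top_score <= best:
            break  # no extension improves the score, so stop here
        chain, best = top_chain, top_score
    return chain, best


# Made-up validation scores, for illustration only (not from the paper).
TOY_SCORES = {
    (): 0.80,
    ("grayscale",): 0.85,
    ("median",): 0.82,
    ("binarize",): 0.60,
    ("grayscale", "median"): 0.90,
    ("grayscale", "binarize"): 0.70,
    ("grayscale", "median", "binarize"): 0.75,
}


def toy_score(chain: tuple[str, ...]) -> float:
    # Stand-in for "train and validate the model with this chain".
    return TOY_SCORES.get(chain, 0.0)


best_chain, best_score = greedy_chain(
    ["grayscale", "median", "binarize"], toy_score)
print(best_chain, best_score)
```

With these invented scores the search keeps grayscale, then adds the median filter, and stops when adding binarization would lower the score. A greedy search is fast because it never revisits earlier choices, which also means it can miss a combination that only pays off after an initially unhelpful step.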

Finding the Sweet Spot in the Pipeline

The tests revealed that more processing is not always better. The manual pipeline, which ended with harsh binarization, actually harmed the neural network, stripping away subtle shading the model needed to see fine cracks. By contrast, the greedy search found that a simpler pair of steps, grayscale conversion followed by median filtering to gently smooth noise, gave the best results. On top of this, the team systematically explored four ways to squeeze the model: converting its numbers from full precision to eight-bit integers after training (quantization), training it while it "pretended" to be quantized (quantization-aware training), trimming away less important weights (pruning), and clustering similar weight values together (weight clustering). They tried these techniques alone and in combination, then deployed the resulting models onto the microcontroller board and measured not just accuracy but also memory use, processing time, and energy per decision.
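The first of these compression ideas, storing numbers as eight-bit integers, can be sketched in a few lines of NumPy. This is a generic affine quantization scheme written for illustration, assuming a single scale and zero point for the whole array; the helper names `quantize_int8` and `dequantize` are hypothetical, and the article does not say which toolchain or exact scheme the authors used.

```python
import numpy as np


def quantize_int8(x: np.ndarray):
    """Map a float array onto int8 with one scale and zero point (sketch)."""
    lo = min(float(x.min()), 0.0)  # the representable range must include 0
    hi = max(float(x.max()), 0.0)
    scale = (hi - lo) / 255.0      # size of one integer step in float units
    if scale == 0.0:
        scale = 1.0                # all-zero input: any scale works
    zero_point = int(round(-128 - lo / scale))  # integer that represents 0.0
    q = np.clip(np.round(x / scale) + zero_point, -128, 127).astype(np.int8)
    return q, scale, zero_point


def dequantize(q: np.ndarray, scale: float, zero_point: int) -> np.ndarray:
    """Recover approximate float values from the int8 codes."""
    return (q.astype(np.float32) - zero_point) * scale


# Random stand-in "weights"; real model weights would be used in practice.
rng = np.random.default_rng(0)
weights = rng.normal(0.0, 0.5, size=1000).astype(np.float32)

q, scale, zero_point = quantize_int8(weights)
recovered = dequantize(q, scale, zero_point)
max_err = float(np.abs(weights - recovered).max())
print(q.dtype, q.nbytes, weights.nbytes, max_err <= scale)
```

Each value now occupies one byte instead of four, at the cost of a rounding error no larger than one quantization step. Quantization-aware training goes further by simulating this rounding during training so the network can adapt to it.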

Tiny Computer, Big Performance

One configuration emerged as the best all-around choice: a grayscale-plus-median-filter input feeding a model that combines pruning with quantization-aware training in an eight-bit format. This compact setup achieved an F1-score of 0.938, a jump of more than 11 percentage points over earlier on-device crack detectors. (The F1-score is the harmonic mean of precision and recall, so it balances catching real cracks against avoiding false alarms.) At the same time, it needed only about 2.9 megabytes of working memory, 309 kilobytes of program storage, and less than half a second to process each image patch. Each decision consumed roughly 0.6 joules of energy. When mounted on a DJI Mini 4 Pro drone, running this crack classifier continuously would cut flight time by only about 4 percent, compared with roughly a quarter of the battery drained by popular, much heavier edge-computing boards.
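For readers who want to see how an F1-score is computed, the short sketch below works it out from counts of correct and incorrect decisions. The counts are hypothetical and chosen only to keep the arithmetic easy to follow; they are not taken from the paper.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """F1 from true positives, false positives, and false negatives."""
    precision = tp / (tp + fp) if tp + fp else 0.0  # share of alarms that are real
    recall = tp / (tp + fn) if tp + fn else 0.0     # share of real cracks caught
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts for illustration:
# 80 cracks caught, 10 false alarms, 30 cracks missed.
example_f1 = f1_score(tp=80, fp=10, fn=30)
print(round(example_f1, 3))  # about 0.8
```

Because F1 ignores the (usually very large) number of correctly dismissed healthy patches, it stays informative even when cracks are rare, a situation in which plain accuracy can look deceptively high.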

What This Means for Real-World Inspections

For non-specialists, the key message is that serious structural assessments no longer require shipping sensitive images to distant data centers or carrying bulky, power-hungry computers into the sky. By carefully tuning how images are cleaned up and how the neural network is compressed, the authors show that a thumb-sized chip can reliably spot cracks in concrete while barely nibbling at a drone’s battery. The system remains reasonably robust to blur from motion and to changing lighting, and it behaves sensibly even when crack images are rare among many healthy patches. Together, these results push drone-based inspections closer to a future where swarms of small, inexpensive UAVs can quietly patrol our infrastructure, spotting trouble early with smart, efficient onboard intelligence.

Citation: Zhang, Y., Nürnberg, A., Rau, L.S.M. et al. TinyML pipeline for efficient crack classification in UAV-based structural health inspections. Sci Rep 16, 8964 (2026). https://doi.org/10.1038/s41598-026-43534-4

Keywords: drone inspection, concrete cracks, tiny machine learning, structural health monitoring, edge AI