Clear Sky Science

Axes of self-motion and object motion shape how we perceive world-relative motion

Why your view of motion can be surprisingly tricky

Every time you walk down a hallway, ride a bike, or explore a virtual reality game, the entire scene appears to move across your eyes. Yet you can still tell which objects are actually moving in the world and which are just “sliding” on your retina because you are moving. This study asks a deceptively simple question: how does your brain separate your own motion from the motion of other things, and does it matter whether you and the object move in the same direction or at right angles to each other?

How the eye’s motion picture is sorted out

The pattern of shifting light that falls on your eyes as you move is called optic flow. Every point in the scene sweeps across your vision in a way that depends on how far away it is and how you are travelling. When another object moves at the same time, its image motion is a blend of your motion and its own. The leading idea is that the brain performs a kind of subtraction, removing the part of the motion caused by self-movement to recover “world-relative” object motion. This process is known as flow parsing. Real scenes, and high-quality virtual reality, are rich in depth cues such as apparent size and the slight difference between the two eyes’ views, and those cues might help the brain do this subtraction more accurately.
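The subtraction idea can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions that do not come from the study: a pinhole eye with focal length 1, pure translation of the viewer, and known depth. The function names and the example numbers are hypothetical.

```python
# Illustrative sketch of flow parsing as vector subtraction.
# Assumptions (not from the study): pinhole projection, focal length 1,
# viewer translation only, and depth known exactly.

def image_velocity(point, velocity, focal=1.0):
    """Image-plane velocity of a 3D point moving relative to the eye.

    point    -- (X, Y, Z) position relative to the eye, Z > 0 is depth
    velocity -- (Vx, Vy, Vz) velocity relative to the eye
    """
    X, Y, Z = point
    Vx, Vy, Vz = velocity
    # Time derivative of x = f*X/Z and y = f*Y/Z.
    u = focal * (Vx * Z - X * Vz) / Z**2
    v = focal * (Vy * Z - Y * Vz) / Z**2
    return (u, v)

def parse_flow(retinal, self_flow, gain=1.0):
    """Remove a fraction `gain` of the self-motion flow from retinal motion."""
    return (retinal[0] - gain * self_flow[0],
            retinal[1] - gain * self_flow[1])

# Hypothetical example: viewer moves forward, ball moves sideways.
ball_position = (0.5, 0.0, 2.0)   # slightly right of straight ahead
viewer_motion = (0.0, 0.0, 1.0)   # forward
ball_motion = (0.2, 0.0, 0.0)     # rightward, in world coordinates

# Relative to the eye, the ball moves with (ball motion - viewer motion).
relative = tuple(b - s for b, s in zip(ball_motion, viewer_motion))
retinal = image_velocity(ball_position, relative)

# Flow a stationary point at the same location would have.
self_flow = image_velocity(ball_position, tuple(-s for s in viewer_motion))

parsed = parse_flow(retinal, self_flow, gain=1.0)
true_object = image_velocity(ball_position, ball_motion)
```

With `gain=1.0` the parsed motion matches the image motion the ball would produce if the viewer were standing still. A gain below one leaves part of the self-motion flow mixed into the perceived object motion.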

Testing motion in a virtual room

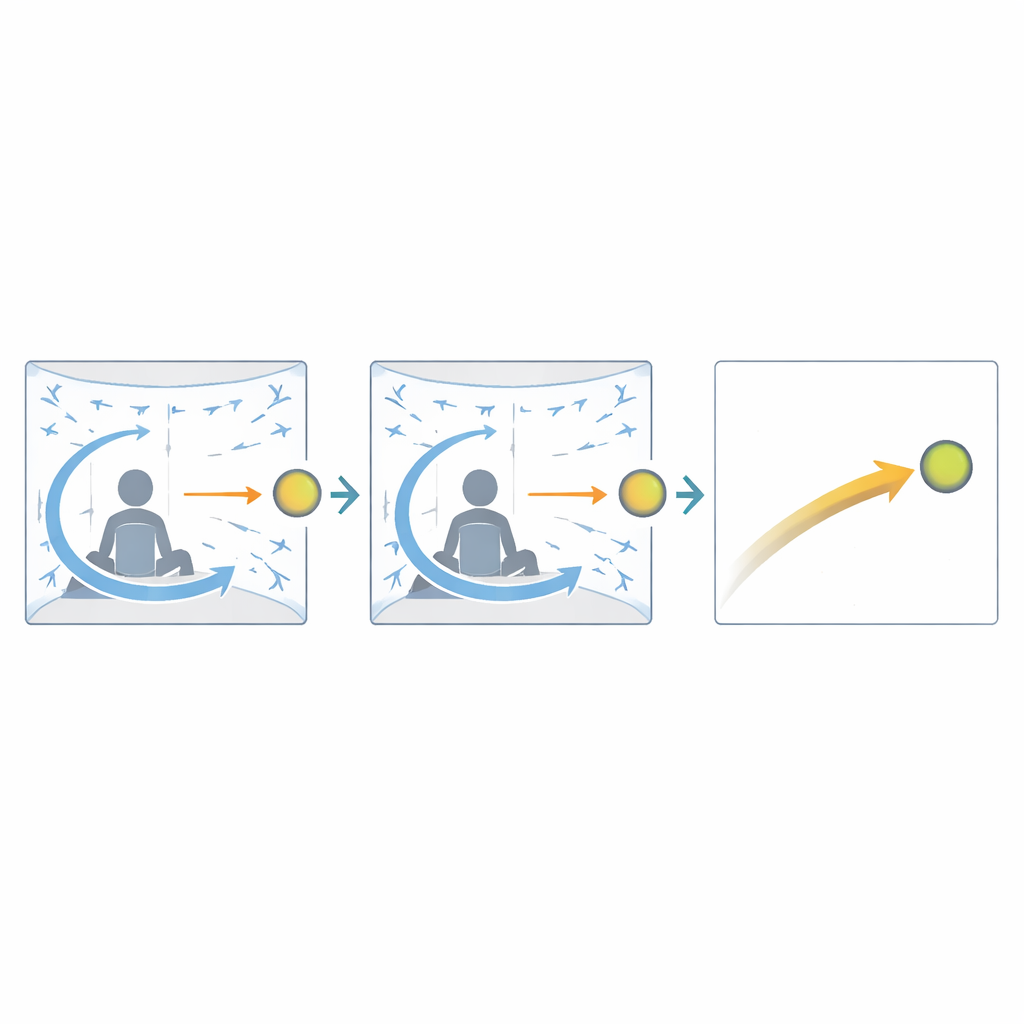

The researchers put volunteers into a large curved-screen 3D display that filled most of their field of view. In the first experiment, people looked into a virtual room with a tiled floor, walls, and ceiling, and a bright ball placed slightly to the left or right of where they were looking. On each brief trial, both the viewer and the ball moved: the scene simulated the viewer moving forward or backward, or sliding left or right, while the ball itself moved either in depth (forward–backward) or sideways (left–right). After half a second, the scene vanished and participants reported whether the ball seemed to move one way or the opposite way along a given line. By adjusting the ball’s motion over many trials, the team found the setting where the ball appeared still relative to the scene and used this to compute a “gain” that indicates how completely self-motion had been factored out.
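To make the idea of a gain concrete, here is a minimal sketch of how one could be derived from responses like these. It is not the authors' analysis, whose details are not given here. It assumes a simple linear model for the parallel-motion case, with ball speed and self-motion speed expressed in the same units, so that the ball looks still when its speed equals (1 - gain) times the self-motion speed. The function names and the response data are invented for illustration.

```python
# Hypothetical sketch: estimate the ball speed that looks stationary,
# then convert it to a flow-parsing gain. Not the study's actual method.

def point_of_subjective_stationarity(speeds, p_positive):
    """Ball speed at which 'moved in the positive direction' is reported
    50% of the time, found by linear interpolation between tested speeds."""
    pairs = sorted(zip(speeds, p_positive))
    for (s0, p0), (s1, p1) in zip(pairs, pairs[1:]):
        crosses_half = (p0 - 0.5) * (p1 - 0.5) <= 0
        if crosses_half and p0 != p1:
            return s0 + (0.5 - p0) * (s1 - s0) / (p1 - p0)
    raise ValueError("responses never cross 50%")

def flow_parsing_gain(pss, self_speed):
    """Gain under the assumed linear model: pss = (1 - gain) * self_speed.

    gain = 1 -> ball looks still only when it is still in the world
    gain = 0 -> no compensation for self-motion
    """
    return 1.0 - pss / self_speed

# Invented example data: tested ball speeds and the proportion of
# trials on which the ball was reported as moving in the positive direction.
speeds = [-0.4, -0.2, 0.0, 0.2, 0.4, 0.6]
p_positive = [0.05, 0.10, 0.20, 0.40, 0.70, 0.95]

pss = point_of_subjective_stationarity(speeds, p_positive)
gain = flow_parsing_gain(pss, self_speed=1.0)
```

In practice a fitted psychometric function would usually replace the linear interpolation, but the logic is the same: find the stimulus that looks stationary, then ask how much self-motion must have been removed for that to happen.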

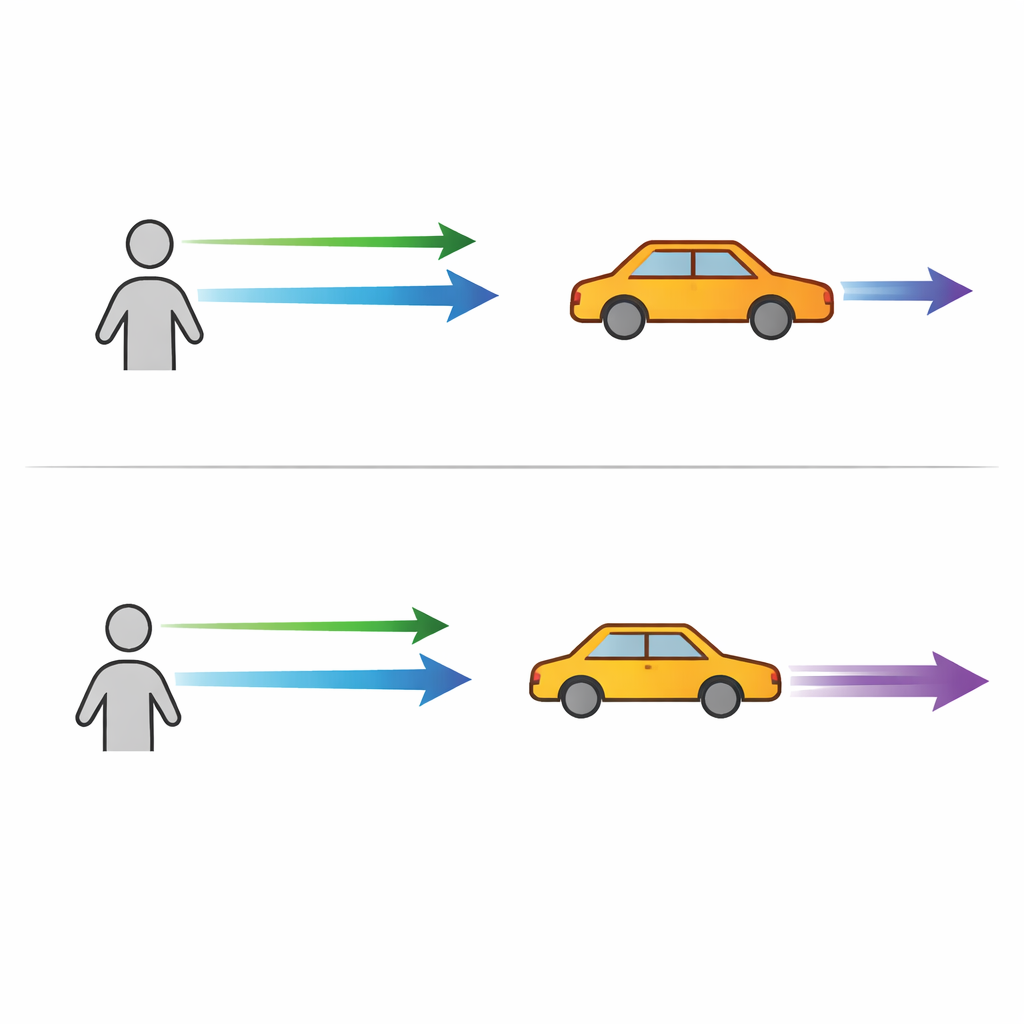

When crossing paths helps the brain

In the room scene, the brain’s flow parsing was rarely perfect: gains typically fell between zero (no compensation for self-motion) and one (fully correct world-relative motion). Crucially, performance depended on the relationship between the viewer’s path and the ball’s path. When the viewer slid left–right, the brain did a better job for balls moving forward or backward than for balls moving left–right. Conversely, when the viewer moved forward or backward, it was easier to judge balls that moved sideways than those that also moved in depth. In other words, motion was perceived more accurately when self-motion and object motion were at right angles rather than parallel. The exact side of the ball, how far out it was, and whether the viewer was heading toward or away from it had little effect.

Floating objects and stronger depth cues

In a second experiment, the simple room was replaced by a loose cloud of colored cubes surrounding the ball, more like a classic laboratory display. These nearby objects provided stronger depth information and richer local motion around the target. The same patterns of viewer and ball motion were tested. Again, the key result was an advantage for orthogonal motion: people were better at separating out self-motion when they and the ball moved along different axes than when both travelled along the same line. In these cluttered scenes, gains were generally higher than in the room scene. In one condition, sideways-moving balls during forward–backward self-motion, performance was so good that it was statistically indistinguishable from perfect compensation.

What this means for everyday life and virtual worlds

To a lay observer, the take‑home message is that your brain does not rely on a single cue to understand motion in the world. It combines the sweeping background pattern from your own movement with signals about how far away things are, including changes in their apparent size and the subtle differences seen by each eye. This study shows that when your path and an object’s path cross at right angles, those distance and depth cues change more, giving your brain extra leverage to untangle what is really moving where. When everything lines up along the same direction, those helpful changes are weaker and your judgments are less accurate. For designers of virtual reality and training simulators, this means that layouts and motion patterns that emphasize clear depth relationships and crossing motion can make it easier for users to judge object motion correctly, bringing virtual experiences closer to how we perceive motion in the real world.

Citation: Guo, H., Allison, R.S. Axes of self-motion and object motion shape how we perceive world-relative motion. Sci Rep 16, 8914 (2026). https://doi.org/10.1038/s41598-026-42955-5

Keywords: optic flow, motion perception, virtual reality, depth cues, self-motion