Clear Sky Science

Development and evaluation of a multistage transfer learning framework for robust medical image analysis

Why smarter image reading matters

Modern medicine leans heavily on pictures—from mammograms to chest X-rays—to catch diseases early and guide treatment. But teaching computers to read these images as accurately as human experts usually demands huge, carefully labeled datasets that many hospitals simply do not have. This study introduces a new way to train artificial intelligence systems that makes better use of existing images, including inexpensive lab photos of cancer cells, to boost performance on real-world scans while easing privacy and data demands.

From everyday photos to hospital scans

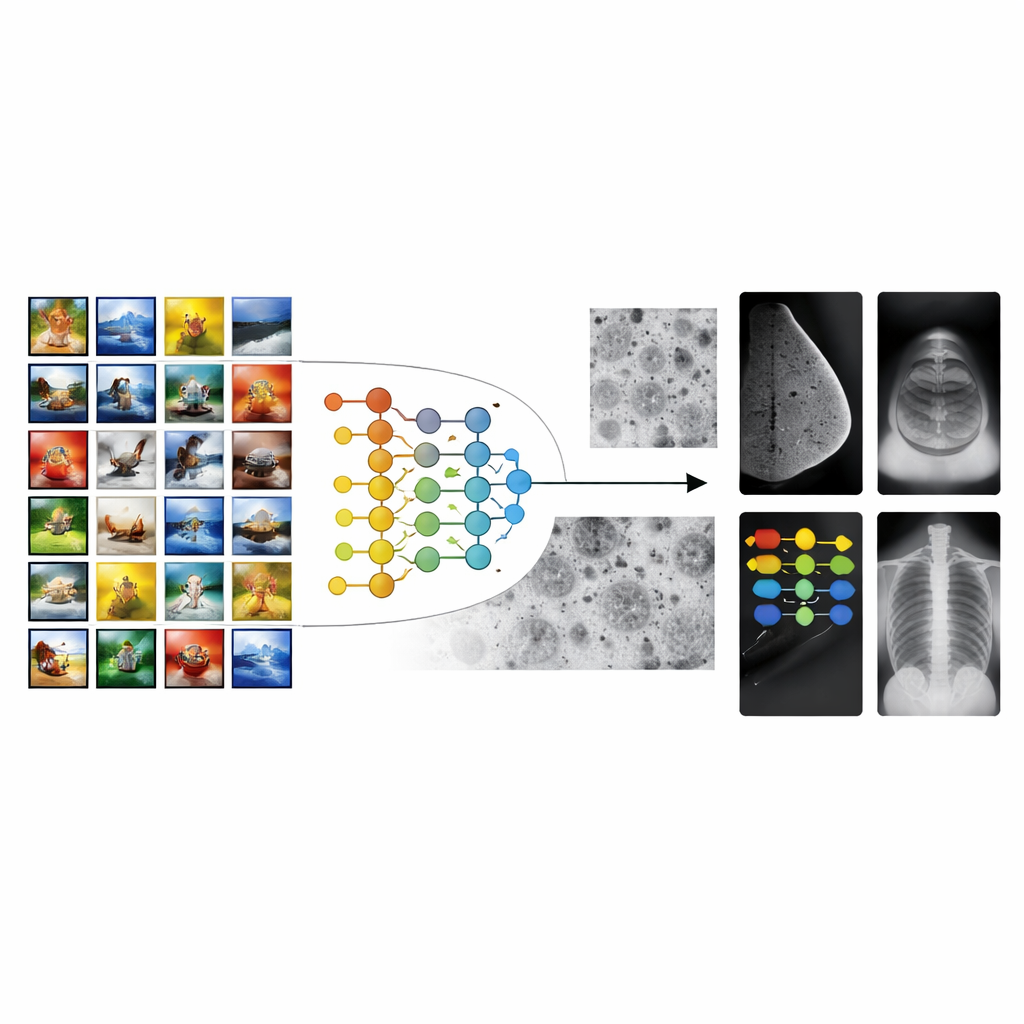

Most medical imaging AI systems start from models trained on millions of everyday pictures, such as animals, objects, and landscapes. This strategy, known as transfer learning, gives algorithms a “head start” on recognizing shapes and textures. However, there is a large gap between vacation photos and medical scans. The patterns that matter in a mammogram or an X-ray (tiny specks, faint shadows, or subtle tissue textures) look nothing like objects in ordinary photos. As a result, conventional transfer learning can fall short, producing tools that perform well in the lab but struggle across different hospitals, machines, or patient groups.

Building a bridge with cell images

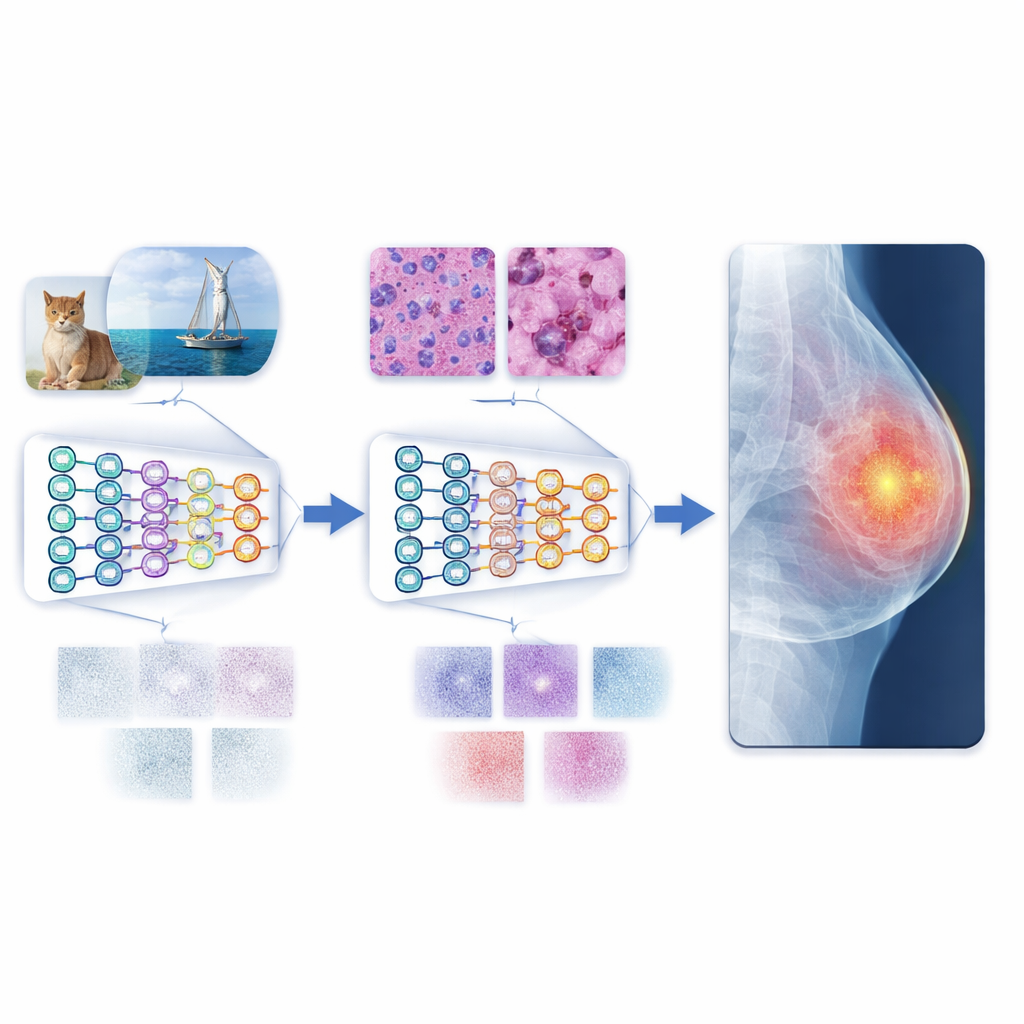

The authors propose a multistage transfer learning (MSTL) framework that adds a crucial middle step between general images and clinical scans. After first training on a large natural-image collection, the model is fine-tuned on microscopic pictures of cancer cell lines grown in the lab. These cell images share many visual features with medical scans: dense, crowded structures; fine-grained textures; and subtle variations in brightness. They are also relatively cheap to produce, can be generated in large numbers, and avoid the privacy concerns tied to patient data. By first adapting to this cell-image world, the model learns features that are more relevant to disease patterns before it ever sees a mammogram, ultrasound, or X-ray.
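
The staging idea can be sketched in a few lines. This is a toy illustration, not the authors' code: it swaps the deep networks for a tiny logistic-regression model and replaces the real image collections with synthetic data. The helpers `make_stage_data`, `fine_tune` and `multistage_transfer`, and all weight vectors below, are hypothetical. What the sketch does show is the mechanism: each stage starts from the weights the previous stage ended with, so the cell-image stage sits between natural-image pretraining and the final medical fine-tuning.

```python
import math
import random
from typing import List, Sequence, Tuple

# (feature vector, binary label): a hypothetical stand-in for (image, diagnosis)
Example = Tuple[List[float], int]


def dot(w: Sequence[float], x: Sequence[float]) -> float:
    return sum(wi * xi for wi, xi in zip(w, x))


def sigmoid(z: float) -> float:
    # Numerically stable logistic function
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def make_stage_data(true_w: Sequence[float], n: int, seed: int) -> List[Example]:
    """Hypothetical synthetic dataset whose labels follow the direction true_w."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = [rng.gauss(0.0, 1.0) for _ in true_w]
        data.append((x, 1 if dot(true_w, x) > 0 else 0))
    return data


def fine_tune(weights: Sequence[float], data: List[Example],
              epochs: int = 20, lr: float = 0.1) -> List[float]:
    """Plain SGD on the logistic loss, starting from the given weights."""
    w = list(weights)  # copy: the incoming weights are left unchanged
    for _ in range(epochs):
        for x, y in data:
            err = sigmoid(dot(w, x)) - y  # gradient of log-loss w.r.t. the logit
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w


def multistage_transfer(init: Sequence[float],
                        stages: List[Tuple[str, List[Example]]]) -> List[float]:
    """Run the stages in order; each one is initialised from the previous result."""
    w = list(init)
    for _name, data in stages:
        w = fine_tune(w, data)
    return w


def accuracy(w: Sequence[float], data: List[Example]) -> float:
    hits = sum((sigmoid(dot(w, x)) >= 0.5) == (y == 1) for x, y in data)
    return hits / len(data)


# Toy stand-ins: the "cell line" direction is deliberately placed closer to
# the "medical" direction than the "natural image" direction is.
natural = make_stage_data([1.0, -1.0, 0.5, 0.0], 200, seed=0)
cells = make_stage_data([0.2, -0.5, 1.0, 0.8], 200, seed=1)
medical = make_stage_data([0.0, -0.4, 1.0, 1.0], 50, seed=2)  # small labelled set
medical_test = make_stage_data([0.0, -0.4, 1.0, 1.0], 200, seed=3)

w = multistage_transfer([0.0] * 4, [("natural images", natural),
                                    ("cancer cell lines", cells),
                                    ("medical scans", medical)])
acc = accuracy(w, medical_test)
print(f"held-out accuracy on toy medical data: {acc:.2f}")
```

Dropping the middle entry from the stage list gives conventional transfer learning, and keeping only the last entry (starting from zero weights) corresponds to training from scratch.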

Testing across different types of scans

To evaluate this strategy, the researchers trained both traditional convolutional neural networks and newer vision transformers on three common imaging tasks: breast cancer detection in mammograms, breast lesion analysis in ultrasound, and pneumonia detection in chest X-rays. They compared three training styles: starting from scratch, using conventional transfer learning from natural images, and using the new multistage method with cancer cell images as the bridge. The multistage approach consistently delivered the best results, often pushing accuracy close to perfect on the tested datasets. Vision transformers, which can capture long-range patterns across an entire image, outperformed standard convolutional networks in nearly every setting, especially when paired with the multistage training.
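
One way to see why this comparison is fair is to write the three regimes down as stage schedules. In the hypothetical configuration below, every regime ends with the same medical fine-tuning stage and differs only in what comes before it, so differences in results can be attributed to the pretraining path. The model-family and task labels are illustrative placeholders, not the study's actual configuration files or architecture list.

```python
from itertools import product
from typing import Dict, List

# Each regime is an ordered list of training stages (hypothetical labels).
REGIMES: Dict[str, List[str]] = {
    "from scratch": ["medical"],
    "conventional transfer": ["natural images", "medical"],
    "multistage transfer": ["natural images", "cancer cell lines", "medical"],
}

TASKS = [
    "mammography: breast cancer detection",
    "ultrasound: breast lesion analysis",
    "chest X-ray: pneumonia detection",
]

# Placeholders for the two model families compared; specific architectures omitted.
MODEL_FAMILIES = ["CNN", "vision transformer"]


def build_runs() -> List[dict]:
    """Cross every task, model family and regime into one run specification."""
    return [
        {"task": task, "model": model, "regime": regime, "stages": REGIMES[regime]}
        for task, model, regime in product(TASKS, MODEL_FAMILIES, REGIMES)
    ]


runs = build_runs()
print(len(runs), "runs")  # prints: 18 runs (3 tasks x 2 families x 3 regimes)
```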

Measuring how well knowledge transfers

Beyond simple accuracy scores, the team examined how easily features learned in one stage carried over to the next. They used three transferability measures that reflect how compatible the learned image patterns are with new tasks. For mammograms and chest X-rays in particular, these measures closely tracked actual performance, especially for the strongest model, a base vision transformer (ViTB-16). This tight relationship suggests that the intermediate cell-image stage does more than just improve numbers; it produces representations that genuinely “fit” medical images better. Additional experiments showed that cutting the number of cell images in half hurt performance, and replacing them with other medical modalities (such as endoscopy or eye images) was less effective, underscoring the special value of cancer cell lines as a bridge.
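
The three transferability measures are not named here, so as a stand-in, the sketch below implements one widely used score, LEEP (Log Expected Empirical Prediction); it may or may not be among the measures the authors used. LEEP runs the previous stage's model on the new task's images and asks how well its soft predictions over the old labels can be mapped onto the new labels; values closer to 0 indicate easier transfer. The function name `leep` and the toy inputs are hypothetical, and the function works on precomputed probability vectors rather than on images.

```python
import math
from typing import List, Sequence


def leep(source_probs: Sequence[Sequence[float]],
         target_labels: Sequence[int],
         num_target_classes: int) -> float:
    """Mean log-likelihood of the new labels under an 'expected empirical
    predictor' built from the source model's outputs.

    source_probs[i][z]: source model's probability of old label z for image i
    target_labels[i]:   new-task label of image i, in range(num_target_classes)
    """
    n = len(source_probs)
    num_source = len(source_probs[0])

    # Empirical joint distribution P(y, z) over new labels y and old labels z
    joint: List[List[float]] = [[0.0] * num_source for _ in range(num_target_classes)]
    for probs, y in zip(source_probs, target_labels):
        for z, p in enumerate(probs):
            joint[y][z] += p / n

    # Marginal P(z) and conditional P(y | z)
    marginal = [sum(joint[y][z] for y in range(num_target_classes))
                for z in range(num_source)]
    cond = [[joint[y][z] / marginal[z] if marginal[z] > 0 else 0.0
             for z in range(num_source)]
            for y in range(num_target_classes)]

    # Average log of the expected empirical prediction for each true label
    total = 0.0
    for probs, y in zip(source_probs, target_labels):
        total += math.log(sum(cond[y][z] * p for z, p in enumerate(probs)))
    return total / n


labels = [0, 0, 1, 1]
# Source outputs that line up perfectly with the new labels
aligned = leep([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]], labels, 2)
# Source outputs that carry no information about the new labels
uninformative = leep([[0.5, 0.5]] * 4, labels, 2)
print(aligned, uninformative)  # aligned is 0.0; uninformative is about log(0.5) = -0.693
```

To check whether such scores track fine-tuned accuracy across models, as the study reports for mammograms and chest X-rays, one could correlate per-model scores with per-model accuracies, for example with `statistics.correlation` (Python 3.10+).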

Toward more reliable automated diagnosis

In everyday terms, the study shows that teaching an AI system to read lab-grown cell pictures before hospital scans makes it a more skilled and reliable “reader” of medical images. This multistage path reduces the mismatch between colorful everyday photos and the muted, complex patterns in clinical images, allowing the model to generalize better even when only modest amounts of labeled medical data are available. Combined with modern vision transformers, the approach delivers state-of-the-art performance on several benchmark datasets. While more diverse data and broader tests are still needed, the framework points toward scalable, privacy-friendly tools that could support doctors in diagnosing disease more accurately and consistently around the world.

Citation: Ayana, G., Park, Sy., Jeong, K.C. et al. Development and evaluation of a multistage transfer learning framework for robust medical image analysis. Sci Rep 16, 8873 (2026). https://doi.org/10.1038/s41598-026-42157-z

Keywords: medical image analysis, transfer learning, deep learning, vision transformers, cancer cell imaging