Clear Sky Science

Design and implementation of a deep learning framework for automated crop classification and health diagnosis in precision agriculture

Smarter Fields for a Hungry World

Feeding a growing global population means getting more food out of every field while wasting less water, fertilizer, and labor. Yet farmers still spend countless hours walking their land, checking leaves and soil by eye. This paper introduces an automated way to watch over crops using flying drones, orbiting satellites, and buried sensors, all connected to a deep learning system that can spot trouble early and suggest quick action.

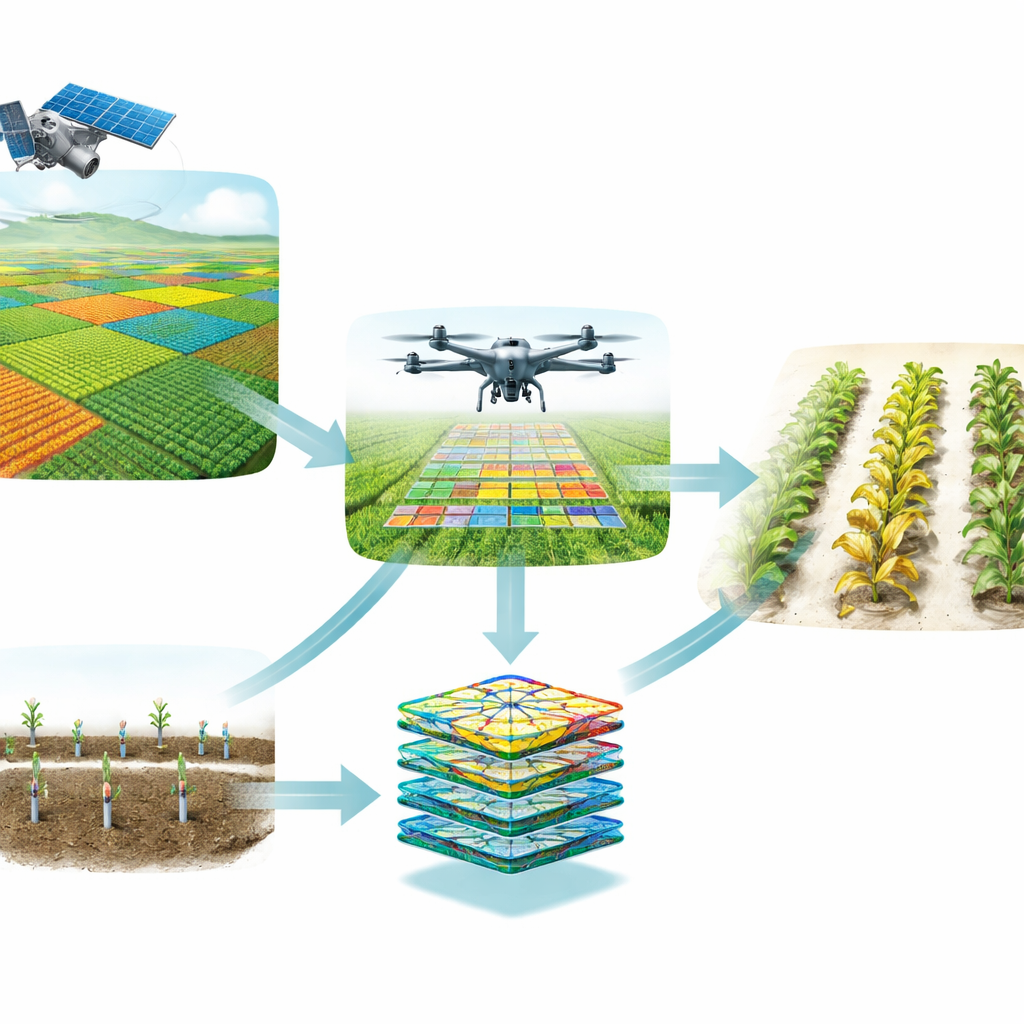

Bringing the Sky and Soil Together

Most high-tech farm tools look at only one piece of the puzzle: close-up photos of leaves or raw numbers from soil probes. The authors argue that this “siloed” view misses important clues. Their framework instead blends three vantage points. From space, satellite images reveal large-scale patterns, such as which parts of a field are under stress. From the air, drones capture detailed color and near-infrared views of individual plants. In the ground, Internet-connected sensors track moisture, nutrients, temperature, and other conditions. By aligning these data sources in both time and location, the system can connect what it sees on the leaves with what is happening in the soil and the surrounding environment.
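To make "aligning in time and location" concrete, here is a minimal Python sketch, not the authors' code. It assumes every data source is tagged with a shared grid-cell ID and a Unix timestamp; `SensorReading`, `align_to_image`, and the one-hour tolerance are hypothetical. For each drone or satellite image, it picks the soil reading from the same cell that is closest in time, and rejects readings that are too far away to describe the same moment.

```python
from __future__ import annotations

from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class SensorReading:
    cell_id: str      # hypothetical grid-cell ID shared by all data sources
    timestamp: float  # Unix time in seconds
    moisture: float   # soil moisture as a volume fraction (assumed unit)


def align_to_image(image_cell: str, image_time: float,
                   readings: list[SensorReading],
                   tolerance_s: float = 3600.0) -> SensorReading | None:
    """Return the reading from the same cell closest in time to the image
    capture, or None if none lies within tolerance_s seconds."""
    same_cell = sorted((r for r in readings if r.cell_id == image_cell),
                       key=lambda r: r.timestamp)
    if not same_cell:
        return None
    times = [r.timestamp for r in same_cell]
    i = bisect_left(times, image_time)
    # Only the neighbours on either side of the capture time can be closest.
    candidates = [same_cell[j] for j in (i - 1, i) if 0 <= j < len(same_cell)]
    best = min(candidates, key=lambda r: abs(r.timestamp - image_time))
    return best if abs(best.timestamp - image_time) <= tolerance_s else None


readings = [
    SensorReading("A1", 1000.0, 0.31),
    SensorReading("A1", 4000.0, 0.28),
    SensorReading("B2", 1100.0, 0.40),
]
match = align_to_image("A1", 3500.0, readings)
print(match.moisture if match else "no reading in window")
```

A real pipeline would also reproject satellite pixels and drone tiles onto the same grid before this step; the sketch assumes that has already happened.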

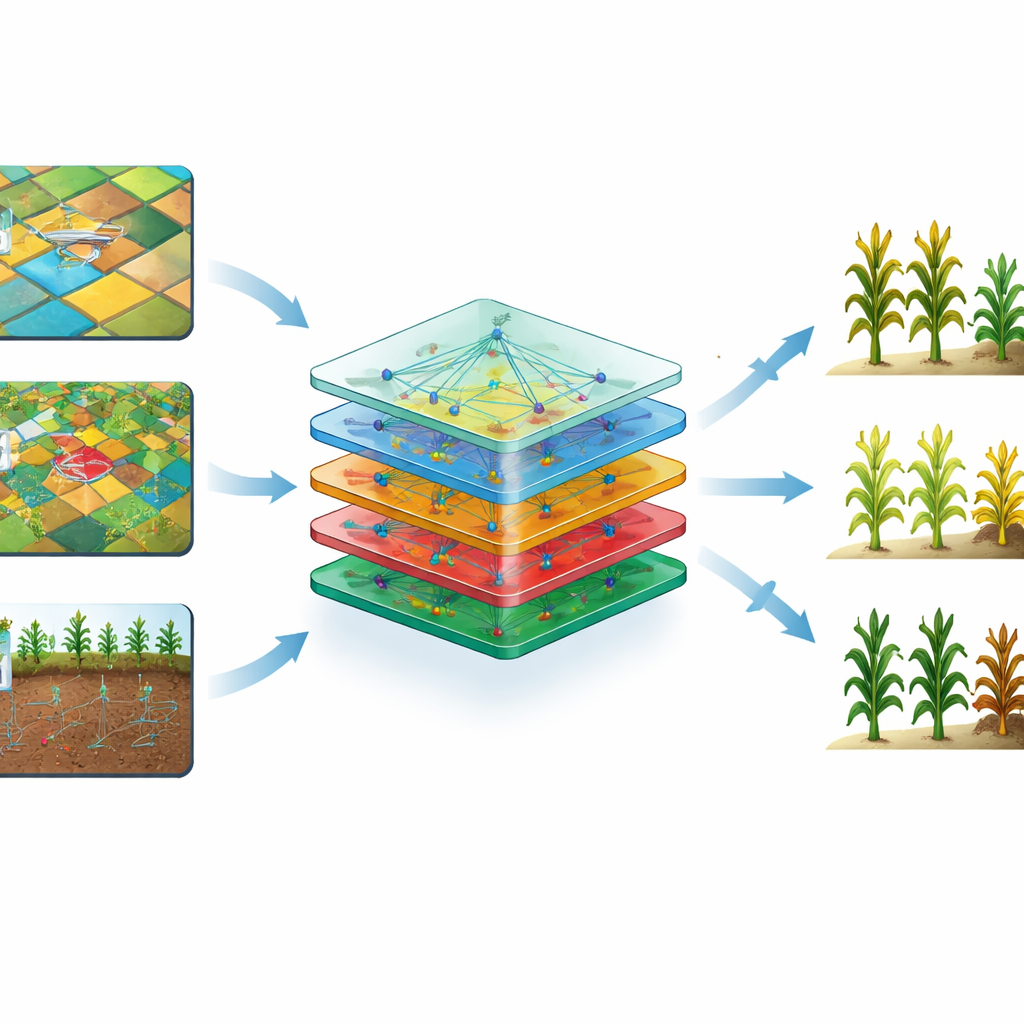

How the Digital Crop Doctor Learns

The heart of the framework is a deep learning model trained to recognize crop types and health conditions. First, all incoming data are cleaned and standardized: cloudy satellite scenes are normalized, drone images are resized and adjusted for changing light, and gaps in weather records are filled in. The system also augments the image data by rotating and flipping pictures so that the model learns to ignore camera angle and focus on real plant features. Then a specialized image-analysis network, known as a convolutional neural network, pulls out patterns such as leaf texture, color changes, and lesion shapes, while additional layers process the numerical sensor readings. An “attention” mechanism helps the model concentrate on the most informative regions—like a patch of spotted leaves—while tuning out background soil or sky.
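Two of the steps above can be illustrated in plain Python. The sketch below is written for illustration rather than taken from the paper: it generates the eight rotated and flipped copies of an image (represented here as a nested list of pixel values) and shows softmax attention pooling, where each image region gets a score and the pooled feature is a weighted average that favors high-scoring regions. In a real network the scores come from learned layers; here they are passed in directly, and all function names are assumptions.

```python
import math

Grid = list[list[float]]


def rotate90(img: Grid) -> Grid:
    """Rotate a 2-D grid 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def hflip(img: Grid) -> Grid:
    """Mirror a 2-D grid left to right."""
    return [row[::-1] for row in img]


def augment(img: Grid) -> list[Grid]:
    """Return the 8 rotation/flip variants of one image."""
    variants, current = [], img
    for _ in range(4):
        variants.append(current)
        variants.append(hflip(current))
        current = rotate90(current)
    return variants


def softmax(scores: list[float]) -> list[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(features: list[list[float]],
                   scores: list[float]) -> tuple[list[float], list[float]]:
    """Weight each region's feature vector by softmax(score) and sum them."""
    weights = softmax(scores)
    dim = len(features[0])
    pooled = [sum(w * f[k] for w, f in zip(weights, features))
              for k in range(dim)]
    return pooled, weights


# Three regions: spotted leaf, healthy leaf, bare soil (toy 2-D features).
regions = [[0.9, 0.1], [0.2, 0.8], [0.0, 0.0]]
pooled, weights = attention_pool(regions, scores=[3.0, 1.0, -2.0])
print(len(augment([[1.0, 2.0], [3.0, 4.0]])), [round(w, 3) for w in weights])
```

Because the weights sum to one, a high score on the spotted-leaf region pulls the pooled feature toward that region while the soil region contributes almost nothing.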

From Raw Data to Real-Time Decisions

Once trained, the model works like an always-on crop doctor. Multi-source data are streamed into the system and fused into a single internal representation. The attention-guided layers weigh this combined evidence using patterns learned from thousands of labeled training examples, and the final classification block then decides whether a plant is healthy or shows signs of disease, pest damage, or stress. Instead of simply labeling a field as good or bad, the framework links its visual diagnosis to current soil moisture and nutrient levels. That combination allows it to prioritize alerts: for example, a disease pattern paired with humid conditions might trigger a high-urgency warning, prompting immediate, targeted spraying rather than blanket treatment across the entire farm.
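The prioritization idea can be sketched as a small rule table layered on top of the classifier's output. Everything here is hypothetical: the label names, the 0.5 confidence cut-off, the 80 percent humidity and 0.15 soil-moisture thresholds, and the dry-soil rule are illustrative assumptions. Only the "disease plus humid conditions means high urgency" case comes from the text.

```python
from dataclasses import dataclass
from enum import IntEnum


class Urgency(IntEnum):
    NONE = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Diagnosis:
    label: str         # illustrative labels: "healthy", "disease", "pest", "stress"
    confidence: float  # classifier probability for that label, 0..1


def prioritize(diag: Diagnosis, relative_humidity: float, soil_moisture: float,
               humid_threshold: float = 80.0,
               dry_threshold: float = 0.15) -> Urgency:
    """Map a diagnosis plus current field conditions to an alert level.
    All thresholds are hypothetical placeholders, not values from the paper."""
    if diag.label == "healthy":
        return Urgency.NONE
    if diag.confidence < 0.5:
        return Urgency.LOW  # uncertain call: queue for a human check
    if diag.label == "disease" and relative_humidity >= humid_threshold:
        return Urgency.HIGH  # the text's example: disease plus humid conditions
    if diag.label == "stress" and soil_moisture <= dry_threshold:
        return Urgency.HIGH  # assumed rule: visible stress plus dry soil
    return Urgency.MEDIUM


print(prioritize(Diagnosis("disease", 0.92), relative_humidity=88.0,
                 soil_moisture=0.30).name)
```

Keeping triage rules outside the neural network makes them easy for an agronomist to audit and retune for local crops and climate.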

Putting the System to the Test

To see whether this approach works beyond theory, the authors trained and evaluated their framework on a public precision-agriculture dataset that includes satellite imagery, drone photos, and ground-sensor readings for key staple crops: maize, potato, and wheat. They split the data into separate training, validation, and test sets, so that overfitting could be detected and final accuracy measured on examples the model had never seen, and compared their model against standard deep learning and traditional machine learning techniques. Their multi-modal system consistently achieved more than 90 percent accuracy in identifying crop type and health status, while also making faster predictions than baseline models. Importantly, when one data source was degraded, such as drone images affected by shadows, the system could still maintain high accuracy by leaning more heavily on soil and satellite information.
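A held-out test set only gives an honest accuracy estimate if no test sample leaks into training. The sketch below is a generic stratified split in Python, not the authors' procedure: the 70/15/15 ratios, the seed, and the function name are assumptions. Shuffling within each label keeps the crop and health classes in similar proportions across all three sets.

```python
import random
from collections import defaultdict


def stratified_split(samples, labels, fractions=(0.70, 0.15, 0.15), seed=42):
    """Split samples into train/validation/test sets, shuffling within each
    label so class proportions stay similar across the three sets."""
    if len(samples) != len(labels):
        raise ValueError("samples and labels must have the same length")
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    by_label = defaultdict(list)
    for sample, label in zip(samples, labels):
        by_label[label].append(sample)
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    train, val, test = [], [], []
    for label in sorted(by_label):  # sorted so iteration order is stable
        group = list(by_label[label])
        rng.shuffle(group)
        n_train = round(len(group) * fractions[0])
        n_val = round(len(group) * fractions[1])
        train += group[:n_train]
        val += group[n_train:n_train + n_val]
        test += group[n_train + n_val:]  # remainder goes to test
    return train, val, test


ids = list(range(200))
crops = ["maize"] * 100 + ["wheat"] * 100
train, val, test = stratified_split(ids, crops)
print(len(train), len(val), len(test))
```

With satellite and drone imagery, nearby tiles from the same field can be nearly identical, so splitting by field or region rather than by individual sample is often a safer choice; the sketch splits per sample for simplicity.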

What This Means for Farmers

The study’s bottom line is that combining views from sky to soil allows computers to assess crop health more reliably than either human scouts or single-sensor tools alone. For farmers, that could mean earlier warnings of disease outbreaks, more precise use of water and chemicals, lower labor costs, and ultimately higher yields with less environmental impact. While the current system still depends on good connectivity to send data to the cloud, future versions could run directly on drones or edge devices in the field. If realized at scale, such smart, multi-eyed guardians of the farm could become a cornerstone of truly sustainable, data-driven agriculture.

Citation: Pal, A.K., Patro, B.D.K. & Chaube, S. Design and implementation of a deep learning framework for automated crop classification and health diagnosis in precision agriculture. Sci Rep 16, 11436 (2026). https://doi.org/10.1038/s41598-026-42151-5

Keywords: precision agriculture, crop disease detection, deep learning, drone and satellite imaging, smart farming