Clear Sky Science

GWKNN: an enhanced k-nearest neighbor algorithm with G metric reconstruction and Grey Wolf Optimizer

Smarter Pattern Recognition for Today’s Data Deluge

From medical scans to bank transactions, modern life generates oceans of data. Much of this information has to be automatically sorted into categories: healthy or sick, fraudulent or normal, spam or genuine. A classic workhorse for this kind of task is the k-nearest neighbors (KNN) algorithm, which labels a new case by looking at the most similar past examples. Yet as datasets become bigger, more complex, and more imbalanced, this simple idea starts to crack. The paper introduces GWKNN, a revamped version of KNN designed to make smarter use of distances between points and to treat rare but important cases more fairly.

Why Simple Similarity Falls Short

Conventional KNN assumes that all features of a data point contribute equally and measures similarity with the standard straight-line (Euclidean) distance. That can work well when data are low-dimensional and neatly separated, but real-world data are often high-dimensional, noisy, and a mix of different types of information. In those cases, the usual distance can be misleading, causing the algorithm to pick unhelpful neighbors. At the same time, many datasets are imbalanced: common categories dominate, while rare but crucial categories, such as an early-stage disease, are underrepresented. When KNN votes among nearby examples, the majority class tends to drown out these minority cases, leading to biased and sometimes dangerous decisions.
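To make the problem concrete, here is a minimal sketch of plain KNN with straight-line distance and an unweighted majority vote. The function names and the toy data are illustrative assumptions, not taken from the paper. In the toy data, the first feature separates the two classes while the second is noise on a much larger scale, so the noise decides which neighbor looks closest.

```python
import math
from collections import Counter

def euclidean(a, b):
    """Straight-line distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def knn_predict(train_X, train_y, query, k=3):
    """Label `query` by unweighted majority vote among its k nearest points."""
    # Rank training indices by distance to the query and keep the k closest.
    order = sorted(range(len(train_X)), key=lambda i: euclidean(train_X[i], query))
    votes = Counter(train_y[i] for i in order[:k])
    return votes.most_common(1)[0][0]

# Toy data (hypothetical): feature 0 is informative, feature 1 is large-scale noise.
train_X = [(0.0, 50.0), (0.2, 10.0), (1.0, 48.0), (1.2, 12.0)]
train_y = ["healthy", "healthy", "sick", "sick"]

# The query's informative feature (0.1) matches the "healthy" group,
# but the noisy feature pulls it toward a "sick" example.
prediction = knn_predict(train_X, train_y, (0.1, 47.0), k=1)
print(prediction)  # prints "sick"
```

Because every feature counts equally, the query ends up closest to a "sick" example even though its informative feature sits with the "healthy" group.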

Teaching the Algorithm a Better Sense of Distance

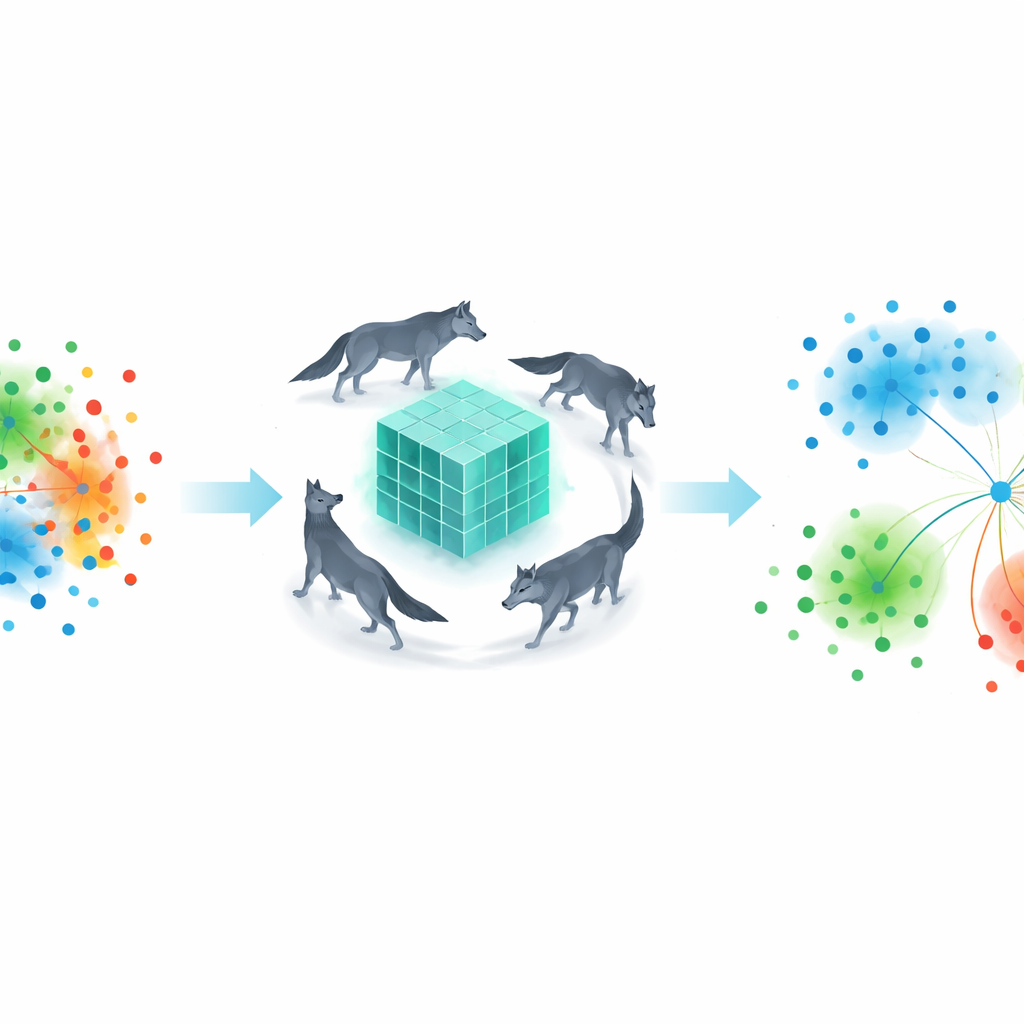

The first major innovation in GWKNN is a learned distance measure. Instead of locking in the usual straight-line rule, the authors let the algorithm discover how far apart data points should be in a way that best separates the categories. They encode this as a flexible “G metric” that reshapes the space so that informative features matter more and redundant ones matter less. To tune this metric, the method borrows inspiration from the hunting behavior of grey wolves. A swarm-intelligence procedure, called the Grey Wolf Optimizer, explores many possible ways to stretch and squeeze the data space, keeping those that reduce classification errors while maintaining mathematical stability. Over many iterations, the virtual “wolves” converge on a distance rule that makes similar points cluster together more reliably, even in high-dimensional, tangled datasets.
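The idea can be sketched in a few dozen lines, under strong simplifying assumptions. The sketch below is not the authors' implementation: it replaces the G metric with one non-negative weight per feature (a diagonal metric), scores each candidate by leave-one-out 1-nearest-neighbor error, and uses a textbook Grey Wolf Optimizer update in which every wolf moves toward the average pull of the three best wolves. All names, the toy data, and the parameter defaults are hypothetical.

```python
import random

def weighted_sq_dist(a, b, w):
    """Squared distance under a diagonal metric: sum_j w_j * (a_j - b_j)^2."""
    return sum(wj * (x - y) ** 2 for wj, x, y in zip(w, a, b))

def loo_error(w, X, y):
    """Leave-one-out 1-NN error rate on (X, y) using feature weights w."""
    mistakes = 0
    for i in range(len(X)):
        others = [j for j in range(len(X)) if j != i]
        nearest = min(others, key=lambda j: weighted_sq_dist(X[i], X[j], w))
        mistakes += y[nearest] != y[i]
    return mistakes / len(X)

def gwo_feature_weights(X, y, n_wolves=8, n_iter=30, seed=0):
    """Search for feature weights in [0, 1] that lower the leave-one-out error."""
    rng = random.Random(seed)
    dim = len(X[0])
    wolves = [[rng.random() for _ in range(dim)] for _ in range(n_wolves)]
    wolves[0] = [1.0] * dim  # one wolf starts at the plain Euclidean metric
    best_w, best_err = None, float("inf")
    for t in range(n_iter):
        ranked = sorted(wolves, key=lambda w: loo_error(w, X, y))
        leaders = [list(w) for w in ranked[:3]]  # alpha, beta, delta (copies)
        err = loo_error(leaders[0], X, y)
        if err < best_err:
            best_w, best_err = leaders[0], err
        a = 2.0 * (1 - t / n_iter)  # exploration factor shrinks over time
        for w in wolves:
            for d in range(dim):
                pulls = []
                for leader in leaders:
                    A = 2 * a * rng.random() - a
                    C = 2 * rng.random()
                    D = abs(C * leader[d] - w[d])
                    pulls.append(leader[d] - A * D)
                # Average the three pulls, then clip so weights stay in [0, 1].
                w[d] = min(1.0, max(0.0, sum(pulls) / 3))
    return best_w, best_err

# Toy data (hypothetical): feature 0 separates the classes, feature 1 is noise.
X = [(0.0, 5.0), (0.2, 1.0), (0.1, 3.0), (1.0, 4.8), (1.2, 1.2), (1.1, 3.1)]
y = [0, 0, 0, 1, 1, 1]
baseline_err = loo_error([1.0, 1.0], X, y)
learned_w, learned_err = gwo_feature_weights(X, y)
print(baseline_err, learned_err, learned_w)
```

Clipping the weights to a non-negative range is a crude stand-in for the stability requirement described above. Because one wolf starts at the plain Euclidean metric and the best candidate seen so far is kept, the returned error can never be worse than the unweighted baseline on the training data.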

Giving Rare Cases a Louder Voice

The second improvement targets voting bias. Standard KNN simply counts how many of the k neighbors belong to each class and picks the majority. GWKNN instead weighs each vote according to how common that class is in the overall training data. Classes that appear less often are given stronger weights; very frequent classes get weaker ones. A small smoothing term prevents extremely rare categories from overwhelming the decision. This way, if a new data point is near a handful of minority-class examples and many majority-class examples, the minority signals are not automatically swamped. The scheme is simple to compute but has a powerful effect: it pushes the classifier to pay more attention to rare but meaningful patterns, improving fairness and recall for minority classes.
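A small sketch shows how frequency-based weights can flip a vote. The summary does not give the exact weighting formula, so the inverse-frequency form `1 / (count + smoothing)` used here is an assumption chosen for illustration, as are the function names and the toy labels.

```python
from collections import Counter

def class_weights(train_y, smoothing=1.0):
    """Assumed form: weight_c = 1 / (count_c + smoothing); rarer classes weigh more."""
    counts = Counter(train_y)
    return {label: 1.0 / (n + smoothing) for label, n in counts.items()}

def weighted_vote(neighbor_labels, weights):
    """Sum each neighbor's class weight and return the highest-scoring class."""
    scores = Counter()
    for label in neighbor_labels:
        scores[label] += weights[label]
    return max(scores, key=scores.get)

# Hypothetical training labels: 8 "normal" cases, 4 "fraud" cases.
train_y = ["normal"] * 8 + ["fraud"] * 4
# Hypothetical 5 nearest neighbors of a new case: 3 majority, 2 minority.
neighbors = ["normal", "normal", "normal", "fraud", "fraud"]

weights = class_weights(train_y)
plain = Counter(neighbors).most_common(1)[0][0]
weighted = weighted_vote(neighbors, weights)
print(plain, weighted)  # prints "normal fraud"
```

With these numbers, "normal" scores 3 × 1/9 ≈ 0.33 and "fraud" scores 2 × 1/5 = 0.40, so the weighted vote picks the minority class while the plain count does not. The smoothing term keeps the weight of a class with only one or two examples from growing without bound.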

Putting the New Method to the Test

To see whether GWKNN truly helps in practice, the authors evaluated it on 12 benchmark datasets from the well-known UCI repository. These collections span financial data, medical measurements, handwriting, plant seeds, and several high-dimensional cancer and gene-expression datasets, with both two-class and multi-class problems. They compared four versions of KNN: the plain baseline, a version with only the new distance metric, a version with only the new voting weights, and the full GWKNN combining both ideas. They also pitted GWKNN against seven widely used classifiers, including support vector machines, decision trees, random forests, logistic regression, naive Bayes, and a neural network. Across repeated train–test splits, they tracked not only average accuracy but also how much results fluctuated.
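The evaluation protocol of repeated train–test splits can be sketched as follows. This is a generic illustration rather than the authors' experimental code: the 70/30 split, the number of repeats, the 1-nearest-neighbor stand-in classifier, and the synthetic data are all assumptions.

```python
import random
import statistics

def one_nn(train, query):
    """Return the label of the training row whose features are closest to `query`."""
    nearest = min(train, key=lambda row: sum((a - b) ** 2 for a, b in zip(row[0], query)))
    return nearest[1]

def repeated_holdout(data, n_repeats=10, test_frac=0.3, seed=0):
    """Shuffle, split, and score several times; report mean and spread of accuracy."""
    rng = random.Random(seed)
    accuracies = []
    for _ in range(n_repeats):
        rows = data[:]
        rng.shuffle(rows)
        n_test = max(1, int(len(rows) * test_frac))
        test, train = rows[:n_test], rows[n_test:]
        correct = sum(one_nn(train, features) == label for features, label in test)
        accuracies.append(correct / n_test)
    return statistics.mean(accuracies), statistics.stdev(accuracies)

# Synthetic, well-separated data (hypothetical): two clusters along feature 0.
data = [((i * 0.1, 0.0), 0) for i in range(10)] + [((5 + i * 0.1, 0.0), 1) for i in range(10)]
mean_acc, std_acc = repeated_holdout(data)
print(mean_acc, std_acc)  # prints "1.0 0.0"
```

On this deliberately easy data every split is classified perfectly, so the spread is zero. On real datasets the standard deviation shows how sensitive a method is to which examples happen to land in the training set.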

Results: More Accurate and More Consistent

The combined GWKNN approach came out on top or tied for best performance on most datasets, particularly on those with many features and uneven class sizes. On relatively simple tasks, all methods performed well and the gains were modest, but GWKNN still tended to slightly improve accuracy and reduce variability. On more difficult gene-expression datasets with thousands of features, the advantages were clearer: the learned distance metric helped the algorithm form more meaningful neighborhoods, and the weighted voting improved recognition of underrepresented classes. Statistical tests across all datasets confirmed that GWKNN’s rankings were significantly better than those of standard KNN and some classical models, indicating that its improvements are not just lucky fluctuations but robust across different data conditions.

What This Means for Everyday Data Decisions

For non-specialists, the takeaway is that GWKNN refines a very intuitive idea, “look at similar past cases,” to better match the messy reality of modern data. By learning how to measure similarity in a data-driven way and by boosting the influence of rare categories during voting, the method aims to be both more accurate and fairer. While this extra sophistication comes with added computational cost, especially for very large and high-dimensional datasets, GWKNN shows strong promise for tasks where correct classification of minority cases really matters, such as early disease detection or fraud spotting. The work illustrates how classic algorithms can be upgraded with insights from optimization and fairness to keep pace with the scale and complexity of today’s information.

Citation: Guo, Z., Liu, G., Liu, W. et al. GWKNN: an enhanced k-nearest neighbor algorithm with G metric reconstruction and Grey Wolf Optimizer. Sci Rep 16, 8857 (2026). https://doi.org/10.1038/s41598-026-41851-2

Keywords: k-nearest neighbors, distance metric learning, class imbalance, swarm intelligence, data classification