Clear Sky Science

An explainable vision transformer model with transfer learning for accurate bean leaf disease classification

Why Sick Bean Leaves Matter to Everyone

Beans are a staple food for hundreds of millions of people, especially in developing countries, providing affordable protein and fiber. Yet two common leaf diseases—Angular Leaf Spot and Bean Rust—can quietly strip fields of their yield, threatening both diets and farmer incomes. This study explores how a new kind of artificial intelligence can spot these diseases early and, crucially, show farmers exactly what it sees, turning a mysterious black box into a tool they can understand and trust.

Hidden Threats on Everyday Leaves

Bean plants are constantly under attack from fungal invaders that scar their leaves, reduce photosynthesis, and lead to smaller, poorer-quality harvests. Traditionally, experts walk the fields to check for trouble, but this process is slow, subjective, and unrealistic at large scales. Meanwhile, many modern AI systems that analyze plant photos can be stunningly accurate, yet remain opaque to users: they deliver a disease label without any explanation. For farmers making high-stakes decisions about spraying, replanting, or harvesting, blind trust in a silent algorithm is a risky proposition.

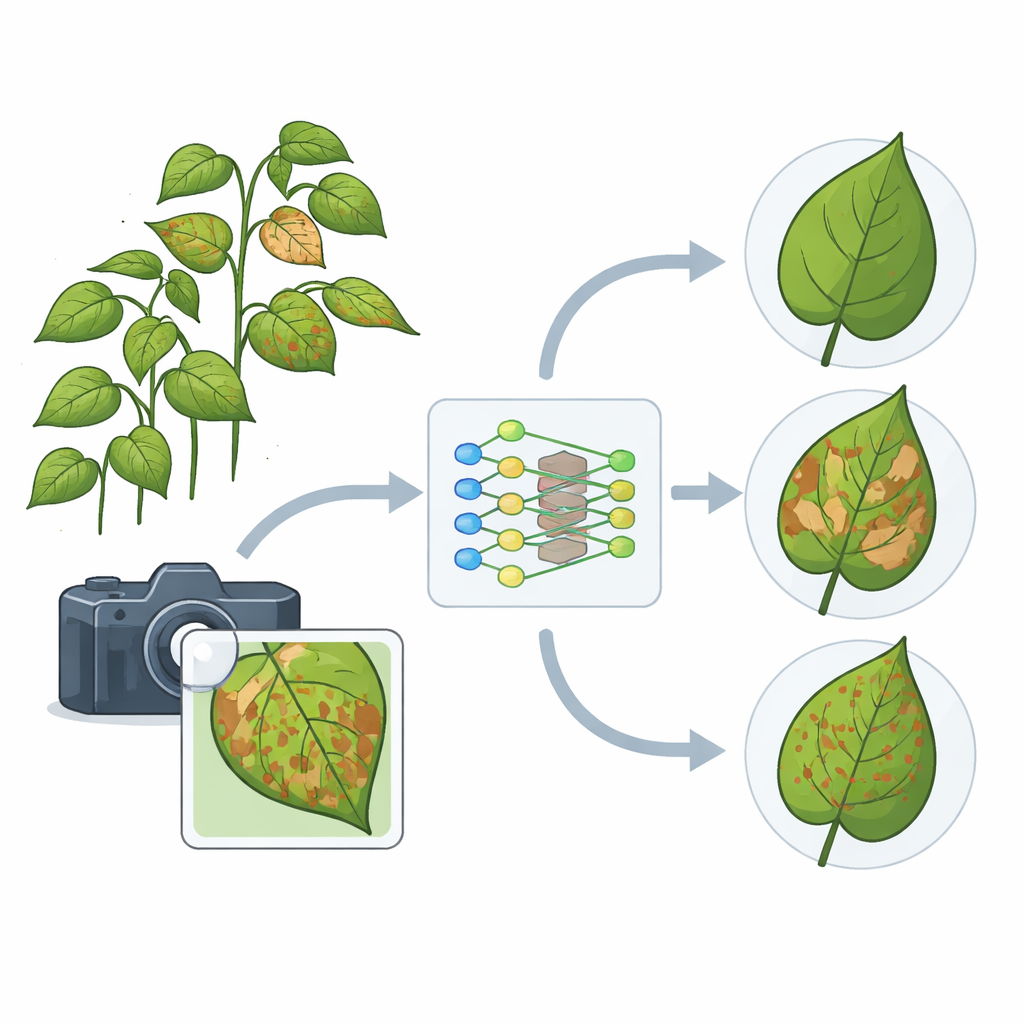

A Smarter Way to Read Leaf Images

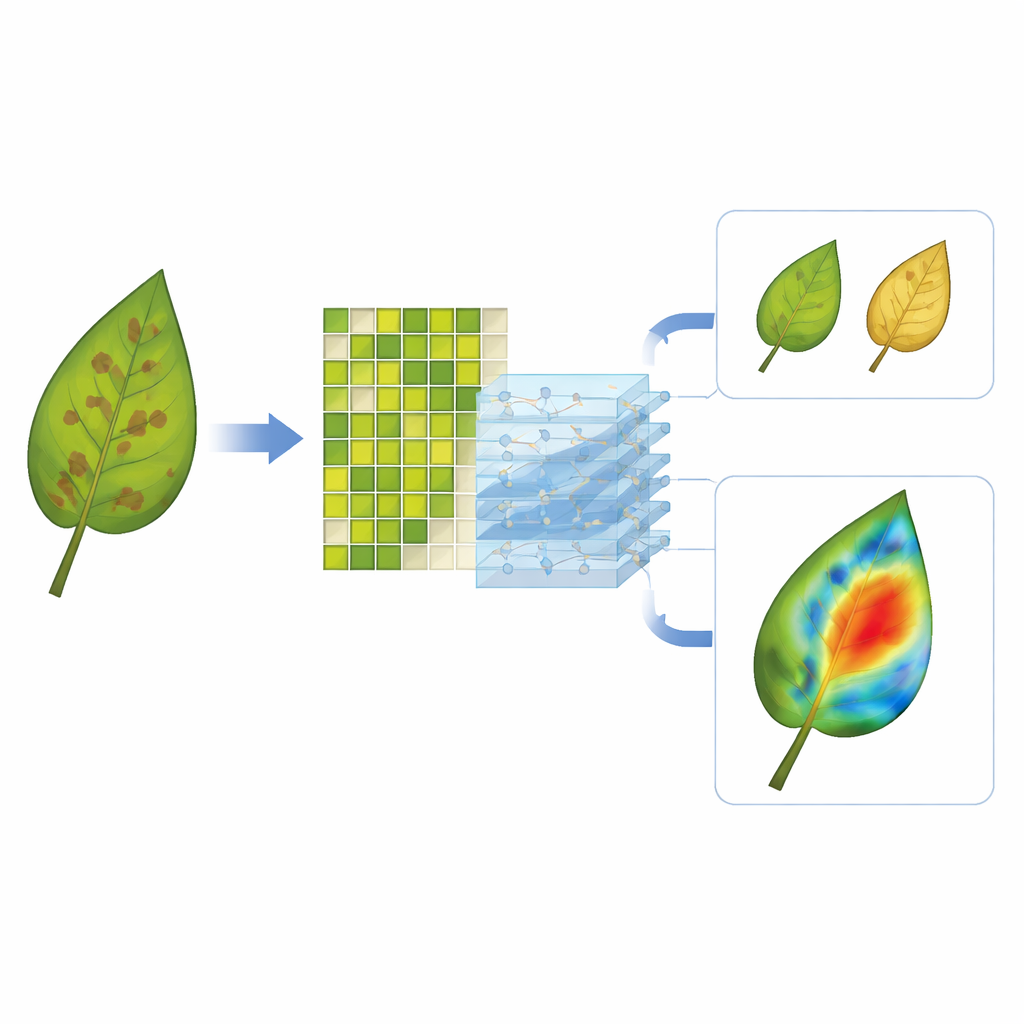

The researchers propose an automated diagnosis system built on a “vision transformer,” a relatively new family of image models that has been reshaping computer vision. Instead of scanning an image with small sliding filters, this model chops a leaf photo into many small patches and learns how all of these patches relate to one another at once. That global view helps it detect subtle, scattered disease signs that older methods might miss. To overcome the usual need for enormous training sets, the team starts from a model already trained on millions of general images and then fine-tunes its final layers on bean leaves, a strategy known as transfer learning.
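The patch-splitting step described above can be sketched in a few lines of NumPy. This is only an illustration: the 224×224 input size and 16×16 patch size are assumptions borrowed from common vision transformer configurations, not values stated in the summary, and the random array stands in for a real leaf photo.

```python
import numpy as np

def image_to_patches(image: np.ndarray, patch_size: int) -> np.ndarray:
    """Split an (H, W, C) image into flattened, non-overlapping square patches."""
    height, width, channels = image.shape
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be divisible by patch_size")
    rows, cols = height // patch_size, width // patch_size
    # Split each spatial axis into (block index, offset inside the block).
    grid = image.reshape(rows, patch_size, cols, patch_size, channels)
    # Bring the two block indices to the front: (rows, cols, patch, patch, C).
    grid = grid.transpose(0, 2, 1, 3, 4)
    # One row per patch, in reading order (left to right, top to bottom).
    return grid.reshape(rows * cols, patch_size * patch_size * channels)

# Hypothetical stand-in for a 224x224 RGB leaf photo (assumed size).
rng = np.random.default_rng(0)
leaf = rng.random((224, 224, 3))

patches = image_to_patches(leaf, patch_size=16)  # assumed patch size
print(patches.shape)  # (196, 768)
```

Each of the 196 rows is one patch. A transformer would project these rows into embeddings and let every patch attend to every other, which is what gives it the global view of the leaf.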

Turning Dark Boxes Into Glass Boxes

What makes this system stand out is not only how well it classifies leaves as healthy, Angular Leaf Spot, or Bean Rust, but how clearly it shows its work. The authors integrate an explainability technique called GradCAM++, which turns the model’s internal signals into a heatmap over the original photo. Bright regions on the leaf correspond to the spots and pustules that most influenced the decision. For diseased leaves, the model’s attention locks onto the characteristic lesions; for healthy leaves, it distributes attention broadly rather than latching onto random background textures. This creates a visual feedback loop in which agronomists and farmers can verify that the model is focusing on real symptoms rather than soil, fingers, or camera artifacts.
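To make the heatmap idea concrete, here is a minimal NumPy sketch of the Grad-CAM++ weighting step. It is not the authors' implementation. It assumes the feature maps and their gradients have already been extracted from the model and, for a vision transformer, that the patch tokens have been reshaped into a 2D grid. The tiny 4×4 grid and its values are invented for illustration.

```python
import numpy as np

def grad_cam_plus_plus(activations: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """Return an (H, W) heatmap in [0, 1] from (K, H, W) activations and gradients.

    `gradients` holds d(class score)/d(activation). The closed-form weights
    assume the score passes through an exponential, the simplification
    commonly used for Grad-CAM++.
    """
    grads_sq = gradients ** 2
    grads_cu = grads_sq * gradients
    # Per-channel sum of activations, broadcast over the spatial grid.
    act_sum = activations.sum(axis=(1, 2), keepdims=True)
    denom = 2.0 * grads_sq + act_sum * grads_cu
    denom = np.where(denom != 0.0, denom, 1.0)  # guard against division by zero
    alpha = grads_sq / denom
    # Only positive gradients contribute to a channel's importance weight.
    weights = (alpha * np.maximum(gradients, 0.0)).sum(axis=(1, 2))
    # Weighted sum of feature maps, keeping only positive evidence.
    cam = np.maximum((weights[:, None, None] * activations).sum(axis=0), 0.0)
    return cam / (cam.max() + 1e-8)

# Invented example: channel 0 fires on one "lesion" cell and supports the class;
# channel 1 fires everywhere but argues against the class.
activations = np.full((2, 4, 4), 0.2)
activations[0, 1, 2] = 1.0
activations[1] = 1.0
gradients = np.empty((2, 4, 4))
gradients[0] = 0.5
gradients[1] = -0.5

heatmap = grad_cam_plus_plus(activations, gradients)
peak = tuple(int(i) for i in np.unravel_index(heatmap.argmax(), heatmap.shape))
print(peak)  # (1, 2)
```

In practice the coarse heatmap would be upsampled to the photo's resolution and overlaid on the leaf so that the bright cell lines up with the lesion.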

Putting the System to the Test

To gauge performance, the team uses a public “I-Bean” dataset, originally assembled in Ugandan fields and labeled by plant health experts. They greatly expand the training portion by rotating, flipping, and color-shifting images to mimic different camera angles and lighting conditions. After fine-tuning the model on this enriched dataset and keeping its core feature extractor fixed, they evaluate it on an untouched test set. The system reaches about 97.5 percent accuracy, with similarly high scores for precision, recall, and a combined F1 measure. Confusion between the three leaf states is rare, suggesting that the model reliably separates healthy plants from each disease type even when their visual differences are subtle.
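For readers unfamiliar with these scores, the sketch below shows how accuracy, precision, recall, and F1 are derived from true and predicted labels. The twelve labels are made up for illustration and are not the study's results. The class names and the `classification_report` helper are likewise hypothetical.

```python
from collections import Counter

CLASSES = ["healthy", "angular_leaf_spot", "bean_rust"]

def classification_report(y_true, y_pred, classes=CLASSES):
    """Compute overall accuracy and per-class precision, recall, and F1."""
    # Count every (true label, predicted label) pair: a sparse confusion matrix.
    pairs = Counter(zip(y_true, y_pred))
    report = {}
    for cls in classes:
        tp = pairs[(cls, cls)]
        fp = sum(pairs[(other, cls)] for other in classes if other != cls)
        fn = sum(pairs[(cls, other)] for other in classes if other != cls)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        report[cls] = {"precision": precision, "recall": recall, "f1": f1}
    accuracy = sum(pairs[(cls, cls)] for cls in classes) / len(y_true)
    return accuracy, report

# Made-up labels: four leaves per class, with one rust leaf mistaken for leaf spot.
y_true = ["healthy"] * 4 + ["angular_leaf_spot"] * 4 + ["bean_rust"] * 4
y_pred = (["healthy"] * 4 + ["angular_leaf_spot"] * 4
          + ["angular_leaf_spot"] + ["bean_rust"] * 3)

accuracy, report = classification_report(y_true, y_pred)
print(f"accuracy = {accuracy:.3f}")
for cls, scores in report.items():
    print(cls, {name: round(value, 3) for name, value in scores.items()})
```

A single confused leaf lowers precision for the class it was wrongly assigned to and recall for the class it actually belonged to, which is why the article reports all of these scores and not accuracy alone.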

Steps Toward Smarter, Fairer Farming

Despite its strong performance, the approach still faces hurdles. Vision transformers are computationally heavy, making it hard to run them in real time on low-cost smartphones or drones without further optimization. The dataset, though augmented, covers only three leaf classes (healthy plus two diseases) and a limited range of lighting conditions. The authors outline future directions such as compressing the model so it can run on edge devices, expanding to more diseases and stress symptoms, and exploring lighter transformer variants. If these challenges are met, the result could be a portable, trustworthy assistant that helps farmers around the world spot disease early, protect yields, and manage resources more wisely, while always being able to show them exactly why it reached its conclusion.

Citation: Potharaju, S., Singh, A., Singh, D. et al. An explainable vision transformer model with transfer learning for accurate bean leaf disease classification. Sci Rep 16, 10402 (2026). https://doi.org/10.1038/s41598-026-41723-9

Keywords: bean leaf disease, plant disease detection, vision transformer, explainable AI, precision agriculture