Clear Sky Science

An optimized real-time qualitative HOG-based visual servoing system for autonomous wheelchair

Smarter Rides for People Who Need Them Most

For many people who rely on powered wheelchairs, steering through busy hallways or uneven sidewalks can be tiring, stressful, or even impossible without help. This paper presents a new way for a wheelchair to “see” its surroundings through a small camera and steer itself smoothly and safely in real time, even using inexpensive hardware. By carefully redesigning how visual information is processed and turned into motion, the author shows that intelligent wheelchair navigation can run on a tiny, low‑power computer while still keeping riders comfortable and in control.

Why Regular Wheelchairs Struggle with Real Life

Traditional powered wheelchairs are usually driven directly by a joystick or rely on simple bump sensors to avoid obstacles. Those approaches often fail in crowded, changing spaces such as hospital corridors, shopping malls, or city sidewalks. Users report that what they want most is smooth, predictable motion and reliability under different lighting conditions, not raw speed. At the same time, many high‑end robotics methods that use cameras and complex math need powerful computers, which are too costly and bulky for everyday wheelchairs. This gap—between what users need and what low‑cost hardware can handle—is exactly what the study aims to close.

Teaching a Wheelchair to Read Patterns Instead of Points

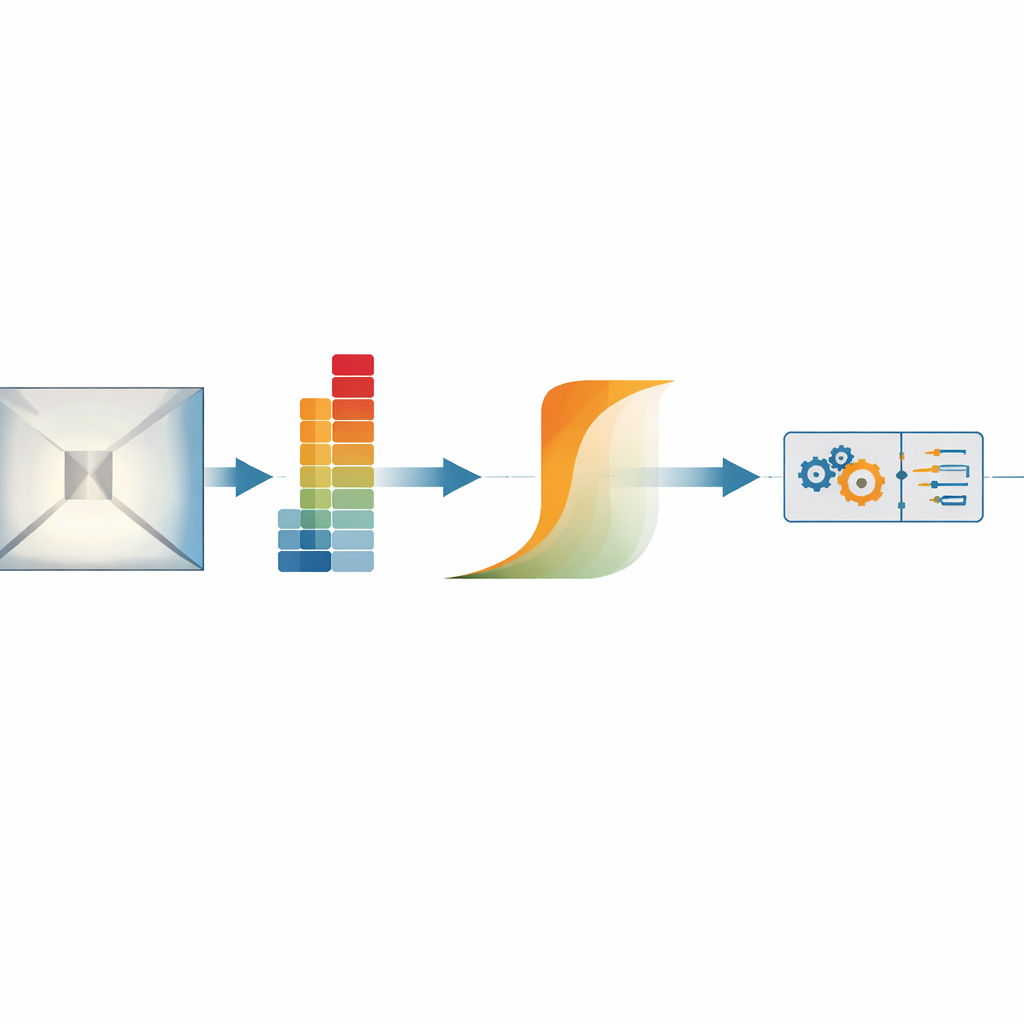

The system uses a camera mounted on the wheelchair to watch the scene ahead and represent it not as individual dots or landmarks, but as patterns of edges and lines known as histograms of oriented gradients (HOG). In simple terms, it looks at how brightness changes across the image and condenses that into a compact fingerprint of the scene. This kind of pattern description is naturally tolerant of changing light and partial blockages, such as a passerby briefly crossing the camera view. The wheelchair compares the current pattern to a “goal” pattern that corresponds to a desired view, such as the look of a straight corridor or a landmark at the end of a path, and then adjusts its motion to make the two match more closely.
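
To make the "fingerprint" idea concrete, here is a minimal sketch in Python with NumPy. It is not the paper's implementation: the function names, the single global histogram (real HOG descriptors usually work cell by cell), and the choice of 9 orientation bins are all illustrative assumptions.

```python
import numpy as np

def gradient_histogram(image, n_bins=9):
    """Global histogram of gradient orientations, weighted by gradient strength.

    Simplified stand-in for a HOG descriptor (assumption: one histogram
    for the whole image instead of one per cell).
    """
    img = image.astype(np.float64)
    gy, gx = np.gradient(img)               # brightness change along rows, columns
    magnitude = np.hypot(gx, gy)            # edge strength at each pixel
    # Unsigned edge orientation in degrees, folded into [0, 180]
    angle = np.degrees(np.arctan2(gy, gx)) % 180.0
    hist, _ = np.histogram(angle, bins=n_bins, range=(0.0, 180.0),
                           weights=magnitude)
    total = hist.sum()
    # Normalising makes the fingerprint insensitive to overall contrast
    return hist / total if total > 0 else hist

def visual_error(current_image, goal_image, n_bins=9):
    """Difference between the current fingerprint and the goal fingerprint."""
    return (gradient_histogram(current_image, n_bins)
            - gradient_histogram(goal_image, n_bins))
```

In this sketch, a uniform change in brightness gain scales every gradient by the same factor, so the normalised histogram stays the same. That is one simple way to see why such descriptors cope with lighting changes. A controller would then try to drive `visual_error` toward zero.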

Allowing Wiggle Room for Safer Control

Rather than insisting on a perfect match between the current and goal views, the method introduces a flexible “confidence zone.” If the wheelchair’s camera view is close enough to the goal, the control system deliberately relaxes, avoiding nervous back‑and‑forth corrections. This is achieved through a mathematical activation function that gradually ramps the steering response up or down depending on how large the visual error is, instead of simply pushing harder whenever any error exists. As a result, the wheelchair can handle partial occlusions and visual uncertainty without sudden jerks, maintaining smooth paths in tasks like corridor following or moving toward a sequence of visual targets.
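
The "confidence zone" can be illustrated with a small sketch. The summary does not give the exact activation function, so the smoothstep ramp, the thresholds `e_low` and `e_high`, and the `gain` below are assumptions chosen only to show the behaviour described: no correction for small errors, full correction for large ones, and a gradual transition in between.

```python
import numpy as np

def activation(error, e_low=0.05, e_high=0.20):
    """Weight in [0, 1]: 0 inside the confidence zone, 1 for large errors.

    Assumption: a smoothstep ramp between e_low and e_high; the paper's
    actual activation function may differ.
    """
    t = np.clip((np.abs(error) - e_low) / (e_high - e_low), 0.0, 1.0)
    return t * t * (3.0 - 2.0 * t)

def steering_command(error, gain=1.0, e_low=0.05, e_high=0.20):
    """Proportional correction, scaled by the activation weight."""
    return -gain * activation(error, e_low, e_high) * error
```

With these hypothetical thresholds, an error of 0.01 produces no steering at all, while an error of 0.5 produces the full proportional response. Because the weight changes gradually, the command has no sudden jump as the error crosses either threshold.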

Making Advanced Vision Work on a Tiny Computer

A major challenge is that these rich visual fingerprints are usually expensive to compute. The author tackles this by rewriting the calculations so they use efficient, “all‑at‑once” operations rather than slow nested loops, trimming unnecessary precision, and carefully organizing memory use. Running on a Raspberry Pi—a credit‑card‑sized computer often used in hobby projects—the improved software boosts processing speed from unusable levels (around one image every 12 seconds) to about five and a half images per second. The wheelchair’s motors receive commands at a much faster, steady rate, so the wheels move smoothly while the camera and vision system update in the background. Extra safety layers, including hardware brakes and a watchdog that halts the chair if commands stop arriving, are built in to suit real assistive use.
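
The difference between nested loops and "all-at-once" operations can be sketched as follows. This is an illustrative example rather than the author's code: the function names, the use of `np.bincount`, and the 32-bit float output (standing in for "trimming unnecessary precision") are assumptions.

```python
import numpy as np

def orientation_histogram_loop(bin_index, magnitude, n_bins=9):
    """Slow reference version: visit every pixel in Python loops."""
    hist = np.zeros(n_bins, dtype=np.float32)
    rows, cols = bin_index.shape
    for r in range(rows):
        for c in range(cols):
            hist[bin_index[r, c]] += magnitude[r, c]
    return hist

def orientation_histogram_vectorized(bin_index, magnitude, n_bins=9):
    """Same result in one call: NumPy sums the weights per bin internally."""
    hist = np.bincount(bin_index.ravel(), weights=magnitude.ravel(),
                       minlength=n_bins)
    return hist.astype(np.float32)   # reduced precision for the stored result
```

Both functions take an integer array of orientation-bin indices and a matching array of gradient magnitudes and return the same histogram up to rounding, but the second avoids per-pixel interpreter overhead. For scale, the figures quoted above (one image every 12 seconds versus about 5.5 images per second) amount to a speedup of roughly 5.5 × 12 = 66 times.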

From Lab Theory to Everyday Help

Through experiments in hallways, sidewalks, and controlled video tests, the system consistently steers the wheelchair from one visual goal to the next while gradually reducing its steering corrections as it nears each target. The camera’s pattern‑matching error steadily shrinks, confirming that the chair is visually lining itself up without losing track of important features along the way. In plain language, the study shows that a small, affordable computer and a simple camera are enough to give a powered wheelchair a steady, camera‑guided “autopilot” that respects comfort and safety. This opens the door to more accessible, camera‑based navigation aids for people with limited mobility, and lays the groundwork for future upgrades such as richer 3D perception and even smoother obstacle avoidance.

Citation: Hafez, A.H.A. An optimized real-time qualitative HOG-based visual servoing system for autonomous wheelchair. Sci Rep 16, 8688 (2026). https://doi.org/10.1038/s41598-026-41566-4

Keywords: autonomous wheelchair, assistive robotics, computer vision, visual navigation, real-time control