Clear Sky Science

Hybrid knowledge- and data-driven modelling for robust spike detection and sorting in human C-fiber microneurography

Listening In on the Nerves of Pain and Itch

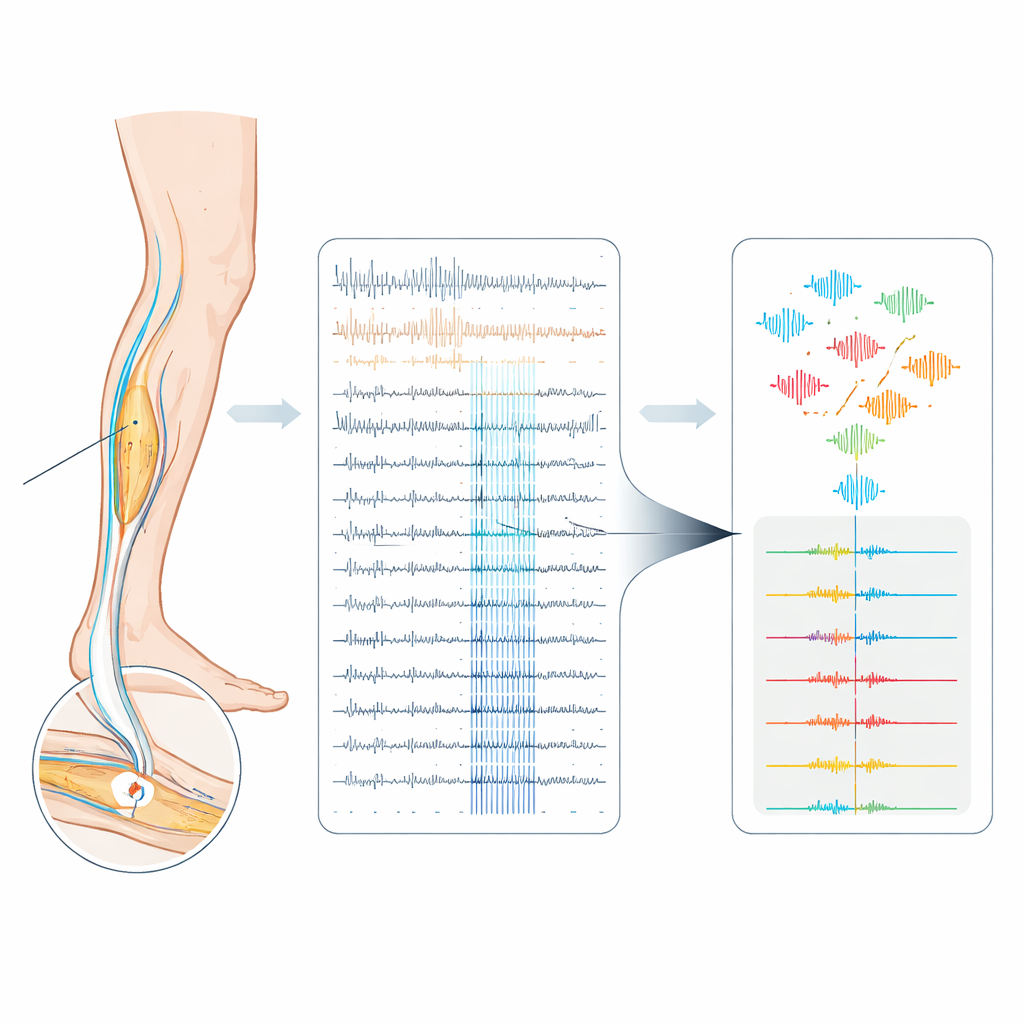

Our everyday experiences of pain and itch begin as tiny electrical pulses racing along slender nerve fibers in the skin. Scientists can eavesdrop on these signals in awake volunteers using a technique called microneurography, placing a hair-thin electrode into a nerve. But in these recordings, many nerve fibers talk at once, and their electrical “voices” sound almost identical. This paper introduces a new computer-based method to better separate and identify these overlapping signals, with the long-term goal of decoding how human nerves encode sensations like pain and itch.

Why Nerve Spikes Are So Hard to Tell Apart

Each sensory nerve fiber communicates with the brain through short electrical bursts called spikes. Not only the number of spikes, but also their precise timing and pattern, can change how a stimulus feels. Unfortunately, in human peripheral nerves the recorded spikes from different fibers often look nearly the same and are buried in noise. A single metal electrode usually picks up several fibers at once, and their spike shapes drift slowly over long experiments. Existing automatic methods for separating spikes were mostly designed for arrays with many electrodes, where spatial information helps. When applied to single-electrode recordings from human C-fibers—unmyelinated fibers crucial for pain and itch—these methods tend to be unreliable.

Using the Nerve’s Own Timing as a Guide

The authors build on a clever trick already used in microneurography called the “marking method.” During an experiment, the skin is given gentle electrical pulses at a low, steady rate. Each pulse reliably provokes one spike from each activated C-fiber after a fixed delay, so repeated responses from the same fiber form a vertical “track” when the data are plotted trial by trial. If a fiber has fired extra spikes just before the next pulse, its conduction slows slightly and the next response arrives later. This delay, known as activity‑dependent slowing, serves as a fingerprint of how active that single fiber has been. The new work extends this idea by redesigning the stimulation protocol so that not only the regular background pulses, but also extra pulses inserted between them, are treated as timing anchors. As a result, all electrically evoked spikes in the recording become precisely time‑locked and labeled, creating a rare “ground truth” dataset in a noisy human nerve.
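The latency "fingerprint" described above can be illustrated with a short Python sketch. It is only a toy version under stated assumptions, not the authors' implementation: the function name `flag_slowed_trials`, the use of the median as the baseline latency, the 0.5 ms threshold, and the latency values are all invented for illustration.

```python
from statistics import median

def flag_slowed_trials(latencies_ms, threshold_ms=0.5):
    """Return indices of trials whose response arrived later than usual.

    Assumptions (not from the paper): the baseline is the median latency
    across trials, and a trial counts as "slowed" when its latency exceeds
    that baseline by more than threshold_ms.
    """
    baseline = median(latencies_ms)
    return [i for i, lat in enumerate(latencies_ms)
            if lat - baseline > threshold_ms]

# Made-up latencies (ms) of one fiber's response on seven consecutive pulses.
# The jump at trial 3 and the gradual recovery mimic activity-dependent slowing.
latencies = [300.0, 300.1, 299.9, 301.2, 300.7, 300.3, 300.0]
slowed = flag_slowed_trials(latencies)
print(slowed)
```

In this toy example, the flagged trials mark the stretch of the recording in which the fiber has probably fired extra spikes between stimulation pulses.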

A Hybrid Path from Raw Noise to Clean Spike Trains

Armed with this ground truth, the team builds a semi‑automated analysis pipeline that mixes expert knowledge with machine learning. In the knowledge-driven stage, they first compute average spike templates for all visible tracks and choose the fiber with the largest, cleanest spike as the main target. They measure the usual delay of that fiber’s responses and look for intervals where the delay lengthens, signaling extra activity. Spike detection is then limited to these intervals, greatly shrinking the search space and reducing false alarms. In the data-driven stage, each detected waveform is converted into numerical features—either compact descriptors or the raw 3‑millisecond voltage snippet itself—and fed into several classifiers, including support vector machines and a popular boosted tree method called XGBoost. The models are trained on the reliably labeled spikes from the ground truth protocol and tuned by cross‑validation to find the best model–feature combination for each recording.
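Two steps of the knowledge-driven stage, averaging a spike template and restricting detection to intervals of interest, can be sketched in plain Python. This is a simplified illustration rather than the published pipeline: the function names, the `(start, end)` interval format, and all numbers are assumptions, and the machine-learning stage is left out entirely.

```python
def average_template(snippets):
    """Point-by-point mean of equally long waveform snippets."""
    n = len(snippets)
    return [sum(samples) / n for samples in zip(*snippets)]

def restrict_to_intervals(spike_times, intervals):
    """Keep only candidate spike times inside a (start, end) interval.

    The intervals stand in for the periods where the fiber's latency
    lengthened, i.e. where extra activity is expected (hypothetical format).
    """
    return [t for t in spike_times
            if any(start <= t <= end for start, end in intervals)]

# Two made-up three-sample snippets from the same track
template = average_template([[0.0, 2.0, 4.0], [2.0, 4.0, 6.0]])

# Made-up candidate spike times (s) and two intervals of interest
candidates = [0.5, 1.2, 3.4, 7.9]
kept = restrict_to_intervals(candidates, [(1.0, 2.0), (7.0, 8.0)])
print(template, kept)
```

Discarding candidates outside the intervals is what shrinks the search space: spikes that occur when the latency shows no sign of extra activity never reach the classifier.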

How Well the New Approach Performs

The authors test their pipeline on six challenging recordings from human volunteers, where signal quality and the number of active fibers vary. They compare their results to Spike2, a widely used commercial program that relies on template matching. Across datasets, no single machine‑learning recipe wins every time, but XGBoost using raw waveforms tends to give the highest median performance. Recordings with higher signal‑to‑noise ratios and fewer fibers sort best, while one particularly noisy dataset with very similar spike shapes remains essentially unsortable. Overall, the new pipeline achieves higher F1‑scores and markedly fewer false positives than Spike2, especially when attention is restricted to time intervals where physiological latency shifts indicate real activity. In a realistic example where an itch‑inducing chemical is injected into the skin, the pipeline and Spike2 largely agree on which spikes come from the fiber of interest, but the new method avoids many questionable extra spikes with implausibly high firing rates.
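For readers unfamiliar with the F1-score mentioned above, the following Python sketch shows how it is computed from counts of correct and incorrect detections. The counts are invented for illustration and are not results from the study.

```python
def f1_score(true_pos, false_pos, false_neg):
    """Harmonic mean of precision and recall, computed from raw counts."""
    if true_pos == 0:
        return 0.0
    precision = true_pos / (true_pos + false_pos)  # share of reported spikes that are real
    recall = true_pos / (true_pos + false_neg)     # share of real spikes that were found
    return 2 * precision * recall / (precision + recall)

# Invented example: 80 spikes correctly assigned, 10 false alarms, 20 missed
score = f1_score(true_pos=80, false_pos=10, false_neg=20)
print(round(score, 3))
```

With these made-up counts the score is about 0.84. Because false alarms lower precision, a method that reports fewer spurious spikes can achieve a higher F1-score even if it finds the same number of true spikes.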

What This Means for Understanding Pain and Itch

For non‑specialists, the key message is that the study delivers a more trustworthy way to listen to individual nerve fibers in humans, even when their signals are tiny, noisy, and overlapping. By combining known physiological behavior—how spikes line up in time and how their delays change with recent activity—with modern machine learning, the authors can better decide which spikes truly belong to a given fiber and which do not. This improved sorting is a necessary step before scientists can safely interpret detailed spike patterns as codes for pain, itch, or other sensations. While some recordings remain too messy to analyze, the pipeline offers clear criteria to judge when data are usable and lays groundwork for future studies that aim to decode spontaneous pain signals in nerve disease and to tailor treatments based on how individual human nerve fibers fire.

Citation: Troglio, A., Fiebig, A., Maxion, A. et al. Hybrid knowledge- and data-driven modelling for robust spike detection and sorting in human C-fiber microneurography. Sci Rep 16, 8975 (2026). https://doi.org/10.1038/s41598-026-41561-9

Keywords: microneurography, C-fibers, spike sorting, pain and itch, machine learning