Clear Sky Science

Prediction, syntax and semantic grounding in the brain and large language models

How Your Brain Guesses the Next Word

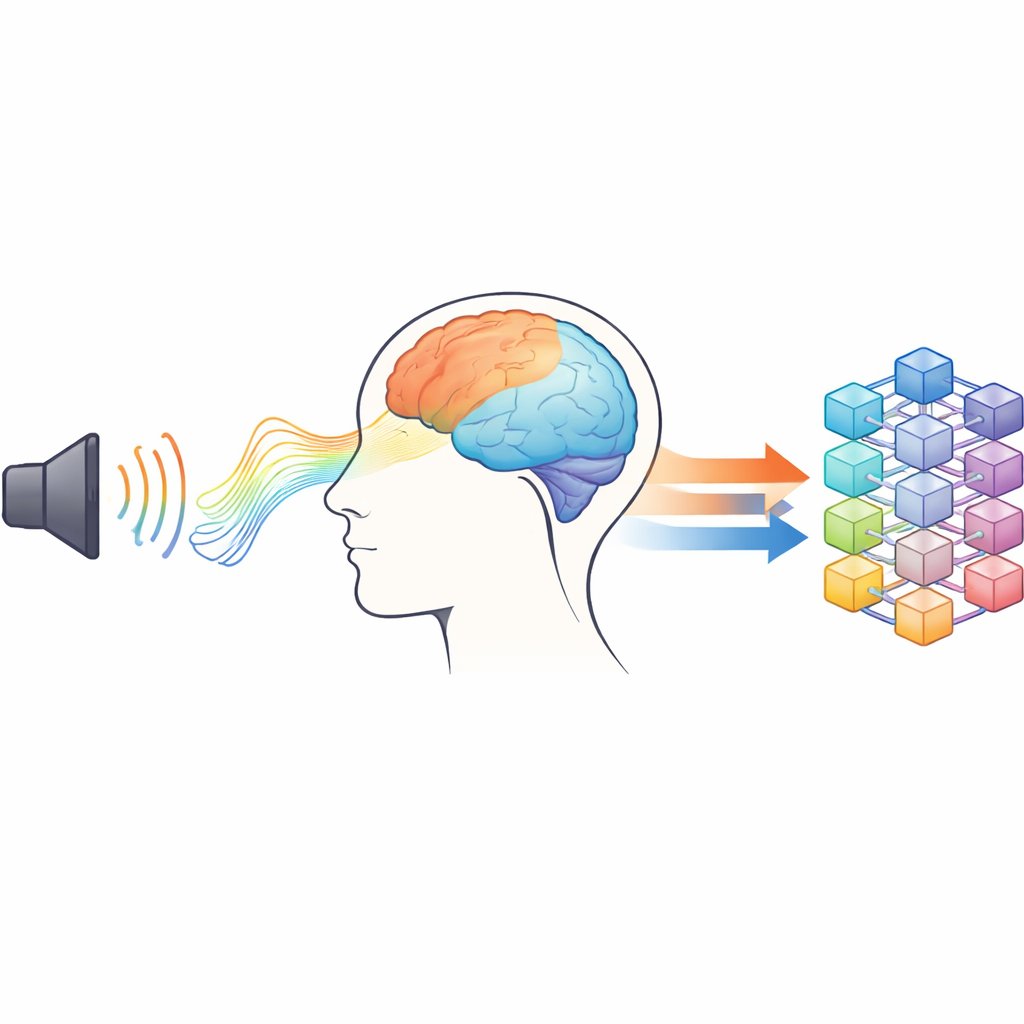

When you listen to a story, it often feels effortless to follow along, but under the surface your brain is constantly guessing what will come next. Modern AI systems such as large language models (LLMs) also predict upcoming words, which is how they generate fluent text. This study brings these two worlds together, asking how the human brain anticipates words in real time and how those processes compare with the way an advanced AI model works.

Listening to a Story in the Lab

To study natural language understanding, the researchers moved beyond artificial lists of words or short, isolated sentences. Instead, 29 young adult volunteers listened to about 50 minutes of a German science-fiction audiobook while their brain activity was recorded. Two complementary techniques were used at the same time: electroencephalography (EEG), which measures tiny voltage changes at the scalp, and magnetoencephalography (MEG), which detects the magnetic fields generated by brain activity. Together, these methods can track the brain’s responses to each word with millisecond precision while people follow a continuous storyline.

Following Different Types of Words

The audiobook was automatically segmented into individual words, and each word was labeled by grammatical class: nouns (such as “planet”), verbs (such as “run”), adjectives (such as “dark”), and proper names. For every word in the story, the scientists extracted a short time window of the EEG and MEG signals spanning the moments just before and after the word's onset, and then averaged these segments within each word class. Averaging over many words emphasizes the activity that is consistently time-locked to word onset, which revealed reliable electrical and magnetic “signatures” for the different word types, including well-known components linked to meaning and sentence structure. Importantly, activity for nouns started to build up even before the word actually began, suggesting that the brain was especially ready for this kind of word in context.
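The cut-and-average step described above can be sketched in a few lines of Python. This is a minimal illustration, not the study's pipeline: it assumes a single continuous recording stored as a NumPy array of shape (channels, samples) and word onsets given in seconds. The function name `average_by_word_class`, the window limits (-0.2 s to 0.8 s) and the toy data are hypothetical choices, and real analyses also apply filtering, artifact rejection and baseline correction.

```python
import numpy as np

def average_by_word_class(signal, onsets, labels, sfreq, tmin=-0.2, tmax=0.8):
    """Cut a fixed window around each word onset and average the windows per word class.

    signal : array (n_channels, n_samples), e.g. EEG or MEG sensor data
    onsets : word onset times in seconds
    labels : word-class label for each onset, e.g. "noun" or "verb"
    sfreq  : sampling rate in Hz
    tmin, tmax : window limits in seconds relative to onset (illustrative defaults)
    """
    start_off = int(round(tmin * sfreq))   # negative offset: samples before onset
    stop_off = int(round(tmax * sfreq))    # positive offset: samples after onset
    n_samples = signal.shape[1]
    epochs_by_class = {}
    for onset, label in zip(onsets, labels):
        center = int(round(onset * sfreq))
        start, stop = center + start_off, center + stop_off
        if start < 0 or stop > n_samples:
            continue  # skip words whose window runs past the recording edges
        epochs_by_class.setdefault(label, []).append(signal[:, start:stop])
    # Averaging keeps activity time-locked to word onset; activity that is not
    # time-locked tends to cancel out across many words.
    return {label: np.stack(eps).mean(axis=0) for label, eps in epochs_by_class.items()}

# Toy demo: one channel at 100 Hz with a short block of activity just before each noun
sfreq = 100
signal = np.zeros((1, 1000))
signal[0, 90:100] = 1.0    # the 0.1 s before the noun at 1.0 s
signal[0, 490:500] = 1.0   # the 0.1 s before the noun at 5.0 s
onsets = [1.0, 3.0, 5.0, 7.0, 9.5]
labels = ["noun", "verb", "noun", "verb", "noun"]  # last window runs off the end and is skipped
erps = average_by_word_class(signal, onsets, labels, sfreq)
print(erps["noun"][0, 8:22])  # pre-onset activity sits at window samples 10-19
```

In this toy case the averaged noun response shows activity before onset while the verb average stays flat, mirroring in miniature the kind of pre-onset build-up the study reports for nouns. Toolboxes such as MNE-Python offer the same operation through `mne.Epochs`, with baseline correction and artifact handling built in.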

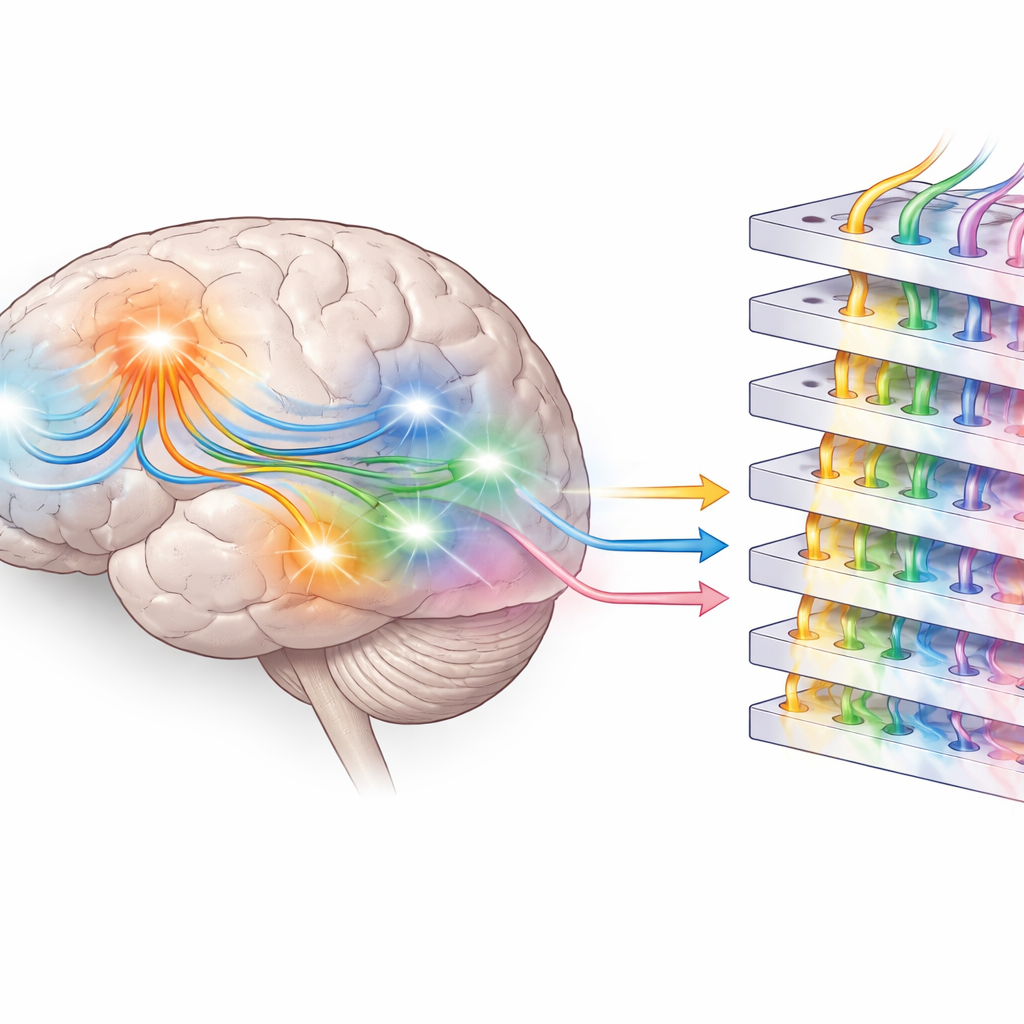

Where Meaning Meets Movement

To see where in the brain these signals arose, the researchers used computational source models to estimate the likely origins of the MEG and EEG patterns inside the head. Nouns did not just activate classic language regions in the temporal lobes; they also engaged areas consistent with parts of the sensorimotor system, near regions involved in movement and bodily sensation. Verbs, in contrast, showed a different and more limited pattern. This supports the idea of “embodied” language, in which understanding a word, especially a concrete noun, partly reactivates networks tied to perception and action, grounding meaning in past sensory experiences rather than in abstract rules alone.

Comparing Brains and Large Language Models

The team then turned to Meta’s Llama 3.2 language model to provide a computational reference point. First, they tested “semantic prediction” by feeding the model the preceding context from the audiobook and asking how likely it judged the actual next word to be. Nouns turned out to be the easiest for the model to predict, consistent with their central role in building up the story. Next, the researchers explored “syntactic prediction” by examining the model's internal activations, or embeddings. Even without additional training, Llama's hidden layers naturally grouped words according to the grammatical type of the word that followed, and a simple probe network could often tell which word class would come next. Across the model's layers, the internal structure for proper names and nouns became more distinct, echoing the growing separation of roles seen in the brain’s activity patterns.
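Both kinds of model-based prediction can be illustrated with a small NumPy sketch. It does not load Llama 3.2; instead it assumes hypothetical next-word logits and hypothetical hidden-layer embeddings. `next_word_probability` converts a logit vector into the probability of the word that actually followed (and `surprisal_bits` into its surprisal), while `train_linear_probe` fits a multinomial logistic-regression probe mapping an embedding to the class of the next word. All names are illustrative, and the logistic-regression probe is a stand-in chosen for simplicity; the study's probe architecture and training details may differ.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - np.max(z, axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(z)
    return e / np.sum(e, axis=axis, keepdims=True)

def next_word_probability(logits, target_index):
    """Probability the model assigns to the word that actually came next."""
    return softmax(np.asarray(logits, dtype=float))[target_index]

def surprisal_bits(p):
    """Lower probability means higher surprisal, measured in bits."""
    return -np.log2(p)

def train_linear_probe(X, y, n_classes, lr=0.5, steps=500):
    """Fit multinomial logistic regression: embeddings X (n, d) -> next-word class y (n,)."""
    n, d = X.shape
    W = np.zeros((d, n_classes))
    b = np.zeros(n_classes)
    Y = np.eye(n_classes)[y]                  # one-hot targets
    for _ in range(steps):
        P = softmax(X @ W + b, axis=1)        # predicted class probabilities
        grad = (P - Y) / n                    # gradient of mean cross-entropy w.r.t. logits
        W -= lr * (X.T @ grad)
        b -= lr * grad.sum(axis=0)
    return W, b

def predict_probe(X, W, b):
    return np.argmax(X @ W + b, axis=1)

# Toy demo 1: hypothetical logits over a 3-word vocabulary; the true next word is index 0
p = next_word_probability([2.0, 1.0, 0.1], target_index=0)
print(f"p = {p:.3f}, surprisal = {surprisal_bits(p):.2f} bits")

# Toy demo 2: synthetic 8-dimensional "embeddings" in which the next-word class (0, 1, 2)
# shifts the vector along one axis; real hidden states are far higher-dimensional
rng = np.random.default_rng(0)
y = rng.integers(0, 3, size=300)
X = 2.0 * np.eye(3, 8)[y] + rng.normal(scale=0.3, size=(300, 8))
W, b = train_linear_probe(X, y, n_classes=3)
accuracy = np.mean(predict_probe(X, W, b) == y)
print(f"probe training accuracy: {accuracy:.2f}")
```

Two caveats apply to this sketch. Language models work on subword tokens, so the probability of a word split into several tokens is the product of its token probabilities given the growing context. And a real probing analysis would report accuracy on held-out words rather than on the training set.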

Two Kinds of Readiness for Words

Taken together, the findings suggest that the brain prepares for upcoming language on at least two levels. In temporal regions, activity before word onset appears to reflect a kind of grammatical or “syntactic” readiness: knowledge about where certain word types tend to appear in a sentence. In more frontal and sensorimotor regions, readiness patterns seem to carry richer “semantic” expectations tied to meaning and experience, especially for nouns and names. Large language models, trained only to predict the next word, develop their own layered internal structures that partially mirror these distinctions, but they lack direct grounding in the physical world. By combining high-speed brain recordings with analyses of state-of-the-art AI, this work helps clarify how humans anticipate words during everyday listening and how far today’s machines have come in approximating that core feature of human language understanding.

Citation: Kölbl, N., Rampp, S., Kaltenhäuser, M. et al. Prediction, syntax and semantic grounding in the brain and large language models. Sci Rep 16, 8728 (2026). https://doi.org/10.1038/s41598-026-41532-0

Keywords: language prediction, brain and AI, large language models, semantic grounding, EEG MEG language